Search - "bad configuration"


No work is going to be tolerable if you don't enjoy it. If you got into programming or IT or any industry simply for the money you can earn doing it, you're in for a BAD TIME.

I love computers, linux, programming, configuration, automation, and problem solving. So I love what I do. I am currently three weeks into 13 weeks of parental leave, and I have been having dreams about work at night.

The best piece of advice I can offer to someone who has trouble getting motivated is: make sure to like it first.

I have this little hobby project going on for a while now, and I thought it's worth sharing. Now at first blush this might seem like just another screenshot with neofetch.. but this thing has quite the story to tell. This laptop is no less than 17 years old.

So, a Compaq nx7010, a business laptop from 2004. It has had plenty of software and hardware mods alike. Let's start with the software.

It's running run-of-the-mill Debian 9, with a custom kernel. It's on that version of Debian because of bugs in the network driver (ipw2200) in Debian 10, which cause it to disconnect after a day or so. That is less of an issue in Debian 9, and seemingly fixed by upgrading to a custom kernel. And the kernel is actually one of the things where you can save heaps of space when you do it yourself. The kernel package itself is 8.4MB for this one. The headers are 7.4MB. The stock kernels on the other hand (4.19 at downstream revisions 9, 10 and 13) took up a whole GB of space combined. That is how much I've been able to remove, even from headless systems. The stock kernels are incredibly bloated for what they are.

Other than that, most of the data storage is done through NFS over WiFi, which is actually faster than what is inside this laptop (a CF card which I will get to later).

Now let's talk hardware. And at age 17, you can imagine that it has seen quite a bit of maintenance there. The easiest mod is probably the flash mod. These old laptops use IDE for storage rather than SATA. Now the nice thing about IDE is that it actually lives on to this very day, in CF cards. The pinout is exactly the same. So you can use passive IDE-CF adapters and plug in a CF card. Easy!

The next thing I want to talk about is the battery. And um.. why that one is a bad idea to mod. Finding replacements for such old hardware.. good luck with that. So your other option is something called recelling, where you disassemble the battery and, well, replace the cells. The problem is that those battery packs are built like tanks and the disassembly will likely result in a broken battery housing (which you'll still need). Also the controllers inside those battery packs are either too smart or too stupid to play nicely with new cells. On that laptop at least, the new cells still had a perceived capacity of the old ones, while obviously the voltage on the cells themselves didn't change at all. The laptop thought the batteries were done for, despite still being chock full of juice. Then I tried to recalibrate them in the BIOS and fried the battery controller. Do not try to recell the battery, unless you have a spare already. The controllers and battery housings are complete and utter dogshit.

Next up is the display backlight. Originally this laptop used to use a CCFL backlight, which is a tiny tube that is driven at around 2000 volts. To its controller go either 7, 6, 4 or 3 wires, which are all related and I will get to. Signs of it dying are redshift, and eventually it going out until you close the lid and open it up again. The reason for it is that the voltage required to keep that CCFL "excited" rises over time, beyond what the controller can do.

So, 7-pin configuration is 2x VCC (12V), 2x enable (on or off), 1x adjust (analog brightness), and 2x ground. 6-pin gets rid of 1 enable line. Those are the configurations you'll find in CCFL. Then came LED lighting which required much less power to run. So the 4-pin configuration gets rid of a VCC and a ground line. And finally you have the 3-pin configuration which gets rid of the adjust line, and you can just short it to the enable line.

There are some other mods but I'm running out of characters. Why am I telling you all this? The reason is that this laptop doesn't feel any different to use than the ThinkPad x220 and IdeaPad Y700 I have on my desk (with 6c12t, 32G of RAM, ~1TB of SSDs and 2TB HDDs). A hefty setup compared to a very dated one, yet they feel the same. It can do web browsing, I can chat on Telegram with it, and I can do programming on it. So, if you're looking for a hobby project, maybe some kind of restrictions on your hardware to spark that creativity that makes code better, I can highly recommend it. I think I'm almost done with this project, and it was heaps of fun :D

This codebase reminds me of a large, rotting, barely-alive dromedary. Parts of it function quite well, but large swaths of it are necrotic, foul-smelling, and even rotted away. Were it healthy, it would still exude a terrible stench, and its temperament would easily match: If you managed to get near enough, it would spit and try to bite you.

Swaths of code are commented out -- entire classes simply don't exist anymore, and the ghosts of several-year-old methods still linger. Despite this, large and deprecated (yet uncommented) sections of the application depend on those undefined classes/methods. Navigating the codebase is akin to walking through a minefield: if you reference the wrong method on the wrong object... fatal exception. And being very new to this project, I have no idea what's live and what isn't.

The naming scheme doesn't help, either: it's impossible to know what's still functional without asking because nothing's marked. Instead, I've been working backwards from multiple points to try to find code paths between objects/events. I'm rarely successful.

Not only can I not tell what's live code and what's interactive death, the code itself is messy and awful. Don't get me wrong: it's solid. There's virtually no way to break it. But trying to understand it ... I feel like I'm looking at a huge, sprawling MC Escher landscape through a microscope. (No exaggeration: a magnifying glass would show a larger view that included paradoxes / dubious structures, and these are not readily apparent to me.)

It's also rife with bad practices. Terrible naming choices consisting of arbitrarily-placed acronyms, bad word choices, and simply inconsistent naming (hash vs hsh vs hs vs h). The indentation is a mix of spaces and tabs. There are magic numbers galore, and variable re-use -- not just in local scope, but with public methods on objects as well. I've also seen countless assignments within conditionals, and these are apparently intentional! The reasoning: to ensure the code only runs with non-falsey values. While that would indeed work, an early return/next is much clearer, and reduces indentation. It's just... reading through this makes me cringe or literally throw my hands up in frustration and exasperation.
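To make the "assignment within a conditional" versus "early return" point concrete, here is a minimal sketch. It is written in Python purely for illustration (the rant never names the codebase's language), and `USERS`, `find_name` and both `greet_*` functions are hypothetical names invented for this example.

```python
from typing import Optional

# Hypothetical lookup table, only here to make the example runnable.
USERS = {1: "alice", 2: ""}


def find_name(user_id: int) -> Optional[str]:
    """Return the stored name, or None if the id is unknown."""
    return USERS.get(user_id)


def greet_nested(user_id: int) -> str:
    # Assignment inside the conditional: the main path sits one level deeper.
    if (name := find_name(user_id)):
        return f"Hello, {name}"
    return "Hello, stranger"


def greet_early_return(user_id: int) -> str:
    # Guard clause: handle the falsey case first, keep the main path flat.
    name = find_name(user_id)
    if not name:
        return "Hello, stranger"
    return f"Hello, {name}"
```

Both versions behave the same for every input; the difference is only in how quickly a reader can see which values are being filtered out.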

Honestly though, I know why the code is so terrible, and I understand:

The architect/sole dev was new to coding -- I have 5-7 times his current experience -- and the project scope expanded significantly and extremely quickly, and also broke all of its foundation rules. Non-developers also dictated architecture, creating further mess. It's the stuff of nightmares. Looking at what he was able to accomplish, though, I'm impressed. Horrified at the details, but impressed with the whole.

This project is the epitome of "I wrote it quickly and just made it work."

Fortunately, he and I both agree that a rewrite is in order, but at 76k lines (without styling or configuration), it's quite the undertaking.

------

Amusing: after running the codebase through `wc`, it apparently sums to half the word count of "War and Peace".

I think I want to quit my first application developer job 6 months in because of just how bad the code and deployment and... just everything, is.

I'm a C#/.net developer. Currently I'm working on some asp.net and sql stuff for this company.

We have no code standards. Our project manager is somewhere between useless and detrimental. Our clients are unreasonable (it's the government, so I'm a bit stifled on what I can say) and expect absurd things from us. We have 0 automated tests, and before I arrived our infrastructure didn't match our documentation... and we barely had any documentation to begin with.

The code is another horror story. It's outsourced C# asp.net, JS and SQL code... and outsourced to very bad programmers in India, no offense to the good ones, I know you exist. It's all spaghetti. And half of it isn't spelled correctly.

We have a single, massive constant class that probably has over 2000 constants, I don't care to count. Our SQL projects are a mess with tons of quick fix scripts to run pre and post publishing. Our folder structure makes no sense (we have root/js and root/js1 to make you cringe). Our JavaScript is mostly inline on the asp.net pages themselves, so we don't even have minification most of the time.

It's... God awful. The result of a billion and one quick fixes that nobody documented. The configuration alone has to have the same value put in multiple times. And now our senior developer is getting the outsourced department to work on moving every SINGLE NORMAL STRING INTO THE DATABASE. That's right. Rather than putting them into some local resource file or anything sane, our website will now be drawing every single standard string from the database. Our SENIOR DEVELOPER thinks this is a good idea. I don't need to go into detail about how slow this is. Want to do it on boot? Fine. But they do it every time the page loads. It's absurd.
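The "on boot" alternative can be sketched in a few lines. This is a rough Python illustration, not the project's actual C#/asp.net code: the `ui_strings` table, the `StringCache` class and the in-memory SQLite database are all assumptions made up for the example.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    # In-memory stand-in for the real database (hypothetical schema).
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE ui_strings (key TEXT PRIMARY KEY, value TEXT)")
    conn.executemany(
        "INSERT INTO ui_strings VALUES (?, ?)",
        [("title", "Welcome"), ("footer", "Contact us")],
    )
    return conn


class StringCache:
    """Loads every UI string once, then serves lookups from memory."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self.queries = 0  # counts round trips to the database
        self._strings = self._load(conn)

    def _load(self, conn: sqlite3.Connection) -> dict:
        self.queries += 1
        return dict(conn.execute("SELECT key, value FROM ui_strings"))

    def get(self, key: str) -> str:
        return self._strings[key]


conn = make_db()
cache = StringCache(conn)      # the "on boot" step: one query
for _ in range(1000):          # simulated page loads: no further queries
    cache.get("title")
print(cache.queries)           # prints 1
```

A thousand simulated page loads cost one query instead of a thousand; a real application would also need some way to refresh the cache when the strings change.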

Our SQL database design is an absolute atrocity. You have to join several tables together just to get anything done. Half of our SPs are failing all the time because nobody really understands the design. It's gloriously awful. It's like... the epitome of failed database designs.

But rather than taking a step back and dealing with all the issues, we keep adding new features and other ones get left in the dust. Hell, we don't even have complete browser support yet. There were things on the website that were still running SILVERLIGHT. In 2019. I don't even know how to feel about it.

I brought up our insane technical debt to our PM, who told me that we don't have time to worry about things like technical debt. They also wouldn't spend the time to teach me anything, saying they would rather outsource everything than take the time to teach me. So I did. I learned a huge chunk of it myself.

But calling this a developer job was a sick, twisted joke. All our lives revolve around bugnet. Our work is our BNs. So every issue the client emails about becomes a BN. I haven't developed anything. All I've done is clean up others' mess.

Except for the one time they did have me develop something. And I did it right and took my time. And then they told me it took too long, forced me to release before it was ready, even though I had never worked on what I was doing before. And it worked. I did it.

They then told me it likely wouldn't even be used anyway. I wasn't very happy at all.

I then discovered quickly the horrors of wanting to make changes on production. In order to make changes to it, we have to... Get this

Write a huge document explaining why. Not to our management. To the customer. The customer wants us to 'request' to fix our application.

I feel like I am literally against a wall. A huge massive wall. I can't get consent from my PM to fix the shitty code they have as a result of outsourcing. I can't make changes without the customer asking why I would work on something that doesn't add something new for them. And I can't ask for any sort of help, and half of the people I have to ask for help don't even speak English very well, so it makes it doubly hard to understand anything.

But what can I do? If I leave my job it leaves a lasting stain on my record that I am unsure if I can shake off.

... Well, that's my tl;dr rant. I'm a junior, so maybe idk what the hell I'm talking about.

I'm having an existential crisis with this client.

We are spending millions of $s every year to make sure the product's performance is perfect. We are testing various scenarios, fine-tuning PLABs: the environment, application, middleware, infra,... And then we provide our recommendations to the client: "To handle load of XX parallel users focusing on YY, yy and Zy APIs, use <THIS> configuration".

And what does the client do?

- take our recommendations and measure the wind speed outside

- if speed is <20m/s and milk hasn't gone bad yet, add 2x more instances of API X

- otherwise add 3xX, 1xY and give more CPUs to Z

- split the setup in half and deploy in 2 completely separate load-balanced prod environments.

- <do other "tweaking">

- bomb our team with questions "why do we have slow RTs?", "why did the env crash?", "why do we have all those errors?", "why has this been overlooked in PLABs?!?"

If you're improvising despite our recommendations, wtf are we doing here???

One day I will crack. Hopefully, not sometime soon.

If you can be locked out of it remotely, you don't own it.

On May 3rd, 2019, the Microsoft-resembling extension signature system of Mozilla malfunctioned, which locked out all Firefox users out of their browsing extensions for that day, without an override option. Obviously, it is claimed to be "for our own protection". Pretext-o-meter over 9000!

BMW has locked heated seats, a physical interior feature of their vehicles, behind a subscription wall. This means both that one has to routinely spend time and effort renewing it, and that it can be terminated remotely. Even if BMW promises never to do it, it is a technical possibility. You are in effect a tenant in a car you paid for. Now imagine your BMW refused to drive unless you install a software update. You are one rage-quitting employee at BMW headquarters away from getting stuck on the side of the road. Then you're stuck in an expensive BMW while watching others in their decade-old VW Golfs driving past you. Or perhaps not, since other stuck BMWs would cause traffic jams.

Perhaps this horror scenario needs to happen once so people finally realize what it means if they can be locked out of their product whenever the vendor feels like it.

Some software becomes inaccessible and forces the user to update, even though they could work perfectly well. An example is the pre-installed Samsung QuickConnect app. It's a system app like the Wi-Fi (WLAN) and Bluetooth settings. There is a pop-up that reads "Update Quick connect", "A new version is available. Update now?"; when declining, the app closes. Updating requires having a Samsung account to access the Galaxy app store, and creating such requires providing personally identifiable details.

Imagine the Bluetooth and WiFi configuration locking out the user because an update is available, then asking for personal details. Ugh.

The WhatsApp messenger also routinely locks out users until they update. Perhaps messaging would cease to work due to API changes made by the service provider (Meta, inc.), however, that still does not excuse locking users out of their existing offline messages. Telegram does it the right way: it still lets the user access the messages.

"A retailer cannot decide that you were licensing your clothes and come knocking at your door to collect them. So, why is it that when a product is digital there is such a double standard? The money you spend on these products is no less real than the money you spend on clothes." – Android Authority ( https://androidauthority.com/digita... ).

A really bad scenario would be if your "smart" home refused to heat up in winter due to "a firmware update is available!" or "unable to verify your subscription". Then all you can do is hope that any "dumb" device like an oven heats up without asking itself whether it should or not. And if that is not available, one might have to fall back on a portable space heater, a hair dryer or a toaster. Sounds fun, huh? Not.

Cloud services (Google, Adobe Creative Cloud, etc.) can, by design, lock out the user, since they run on the computers of the service provider. However, remotely taking away things one paid for or has installed on one's own computer/smartphone violates a sacred consumer right.

This is yet another benefit of open-source software: someone with programming and compiling experience can free the code from locks.

I don't care for which "good purpose" these kill switches exist. The fact that something you paid for or installed locally on your device can be remotely disabled is dystopian and inexcusable.

Buffer usage for simple file operation in python.

What the code "should" have done was, I think, open or write a stream with a specific buffer size.

The buffer size had to be specific, as it was a stream of a multi-gigabyte file over a direct interlink network connection.

That should have sped things up tremendously, due to fewer syscalls and the machine having beefy resources for a large buffer.

So much for the theory.

In practice, the devs made one very very very very very very very very stupid error.

They used dicts for configurations... With extremely bad naming.

configuration = {}

buffer_size = configuration.get("buffering", int(DEFAULT_BUFFERING))

You might immediately guess what has happened here.

DEFAULT_BUFFERING was set to True, and int(True) evaluates to 1.

Yeah. Writing in 1-byte chunks results in an enormous slowdown, as the system is basically bombing itself with syscalls.
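The trap is easy to reproduce. The first three lines below mirror the snippet from the rant; everything after that (`DEFAULT_BUFFER_SIZE_BYTES`, `resolve_buffer_size`, the `buffer_size_bytes` key and the 4096-byte floor) is a hypothetical safer variant, not what the team actually shipped.

```python
DEFAULT_BUFFERING = True          # as in the rant: a flag, not a size
configuration = {}                # the key is missing, so the default wins

buffer_size = configuration.get("buffering", int(DEFAULT_BUFFERING))
print(buffer_size)                # prints 1, because int(True) == 1

# Hypothetical safer variant: a clearly named size constant plus a sanity check.
DEFAULT_BUFFER_SIZE_BYTES = 1024 * 1024   # 1 MiB, an arbitrary illustrative value


def resolve_buffer_size(config: dict) -> int:
    size = config.get("buffer_size_bytes", DEFAULT_BUFFER_SIZE_BYTES)
    # bool is a subclass of int in Python, so it has to be rejected explicitly.
    if isinstance(size, bool) or not isinstance(size, int) or size < 4096:
        raise ValueError(f"implausible buffer size: {size!r}")
    return size
```

Naming the key after what it holds (a size in bytes) and failing loudly on implausible values would have turned weeks of blame game into one stack trace at startup.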

Kinda obvious when you look at it in the raw pure form.

But I guess you can imagine how configuration actually looked....

Wild. Pretty wild. It was the main dict, hard coded, I think 200 entries plus, and of course it looked like my toilet after a spicy food evening and eating too much....

What's even worse is that no one made the connection to the buffer size.

This simple and trivial thing entertained us for 2-3 weeks because *drumrolls please* none of the devs tested with large files.

So as usual there was the deployment and then "the sudden miraculous it works totally slow, must be admin / it fault" game.

At some point it landed on my desk, as pretty much everyone who had to deal with it was confused and angry, for understandable reasons (blame game).

It took me and the admin / devs then a few days to track it down, as we really started at the entirely wrong end of the problem, the network...

So much joy for such a stupid thing.

So ok here it is, as asked in the comments.

Setting: customer (huge electronics chain) wants a huge migration from custom software to SAP ERP, hybris commerce for B2B and ... Azure cloud

Timeframe: ~10 months….

My colleague and I had the glorious task of making the evaluation result of the B2B approval process (like you can only buy up to € 1000, then someone has to approve) available in the cart view, not just at the end of the checkout. Well, I thought, easy, we have the results, just put them in the cart … hmm :-\

The whole thing is that the storefront - called accelerator (although it should rather be called decelerator) - is a 10-year old (looking) buggy interface that promises customers it solves all their problems and just needs some minor customization. Fact is, it’s an abomination, which makes us spend 2 months in every project to „rip it apart“ and fix/repair/rebuild major functionality (which changes every 6 months because of „updates“).

After a week of reading the scarce (aka non-existing) docs and decompiling and debugging hybris code, we found out (besides dozens of bugs) that this was not going to be easy. The domain model is fucked up - both CartModel and OrderModel extend AbstractOrderModel. Though we only need functionality that is in the AbstractOrderModel, the hybris guys decided (for an unknown reason) to use OrderModel in every single fucking method (about 30 nested calls ….). So what shall we do, we don’t have an order yet, only a cart. Fuck, let's fake an order, push it through, use the results and dismiss the order … good idea!? BAD IDEA (don’t ask …). So after a week or two we changed our strategy: create duplicate interfaces for nearly all (spring) services, with changed method signatures that override the hybris beans and allow the use of CartModels (which is possible, because within the super methods, they actually „cast" it to AbstractOrderModel *facepalm*).
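The typing problem described above can be shown in miniature. This is a Python sketch of the idea only, not hybris code (hybris is Java): the three class names come from the text, while `APPROVAL_LIMIT`, the `total` field and both functions are hypothetical, and the `isinstance` check merely imitates what a Java method signature would enforce at compile time.

```python
from dataclasses import dataclass


@dataclass
class AbstractOrderModel:
    total: float


class CartModel(AbstractOrderModel):
    pass


class OrderModel(AbstractOrderModel):
    pass


APPROVAL_LIMIT = 1000.0   # the "up to € 1000" example from the text


def needs_approval_order_only(order: OrderModel) -> bool:
    # Demands an OrderModel although only base-class fields are used.
    if not isinstance(order, OrderModel):
        raise TypeError("expected OrderModel")
    return order.total > APPROVAL_LIMIT


def needs_approval(entry: AbstractOrderModel) -> bool:
    # Typed against the shared base class, so carts and orders both work.
    return entry.total > APPROVAL_LIMIT
```

With the second signature, `needs_approval(CartModel(total=1500.0))` works without faking an order, which is roughly what the duplicated service interfaces were built to achieve.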

After about 2 months (2 people full time) we had a working „prototype“. It worked with the default-sample-accelerator data. Unfortunately the customer wanted to have its own dataset in the system (what a shock). Well, you guessed it … everything collapsed. The way the customer wanted to "have it working“ was just incompatible with the way hybris wants it (yeah yeah SAP, hybris is sooo customizable …). Well, we basically had to rewrite everything again.

Just in case you're wondering … the requirements were clear in the beginning (stick to the standard! [configuration/functionality]). Well, then the customer found out that this is shit … and well …

So some months later, next big thing. I was appointed technical sublead (is that a word)/sub pm for the topics‚delivery service‘ (cart, delivery time calculation, u name it) and customerregistration - a reward for my great work with the b2b approval process???

Customer's office: 20+ people, mostly SAP related, a few c# guys, and drumroll .... the main (external) overall superhero ‚im the greatest and ur shit‘ architect.

Average age 45+, me - the ‚hybris guy’ (he really just called me that all the time), age 32.

He powerpoints his „ tables" and other weird out of this world stuff on the wall, talks and talks. Everyone is in awe (or fear?). Everything he says is just bullshit and I see it in the eyes of the others. Finally the hybris guy interrupts him as he explains the overall architecture (which is just wrong) and points out how it should be (according to my docs, which were more up to date). From now on he didn't just "not like" me anymore. (good first day)

I remember the looks of the other guys - they were relieved that someone pointed that out - it saved them weeks of useless work ...

Instead of talking the customer's tongue he just spoke gibberish SAP … arg (common in SAP land as I had to learn the hard way).

Outcome of about (useless) 5 meetings later: we are going to blow out data from informatica to sap to azure to datahub to hybris ... hmpf needless to say its fucking super slow.

But who cares, I‘ll get my own rest endpoint that‘ll do all I need.

First try: error 500, 2. try: 20 seconds later, error message in html, content type json, a few days later the c# guy manages to deliver a kinda working still slow service, only the results are wrong, customer blames the hybris team, hmm we r just using their fucking results ...

(The SAP guys (customer service) just don't seem to be able to activate/configure the OOTB odata service, so I was told.)

Several email rounds and meetings later, about 2 months, still no working hybris integration (all my emails with detailed checklists for every participant and deadlines were unanswered/ignored or answered with unrelated stuff). Customer pissed at us (god knows why, I tried, I really did!). So I decide to fly up there to handle it all by myself

So I ended up installing Arch Linux as the primary OS in my laptop, and to be honest, I'm not very crazy about it. Because I'm someone who likes an elegant UX, I spent three days and over 50 reboots and 5 reinstalls just trying to get Plymouth to work correctly (in the end, I just said screw it and gave up.) I know, I probably messed something up in the installation or configuration, but I didn't really want to deal with it anymore.

I'm not a big fan of the pacman package manager; I prefer apt. There were several applications I couldn't get to work properly, such as Steam, the Tor Browser, and Wine. All in all, I've basically wasted a week trying to get Arch Linux to work as the daily driver on my laptop, but I guess it's just not the distro for me.

I'm going to give Arch one last shot with the Manjaro distro. I'm hoping that Manjaro's simplified installation and configuration will produce a more usable (in my case) OS, and if not, I'll probably be going back to something Debian-based.

I'm not at all saying Arch is a bad distro. I know many people use it as their daily driver, and I have absolutely no problem with that. I'm not writing this to debate which distro is better, I'm just writing about my experience with it. Arch just may not be the distro for someone like me. At least I gave it a shot, right?

After 9 weeks of waiting for my much delayed Dell XPS 15 I opened the box and it's fucking azerty ... While I clearly, CLEARLY requested qwerty. I mentioned it 4 fucking times, for the love of god make sure the keyboard is fucking QWERTY .. THEY GAVE ME AZERTY .. on top of that shittrain they also forgot to include my integrated fingerprint reader which I paid extra for.. man what a fucking shit company .. they might make half decent hardware but they piss all customers off with their insanely bad braindead customer service and sales team.

9 weeks of waiting, receiving the wrong configuration, probably again 5 more weeks of waiting.. man I'm starting to hate this shit company.

I've been thinking about how to answer this for a while, but I'll approach it from a different angle. The time I (nearly) lost faith in my dev future wasn't because of a technology, bad programming language or an external influence. It was *me*.

The first job I had after the PhD, I was (in the first couple of weeks) tasked with updating various packages on a live Redhat server. "No problem", I thought, "I've done this before many a time on Debian, easy as pie!"

Long story short, I ended up practically bricking the server because I mistyped and uninstalled something I shouldn't have, didn't understand a piece of configuration, then tried to bodge it back and cocked things up further. Couldn't even log in via SSH, the hosting company had to be called, a serial connection set up, etc.

To say I was mortified, embarrassed and had my pride dented would be a massive understatement. I seriously thought I'd get fired on the spot, and that I should perhaps change careers to something where I couldn't cock things up as much.

...but you can't think like that, otherwise the world leaves you behind. So I picked myself up, apologised profusely, took some relevant training, double checked everything I was doing on that server in future and got back to work. After a few months of "proving myself", it was then seen as nothing more than a rather amusing story, and I became a senior dev there a couple of years later.

When I started my current job I fucked up my first deploy ever so slightly, but in the end managed to get it going with a quick hack (unpacking our war file, modifying some properties and a jsp page before restarting the tomcat server).

One of the senior developers was having a really bad day and decided I was who he was going to take it out on, calling me a cowboy (in the UK that’s a bad thing) blah blah, being really nasty and condescending to me.

I was about ready to shout at him as it was his dodgy configuration that caused the issue in the first place, but instead I took a deep breath and firmly (but calmly) told him never to speak to me like that again or we would have a problem.

5 years later he’s never spoken to me like that again and is actually a pretty decent guy.

Moral of the story: people have bad days, but also don’t let people take the piss.

The argument of "vim/zsh/whatever is not good because it requires configuration, and you don't usually have that on a new server" is a weak argument and it can suck my fucking nuts.

If some people are weak and lazy and forget how to use plain bash because they added a single alias, that's their problem.

That's like saying that getting used to a car is a bad thing because you can forget how to ride a bike.

Even if I did have the brain of a fish and forgot to use a bike because of using a car, I'll still be using a car 95% of the fucking time, so I'll take it.

If you do customize your setup, you can write an install script, dockerize, or just fucking something, it's 2019, you can do whatever the fuck you want.

Get a fucking couple of neurons.

Late night ramble warning.

I like to fix issues. I like to roll up my sleeves and fetch my keyboard or soldering iron on a mission to build a custom solution for whatever real world annoyance that has just triggered my problem solving caveman brain.

I have prided myself in that. I am the kind of guy who doesn't shy away from getting my hands dirty, I tell myself, and it's good because it makes my life easier, I tell myself. But increasingly, I've been wondering if this is really so. Am I really making my life easier? Am I fixing the world or just scratching an itch?

Example 1:

Instead of using conventional backup methods for my personal files like a commercial cloud based service or buying a Synology NAS or something similar, I decided it would be better to build my own linux server and set up a rather obscure configuration in order to address things like parity, ECC, bit-rot and the likes while staying cheap.

Learning a lot? Sure. Fun? Sure. Never have to worry about backups again? The opposite, of course.

While I set out to build the perfect bespoke solution to all my personal backup needs - it's as if I, by putting my time and effort into the nitty gritty of technical implementation, placed a vote for my future to contain more of that stuff. In reality this project has burdened my little brain with many new things to consider in regards to storing my files.

Example 2:

Qwerty and the conventional staggered keyboard layout are relics of past technical limitations and both of them inefficient and bad from an ergonomic perspective.

Possible solution: ignore and carry on or possibly transition to Colemak on a somewhat more ergonomic full size keyboard.

My solution: well, let's also hand build a tiny-ass super obscure ergo keyboard and spend two days to come up with my own layout for all special characters, numbers and function keys.

Fun? Somewhat. Learning a lot? I guess. Never have to think about keyboard layouts again? Lol.

I'm living in a world of pain with various key commands in various apps and edge cases. Could I fix it? Probably make it better but not without quite a bit of effort.

Anyways, it'd be interesting to hear if anyone can relate to this feeling of wanting to fix something once and for all only to find yourself deeper in it than ever before. Idk, might be a just me thing. Anyways, goodnight lovely people.

I love it when asshats, that wear testicles for sunglasses, like to ask me a question about my past experience with a given technology. Let's call it "X". After I've said my piece about the desired effect "X" was supposed to achieve, and describe the environment/scope where "X" was used, and describe the pain points I've encountered with it or the headaches "X" has caused in those environments, these camel spunk garglers then try to immediately rebut me by saying that every one of the times they've set "X" technology up it's worked just fine.

So, I kindly remind them that my past experience was in large enterprises where "X" technology just doesn't scale well so I've seen some issues with it.

Spunk Gargler: "Hmmm, must've just not been setup correctly."

I lose my shit (internally of course because I can't afford to be without a job right now.) and say, "I'm not so sure that it wasn't setup correctly, I just don't think that 'X' works properly at the scale of 500+ employee environments well. You've only ever set it up in small offices of like - what, 20 users?"

Shitlord McHerp-a-Derp who's Drunk on Spunk: "Maybe, but it just sounds like a bad configuration was causing those issues to me."

He shuffled back into his office shortly after I basically told him he's a fucking chump playing small team tactics and I've seen shit at scale so I've seen first hand what does and does not work well.

I'm writing this because this is the same fucking imbecile that has only ever encountered a /23 network once before from a client they inherited from a previous MSP team and they didn't know how to "safely change it" to a /24 so they just left it in place.

(BTW, just for the non-networking guys/gals out there, I'm sure you've already guessed it, but a /23 network is NOT a fucking problem!)
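For anyone who wants to check the numbers, here is a minimal sketch using Python's standard `ipaddress` module. The `192.168.0.0` range is only an assumed example, not the client's actual network; the point is that a /23 is simply two adjacent /24s with one shared broadcast domain.

```python
import ipaddress

def usable_hosts(cidr: str) -> int:
    """Number of assignable host addresses in an IPv4 network."""
    net = ipaddress.ip_network(cidr)
    # Subtract the network address and the broadcast address.
    return net.num_addresses - 2

# Example range is an assumption for illustration only.
slash24 = usable_hosts("192.168.0.0/24")
slash23 = usable_hosts("192.168.0.0/23")

# A /23 splits cleanly into two /24 halves.
halves = [str(n) for n in ipaddress.ip_network("192.168.0.0/23").subnets(new_prefix=24)]

print(slash24, slash23, halves)
```

So a /23 roughly doubles the host count of a /24, which is nowhere near the size where a flat network becomes a problem by itself.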

These puffy cancerous taint boils that call themselves IT engineers are the fucking problem!

I'm not a dev by trade or training, but trying to learn DevOps, and I can totally see why Dev teams can/sometimes get pissed with infrastructure teams... infrastructure/helpdesk side of IT is full of these fucking meat heads.

-

Hi, my name is bohr and I'm a recovering distro hopper.

It all started with Ubuntu. Out of my frustrations with the unintuitive nature of DOS I gravitated to a Unix environment, which Ubuntu naturally solved. But I quickly became annoyed with the laggy nature of its daily usage. So I switched to Linux Mint. Loving the HTML/CSS/JS configuration aspect of Cinnamon, I thought it was the answer to all my problems. But I became annoyed with apt and its lack of a few programs I wanted. This got me to look into an Arch-based distro, because pacman seemed like the answer to my problems. Unfortunately there are way too many Arch distros to use. I experimented with Antergos' many DE options: GNOME, KDE, i3, Deepin, Openbox... Always finding something wrong. I tried Manjaro and its many flavors, still being annoyed with minute aspects of the OS. Out of frustration with the deep configuration settings I was getting into, and the need to actually focus on the work being done on the computer, I crawled back to Linux Mint. But now my friends, I have decided that maybe it's time to just use a more established distro? Maybe GNOME isn't actually that bad? Maybe I need to give it another try? And that is why, I promise, this is the last hop for me. Arch Linux, GNOME here I come and I'm ready to commit this time!...

But have you guys seen POP!_OS? Woah, I bet it would solve all of my problems....

-

I often read articles describing developer epiphanies, where they realized, that it was not Eclipse at fault for a bad coding experience, but rather their lack of knowledge and lack of IDE optimization.

No. Just NO.

Eclipse is just horrendous garbage, nothing else. Here are some examples, where you can optimize Eclipse and your workflow all you like and still Eclipse demonstrates how bad of an IDE it is:

- There is a compilation error in the codebase. Eclipse knows this, as it marks the error. Yet in the Problems tab there is absolutely nothing. Not even after clean. Sometimes it logs errors in the problems tab, sometimes it doesn't. Why? Only the lord knows.

- Apart from the fact that navigating multiple Eclipse windows is plain laughable - why is it that to this day Eclipse cannot properly manage windows on multi-desktop setups, e.g. via workspace settings? Example: Use 3 monitors, maximize Eclipse windows of one Eclipse instance on all three. Minimize. Then maximize. The windows are no longer maximized, but spread somehow over the monitors. After reboot it is even more laughable. Windows will be just randomly scrambled and stacked on top of each other. But the fact alone that you cannot navigate individual windows of one instance.. is this 2003?

- When you use a window with e.g. class code on a second monitor and your primary Eclipse window is on the first monitor, then some shortcuts won't trigger. E.g. attempting to select, then run a specific configuration via ALT+R, N, select via arrows, ALT+R won't work. Eclipse cannot deal with ALT+R, as it won't be able to focus the window where the context menus are. One may think this has to do with Eclipse requiring specific perspectives for specific shortcuts, as shortcuts are associated with perspectives - but no. Because the perspective for both windows is the same, namely Java. It is just that even though shortcuts in Eclipse are perspective-bound, they are also context-sensitive, meaning they require specific IDE inputs to work, regardless of their perspective settings. If that is not provided, then the shortcut will do absolutely nothing and Eclipse won't tell you why.

- The fact alone that shortcut-workarounds are required to terminate launches, even though there is a button mapping this very functionality. Yes, this is the only aspect in this list where optimizing and adjusting the IDE solves the problem, because I can bind a shortcut for launch selection and then can reliably select and trigger CTRL+F2. Despite that, how I need to first customize shortcuts and bind one that was not specified prior, just to achieve this most basic functionality - terminating a launch - is beyond me.

Eclipse is just overengineered and horrendous garbage. One could think it is being developed by people using Windows XP and a single 1024x768 desktop, as there is NO WAY these issues don't become apparent when regularly working with the IDE.

-

Just needing somewhere to let some steam off

Tl;dr: perfectly fine commandline system is replaced by bad ui system because it has a ui.

For a while now we have had a development k8s cluster for the dev team. Using helm as composing framework, everything worked perfectly via the console. Being able to quickly test new code on existing apps, and even deploy new (and even third party) apps on a similar-to-production system was a breeze.

Introducing Rancher

We are now required to commit every helm configuration change to a git repository and merge to master (master is used on dev and prod) before even being able to test the configuration change, as the package is not created until after the merge is completed.

Rolling out new tags now also requires a VCS change as you have to point to the docker image version within a file.

As we now have this awesome new system, the ops didn't see a reason to give us access to kubectl. So the dev team is stuck with a ui, but this should give the dev team more flexibility and independence, and more people from the team can roll releases.

Back to reality: since the new system we have hogged more time from ops than we have done in a while, everyone needs to learn a new unintuitive tool, and the funny thing is that only a few people can actually accept VCS changes, as it impacts dev and prod. So the entire reason this was done, making releases reachable to more people, is out the window.

-

Got a strange thing today in class, as a teacher in programming. We have a lab where the computers don't have their final configuration yet, so the user the students log in with is the administrator of the computer. And today, a student calls me and tells me "sir, the password isn't the one you gave to us" (temporarily the same for each machine until we fix the configuration).

Go to student's place, password incorrect with a hint "you know the code : up, up, down, down... oh, you don't know, huh? Too old! Too bad!"

Password was - of course - "konami".

But... how can a student born in 1997 think he can troll me with the konami code?!

He wasn't even born when I played on the NES as kid!

Sometimes I'd like to teach my students how to fly by tossing them out the window...

-

Avoid ACPICA if at all possible. It's one garbage tier cluster fuck of bad design, horrible documentation and downright misleading and wrong code

It's meant to consist of an ASL compiler, disassembler, debugger, dumper, various user space utilities and a kernel resident OSPM implementation *if* you can figure out what belongs to what. Even just compiling this pile of trash is a mystery in itself. Think you need the source files in source/common? EEEEH, wrong. Well, at least partially since most of them seem to be for the user space stuff..? Other ones *are* needed on the other hand. At least the disassembler and/or debugger and/or dumper components seem to reference them. Not that I could figure out how to compile those anyways. The real path to your goal seems to be to ignore a seemingly arbitrary subset of source and header files until your linker stops complaining

There's also a bunch of configuration defines, some of which *you* define, some defined *for* you, based on again others. Of course most of them do stupid shit. Enabling the debugger automatically enables debug logging. Enabling the disassembler force enables debug allocation tracking... What?

The code itself isn't of much help either. Looking in "os_specific/service_layers" you find what looks to be reference implementations of acpica functions in certain os' like windows and unix. Of course I had a look because AcpiOsReadMemory is supposed to read physical memory and I don't know how I would even implement that. But hey, osunixxf.c (xf for interface... of course) should tell me. I'll let you see for yourself in the attached image. Apparently it does fuck all and just returns AE_OK. No error, no logging, no nothing. Just ok. As you can imagine, AcpiOsWriteMemory doesn't do much more either.

...okay so maybe physical memory accesses aren't actually used and these functions are some sort of relic from past times? Nope! They are absolutely necessary for doing low level device interaction. WTF. So finally I went to the linux source and checked how *they* implemented them, and just as I thought, these functions are anything but no-ops...

...So for what fucking reason do these stupid interface implementations even exist but to purposefully mislead you?? They aren't used for fucking anything! As far as I know Windows doesn't even *use* ACPICA and Linux have their own fork with working implementations... They just sit there, just to tell you how to NOT do it

So that's some of my thoughts about ACPICA. Note that I haven't even used it as a library yet, I just got it to compile and link and it already fucked with me this much.

There's also so much more I didn't mention, like that you *have* to modify the acpica source in order to get your own platform header working (else #error) even though the docs explicitly instruct you not to, but you get the point

Don't use ACPICA if you don't have to. Save your sanity for something that's worth it

-

I used ShareX on Windows, but have had bad luck finding something with as many configuration options on Linux (Ubuntu). Any suggestions?

Edit: I'm currently using Flameshot, but feel like it's quite limited. I can't log in to Imgur for example.

-

A friend of mine asked me yesterday for help for his bachelor thesis.

He wants to write about MySQL internals in regards to BLOB storage / usage.

We had a veeeerrrry long discussion....

And found a loooot of scary internet pages.

It's so .... Insane....

What some people with doctor titles or higher education generate...

Isn't content. More poo...

Most "blogs" / "articles" or whatever the author named it were missing all kinds of relevant data (version, configuration, anything relevant) but full of opinionated / biased bullshit.

Highlights were:

- we store lot of BLOB data, Backups take long and require more space

(you store additional data in a database, whaddya expect???!!!!)

- interesting guesswork about locking without any reference (interesting since it was sometimes so far away from reality that it looked more like quantum physics)

- storing blobs means that _each_ blob entry will be stored in a separate file (without any reference, but if an RDBMs did that... It would end in an amazing fireball I guess)

- BLOBs are bad since they can represent only the file content, the database cannot distinguish whether it's an MP3 / MPG or anything like that...

(Ehm. Yeah. And a database cannot distinguish if you store under "Name" a name or gibberish?!)

I somehow think that some people got a doctorate and post this gibberish nonsense so people stay dumb and give them a job...

Like the TV repair man who steals the batteries from the remote.

Even conspiracy theories were more convincing

-

Just mirrored sudo to my own Gitea instance yesterday (https://git.ghnou.su/mir/sudo). Turns out that this chonkster is 200MB compressed (LZ4 on ZFS). I am baffled by it... All it needs to do is read a configuration file describing what users can be elevated, to which user and which commands they can run. Perhaps doas wasn't a bad idea after all?

Oh and it got a privilege escalation vulnerability just yesterday (https://security-tracker.debian.org/...), which is why I got interested in it. Update your sudo packages if you haven't already.

-

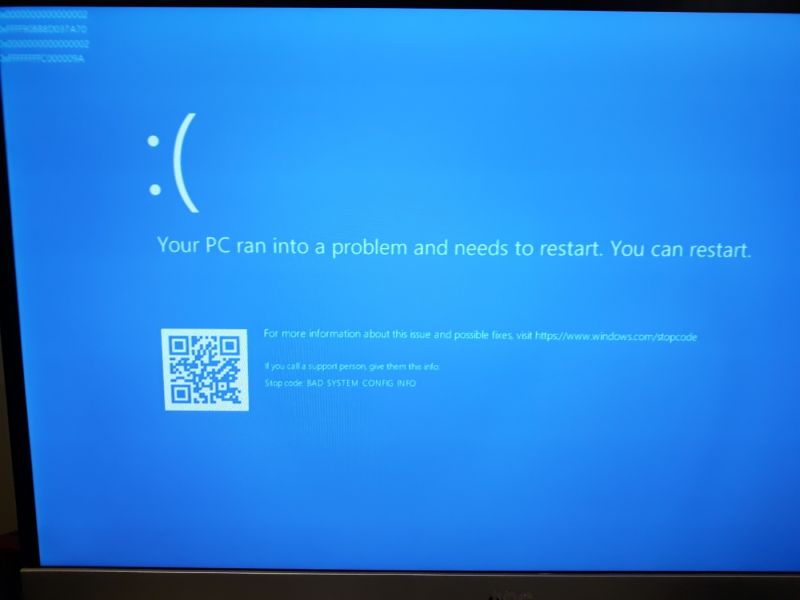

At work everybody uses Windows 10. We recently switched from Vagrant to Docker. It's bad enough I have to use Windows, it's even worse to use Docker for Windows. If, God forbid, you're ever in this situation and have to choose, pick Vagrant. It's way better than whatever Docker is doing... So upon installing version 2.2.0.0 of Docker for Windows I found myself in the situation where my volumes would randomly unmount themselves and I was going crazy as to why my assets were not loading. I tried 'docker-compose restart' or 'down' and 'up -d', I went into Portainer to check and manually start containers and at some point it works again but it doesn't last long before it breaks. I checked my yml config and asked my colleagues to take a look. They also experience different problems but not like mine. There is nothing wrong with the configuration. I went to check their github page and I saw there were a lot of issues opened on the same subject, I also opened one. It's been over a week and I found no solution to this problem. I tried installing an older version but it still didn't work. Also I think it might've bricked my computer as today when I turned on my PC I got greeted by a BSOD right at system start up... I tried startup repair, boot into safe mode, system restore, reset PC, nothing works anymore, it just doesn't boot into Windows... I had to use a live USB with Linux Mint to grab my work files. I was thinking that my SSD might have reached its EoL as it is kinda old but I didn't find any corrupt files, everything is still there. I can't help but point my finger at Docker since I did nothing with this machine except tinkering with Docker and trying to make it work as it should... When we used Vagrant it also had its problems but none were of this magnitude... And I can't really go back to Vagrant unless my team also does so...

-

I’m currently working with a devops team in the company to migrate our old ass jboss servers architecture to kubernetes.

They’ve been working on this for about a year now, and it was supposed to be delivered a few months back. No one knew what was going on, and last week they managed to have something to show at least.

I’ve never seen anything so bad in my short life as a developer, to the point that the main devops guy can’t even understand his own documentation to add ci/cd to a project.

It goes from manually triggering pipelines in multiple branches for configuration and secrets, to a million unnecessary env variables to set, to docker images lacking almost all the requisites necessary to run the apps.

You can clearly see the dude goes around the internet copy pasting stuff without actually understanding what's going on behind it, as every time you ask him for the guts of the architecture he changes the topic.

And the worst of all this: as my team is their counterpart on development, we’ve been fighting for weeks to make them understand that it is impossible to proceed with this process with over 100 apps and 50+ developers.

Long story short, for the last two weeks I’ve been fixing the “dev ops” guy's mess in terms of processes and documentation, but I think this is gonna end really badly. Not to sound cocky or anything, but the developers' level is really low; add docker and k8s on top of that and you have a recipe for disaster.

Still enjoying it as I have no fault there, and dude got busted.

-

After learning a bit about alife I was able to write another one. It took some false starts to understand the problem, but afterward I was able to refactor the problem into a sort of alife that measured and carefully tweaked various variables in the simulator, as the algorithm explored the parameter space. After a few hours of letting the thing run, it successfully returned a remainder of zero on 41.4% of semiprimes tested.

This is the bad boy right here:

tracks[14]

[15, 2731, 52, 144, 41.4]

As they say, "he ain't there yet, but he got the spirit."

A 'track' here is just a collection of critical values and a fitness score that was found given a few million runs. These variables are used as input to a factoring algorithm, attempting to factor any number you give it. These parameters tune or configure the algorithm to try slightly different things. After some trial runs, the results are stored in the last entry in the list, and the whole process is repeated with slightly different numbers, ones that have been modified and mutated so we can explore the space of possible parameters.

Naturally this is a bit of a hodgepodge, but the critical thing is that for each configuration of numbers representing a track (and its results), I chose the lowest fitness of three runs.
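To make the loop a bit more concrete, here is a rough sketch of the idea as described so far: mutate a track's parameters, score each candidate as the lowest fitness of three runs, and keep the fitness as the last entry of the list. This is not the actual code behind the result above. `jiggle`, `track_fitness`, `search` and especially `toy_trial` are hypothetical names, and `toy_trial` is a trivial stand-in for the real factoring trial, which isn't shown here.

```python
import random

def jiggle(params, rng, scale=0.1):
    """Return a mutated copy: each value nudged by up to +/-scale (relative), kept >= 1."""
    return [max(1, round(p * (1 + rng.uniform(-scale, scale)))) for p in params]

def track_fitness(params, run_trial, runs=3):
    """Score a parameter set conservatively: the lowest fitness over `runs` trials."""
    return min(run_trial(params) for _ in range(runs))

def search(initial, run_trial, generations=200, seed=0):
    """Hill-climb over parameter space; returns params with fitness as the last entry."""
    rng = random.Random(seed)
    best = list(initial)
    best_fit = track_fitness(best, run_trial)
    for _ in range(generations):
        candidate = jiggle(best, rng)
        fit = track_fitness(candidate, run_trial)
        if fit > best_fit:  # keep only mutations that improve the worst-case score
            best, best_fit = candidate, fit
    return best + [best_fit]

def toy_trial(params):
    """Hypothetical stand-in for a factoring trial: peaks when params[0] == 50."""
    return 100.0 - abs(params[0] - 50)

baseline = track_fitness([15, 2731, 52, 144], toy_trial)
track = search([15, 2731, 52, 144], toy_trial)
print(track)
```

Taking the minimum of three runs only matters when the trial is noisy (random semiprimes per run); with the deterministic toy above all three runs agree.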

Meaning hypothetically there's room for improvement with a tweak of the core algorithm, or even modifications or mutations to the track variables. I have no clue if this scales up to very large semiprime products, so that would be one of the next steps to test.

Fitness also doesn't account for return speed. Some of these may have a lower overall fitness, but might in fact have a lower basis (the value of 'i' that needs to be found in order for the algorithm to return rem%a == 0) for correctly factoring a semiprime.

The key thing here is that because all the entries generated here are dependent on an outer loop that specifies [i] must never be greater than a/4 (for whatever the lowest factor generated in this run is), we can potentially push down the value of i further with some modification.

The entire exercise took 2.1735 billion iterations (3-4 hours, wasn't paying attention) to find this particular configuration of variables for the current algorithm, but as before, I suspect I can probably push the fitness value (percentage of semiprimes covered) higher, either with a few additional parameters, or a modification of the algorithm itself (with a necessary rerun to find another track of equivalent or greater fitness).

I'm starting to bump up to the limit of my resources, I keep hitting the ceiling in my RAD-style write->test->repeat development loop.

I'm primarily using the limited number of identities I know, my gut intuition, combined with looking at the numbers themselves, to deduce relationships as I improve these and other algorithms, instead of relying strictly on memorizing identities like most mathematicians do.

I'm thinking if I want to keep that rapid write->eval loop I'm gonna have to upgrade, or go to a server environment to keep things snappy.

I did find that "jiggling" the parameters after each trial helped to explore the parameter space better, so I wrote some methods to do just that. But what I wouldn't mind doing is taking this a bit of a step further, and writing some code to optimize the variables of the jiggle method itself, by automating the observation of real-time track fitness, and discarding those changes that lead to the system tending to find tracks with lower fitness.

I'd also like to break up the entire regime into a training vs test set, but for now the results are pretty promising.

I knew if I kept researching I'd likely find extensions like this. Of course tested on billions of semiprimes, instead of simply millions, or tested on very large semiprimes, the effect might disappear, though the more I've tested, and the larger the numbers I've given it, the more the effect has become prevalent.

Hitko suggested in the earlier thread, based on a simplification, that the original algorithm was a tautology, but something told me for a change that I got one correct. Without that initial challenge I might have chalked this up to another false start instead of pushing through and making further breakthroughs.

I'd also like to thank all those who followed along, helped, or cheered on the madness:

In no particular order: demolishun, scor, root, iiii, karlisk, netikras, fast-nop, hazarth, chonky-quiche, Midnight-shcode, nanobot, c0d4, jilano, kescherrant, electrineer, nomad, vintprox, sariel, lensflare, jeeper.

The original write up for the ideas behind the concept can be found at:

https://devrant.com/rants/7650612/...

If I left your name out, you better speak up, there's only so many invitations to the orgy.

Firecode already says we're past max capacity!

-

Can somebody explain to me why developers (especially web) have to micromanage every single thing into its own f*ing component?

Story time: I have an input form with some tabs. I discovered that the UI Library (Devextreme) has a nice little component that handles forms, (including tabs, groups, etc.). So I make a page, configure tabs, inputs and whatnot.

Now, I already knew that my coworkers can't handle html that is bigger than a page. So instead of putting the configs in the frontend, I made nice files where I store those, to keep them nicely clean and separated.

Me feeling very good, went off to have a nice lunch break.

I come back and read the message from my coworker, asking me to make every tab its own component and form and load them into a separate Tab-Component, instead of using the built-in configuration

......

WHAT?

Like seriously. I have a f*ing library that handles that, why the f*ck do I need to reinvent the wheel here!?

Supposedly it's to make it more maintainable, easier to find bugs, flatten the hierarchy.

Here's a little wake up call you morons: Nesting hundreds of components into each other does *not* help you with that.

It just creates a rabbit-hole of confusing containers that you have to navigate and dissect every time you try to find something.

"Can I fix the bug in the detail Page? Sure I'll tell you tomorrow when I find out which fucking component the bug results from".

Components are there to be *reused*. It's using inheritance for reusing code all over again, but worse.

But maybe I'm just old fashioned, and conservative. Maybe I'm just a really bad software engineer, because nowadays everything seems to result in architectures spreading hundreds of folders, thousands of files with nothing but arbitrary cut-offs with no real benefit, that I don't see the value in.

-

Since day 0, I have been fond of computers. One of my first plush was called "DataDog" and looked like a CRT screen with dog ears around. According to my mum I was "addicted" to it.

At year 2, my dad was arranging some music on some software while I was watching him from his lap. Quick jump to the present: for the past 10 years I have run my own home studio with three guitars, two keyboards, one bass, three monitors, a microphone, an amp and a cabinet... coincidence? I think not!

Fast forward 5 years later (so I'm 6-7 years old), and I was playing with the legendary pinball game on Win95, as well as Flight Simulator. Then I was hogging mum's laptop to play settlers II (<3 that game), I eventually got my computer, and got into Quake III Arena being aged 10 (and had to tell my mum that game was safe for my age haha - I eventually removed the blood effects).

The Quake 3 Arena chapter is interesting: it got me into router configuration as I wanted to open a port through the router to host my own dedicated games with friends, it got me into DNS configuration (I was running a no-DNS client that allowed friends to join me through a DNS while having a dynamic IP) and eventually... to modifying .cfg files to tune my server as I wanted it. No programming here but a nice intro into :)

Then I hated the fact everybody would point their finger at me and say "geek" - I was only 13, fragile, sensitive, and I wanted everything but a bad image on me.

Meanwhile I continued on getting interested in hardware and configure my own computers, and investing myself into music production.

Then, university. "What do you want to study?" I thought of everything but IT, fleeing the image of a "geek". Turns out it was a waste of time, and at 21 yo I got into web development (well, just html and css), then learned a bit of PHP, finally got a specialized 2-year training and now here I am!

I was bound to be in IT either way since day 0, and funny fact, I've used every windows edition since Win95.

-

I just started a new job last week. Old-school sysadmin role for a pretty old-school company, but the pay is nice and the kids've gotta eat.

They gave me a windows laptop. I haven't used windows for work or as a daily driver since 2016, and now, a week into trying to make this machine work for me, I have the following observations to report.

WSL is nice. It's nice to have it installed (though actually installing it was an adventure unto itself), and to set alacritty to open my default user prompt straight into that is very nice. As terminal emulators are by far my most used piece of software, that's nice to have.

Command-line software management through powershell, winget, and chocolatey are also very nice.

I like the accessibility offered by autohotkey, though there is something of a learning curve on it. Once I get better with it, I suspect that what follows will be largely mitigated.

The Bad:

In general, Windows is janky. It feels like it's all kinda taped together without any particular cohesion in mind. As a desktop, it feels decidedly amateur, compared to the feature-mountain polish of MacOS, and especially compared to the flexibility and infinite possibilities of Linux.

Lots of screen real estate is wasted, with window decorations, and fonts that look terrible at smaller sizes, because the antialiasing of fonts is just terrible. Almost all the features I depend on in other desktops, like ad-hoc searches and launches (alfred, rofi), are, again, janky. They work, but they typically require more typing than alfred or rofi. I admit I haven't spent weeks on this problem yet, but I haven't found a workable solution with wox, hain, and keypirinha. Quick searches like what you get with alfred, alfred workflows, and the swiss army knife that is rofi, just aren't possible or reliable with the tools I've used so far, and most require some kind of indexing agent to fully function.

It beggars imagination that a desktop in which users are subjected to "default apps" that is purported to be acceptable for enterprise, professional use, does not have a default entry for text editor. I installed nvim-qt, and I want to use it to edit anything and everything I ever edit with text, but all too often, apps have hard-coded instructions to open text files with notepad.

I want to open certain URLs with firefox, certain ones with firefox developer edition, and others with vivaldi, and yet there is not an app available that I have seen yet in my searches that allows me to set this kind of configuration. I found one that's supposed to, but it just ignores everything I put into its config, and just opens MS Edge for everything. Jank.

Simple things take too long. Like the delay between when I laboriously hit ctrl-alt-del to bring up the login and when the actual text field appears, and the delay between that and when I want to start using the computer.

Changing some settings requires a reboot. Updating some software requires a reboot. Updating permissions on something sometimes requires a reboot. And those are all on top of the frequent requests to reboot for updates.

I would have thought Windows would have overcome most of the issues that create these problems, but it's just, as I said, amateur.

-

A very long rant.. but I'm looking to share some experiences, maybe a different perspective.. huge changes at the company.

So my company is starting our microservices journey (we have 359 retail websites at this moment)

First question was: What to build first?

The first thing we had to do was to decide what we wanted to build as our first microservice. We went looking for a microservice that can be used read only, consumers could easily implement without overhauling production software and is isolated from other processes.

We’ve ended up with building a catalog service as our first microservice. That catalog service provides consumers of the microservice information of our catalog and its most essential information about items in the catalog.

By starting with building the catalog service the team could focus on building the microservice without any time pressure. The initial functionalities of the catalog service were being created to replace existing functionality which were working fine.

Because we chose such an isolated functionality we were able to introduce the new catalog service into production step by step. Instead of replacing the search functionality of the webshops using a big-bang approach, we chose A/B split testing to measure our changes and gradually increase the load on the microservice.

Next step: Choosing a datastore

The search engine that was in production when we started this project was making use of Solr. Due to the use of Lucene it was performing very well as a search engine, but from an engineering perspective it lacked some functionality. It fell short if you wanted to run it in a clustered environment, configuring it was hard and not user friendly and, last but not least, development of Solr seemed to have ground to a halt.

Elasticsearch started entering the scene as a competitor for Solr and brought interesting features. Still using Lucene, which we were happy with, it was built with clustering in mind, provided out of the box. Managing Elasticsearch was easy since there are REST APIs for configuration and as a fallback there are YAML configurations available.

We decided to use Elasticsearch since it provides us the strengths and capabilities of Lucene with the added joy of easy configuration, clustering and a lively community driving the project.

Even bigger challenge? Which programming language will we use

The team responsible for developing this first microservice consists of a group of web developers. So when looking for a programming language for the microservice, we went searching for a language close to their hearts and expertise. At that time a typical web developer at least had knowledge of PHP and Javascript.

What we’ve noticed during researching various languages is that almost all actions done by the catalog service will boil down to the following paradigm:

- Execute a HTTP call to fetch some JSON

- Transform JSON to a desired output

- Respond with the transformed JSON

Actions that easily can be done in a parallel and asynchronous manner and mainly consists out of transforming JSON from the source to a desired output. The programming language used for the catalog service should hold strong qualifications for those kind of actions.
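The fetch, transform, respond pattern above can be sketched roughly as follows. The service itself was built on Node.js; this sketch uses Python's `asyncio` purely as a stand-in to show the same non-blocking shape. `fetch_item` is a hypothetical placeholder for the HTTP call to the search backend (it just sleeps and returns canned JSON), and the document fields are assumptions, not our actual catalog schema.

```python
import asyncio
import json

async def fetch_item(item_id: int) -> str:
    """Hypothetical stand-in for an HTTP call; returns raw JSON text."""
    await asyncio.sleep(0.01)  # simulate network latency without blocking the loop
    return json.dumps({
        "_id": item_id,
        "_source": {"title": f"Item {item_id}", "price_cents": 1000 + item_id},
    })

def transform(raw: str) -> dict:
    """Reshape a backend document into the output the consumer wants."""
    doc = json.loads(raw)
    src = doc["_source"]
    return {"id": doc["_id"], "title": src["title"], "price": src["price_cents"] / 100}

async def handle_request(item_ids) -> str:
    """Fetch all items concurrently, transform each, respond with one JSON body."""
    raws = await asyncio.gather(*(fetch_item(i) for i in item_ids))
    return json.dumps([transform(r) for r in raws])

response = asyncio.run(handle_request([1, 2, 3]))
print(response)
```

Because the three fetches overlap instead of running back to back, total latency stays close to that of a single call, which is what matters for something like type-ahead.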

Another thing to notice is that some functionalities that will be built using the catalog service will result into a high level of concurrent requests. For example the type-ahead functionality will trigger several requests to the catalog service per usage of a user.

To us, PHP and .NET at that time weren’t sufficient enough to us for building the catalog service based on the requirements we’ve set. Eventually we’ve decided to use Node.js which is better suited for the things we are looking for as described earlier. Node.js provides a non-blocking I/O model and being event driven helps us developing a high performance microservice.

The leap to start programming Node.js is relatively small since it basically is Javascript. A language that is familiar for the developers around that time. While Node.js is displaying some new concepts it is relatively easy for a developer to start using it.

The beauty of microservices and the isolation it provides, is that you can choose the best tool for that particular microservice. Not all microservices will be developed using Node.js and Elasticsearch. All kinds of combinations might arise and this is what makes the microservices architecture so flexible.

Even when Node.js or Elasticsearch turns out to be a bad choice for the catalog service it is relatively easy to switch that choice for magic ‘X’ or component ‘Z’. By focussing on creating a solid API the components that are driving that API don’t matter that much. It should do what you ask of it and when it is lacking you just replace it.

Many more headaches to come later this year ;)

-

Western Digital takes forever to RMA their hard drive under warranty. Had to buy a spare just to get my NAS back up and running before I lose everything (I only have a RAID array with one-drive fault tolerance). Too bad I only have 5 drive slots. Four 4TB WD Reds and an SSD for caching = 10TB usable space in this configuration. Actually this kinda pisses me off... I pay for 4TB drives and I get 3.64TB. For the 4 drives, that's a loss of 1.44TB.
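For what it's worth, the 3.64 figure is most likely a units difference rather than missing capacity. That assumes the NAS reports sizes in binary units (TiB) while the drive label uses decimal terabytes, which is the usual situation but isn't confirmed by the post. A quick sketch of the arithmetic:

```python
TB = 10**12   # terabyte as printed on the drive label (decimal)
TIB = 2**40   # tebibyte, which many systems display as "TB" (binary)


def labelled_tb_to_tib(tb: float) -> float:
    """Convert a labelled decimal-TB capacity to binary TiB."""
    return tb * TB / TIB


per_drive = labelled_tb_to_tib(4)       # one 4TB drive as reported in TiB
gap_four_drives = 4 * (4 - per_drive)   # apparent "loss" across four drives

print(f"{per_drive:.2f}")        # 3.64
print(f"{gap_four_drives:.2f}")  # 1.45
```

The unrounded gap comes out at about 1.45. The 1.44 above is what you get when you round each drive to 3.64 first.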

-

Yesterday when I came into the office my laptop (in a workstation) could not connect to the internet because of a bad IP config. The auto configuration just didn't work...

Solved the problem by opening the laptop-lid for about a second, just wtf