
Search - "cpus"


Toilets and race conditions!

A co-worker asked me what issues multi-threading and shared memory can have. So I explained the whole locking thing to him. He wasn't quite sure whether he got it.

Me: imagine you go to the toilet. You check whether there's enough toilet paper in the stall, and it is. BUT now someone else comes in, does business and uses up all paper. CPUs can do shit very fast, can't they? Yeah and now you're sitting on the bowl, and BAMM out of paper. This wouldn't have happened if you had locked the stall, right?

Him: yeah. And with a single thread?

Me: well, if you're alone at home in your apartment, there's no reason to lock the door because there's nobody to interfere.

Him: ah, I see. And if I have two threads, but no shared memory, then it is as if my wife and I are at home, each with a toilet of our own, so we don't need to lock either.

Me: exactly!
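
The stall story maps onto a check-then-act race fairly directly. Below is a minimal Python sketch of it, not anything from the original conversation: the `Stall` class, its method names and the `pause` hook are all made up for illustration, and the hook exists only so the bad interleaving can be forced on purpose instead of waiting for the scheduler to produce it.

```python
import threading


class Stall:
    """A toilet stall with a limited paper supply (the shared memory)."""

    def __init__(self, sheets):
        self.sheets = sheets
        self.lock = threading.Lock()  # the lock on the stall door

    def visit_unlocked(self, needed, pause=None):
        # Check, then act, with nothing stopping someone else in between.
        if self.sheets >= needed:
            if pause is not None:
                pause()  # hypothetical hook: somebody else sneaks in here
            self.sheets -= needed
            return True
        return False

    def visit_locked(self, needed):
        # Check and act both happen while the door is locked.
        with self.lock:
            if self.sheets >= needed:
                self.sheets -= needed
                return True
            return False


def demo_race():
    stall = Stall(sheets=5)
    # The first visitor has checked the paper; a second one uses it all up
    # before the first gets around to using it.
    stall.visit_unlocked(5, pause=lambda: stall.visit_unlocked(5))
    return stall.sheets  # negative: somebody sat down without paper


def demo_locked(visitors=8):
    stall = Stall(sheets=5)
    results = []
    threads = [
        threading.Thread(target=lambda: results.append(stall.visit_locked(5)))
        for _ in range(visitors)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    # Exactly one visitor gets the paper, and the count never goes negative.
    return stall.sheets, results.count(True)
```

With a single thread, or with two threads that each own their own `Stall`, the lock is unnecessary, which is the point of the apartment and two-toilets answers.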

Users: Are you going to make your Products cheaper and put better GPUs and CPUs in your desktops and laptops?

Apple: 7


Today (as a joke), I asked my class if there were any “professional HTML programmers” who could help me.

Surprisingly, a couple of people came over with smirks on their faces. I thought they were going along with the joke.

Turns out, they were serious. They legitimately believed that they were professional HTML programmers and talked to me in such a condescending way that I was speechless.

“This is called a file. See that part after the dot? That’s what makes it HTML. HTML is an incredibly hard programming language and powers CPUs and the computer that you are using.”

I didn’t know how to respond. Hopefully they were joking.

Love how a teacher of mine described IO wait for CPUs on a blackboard.

"That's calculation time." *draws three small lines on the blackboard* "And this is IO wait." *draws a really long line, goes out of the class, out of the school, comes back* "Yes, this is IO wait. No matter how good and fast the CPU in your gaming PC is, if your hard drive is shit, everything is shit."

I'm getting ridiculously pissed off at Intel's Management Engine (etc.), yet again. I'm learning new terrifying things it does, and about more exploits. Anything this nefarious and overreaching and untouchable is evil by its very nature.

(tl;dr at the bottom.)

I also learned that -- as I suspected -- AMD has their own version of the bloody thing. Apparently theirs is a bit less scary than Intel's since you can ostensibly disable it, but I don't believe that because spy agencies exist and people are power-hungry and corrupt as hell when they get it.

For those who don't know what the IME is, it's hardware godmode. It's a black box running obfuscated code on a coprocessor that's built into Intel CPUs (all Intel CPUs from 2008 on). It runs code continuously, even when the system is in S3 mode or powered off. As long as the PSU is supplying current, it's running. It has its own MAC and IP address, transmits out-of-band (so the OS can't see its traffic), some chips can even communicate via 3G, and it can accept remote commands, too. It has complete and unfettered access to everything, completely invisible to the OS. It can turn your computer on or off, use all hardware, access and change all data in RAM and storage, etc. And all of this is completely transparent: when the IME interrupts, the CPU stores its state, pauses, runs the SMM (system management mode) code, restores the state, and resumes normal operation. Its memory always returns 0xff when read by the OS, and all writes fail. So everything about it is completely hidden from the OS, though the OS can trigger the IME/SMM to run various functions through interrupts, too. But this system is also required for the CPU to even function, so killing it bricks your CPU. Which, ofc, you can do via exploits. Or install ring -2 keyloggers. Or do fucking anything else you want to.

tl;dr IME is a hardware godmode, and if someone compromises this (and there have been many exploits), their code runs at ring -2 permissions (above kernel (0), above hypervisor (-1)). They can do anything and everything on/to your system, completely invisibly, and can even install persistent malware that lives inside your bloody CPU. And guess who has keys for this? Go on, guess. You're probably right. Are they completely trustworthy? No? You're probably right again.

There is absolutely no reason for this sort of thing to exist, and its existence can only make things worse. It enables spying of literally all kinds, it enables CPU-resident malware, bricking your physical CPU, reading/modifying anything anywhere, taking control of your hardware, etc. Literal godmode. And some of it cannot be patched, meaning more than a few exploits require replacing your CPU to protect against.

And why does this exist?

Ostensibly to allow sysadmins to remote-manage fleets of computers, which it does. But it allows fucking everything else, too. And keys to it exist. And people are absolutely not trustworthy, especially those in power -- who are most likely to have access to said keys.

The only reason this exists is because fucking power-hungry doucherockets exist.

Intel's new CPUs are faster, so your text editor can be based on a slower version of Chrome, with an even slower, bloated JavaScript framework. Congratulations!


Don't be ridiculous and say Macs are good for gaming 😣, they aren't.

Their graphics are terrible, the CPUs are shocking, the RAM is... average. The fact that they have fast SSDs is great.

But that's it. For their price points it's not worth it, end of story.

I used to say Macs are worth getting if you're a designer or video editor...

I have now changed my position due to the shittiness of their latest products.

I'm not really much of a gamer anymore, too busy 😓, but I can read specs.

People won't build games for Macs, especially now, since it would lower the quality of their product. I actually don't even see a point in having a Mac in today's world.

Apple is meant to push boundaries... They are doing it all wrong now 😐

Accept it... And get a PC 5 times faster than its Apple counterpart.

I do fucking hate Apple, but I respected them in the past, if for nothing but their clever marketing getting sheep to buy their products. Now I just don't respect them; they could at least try to build something remotely worth the money.

A Developer is desperate: his Java application servers are unresponsive, thousands of zombie threads are sucking up all the CPUs, memory is leaking everywhere, the garbage collector has gone crazy, the cluster sessions are fucked....

The Developer goes to the closest bridge, ties a stone to his neck and gets ready to jump.

Suddenly a bearded old man with a fiery look runs toward him, yelling:

- stop stop!!!! Your application is not scaling and misconfigured, your servers are melting, cpu usage is not sustainable anymore, but don't despair

The Developer, puzzled, looks at him:

-I've never seen you...how do you know...

- Hey, man, I'm the Devil. I know everything. All your problems are solved. I'll give you magic functions. They are called Lambda.

You'll never have to worry about your servers, scalability, security, configuration and shit.

The Developer seems astonished but relieved:

- Ok, sounds great! let's try it - suddenly suspicion creeps in - hmmmm but you are the Devil....so...you want something back, don't you?

(the Devil nods lightly with a diabolic smile)

- ...and...you want my soul, I guess...

- your soul??? come on!!! - the Devil bursts out laughing - we are in 2019. I don't care about your soul. I want your ass.

- What!???!!!?

- yes, I want to fuck your ass

The Developer quickly evaluates the situation.

A few moments of pain or slight discomfort (?) in exchange for magic lambda. It could be worth it. He accepts.

After a while of rough anal fucking, the devil asks

- Hey, how old are you anyway?

- 45, why?

- Oh jeeez...45!!!??? and you still believe in the devil?

Okay, story time.

Back during 2016, I decided to do a little experiment to test the viability of multithreading in a JavaScript server stack, and I'm not talking about the Node.js way of queuing I/O on background threads, or about WebWorkers that box and convert your arguments to JSON and back during a simple call across two JS contexts.

I'm talking about JavaScript code running concurrently on all cores. I'm talking about replacing the god-awful single-threaded event loop of ECMAScript – the biggest bottleneck in software history – with an honest-to-god, lock-free thread-pool scheduler that executes JS code in parallel, on all cores.

I'm talking about concurrent access to shared mutable state – a big, rightfully-hated mess when done badly – in JavaScript.

This rant is about the many mistakes I made at the time, specifically the biggest – but not the first – of which: publishing some preliminary results very early on.

Every time I showed my work to a JavaScript developer, I'd get negative feedback. Like, unjustified hatred and immediate denial, or outright rejection of the entire concept. Some were even adamantly trying to discourage me from this project.

So I posted a sarcastic question to the Software Engineering Stack Exchange, which was originally worded differently to reflect my frustration, but was later edited by mods to be more serious.

You can see the responses for yourself here: https://goo.gl/poHKpK

Most of the serious answers were along the lines of "multithreading is hard". The top voted response started with this statement: "1) Multithreading is extremely hard, and unfortunately the way you've presented this idea so far implies you're severely underestimating how hard it is."

While I'll admit that my presentation was initially lacking, I later made an entire page to explain the synchronisation mechanism in place, and you can read more about it here, if you're interested:

http://nexusjs.com/architecture/

But what really shocked me was that I had never understood the mindset that all the naysayers adopted until I read that response.

Because the bottom-line of that entire response is an argument: an argument against change.

The average JavaScript developer doesn't want a multithreaded server platform for JavaScript because it means a change of the status quo.

And this is exactly why I started this project. I wanted a highly performant JavaScript platform for servers that's more suitable for real-time applications like transcoding, video streaming, and machine learning.

Nexus does not and will not hold your hand. It will not repeat Node's mistakes and give you nice ways to shoot yourself in the foot later, like `process.on('uncaughtException', ...)` for a catch-all global error handling solution.

No, an uncaught exception will be dealt with like any other self-respecting language: by not ignoring the problem and pretending it doesn't exist. If you write bad code, your program will crash, and you can't rectify a bug in your code by ignoring its presence entirely and using duct tape to scrape something together.

Back on the topic of multithreading, though. Multithreading is known to be hard, that's true. But how do you deal with a difficult solution? You simplify it and break it down, not just disregard it completely; because multithreading has its great advantages, too.

Like, how about we talk performance?

How about distributed algorithms that don't waste 40% of their computing power on agent communication and pointless overhead (like the serialisation/deserialisation of messages across the execution boundary for every single call)?

How about vertical scaling without forking the entire address space (and thus multiplying your application's memory consumption by the number of cores you wish to use)?

How about utilising logical CPUs to the fullest extent, and allowing them to execute JavaScript? Something that isn't even possible with the current model implemented by Node?

Some will say that the performance gains aren't worth the risk. That the possibility of race conditions and deadlocks isn't worth it.

That's the point of cooperative multithreading. It is a way to smartly work around these issues.

If you use promises, they will execute in parallel, to the best of the scheduler's abilities, and if you chain them then they will run consecutively as planned according to their dependency graph.

If your code doesn't access global variables or shared closure variables, or your promises only deal with their provided inputs without side-effects, then no contention will *ever* occur.

If you only read and never modify globals, no contention will ever occur.

Are you seeing the same trend I'm seeing?

Good JavaScript programming practices miraculously coincide with the best practices of thread-safety.
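
To make the shape of that argument concrete, here is a rough Python analogue. It is not Nexus code and does not use its API; `square` and `parallel_then_chain` are hypothetical names. Independent tasks that only read their inputs are handed to a thread pool, and the dependent step is submitted only once its inputs exist, which is roughly what chaining promises expresses. In CPython the GIL limits how much of this truly runs at the same time, so treat it as a sketch of the structure, not of the performance.

```python
from concurrent.futures import ThreadPoolExecutor


def square(x):
    # Pure: depends only on its argument and touches no shared state,
    # so any number of these can run at once without contention.
    return x * x


def parallel_then_chain(values):
    with ThreadPoolExecutor(max_workers=4) as pool:
        # Independent tasks: free to run in any order, or simultaneously.
        # pool.map still returns results in input order.
        squares = list(pool.map(square, values))
        # Dependent step: only submitted after its inputs are ready,
        # like a promise chained onto the ones above.
        total = pool.submit(sum, squares).result()
    return squares, total
```

Calling `parallel_then_chain([1, 2, 3, 4])` gives `([1, 4, 9, 16], 30)` however the pool schedules the work, because no task writes to anything another task reads.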

When someone says we shouldn't use multithreading because it's hard, do you know what I like to say to that?

"To multithread, you need a pair."

Prof: So yeah this is going to be difficult. We're going to make the scalable math library. Then we have to make a functional finite elements library using that. Then make a multiphysics engine using that library. This could easily take your entire PhD. Are you prepared for that?

Me: May I show you something?

Prof: Sure, sure.

Me, showing him: We can use MOOSE to code in the multiphysics. It's built atop libMesh for the finite elements. Which can be built with a PETSc backend. Which we can run on GPUs and CPUs, up to 200k cores. All of this has been done for us. This project will, at worst, take a couple months.

Prof: ...

Guys, libraries. Fucking. Libraries. Holy fucking shit.

My dad is quite supportive.

After I graduated, he brought home a bunch of broken CPUs for me to fix.

We are devs right?

We have cpus and gpus lying around right?

We are still alive... right? 🤔

How about we do our part and utilise our PCs for helping with COVID-19 research.

I've stumbled across this little tool that not only keeps me warm at night but helps researchers with several diseases.

https://foldingathome.org/iamoneina...

It's like a bitcoin miner but for research purposes. No, it's not a dodgy bitcoin miner.

Oh, and feel free to keep yourself anonymous, as there are stats that will identify your username - when they work.

There are installers for Windows, Mac, and Linux distros, so everyone can get involved.

Oh, nice. Now Windows 11 doesn't just fuck up AMD CPUs with lack of performance, but also SSDs. NTFS at its finest, but see the tags.


I just installed some anti-virus. Little did I know it was also going to install their 'System "Speed up" software'.

It was using 50% of my disk, 40% of my RAM and 100% of one of my CPUs... great job at speeding up my system

So recently I did a lot of research into the internals of Computers and CPUs.

And I'd like to share a result of mine.

First of all, take some time to look at the code down below. You see two assembly listings and two command lines.

The assembly code is designed to test how the instructions "enter" and "leave" compare to manually doing what they are shorthand for.

Enter and leave create a new stack frame: this means that they create a new temporary stack. The stack is where the compiler puts local variables. On the right side, you can see how I create my own stack frame by using

push rbp

mov rbp, rsp

sub rsp, 0

(I won't get into details behind why that works).

Okay. Why is this even relevant?

Well: there is the assumption that enter and leave are very slow. This is due to raw numbers:

In some paper I saw (I couldn't find the link, I'm sorry), enter was said to use up 12 CPU cycles, while the manual stack setup would require 3 (push + mov + sub => 1 + 1 + 1).

When I compile an empty function, I get pretty much what you'd expect just from the raw numbers of CPU cycles.

HOWEVER, then I add the dummy code in the middle:

mov eax, 123

add eax, 123543

mov ebx, 234

div ebx

and magically - both sides have the same result.

Why????

For one thing, there is CPU prefetching. This is the CPU loading in RAM before it's done executing the current instruction (this is how anti-debugger code works, btw. Might make another rant on that). Then there is the fact that the CPU usually starts work on the next instruction while the current instruction is processing IFF the register currently involved isn't involved in the next instruction (that would cause a lot of synchronisation problems). Now notice that the CPU can't do any of that when manually entering and leaving. It can only start doing the mov eax, 123 while performing the sub rsp, 0.

----------------

NOW: notice that the code on the right didn't take any precautions like making sure that the stack is big enough. If you sub too much stack at once, the stack will be exhausted; that's what we call a stack overflow. enter implements checks for that, and emits an interrupt if there is a SO (take this with a grain of salt, I couldn't find a resource backing this up). There is another type of check I don't fully get (stack level checks), so I'd rather not make a fool of myself by writing about them.

Because of all those reasons I think that compilers should start using enter and leave again.

========

This post showed very well that bare numbers can often mislead.

After seeing this "old" picture, I want to let the guys who are in love with AMD know that before Ryzen I was able to cook my fuckin' breakfast eggs on their fuckin' CPUs.

Big mistakes lead to great solutions, so shut the fuck up, AMD: your core code is probably full of vulnerabilities, but no one cares about your ultra-threaded architecture.

Random fact #1

AMD (Advanced Micro Devices) was producing Intel 8080 clones (the AMD Am9080) before developing its own CPUs. Originally they were produced without an Intel license. The clone was developed from photographs of the Intel 8080 itself and of its logic diagrams. These processors were much cheaper than the original model. Later AMD and Intel came to an agreement and the Am9080 was fully licensed, making AMD an official second-source vendor.

And yeah, a few years later we got a war between those two giants. Remember when AMD almost beat Intel's market share in the mid-2000s?

Bonus fact: there has been an AMD logo on Ferrari Formula 1 cars since 2002 (look at the front wing)

I'm having an existential crisis with this client.

We are spending millions of $s every year to make sure the product's performance is perfect. We are testing various scenarios, fine-tuning PLABs: the environment, application, middleware, infra,... And then we provide our recommendations to the client: "To handle load of XX parallel users focusing on YY, yy and Zy APIs, use <THIS> configuration".

And what does the client do?

- take our recommendations and measure the wind speed outside

- if speed is <20m/s and milk hasn't gone bad yet, add 2x more instances of API X

- otherwise add 3xX, 1xY and give more CPUs to Z

- split the setup in half and deploy in 2 completely separate load-balanced prod environments.

- <do other "tweaking">

- bomb our team with questions "why do we have slow RTs?", "why did the env crash?", "why do we have all those errors?", "why has this been overlooked in PLABs?!?"

If you're improvising despite our recommendations, wtf are we doing here???

One day I will crack. Hopefully, not sometime soon.

Building my own router was a great idea. It solved almost all of my problems.

Almost.

I recently started building a GL CI pipeline for my project. >100 jobs for each commit - quite a bundle. Naturally, I used up all my free runner time after a few commits, so I had to build myself a runner. "My old i7 should do well", I thought to myself, and deployed the GL runner on my local k8s cluster.

And my router is my k8s master.

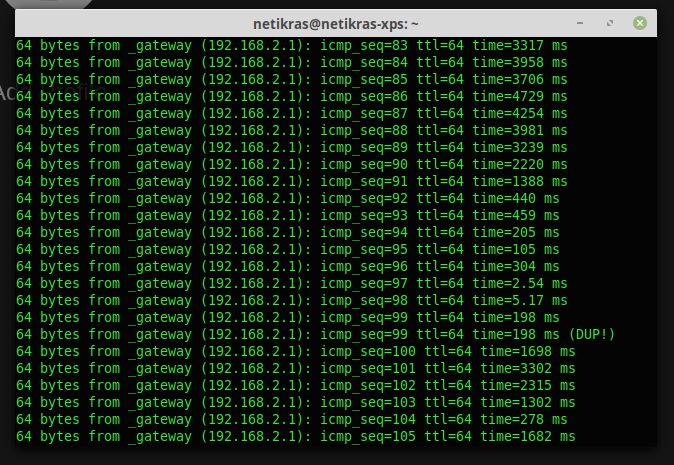

And this is the ping to my router (via wifi) every time after I push to GL :)

DAMN IT!

P.S. at least I have Noctua all over that PC - I can't hear a sound out of it while all the CPUs are at 100%

Last week my university decided to give away old hardware to students (CPUs, displays, keyboards, mice, speakers, printers etc.). My roommate got me this beast. I was so excited and decided to boot it up, only to see GRUB error 22 :( I think the hard drives were wiped before it was handed out.

I've never set up a server before, and I've been trying to boot Ubuntu Server from a USB drive, but it's not detecting the OS installation files. I've been searching all around to make this work but it's not fucking working. I don't have any other cables or a CD drive to try something else. I want to make this work. I have exams next week and I can't stop thinking about this. Goddammit

Friend: I'm making a benchmark program for CPUs. Wanna help me?

Me: Yeah sure, what language are you using?

Friend: Java.

They all want to make games like this, and minimum requirements are:

Processor: Intel Core i5 3470 @ 3.2GHZ (4 CPUs) / AMD X8 FX-8350 @ 4GHZ (8 CPUs)

Memory: 8GB.

Video Card: NVIDIA GTX 660 2GB / AMD HD7870 2GB.

Sound Card: 100% DirectX 10 compatible.

HDD Space: 65GB.

Latest Atom with Electron 1.6 seems to be pegging multiple CPUs and maxing out RAM and swap. Looks like I should start trying different editors again. :(

This Book....

Before doing any systems programming you should definitely read this book... Most people think they know what they are doing, but in fact they are completely clueless, and the worst part is you don’t realize how clueless you are... You don’t know what you don’t know, nor do you know how much you really don’t know... and most people are part of this group, including myself lol.

Computers are much more than a bunch of CPUs, buses and peripherals (embedded folks realize this). But this goes beyond embedded: this is a systems book on the architecture of computers in general.

Learning only Java, C#, Python and the other SDKs/APIs, and spending your life with horse blinders on about what’s going on below, only sets you up for failure in the future, and when you reach that point it’s gonna be a shocker. It could be tomorrow, it could be 20 years from now, but most people with those horse blinders get to that point and have that “experience”; there's no avoiding the inevitable lol.

I really enjoyed this book's quantitative approach to teaching the subject, especially for understanding parallelism and multi-core systems.

Any modern tech stack is this thing times 100 if we wanna count the layers between our users and our CPUs

Thank Python and Next.js for that

I think I still have a 64MB HDD somewhere on a shelf at my late grandpa's house.

Now they make CPUs with caches of that capacity...

o tempora..

EDIT:

FFS! This CPU cache contains >44 floppy disks! I've never even had that many!!!
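
For the record, the floppy count can be checked in a few lines of Python. This is only a sanity check under two assumptions the post doesn't spell out: that the cache is 64 MiB, and that "floppy" means the 3.5-inch "1.44 MB" kind, which actually holds 1440 KiB. The `floppies` helper is just an illustrative name.

```python
FLOPPY_BYTES = 1440 * 1024  # a "1.44 MB" 3.5-inch floppy holds 1440 KiB


def floppies(capacity_bytes):
    """How many such floppies fit into the given capacity."""
    return capacity_bytes / FLOPPY_BYTES


cache_floppies = floppies(64 * 1024 * 1024)  # 64 MiB of cache (assumed)
print(f"{cache_floppies:.1f} floppies")
```

Under those assumptions it comes out a little above 45 disks; the naive 64 / 1.44 gives about 44.4, so ">44" holds either way.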


Wow, Apple really did it: they arrived where Microsoft was 20 years ago.

Apple ads used to be about the experience, the lifestyle, simple and elegant.

Now all we get is '3.7 times faster', '13x faster graphics card', Max and Pro CPUs (probably Home, Home Pro and Home Premium too some time...).

'Advanced thermal architecture'...

Bullshit bingo deluxe... 🤦

!dev

Guys, we need to talk raw performance for a second.

Fair disclaimer - if you for some reason work at Intel, you may feel offended.

I have one fucking question.

What's the point of fucking ultra-low-power-extreme-potato CPUs like intel atoms?

Okay, okay. Power usage. Sure. So that's one.

Now tell me, why in the fucking world would anyone prefer to wait 5-10 times longer for the same action to happen while indeed also consuming 5-10 times less power?

Can't you just tune down a "big" core and call it a day? It would be around... a fuckton faster. I have my i7-7820HK CPU, and if I dial it back to 1.2 GHz, my WINDOWS machine with a lot of background tasks works fucking faster than an Atom-powered freaking LUBUNTU that has only Firefox open.

Tested i7-7820HK vs Atom x5-Z8350.

Opening a new tab and navigating to Google took under 1 second on my i7 machine, and the Atom took almost 1.5 seconds, while having the higher clock (turbo boost).

Guys, that's the 7820HK dialled down to 1.2 GHz at 0.81 V.

Seriously.

I felt everything was lagging, but the OS was still much more responsive than the Atom machine...

What the fuck, Intel. It's pointless. I think I'm not the only one who would gladly pay a little bit more for such a difference.

The i7 had clear disadvantages here: Windows instead of Linux, quite a few background processes instead of a clean background, and a lower f***ng clock speed.

TL;DR

Intel Atom processors use less power but waste a lot of time, while a bigger CPU using a little bit more power would complete the task faster, thus Atoms are just plain pointless garbage.
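
The "5-10 times longer at 5-10 times less power" complaint comes down to energy per task, which is power multiplied by time. A tiny Python sketch with made-up wattages and durations (none of these figures are measurements from the test above, and `energy_joules` is just an illustrative helper):

```python
def energy_joules(power_watts, seconds):
    """Energy used by a task: average power multiplied by run time."""
    return power_watts * seconds


# Hypothetical figures: a big core that finishes fast versus a small core
# drawing 5x less power but taking 5x longer.
big_core = energy_joules(power_watts=10.0, seconds=1.0)
small_core = energy_joules(power_watts=2.0, seconds=5.0)
```

With these numbers both cores burn the same 10 J on the task; the only difference is that the small one makes you wait five times longer for it.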

PS.

Tested in frustration at work. Apparently they bought 3 craptops for presentations or some shit like that, and they have mental problems because the cheapest shit on the market is shittier than they anticipated ;-;

fucking seriously ;-;

For all the hate against Windows I built up over the now 8 years of using Linux as my main OS, I now feel Windows 10 is quite good.

I got a slightly beefier desktop lately. I'd been using just laptops for the last 8 years (8D), so I got this urge to get a desktop for gaming and bought an entry-level machine: a Ryzen 5 2400G. I put my lovely Linux Mint on it and... the fucking machine was hanging whenever the load was too high, and the load was too high too often because of React/Node etc.

I gave up in less than a day. I just did a quick search: some people said something about secure boot or whatnot, others claimed that Ryzen CPUs had no problem with Mint. I got fed up quickly and did not try any solution on Linux. Then I installed Windows 10, installed the goddamned drivers from the provided DVD... and since then it has been a breeze.

The dark mode is gorgeous and there's no hanging at all... I'm just sad that Mint did not work so well. I wanted consistency between my laptop and desktop, and I loved Mint above everything. But well, some things improve while you're not looking at them. Win 10 is quite good; I'll keep my desktop as a gaming/programming PC with Win 10, and the laptop will be the auxiliary programming machine.

¯\_(ツ)_/¯

This is a guide for technology newbies who want to buy a laptop but have no idea what the SPECS mean.

1. Brand

If you like Apple, and love their !sleek design, go to the nearest Apple store and tell them "I want to buy one. Recommendations?"

If you don't like Apple, well, buy anything that fits you. Read more below.

2. Size

Screens run from 11 to 15 inches and weights from 850 g to over 2 kg. Lots of options. Buy whatever you like.

//Fun part coming

3. CPU

This is the power of the brain.

For example,

Pentium is Elementary Schoolers

i3 is Middle Schoolers

i5 is High Schoolers

i7 is University People

Dual-core is 2 people

Quad-core is 4 people

Quiz! What is i5 Dual-core?

A) 2 High Schoolers.

Easy peasy, right?

Now if you have a smartphone and ONLY use Messaging, Phone, and Whatsapp (lol), you can buy Pentium laptops.

If not, I recommend at least i3

Also, there are numbers after the CPU name, like i3-6100.

The 6 means 6th generation.

The bigger the number, the more recent the generation.

Think of 6xxx as Stone Age people,

7xxx as Bronze Age people,

8xxx as Iron Age people,

and so on.
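
If you'd rather let code read the number for you, here is a small Python sketch of that rule. It assumes model names shaped like `i3-6100` or `i7-7820HK` and treats everything before the last three digits as the generation, which also covers five-digit names such as `i7-10700`. `intel_generation` is a made-up helper name, and newer naming schemes that don't follow this pattern are not handled.

```python
import re


def intel_generation(model):
    """Return the generation from a name like 'i3-6100', or None."""
    match = re.fullmatch(
        r"i[3579]-(\d{4,5})[A-Za-z]*", model.strip(), flags=re.IGNORECASE
    )
    if match is None:
        return None  # not a name this sketch understands
    digits = match.group(1)
    # The last three digits are the model within the generation;
    # whatever comes before them is the generation number.
    return int(digits[:-3])
```

So `intel_generation("i3-6100")` gives 6, and a Pentium, which doesn't follow this naming, gives `None`.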

4. RAM

This is the size of the desk.

There are 4GB, 8GB, 16GB, 32GB, and so on.

Think of 4GB as a small desk that only fits one book.

8GB as a desk that fits a laptop with a keyboard and a mouse.

16GB as a normal-sized desk that fits some books, a laptop, and food.

32GB as a boss-sized desk.

And so on.

When you do multitasking, and the desk is too small...

You don't feel comfortable right?

It is good to have plenty of space.

Same with RAM.

But the larger the desk, the more expensive it gets, so buy one you can afford.

If you watch some YouTube videos in Chrome and do some document work in Office, buy at least 8GB. 16GB is recommended.

5. HDD/SSD

You take stuff such as books and the laptop out of the basket (HDD/SSD) and put it on your desk (RAM).

There are two kinds of baskets.

The super big ones: because they are so big, they are bulky and it is hard to get stuff out of them. But they are cheap. (HDD)

Then there are somewhat smaller ones that are expensive compared to the HDD, called SSDs. This basket is right next to you, and it is super easy to get stuff out of it. The opening time is faster as well.

SSDs used to be expensive, but as time goes on they get bigger and cheaper. So most laptops have SSDs these days.

There are 128GB, 256GB, 512GB, 1024GB (=1TB), and so on. You can buy what you want. I recommend 256GB for normal use.

Game guy? At least 512GB.

6. Graphics

It is the eyesight.

Most computers don't have a dedicated graphics card; the graphics come with the CPU. Intel CPUs include graphics, but the graphics powered by Intel aren't that good.

But NVIDIA graphics cards are great. Recommended for gamers. But it is a bit more expensive.

So TL;DR

Buying a laptop is

- Pick the person and the person's clothes (brand and design)

- Pick the space for the person to stay (RAM, SSD/HDD)

- Pick how smart they are (CPU)

- Pick how many (Core)

- Pick the generation (6xxx, 7xxx ....)

- Pick their eyesight (graphics)

And that's pretty much it.

Super easy to buy a laptop right?

If you have suggestions or questions, make sure to leave a comment, upvote this rant, and share it with your friends!

Windows: You paid for the whole CPU. I’m gonna use the full CPU.

Ubuntu: The CPU tax is 50%. You know the rules.

Void: Welcome to our tax haven! CPU tax is now 20%.

SliTaz: You paid for the whole CPU. I’m gonna give you the whole CPU.

Tiny Core Linux, running on a literal potato while rendering the UI in 8K 400 FPS: You guys are getting CPUs?

Rant.

FUCK-WINDOWS-11! What crap! I got a gazillion CPUs, a shitload of memory and Windows is like:

“Don’t care! Gonna run slow and also not work at all…”

Give up. Share Target API is already on Android, even in garbage like Samsung Internet. Desktop native apps are already history, mobile apps are sure to follow. Led by Apple Silicon, we will add JS-specific hardware to the CPUs and conquer the world. JavaScript will be the only language, with the exceptions being C and Lisp.

What if people, life, humanity, the universe is just a cluster of CPUs running a giant Recurrent Neural Network algorithm? 🤔

-Sun and food == power source

-People == semiconductors

-Earth/a Galaxy == a single CPU

-Universe == a local grouping of nearby nodes; so far the ones we've discovered are dead or not on the same data transport protocol/port as us

-Universal Expansion == the search algorithm

-Black holes == sector failures

-Big Bang == God turns on his PC, starts the program

-Big Crunch == rm -rf

I'm gonna puke if I see more whining about Android Studio from a guy having 8GB of RAM and running an IDE, an emulator and a browser. To remind everyone, decent Android phones have 4GB of RAM or more. They have memory cards with IO speeds comparable to an SSD. They have 10-core CPUs. And still, people want to develop on potatoes.


I just found this in my "Religious views" info on FB, thought I would share it even tho it's just a paste from somewhere. Don't slaughter me if this is a recurring thing on here 😂

THE 0x17'RD PSALM

The Computer is my taskmaster; I need not think.

He maketh me to write flawless reports

He leadeth me with Computer-Aided Instruction

He restoreth my jumbled files

He guideth me through the program with menus.

Yea, though I walk through the valley of

the endless GOTO,

I will fear no error messages;

For thy User's Manual is with me.

Thy disk drive and thy Pac-Man-they comfort me.

Thou displayest a spreadsheet program before me

in the presence of my supervisor.

Thou enableth the printout;

the floor runneth over (with paper).

Surely good jobs and good pay shall follow me

All the days of my life;

And I shall access your CPUs, forever. -

OK semi rant... Would like suggestions

Boss wants me to figure out some way to find the maximum load/users our servers/API/database can handle before it freezes or crashes **under normal usage**.

HOW THE FUCK AM I SUPPOSED TO DO THAT WITH 1 PC? The question seems to me to mean how big a DDoS can it handle?

I'm not sure if this is vague requirements, they don't know what they're talking about, or they think I can shit gold... for nothing... or I'm missing something (I'm thinking: how many concurrent requests can a single machine even make with 4 CPUs?)

"Oh just spin up some cloud servers"

Uh well I'm a developer, I've never used Chef or Puppet, and our cloud sucks: it's like a web GUI, so not only do I have to create the instances manually, I would also have to upload the testing programs to each one manually... And set up the envs needed to run them.

Docker you say? There's no Docker here... Prebuilt VM images? Not supported.

And it's due in 2 weeks... -
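For the load-testing question above: one rough way to probe for a breaking point from a single PC is to ramp up concurrency step by step and stop at the first level that produces errors. The sketch below is only that, a sketch. `FakeServer` is a hypothetical stand-in for the real API (it fails once more than `capacity` requests are in flight), and the levels and request counts are made-up numbers. Against a real service you would replace `server.handle` with an actual HTTP call and also watch latency, not just errors.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor


class FakeServer:
    """Hypothetical stand-in for the real API: fails when too many requests are in flight."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.in_flight = 0
        self.lock = threading.Lock()

    def handle(self):
        with self.lock:
            self.in_flight += 1
            overloaded = self.in_flight > self.capacity
        try:
            time.sleep(0.01)  # pretend to do some work
            if overloaded:
                raise RuntimeError("server overloaded")
        finally:
            with self.lock:
                self.in_flight -= 1


def error_count(request, concurrency, total_requests):
    """Fire total_requests calls from `concurrency` worker threads and count failures."""

    def attempt(_):
        try:
            request()
            return 0
        except Exception:
            return 1

    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        return sum(pool.map(attempt, range(total_requests)))


def max_clean_concurrency(request, levels, requests_per_level=40):
    """Return the highest concurrency level that finished with zero errors."""
    best = 0
    for level in levels:
        if error_count(request, level, requests_per_level) > 0:
            break
        best = level
    return best


server = FakeServer(capacity=4)
best = max_clean_concurrency(server.handle, levels=[1, 2, 4, 8, 16])
print("highest error-free concurrency:", best)
```

A single machine with a few cores can usually keep a lot of I/O-bound requests in flight this way, since the threads spend most of their time waiting on the network rather than burning CPU.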

Changed the Ora DB instance type to one having 2x fewer CPUs. That alone increased the db perf ~4x+

-

One of the largest companies on the continent. Uses Oracle on AWS RDS with the beefiest resources available. It comes to the point where lowering the number of CPUs boosts the DB performance up (concurrency). Point is - Oracle is sweating hard during our tests. You can almost feel the smell of those hot ICs on AWS servers.

And then someone at higher levels, while sitting on a pooper, has a great idea: "I know! Let's migrate to Aurora! They say it's so much faster than anything there is!"

*migration starts*

Tests after migration: the database on the largest instance possible shits itself at 10% of the previous load: the CPU% is maxed out (sy:60%,us:40%), IO is far, far from hitting the limits.

Is it really possible Aurora will cope with the load better than Oracle? Frankly, I haven't seen any database perform better than Oracle yet. Not sure if it's worth investing time in this adventure.. -

I wanna go back to the age where a C program was considered secure and isolated based on its system interface rather than its speed. I want a future where safety does not imply inefficiency. I hate spectre and I hate that an abstraction as simple and robust as assembly is so leaky that just by exposing it you've pretty much forfeited all your secrets.

And I especially hate that we chose to solve this by locking down everything rather than inventing an abstraction that's a similarly good compile target but better represents CPUs and therefore does not leak. -

I'm sick of companies putting 1.1GHz CPUs and 4GB of RAM in a laptop. Windows takes up all of the processing power and nothing is left for programs.

-

Concerning my last post on the two Commodores, (https://devrant.com/rants/963917/...) here's the great story behind the boxed one.

So at the place where I interned over the summer, I helped the tech dept. (IT herein) move to a new bldg. We had to dismantle most of the network infrastructure stuff, so we were in the server room a lot. First day on the job, Boss shows me server room, I'm amazed and all because this is my first real server room lol.

We walk around, and there's a Commodore 64 box on a table, just kinda there. I ask, "Uh, is that actually a C64?" B: "Yeah, that's E's." Me: "E?" (name obfuscated) B: "Yeah, E's a little crazy." Me: "Is it actually in there?" B: "Absolutely, check it out!" *opens box and sees my jaw drop* Me: "Well, alrighty then!" So that lingers in my mind for a while until I meet E. He is a fuckin hilarious guy, personifying the C64, making obscure and professionally inappropriate references. Everyone loves him, until he pranks them. He always did.

We’re in the server room, wiping some Cisco switches or something, and we have some downtime, so I ask him about the 64, and he's like "Yeah, I haven't had time to diagnose her issues much. If you want her, go ahead, see if you can make it work!" Me: "You're kidding, right?" E: "Nah, not at all!"

That day I walked out with a server motherboard, 2 Xeon CPUs and some RAM for the server (all from an e-waste bin, approved for me to take home from boss) and a boxed C64. Did a multimeter test on the PSU pins, one of the 9vAC pins is effectively dead (1.25v fluctuating? No thanks.) but everything else is fine except for a loose heatsink and a blown fuse in each C64. Buying the parts tonight. I wanna see this thing work! -

(Warning: This rant includes nonsense, nightposting, unstructured thoughts, a dissenting opinion, and a purposeless, stupid joke in the beginning. Reader discretion is advised.)

honestly the whole "ARM solves every x86 problem!" thing doesn't seem to work out in my head:

- Not all ARM chips are the same, nor are they perfectly compatible with each other. This could lead to issues for consumers, for developers or both. There are toolchains that work with almost all of them... though endianness is still an issue, and you KNOW there's not gonna be an enforced standard. (These toolchains also don't do the best job on optimization.)

- ARM has a lot of interesting features. Not a lot of them have been rigorously checked for security, as they aren't as common as x86 CPUs. That's a nightmare on its own.

- ARM or Thumb? I can already see some large company is going to INSIST AND ENFORCE everything used internally to 100% be a specific mode for some bullshit reason. That's already not fun on a higher level, i.e. what software can be used for dev work, etc.

- Backwards compatibility. Most companies either over-embrace change and nothing is guaranteed to work at any given time, or become so set in their ways they're still pulling Amigas and 386 machines out of their teeth to this day. The latter seems to be a larger portion of companies from what I see when people have issues working with said company, so x86 carryover is going to be required that is both relatively flawless AND fairly fast, which isn't really doable.

- The awkward adjustment period. Dear fuck, if you thought early UEFI and GPT implementations were rough, how do you think changing the hardware model will go? We don't even have a standard for the new model yet! What will we keep? What will we replace? What ARM version will we use? All the hardware we use is so dependent on knowing exactly what other hardware will do that changing out the processor has a high likelihood of not being enough.

I'm just waiting for another clusterfuck of multiple non-standard branching sets of PCs to happen over this. I know it has a decent chance of happening, we can't follow standards very well even now, and it's been 30+ years since they were widely accepted. -

Hey fellow devRanters,

I'm sure some of you have read about the newest vulnerabilities in Intel's Management Engine (ME). I feel like ME and similar "features" are unacceptable backdoors into our systems. Unfortunately Intel and AMD do not offer their customers the option to acquire CPUs that lack these backdoors and make disabling them rather impossible 😒

Thus my question: Do you guys know of any 64-bit "open-source" CPU on the market that is production-ready and suitable for high-traffic web applications? Please note that I don't consider FPGAs to be viable options, since I don't trust Xilinx and Altera either. -

I need to estimate how much ram and CPUs my team will need next year for our apps... That have yet to be built.

We load a lot of data feeds with batch processes running on a few large machines (some can use like 30 GB RAM at times...), which should be a lot less if we get the data real time I hope...

But wondering how to estimate well... I sorta did a worst-case analysis where I just multiply and sum # CPUs/memory * nodes * approx apps...

Comes out to be like 600 CPUs and 800 RAM... So wondering if that's ok...
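The multiply-and-sum estimate described above is easy to put in a few lines, which also makes it easier to tweak per app later. This is only a sketch of that arithmetic: the app names and every number below are invented for illustration and are not the poster's figures.

```python
from dataclasses import dataclass


@dataclass
class AppProfile:
    """Hypothetical per-app sizing: resources per node times number of nodes."""
    name: str
    cpus_per_node: int
    ram_gb_per_node: int
    nodes: int


def worst_case_totals(apps):
    """Sum CPUs and RAM assuming every app gets its own dedicated nodes."""
    total_cpus = sum(app.cpus_per_node * app.nodes for app in apps)
    total_ram_gb = sum(app.ram_gb_per_node * app.nodes for app in apps)
    return total_cpus, total_ram_gb


# Made-up example inputs.
apps = [
    AppProfile("feed-loader", cpus_per_node=16, ram_gb_per_node=32, nodes=4),
    AppProfile("batch-reports", cpus_per_node=8, ram_gb_per_node=16, nodes=2),
    AppProfile("realtime-api", cpus_per_node=4, ram_gb_per_node=8, nodes=6),
]

cpus, ram_gb = worst_case_totals(apps)
print(f"worst case: {cpus} CPUs, {ram_gb} GB RAM")
```

Because it assumes nothing is shared, this gives an upper bound. Apps that can be packed onto the same machines will pull the CPU number down.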

RAM is ok but # of CPUs is way higher bc now all the apps basically run on their own machines... -

So AMD is introducing new CPUs with NPUs, aka Ryzen AI, for inferencing, but only for some of the lineup, making Ryzen AI even less relevant.

Not to mention that AMD comes up with new HW that nobody knows how to use, that no SW exists for, and...

Oh, wait. Making AI capable HW while completely failing on the SW side. Now it makes sense. It's just AMD being AMD. -

Oh, me? I am so excited about all the computing power that's gonna be stolen from people who had updated their Intel CPUs last month.

I dunno what they're up to but I'm sure it's very exciting. I'm lost between Skynet and a one world government cryptocurrency mining they will use the power for.

What do you think? -

For what fucking reason has the ability to set the date and time programmatically been blocked on Android?!

Why can you create fucking invisible apps that work in the background, mine cryptos, steal your data, but they decided that something like this is considered dangerous?

Can anyone give me a logical explanation?

P.S.

There are cases (big pharma companies) where the users don't have access to the internet and no NTP server is available on the local network, so the ability for an app to get the time from a SQL server and set it at runtime is crucial, especially when the user, for security reasons, can't have access to the device settings and change it himself.

"System apps" can do it, but you would have to change the firmware of a device to sideload an external "System app" and in that case it would lose the warranty.

So, yeah, fucking Google assholes, there are cases where your dumb decisions make the others struggle every other day.

Give more power to third party developers, dumb motherfuckers.

It's not that difficult to ask the user, once, to give the SET_TIME permission.

It was possible in the past...

P.S.2

Windows Mobile 6.5 was a masterpiece for business.

It still could be, just mount better CPUs on PDAs and extend the support. But no, "Android is the future". What a fucking bad future.

-

Do you trust github/gitlab/bitbucket? If you self-host, do you trust your hosting? do you trust gitea? if you don't use gitea, do you trust git? do you trust the way you got your copy of git? do you trust your os, as it might have tampered with your git? did you read the code? do you trust your internet connection that might have changed some packets? do you trust your https implementation? did you examine the traffic? do you trust your traffic sniffing tool? if you use your own hardware, do you trust it? do you trust its CPU/bios? if it's RISC-V, do you trust chinese vendors of your cpu? they might have put some backdoors there. do you trust your other hardware? okay, you have the money to make your own cpus. do you trust your employees? do you trust your silicon? do you trust the measuring equipment you used to check if your cpu is safe? do you trust the literature in the field? but did you verify it though? did you?

it's always who you trust. if you want to bake an apple pie from scratch, you must first create the universe. -

So I recently finished a rewrite of a website that processes donations for nonprofits. Once it was complete, I would migrate all the data from the old system to the new system. This involved iterating through every transaction in the database and making a cURL request to the new system's API. A rough calculation yielded 16 hours of migration time.

The first hour or two of the migration (where it was creating users) was fine, no issues. But once it got to the transaction part, the API server would start using more and more RAM. Eventually (30 minutes), it would start hitting OOMs and such. For a while, I just assumed the issue was a lack of RAM so I upgraded the server to 16 GB of RAM.

Running the script again, it would approach the 7 GiB mark and max out all 8 CPUs. At this point, I assumed there was a memory leak somewhere and the garbage collector was doing its best to free up anything it could find. I scanned my code time and time again, but there was no place I was storing any strong references to anything!

At this point, I just sort of gave up. Every 30 minutes, I would restart the server to fix the RAM and CPU issue. And all was fine. But then there was this one time where I tried to kill it, but I got the error: "fork failed: resource temporarily unavailable". Up until this point, I believed this was simply a lack of memory...but none of my swap was in use! And I had 4 GiB of cached stuff!

Now this made me really confused. So I did one search on the Internet and apparently this can be caused by many things: a lack of file descriptors or even too many threads. So I did some digging, and apparently my app was using over 31 thousand threads!!!!! WTF!

I did some more digging, and as it turns out, I never called close() on my network objects, thus leaving ~30 new "worker" threads per iteration of the migration script. Thanks Java, if only finalize() was utilized properly. -
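The bug in the migration rant above (network objects never closed, each one leaving worker threads behind) can be reproduced in miniature. The original was Java. What follows is a Python analog, not the poster's code: `Client` is a hypothetical class that owns one background thread, and the two `migrate_*` functions are invented to contrast the leaky loop with one that closes every client via a `with` block.

```python
import threading


class Client:
    """Hypothetical network client that owns a background worker thread."""

    def __init__(self):
        self._stop = threading.Event()
        # The worker just waits until close() signals it to exit.
        self._worker = threading.Thread(target=self._stop.wait, daemon=True)
        self._worker.start()

    def send(self, payload):
        return len(payload)  # placeholder for a real network call

    def close(self):
        self._stop.set()
        self._worker.join()

    def __enter__(self):
        return self

    def __exit__(self, *exc_info):
        self.close()


def migrate_leaky(records):
    """One client per record, never closed: every worker thread stays alive."""
    for record in records:
        Client().send(record)


def migrate_clean(records):
    """Same loop, but the with-block guarantees close() runs each iteration."""
    for record in records:
        with Client() as client:
            client.send(record)


baseline = threading.active_count()
migrate_clean(["txn"] * 50)
after_clean = threading.active_count()
migrate_leaky(["txn"] * 50)
after_leaky = threading.active_count()
print("threads leaked by clean loop:", after_clean - baseline)
print("threads leaked by leaky loop:", after_leaky - after_clean)
```

The garbage collector reclaiming the `Client` object does not stop its thread, which is the same trap as relying on a finalizer. In Java the rough equivalent of the `with` block would be try-with-resources on an `AutoCloseable`.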

Anyone hear about EMIB from Intel? It seems like it might be a game changer for getting workstation power into Ultrabook form factors. They worked with a team at AMD to engineer it, and even made it sound like they're using it to combine core i processors with AMD graphics.

I ask because there has apparently been news about it since August but I've only just heard about it today.

https://anandtech.com/show/12003/... -

I want to rant about my college.

I studied computer engineering, we spent 5 years studying circuits, diodes, CPUs etc ..

It's cool and all, but in my country we don't have a single computer manufacturing company and all computer engineering students work in software development. But they didn't teach us a single useful thing for work! We only studied Java and C++, nothing more, and our professors claim that we can learn software development from the internet.

Our professors, who don't even know how to debug code, not to mention how to full-screen a PowerPoint presentation.

My point is college is trash. -

That moment when you're away from home and you're performing a fucking system update (upgrade?) on fucking Gentoo and your fucking Chromebook can't fucking connect to your fucking computer at home running fucking Arch to offload fucking compilations over fucking SSH and fucking Polybar tells you that the fucking RAM is almost full and that both of your fucking Broadwell CPUs are fucking 100% on usage and even fucking Vim can't open your fucking work to add the fucking documentation for a fucking assignment from a fucking online course due today and everything's fucking stopped in time but the fucking window manager is still working normally?!!!!

rant rand word -> prepend "fucking" away from home one-line is not necessarily >= 1 sentences rage i3wm is best

-

Is AMD gonna beat Intel to 5nm tho?

https://notebookcheck.net/AMD-essen...

(god i can't wait for picometer shit, that'd be a good milestone to see) -

So I'm working on some communications app which bridges the main server/database with some equipment on the field. Now this equipment works in a redundancy pair: two cpus, A and B, both connected via ethernet, one active, the other standby/replicating and no comms on it. One obvious requirement is that when this equipment swaps the active cpus the comms app should switch as well. Fair enough, going with this into testing phase.

This guy, from qa, got some instructions from someone else:

1. Trigger the soft switch from equipment so that cpus got swapped.

2. Remove the ethernet cable from the standby unit.

3. Observe the communications.

And the test goes like: cpu A is active, B is standby. Switch is triggered, B becomes active, A goes into standby. The cable is pulled out from cpu B.

Test result: failed, no comms ever -

Had a dream about computers on earth mostly stopping working for no apparent reason, yes, again. But this time, they still work on Mars, so we go there, at least some of us. UAC-esque, Doom 3-ish aesthetics, but in a good way, no death and no darkness. No hell plot though, we’re all fine. Both earth and mars are equally semi-livable, but in different ways. For some reason, we can’t ship new CPUs to mars, and a 775 Pentium is considered a good CPU. We use SQL and HDDs. Elon is also there, but he’s nothing, a peasant compared to other scientists and engineers who are a part of the exodus. I had some problems with food and shelter initially, but @netikras helped me

-

N64 emulation possible on Nintendo hardware? Are you SUUUUUUURE???

According to GBATemp:

Wii:

CPU: Single-core PPC, 729MHz

GPU: 243 MHz single-core

RAM: 88MB

Possible? Yes (always full-speed)

New 3DS:

CPUs: 2, Quad-core ARM11 (804MHz), Single-core ARM9 (134MHz)

GPU: 268MHz single-core

RAM: 256MB

Possible? No

Switch:

CPU: Octa-core (2x Quad-core ARMv8 equivalent), 1.02GHz

GPU: 307.2MHz-768MHz (max. 384MHz when undocked)

RAM: 4GB

Possible? Barely (5FPS or less constant, only half the instruction set possible to implement)

this all makes total fucking sense

-

1. 72 hours on a heavily used street with many holes and open windows (not the OS) on a hot day for those who stop the people who work against air pollution;

2. die nvidia;

3. a PC with a built-in 10 kW fusion reactor, water heater, 2 AMD CPUs of the latest gen, 2 of the highest-tier AMD graphics cards and a mainboard which follows the spec of the CPUs;

That should cover everything I need. -

Folks

I need your input on the following

How important do you think a high CPU core count is in your daily workflow?

I'm planning on buying a new ryzen 5000 processor, while I am going to game the hell out of it I'm also planning to run wsl2, a ton of chrome tabs, maybe have multiple IDEs for developing random stuff, maybe some virtual machines for some experimentations, some docker containers for some selfhosted software and lastly open demanding games while having everything else open.

Will a 6-core 5600X be enough? Or do you think investing in a 5900X will be worth it down the line? (let's say for the next 7 years)

Assume that the GPU will handle the games I'm going to play and the RAM is going to be 32GB for now -

Little addition on my rant about the enter and leave instructions being better than push mov sub for stackframes:

I had that debate with a friend of mine, who tried the same code ... and failed to get enter to be as fast. In fact, for him, enter was twice as slow, and on his older computer even 3 times as slow.

Mhh... pretty bad. basically blows up my whole point.

I tried the code on my computer... Can't reproduce the error.

Weird.

"Which CPU are you on?"

>"I'm on Intel"

Both of his computers are on Intel. I use an AMD Ryzen 1600. Now this might be a bit of a hasty conclusion, but I think it's safe to say that Intel should at least do better for SOME parts of their CPUs. -

int totalHourSpentOnFixingBootflags = 5;

while (!isWorking) {

Clover.flags = "-x -v -s -f nv_disable=1 injectNvidia=false ncpi=0x2000 cpus=1 dart=0 -no-zp maxmem=4096" + Internet.getRandomBootFlags();

}

-

Recently we noticed a part of our web application wasn't working. After some hours of looking into it (it's an old, convoluted application), it became clear another part of the application timed out trying to get a connection from the db connection pool.

We call db admins, they respond "oh yeah looks like the DB CPUs are at 100% load. I'll do something about it." and a short while later everything was working. So now I think, our hours of looking into it and a lot of people not being able to work could have been avoided if the DB admins had some form of alerting. But also we could improve our monitoring too, had we tracked calls made to our DB.

Question: Do you think I should call the DB guys, telling them they need alerting, or should I add tracing/monitoring around our DB calls, or both? Do you think I should consider any additional actions I haven't thought of? -

It's weird no one seems to be mentioning a major problem with modern Intel CPUs: Turbo Boost. On newer laptops I always turn this gimmick off now. Half the time the safeties don't kick in and you end up with 100+ degrees C on your CPU for a sustained amount of time (especially when compiling!). Keep that happening over a couple of years and I would not be surprised if it contributed heavily to battery stress and the shortening of the product's life... *cough* Apple "80°C+ idling is totally normal" *cough* (Actual reply from Apple when I queried them about my McToasty 2015!)

Anyone else noticed this issue? -

Intel management engine pwned over USB

https://bleepingcomputer.com/news/...

With every day that passes, this Intel ME rabbit hole just keeps getting deeper. -

There are still Android games that push CPUs and gfx to their limits? And actually worth playing/buying?

Haven't seen any non-arcade, console-style games in a long time?

-

TIL all x86-compatible CPUs have ISA support and the ability to be reset by the keyboard controller via A20 line, all thanks to not wanting to alienate a single person who refuses to upgrade.

you can put ISA or AGP devices straight onto the fucking LPC bus and it'll work on most CPUs. Y'know, on the same bus as the TPM in most?

you already know i'm gonna put a floppy controller, a parallel port, and an IDE controller on the PCI-e bus with an ISA daughterboard -

Microsoft announced a new security feature for the Windows operating system.

According to a ZDNet report, the feature is named "Hardware-Enforced Stack Protection". It allows applications to use the local CPU hardware to protect their code while running inside the CPU's memory. As the name says, its primary role is to protect the memory stack (where an app's code is stored during execution).

"Hardware-Enforced Stack Protection" works by enforcing strict management of the memory stack through a combination of modern CPU hardware and shadow stacks (copies of a program's intended execution flow).

The new "Hardware-Enforced Stack Protection" feature plans to use the hardware-based security features in modern CPUs to keep a copy of the app's shadow stack (intended code execution flow) in a hardware-secured environment.
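As a rough mental model of the shadow stack idea described here, a toy simulation in Python: every call records the return address twice, once on the ordinary stack that buggy code can overwrite and once on a protected copy, and every return compares the two. This is only an illustration under stated assumptions. `ToyCpu` and `ControlFlowViolation` are invented names, the addresses are arbitrary, and raising an exception on a mismatch is a modeling choice rather than a description of how Windows or the hardware reports it.

```python
class ControlFlowViolation(Exception):
    """Raised when a return address does not match the shadow copy."""


class ToyCpu:
    """Toy model: an ordinary stack plus a protected shadow stack."""

    def __init__(self):
        self.stack = []         # writable by (buggy) application code
        self.shadow_stack = []  # assumed to be out of the application's reach

    def call(self, return_address):
        # A call pushes the return address onto both stacks.
        self.stack.append(return_address)
        self.shadow_stack.append(return_address)

    def ret(self):
        # A return pops both and refuses to continue if they disagree.
        address = self.stack.pop()
        expected = self.shadow_stack.pop()
        if address != expected:
            raise ControlFlowViolation(
                f"return to {address:#x} blocked, expected {expected:#x}"
            )
        return address


cpu = ToyCpu()

# Normal call and return: both copies agree.
cpu.call(0x401000)
normal_return = cpu.ret()

# Simulated stack buffer overflow: the attacker overwrites the return address.
cpu.call(0x401000)
cpu.stack[-1] = 0xDEADBEEF
try:
    cpu.ret()
    hijack_blocked = False
except ControlFlowViolation:
    hijack_blocked = True

print("normal return ok:", normal_return == 0x401000)
print("hijack blocked:", hijack_blocked)
```

The whole scheme rests on the shadow copy being unreachable from ordinary writes, which is the part the hardware is supposed to provide and this toy simply assumes.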

Microsoft says that this will prevent malware from hijacking an app's code by exploiting common memory bugs such as stack buffer overflows, dangling pointers, or uninitialized variables which could allow attackers to hijack an app's normal code execution flow. Any modifications that don't match the shadow stacks are ignored, effectively shutting down any exploit attempts. -

nothing new, just another rant about php...

php, PHP, Php, whatever is written, wherever is piled, I hate this thing, in every stack.

stuff that works only according to how php itself is compiled, globals superglobals and turbo-globals everywhere, == is not transitive, comparisons are non-deterministic, ?: is freaking left associative, utility functions that sometimes return -1, sometimes null, sometimes are void, each with different style of usage and naming, lowercase/under_score/camelCase/PascalCase, numbers are 32bit on 32bit cpus and 64bit on 64bit cpus, a ton of silent failing stuff that doesn't warn you, references are actually aliases, nothing has a determined type except references, abuse of mega-global static vars and funcs, you can cast to int in a language where int doesn't even exist, 25236 ways to import/require/include for every different subcase, @ operator, :: parsed to T_PAAMAYIM_NEKUDOTAYIM for no reason in stack traces, you don't know who can throw stuff, fatal errors are sometimes catchable according to nobody knows what, closed-over vars are passed as functions unless you use &, function calls that don't match args signature don't fail, classes are not objects and you can refer to them only by string name, builtin underlying types cannot be wrapped, subclasses can't override parents' private methods, no overload for equality or ordering, -1 is a valid index for array and doesn't fail, funcs are not data nor objects when closures instead are objects, there's no way to distinguish between a random string and a function 'reference', php.ini, documentation with comments and flame wars on the side, becomes case sensitive/insensitive according to the filesystem when line break instead is determined according to php.ini, it's freaking sloooooow...

enough. i'm tired of this crap.

it's almost weekend! 🍻 -

https://wccftech.com/windows-pcs-wi...

this is stupid, Apple didn't kill x86 with PPC, and they'll not do the same with ARM. (ARM is cheaper, but it's not really more powerful yet. You can't do a bunch of one-opcode stuff on ARM yet, it needs to mature further. ARM'd also need massive retooling for cards and such and back-compat is gonna be hell.) -

- Curiosity - always eager to learn how stuff worked

- Money [obviously]

- Future is technology

- minimal interaction with people

- I'm good at it

- call it a guilty pleasure but it gives me a sense of being better than people around me [don't take it seriously]

Personally, I am surrounded by people who are deeply religious. Growing up, I saw my family, relatives and whole nation neck-deep in religion and politics. No one was interested in asking questions or seeing things differently.

When I was 15 I got an internet connection and started consuming as much information as I could. Understood things with physics, got to know a bit about the universe, which gave me perspective on existence and stuff.

It was not too long before my curiosity took me to learning about CPUs and their components.

Well, from there it was a steep 90° slope and I'm still diving down, I just simply can't stop myself. -

The 1080 Ti is rated at a little over 11 teraflops. GPUs with over 1 teraflop of compute performance were released in the late 2000s.

It's 2017 and we are stuck with fancy gen xxx cpus.

I smell a huge compute performance wastage. -

If I have several nodejs apps and JS being single threaded, how do I know that when I start all of them, they aren't running on a single cpu?

I'm not sure if I'm understanding it correctly, but say I start 1 app: does that mean all the app's work will be done on whichever CPU it was started on? Or can it spawn additional threads on other CPUs to do (non-JS) work? -

I hate how I have battery issues with every smartphone/tablet I buy. They do well for 1 week and then I have to buy an additional charger for work because after 5 hours of only lying there it only has 50%, which wouldn't be sufficient for a 30-minute car drive (Maps, Spotify, Bluetooth, GPS and mobile data)... Fml. I am tired of batteries. My next phone is going to be a Huawei Mate 10. Maybe I'll have more luck with this one. I don't believe in Samsung anymore.

And anyway, why the fuck do they introduce better CPUs, more sensors etc. whilst keeping the battery capacity the same.. Instead they introduce fast charge etc. Another reason for me to go away from Samsung is the fact they bloat each firmware up; my battery got worse after each system update (even the security ones) and doing 14 factory resets didn't help either. Support is shit. They also integrated Clean Master into the system and an "Antivirus Protection"... Can't get worse.

samsungrant@devrant.com # > submit && exit