Join devRant

Do all the things like

++ or -- rants, post your own rants, comment on others' rants and build your customized dev avatar

Sign Up

Pipeless API

From the creators of devRant, Pipeless lets you power real-time personalized recommendations and activity feeds using a simple API

Learn More

Related Rants

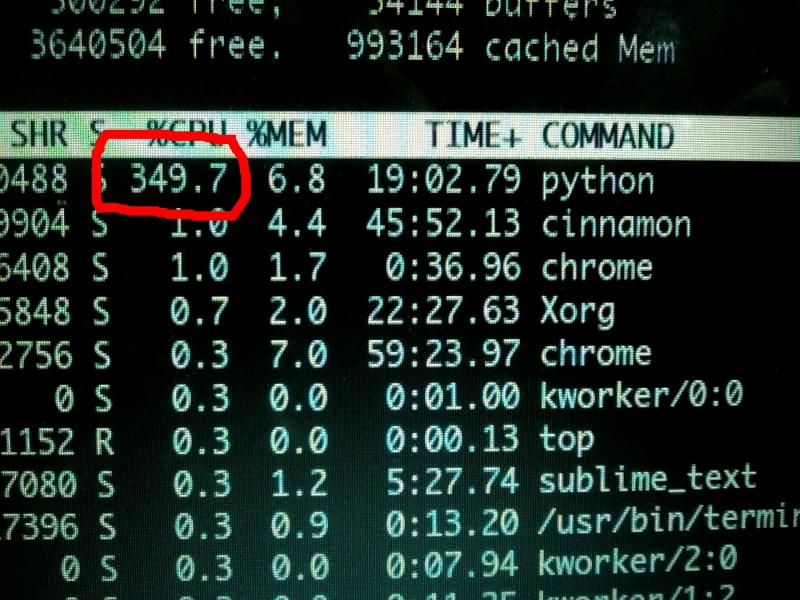

Machine Learning messed up!

Machine Learning messed up! When your CPU is motivated and gives more than his 100%

When your CPU is motivated and gives more than his 100% What is machine learning?

What is machine learning?

Everyone I tell this to, thinks it’s cutting edge, but I see it as a stitched together mess. Regardless:

A micro-service based application that stages machine learning tasks, and is meant to be deployed on 4+ machines. Running with two message queues at its heart and several workers, each worker configured to run optimally for either heavy cpu or gpu tasks.

The technology stack includes rabbitmq, Redis, Postgres, tensorflow, torch and the services are written in nodejs, lua and python. All packaged as a Kubernetes application.

Worked on this for 9 months now. I was the only constant on the project, and the architecture design has been basically re-engineered by myself. Since the last guy underestimated the ask.

rant

machine learning

wk215

distributed systems