Comments

-

cool! I like your persistence.

And maybe in the next 3 years your project will be a serious alternative to Node.

Once you're ready for primetime, I would suggest you invest in some marketing, like a beautiful website with branding and copy that sells your advantages over, for example, Node. And I think you should target big companies, because the average webdev will be happy with Node. But BigCorps who serve millions of visitors and transactions per second will be happy for every millisecond they can save doing so. Especially banks and insurance companies. -

@heyheni The only problem is that I'm the only maintainer currently, and I've been neglecting the project for extended periods of time because of real-life circumstances. I'd love to find a source of funding and work on it 24/7, but that's another challenge on its own.

I think it'd make a great tool for corporations though. -

I wonder if you might receive a cease and desist letter from Sonatype for using the name Nexus. If you haven't already done so, I'd recommend making sure that you can use that name.

-

@Franboo I didn't state this above because I hit the character limit, but I received a lot of hate mail because of this exact reason.

When I announced that I had no plans to implement the CommonJS `require()` module loading system in favour of ES6 modules, people were very upset.

CommonJS is used with Node to this day, and is the most popular choice for JS library authors. I think 90% of the libraries published on NPM are written using CJS.

I have many articles (and a real-life benchmark/test) explaining this project listed here though: http://www.nexusjs.com/about/

I hope I can raise awareness about it somehow. I'm just not sure how to promote it, and getting contributors interested is a difficult proposition when the code itself is in C++ and does what is considered evil black magic by JS devs on a regular basis. :) -

@ethernetzero The official name is "Nexus.js", and theirs is "Nexus Repository Manager". I don't see a reason for that to ever happen.

Also, this project has been around since 2015. I think it would have already happened if that were the case. -

sSam: In my opinion the biggest problem is that writing "hacky", multi-threaded JavaScript will not be the same as writing the JavaScript they know, love or hate. So what's the point of working around the language when you can use a different language that was designed for multi-threading, like Java or C++? -

@sSam What's the point of improving anything? :)

A JavaScript programmer wants a garbage-collected language. They don't want to deal with manual memory allocation or null pointers. This is why they reject multithreading. Because it will introduce complexity on the same level.

But many languages are both multithreaded and garbage-collected. Java, C#, D, and Go are good examples.

My thoughts at the time were "Why the hell not JavaScript too? If we're having a JS revolution, let's go all the way." -

balte: You mean that the code I've already written and the future code I will be writing could get a huge performance boost, plus no more bullshit like losing a UDP connection because more than 4 libuv pools are used? I like it.

We recently had to implement a child_process because a UDP connection required ACKs to be sent, and there is no way to guarantee that in Node.js. So now we have all this stupid code wasting cycles on parsing IPC messages, and the only thing we MIGHT get is the worker thread API, which actually makes it more complicated for maintainers. -

@balte I bet you're going to love this benchmark then: https://dev.to/voodooattack/...

This old i7-4770 CPU handled ~1000 requests per second and probably could handle even more. The only limitation was my OS running out of file handles for sockets (as I later discovered).

Here are the connection times for the 1k req/s benchmark: Connection time [ms]: min 0.5 avg 337.9 max 7191.8 median 79.5 stddev 848.1

So yeah, I think it could handle the situation you described just fine. It will scale automatically and never choke as long as you give it more cores to work with.

Also, UDP is already fully implemented. Here's an example of a UDP client (can be turned into a server quite easily): https://github.com/voodooattack/...

Note: The IO API is very different from node, because it's modelled after the boost::iostreams library and uses boost::asio internally for asynchronous streaming. -

balte: @voodooattack I think I'm going to try and take some time to port our UDP code over (should be a small task once I revert the child_process code) and run some benchmarks, actually! On the surface this all sounds really good, and I'm hoping it will catch on some time soon! -

@balte Please CC me with your results. I'm extremely interested in the outcome!

I can answer any questions you may have regarding usage. Just drop me an email (my username here will work with gmail or hotmail), or you can submit an issue on GitHub. :) -

@voodooattack You could add a little top bar on your website: "searching for sponsors".

And you could write to some companies and foundations to sponsor you. I mean, you live in Egypt, and the average top income is something like 25,000 dollars per year, correct? 25k is surely easy to obtain with nexusjs, and you could work on this full time for a year. What do you think? -

@heyheni My income is entirely from online work, so it's not within the typical Egyptian range. (which is something I'm thankful for)

The biggest issue is that I'm not feeling particularly safe living here, and what was eating most of my free time was either online work or online job interviews. I really want to move out of Egypt as soon as possible.

Luckily, I'm hopefully moving to Germany soon. I was just offered a development position in a good company there.

I like your top-bar idea though. I'll look into it while adding HTTPS to the site, which is something I completely forgot about for almost a year.

Thanks for the idea! -

This is really interesting, and I really don't understand how anyone can be against the concept of getting better performance!

Really hoping you stick to this (well, it already seems you are pretty committed :D). Also really interested in what benchmarks @balte is going to come up with. -

@KasperNS I’m very interested in the benchmark too, albeit a bit nervous as well. It’s not 100% stable as it stands, so I’m expecting bugs.

Hopefully this can show us something new at least. :) -

@voodooattack well of course there's going to be bugs. It'll just be great to see a proof of concept, and having a test done by someone else, will be a great proof!

I'm really into the idea of this project, so I'm rooting for it to work :)


Okay, story time.

Back during 2016, I decided to do a little experiment to test the viability of multithreading in a JavaScript server stack, and I'm not talking about the Node.js way of queuing I/O on background threads, or about WebWorkers that box and convert your arguments to JSON and back during a simple call across two JS contexts.

I'm talking about JavaScript code running concurrently on all cores. I'm talking about replacing the god-awful single-threaded event loop of ECMAScript – the biggest bottleneck in software history – with an honest-to-god, lock-free thread-pool scheduler that executes JS code in parallel, on all cores.

I'm talking about concurrent access to shared mutable state – a big, rightfully-hated mess when done badly – in JavaScript.

This rant is about the many mistakes I made at the time, and specifically about the biggest (though not the first) of them: publishing some preliminary results very early on.

Every time I showed my work to a JavaScript developer, I'd get negative feedback. Like, unjustified hatred and immediate denial, or outright rejection of the entire concept. Some were even adamantly trying to discourage me from this project.

So I posted a sarcastic question to the Software Engineering Stack Exchange, which was originally worded differently to reflect my frustration, but was later edited by mods to be more serious.

You can see the responses for yourself here: https://goo.gl/poHKpK

Most of the serious answers were along the lines of "multithreading is hard". The top voted response started with this statement: "1) Multithreading is extremely hard, and unfortunately the way you've presented this idea so far implies you're severely underestimating how hard it is."

While I'll admit that my presentation was initially lacking, I later made an entire page to explain the synchronisation mechanism in place, and you can read more about it here, if you're interested:

http://nexusjs.com/architecture/

But what really shocked me was that I had never understood the mindset that all the naysayers adopted until I read that response.

Because the bottom-line of that entire response is an argument: an argument against change.

The average JavaScript developer doesn't want a multithreaded server platform for JavaScript because it means a change of the status quo.

And this is exactly why I started this project. I wanted a highly performant JavaScript platform for servers that's more suitable for real-time applications like transcoding, video streaming, and machine learning.

Nexus does not and will not hold your hand. It will not repeat Node's mistakes and give you nice ways to shoot yourself in the foot later, like `process.on('uncaughtException', ...)` for a catch-all global error handling solution.

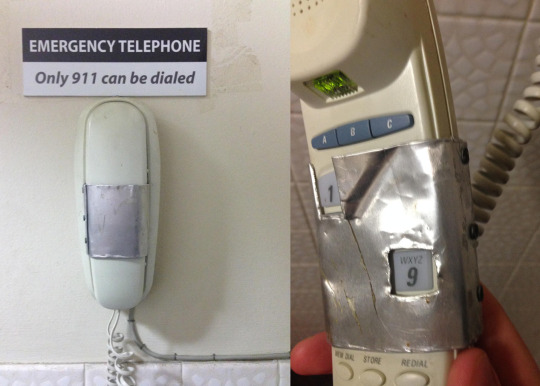

No, an uncaught exception will be dealt with like any other self-respecting language: by not ignoring the problem and pretending it doesn't exist. If you write bad code, your program will crash, and you can't rectify a bug in your code by ignoring its presence entirely and using duct tape to scrape something together.
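To make the contrast concrete, here is a minimal sketch of handling an error where it can actually be fixed, instead of swallowing everything in a global handler. It is plain JavaScript, not Nexus API; `parsePort` and `loadPort` are hypothetical names made up for this illustration.

```javascript
// The catch-all pattern criticised above would look like this (left commented out):
// process.on('uncaughtException', (err) => console.error('ignored:', err));

// Hypothetical helper: validates its input and throws on bad data.
function parsePort(value) {
  const port = Number(value);
  if (!Number.isInteger(port) || port < 1 || port > 65535) {
    throw new RangeError(`invalid port: ${value}`);
  }
  return port;
}

// Handle only the error we know how to recover from, right where it happens.
// Anything else is rethrown, so a real bug still crashes the program.
function loadPort(value, fallback) {
  try {
    return parsePort(value);
  } catch (err) {
    if (err instanceof RangeError) {
      return fallback;
    }
    throw err;
  }
}
```

With this shape, `loadPort("abc", 3000)` recovers with the fallback, while an unexpected error type still propagates.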

Back on the topic of multithreading, though. Multithreading is known to be hard, that's true. But how do you deal with a difficult solution? You simplify it and break it down, not just disregard it completely; because multithreading has its great advantages, too.

Like, how about we talk performance?

How about distributed algorithms that don't waste 40% of their computing power on agent communication and pointless overhead (like the serialisation/deserialisation of messages across the execution boundary for every single call)?
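A rough sketch of what that overhead looks like in code. JSON is only a stand-in here for whatever serialisation an isolated worker boundary uses, the message fields are invented for the example, and nothing below measures the 40% figure.

```javascript
// Hypothetical message; the field names are illustrative only.
const message = { id: 7, samples: [1.5, 2.5, 3.5] };

// Crossing an isolated execution boundary: the payload is serialised on one
// side and rebuilt on the other, so the receiver works on a separate copy.
function sendAcrossBoundary(payload) {
  const wire = JSON.stringify(payload);
  return JSON.parse(wire);
}

// Sharing one address space: the receiver gets the very same object.
function shareInProcess(payload) {
  return payload;
}

const copied = sendAcrossBoundary(message);
const shared = shareInProcess(message);
```

The copy costs time and memory proportional to the payload on every call; the shared reference costs nothing, but puts the burden of safe access on the code that uses it.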

How about vertical scaling without forking the entire address space (and thus multiplying your application's memory consumption by the number of cores you wish to use)?

How about utilising logical CPUs to the fullest extent, and allowing them to execute JavaScript? Something that isn't even possible with the current model implemented by Node?

Some will say that the performance gains aren't worth the risk. That the possibility of race conditions and deadlocks isn't worth it.
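For what it's worth, that kind of lost update can already be reproduced in ordinary single-threaded JavaScript whenever a read and a write to shared state are separated by an `await`. The sketch below uses hypothetical names and runs on any standard engine; it says nothing about how Nexus schedules work.

```javascript
let counter = 0; // shared mutable state

// Read, yield, then write back a possibly stale value.
async function unsafeIncrement() {
  const seen = counter;     // read
  await Promise.resolve();  // other tasks may run here
  counter = seen + 1;       // write based on the old read
}

// Both tasks read 0 before either writes, so one update is lost.
async function lostUpdateDemo() {
  counter = 0;
  await Promise.all([unsafeIncrement(), unsafeIncrement()]);
  return counter;
}

// No shared state: each task returns its contribution, combined at the end.
async function safeDemo() {
  const parts = await Promise.all([Promise.resolve(1), Promise.resolve(1)]);
  return parts.reduce((a, b) => a + b, 0);
}
```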

That's the point of cooperative multithreading. It is a way to smartly work around these issues.

If you use promises, they will execute in parallel, to the best of the scheduler's abilities, and if you chain them then they will run consecutively as planned according to their dependency graph.

If your code doesn't access global variables or shared closure variables, or your promises only deal with their provided inputs without side-effects, then no contention will *ever* occur.

If you only read and never modify globals, no contention will ever occur.
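The three rules above can be sketched with standard promises. On Node or in a browser this still runs on a single thread; whether the independent tasks actually run in parallel depends on the scheduler, as the post describes for Nexus. The function names are hypothetical.

```javascript
// Pure task: depends only on its input and touches no globals or closures.
function sumOfSquares(numbers) {
  return numbers.reduce((acc, n) => acc + n * n, 0);
}

// Independent promises: there is no data dependency between them, so a
// parallel scheduler is free to run them at the same time.
function independent(chunks) {
  return Promise.all(
    chunks.map((chunk) => Promise.resolve(chunk).then(sumOfSquares))
  );
}

// Chained promises: each step consumes the previous result, so the steps
// run one after another no matter how many cores are available.
function chained(chunks) {
  return independent(chunks)
    .then((partials) => partials.reduce((a, b) => a + b, 0))
    .then((total) => Math.sqrt(total));
}
```

Because `sumOfSquares` only reads its argument, the order in which the chunks are processed cannot change the result.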

Are you seeing the same trend I'm seeing?

Good JavaScript programming practices miraculously coincide with the best practices of thread-safety.

When someone says we shouldn't use multithreading because it's hard, do you know what I like to say to that?

"To multithread, you need a pair."

rant

js

community

multithreading