Join devRant

Do all the things like

++ or -- rants, post your own rants, comment on others' rants and build your customized dev avatar

Sign Up

Pipeless API

From the creators of devRant, Pipeless lets you power real-time personalized recommendations and activity feeds using a simple API

Learn More

Related Rants

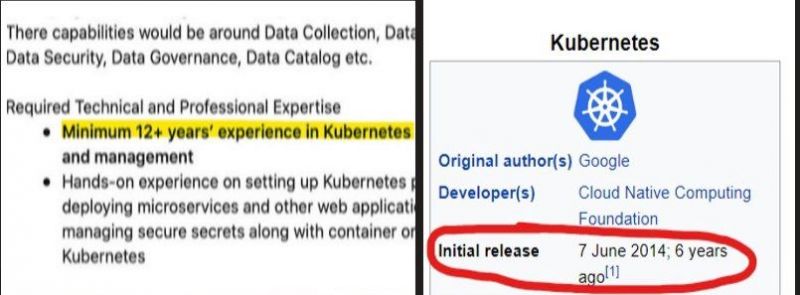

Y’all fcking kidding me right.

Y’all fcking kidding me right. Kubernetes for Beginners

Kubernetes for Beginners

## Learning k8s

Interesting. So sometimes the k8s network goes down. Apparently it's a known pitfall that has been reported to the vendor but not fixed yet. If the networking service is restarted on any of the nodes (e.g. you connect to a VPN, plug in a USB Wi-Fi dongle, etc.), you lose the flannel.1 interface. As a result you will NOT be able to use kube-dns (because it's unreachable), nor will you be able to reach ClusterIPs on other nodes. Deleting the flannel pod and letting it restart on the control plane brings networking back.
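A minimal sketch of that workaround, assuming the stock flannel DaemonSet (namespace `kube-flannel`, pod label `app=flannel`; older manifests used `kube-system`) and a host where `kubectl` can reach the cluster. It checks whether `flannel.1` still exists under `/sys/class/net` and, if it doesn't, deletes the flannel pod on the node you name so the DaemonSet recreates it. `iface_present`, `restart_flannel_on_node`, `FLANNEL_NS` and `SYSFS_NET` are illustrative names, not part of flannel.

```bash
#!/bin/bash
# Sketch: bounce flannel when its VXLAN interface has vanished from this host.
# ASSUMPTIONS (not from the post): flannel runs as a DaemonSet in the
# "kube-flannel" namespace with pod label app=flannel (older manifests used
# kube-system), and kubectl on this host can reach the cluster.

FLANNEL_NS="${FLANNEL_NS:-kube-flannel}"
SYSFS_NET="${SYSFS_NET:-/sys/class/net}"

# Succeeds if the named network interface exists on this host.
iface_present() {
    [ -e "${SYSFS_NET}/$1" ]
}

# Deletes the flannel pod scheduled on the given node; the DaemonSet
# controller then creates a fresh pod, which sets flannel.1 up again.
restart_flannel_on_node() {
    kubectl -n "${FLANNEL_NS}" delete pod -l app=flannel \
        --field-selector "spec.nodeName=$1"
}

# Only act when given a node name; without arguments the file just
# defines the helpers above (handy for sourcing).
if [ "$#" -ge 1 ]; then
    if iface_present flannel.1; then
        echo "flannel.1 present, nothing to do"
    else
        echo "flannel.1 missing, restarting flannel pod on $1"
        restart_flannel_on_node "$1"
    fi
fi
```

Run it on the node that lost the interface with a node name as the only argument (e.g. `./flannel-check.sh <node-name>`, a hypothetical file name). Since restarting flannel on the control plane is what brought things back here, the control-plane node's name is a reasonable first choice.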

And yet another note: if you're building a k8s cluster at home and plan to control it from your lappy, DO NOT set up the control plane on your lappy :) Once you're away from home, you'll have a hard time connecting back to your cluster.

A Raspberry Pi is perfectly enough for a control plane. And when you're away with your lappy, ssh'ing home and setting up a few iptables DNATs will do the trick:

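`netikras@netikras-xps:~/skriptai/bin$ cat fw_kubeadm`

```bash
#!/bin/bash

FW_LOCAL_IP=127.0.0.15      # loopback address the tunnel listens on (Linux routes all of 127/8 to lo)
FW_PORT=6443                # kube-apiserver port
FW_PORT_INTERMED=16443      # port used on the home host between the two SSH hops
MASTER_IP=192.168.1.15      # control plane (Raspberry Pi) on the home LAN
MASTER_USER=pi

# Send all outgoing TCP for the control plane's LAN IP to the local tunnel instead.
# The port is kept, so a connection to ${MASTER_IP}:6443 lands on ${FW_LOCAL_IP}:6443.
FW_RULE=(OUTPUT -d "${MASTER_IP}" -p tcp -j DNAT --to-destination "${FW_LOCAL_IP}")
sudo iptables -t nat -A "${FW_RULE[@]}" || exit 1
# Remove the NAT rule whenever the script exits, even if ssh fails.
trap 'sudo iptables -t nat -D "${FW_RULE[@]}"' EXIT

# Hop 1 (laptop -> home, sshd on port 4522): laptop's 127.0.0.15:6443 -> home's 127.0.0.15:16443.
# Hop 2 (home -> Pi): home's 127.0.0.15:16443 -> the Pi's 127.0.0.15:6443.
# -tt forces TTY allocation on each hop so the final remote shell is interactive.
ssh home -p 4522 -l netikras -tt \
    -L "${FW_LOCAL_IP}:${FW_PORT}:${FW_LOCAL_IP}:${FW_PORT_INTERMED}" \
    ssh "${MASTER_IP}" -l "${MASTER_USER}" -tt \
    -L "${FW_LOCAL_IP}:${FW_PORT_INTERMED}:${FW_LOCAL_IP}:${FW_PORT}" \
    /bin/bash
# Alternative remote command instead of /bin/bash:
# 'echo "Tunnel is open. Disconnect from this SSH session to close the tunnel and remove NAT rules" ; bash'
```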
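To confirm the tunnel is actually up, you can open a TCP connection to the control plane's LAN address from a second terminal while `fw_kubeadm` is running: the DNAT rule should push it through both SSH hops. A sketch using bash's `/dev/tcp` pseudo-device and coreutils `timeout`; `MASTER_IP` and port 6443 mirror the script, while `tcp_port_open` and `API_PORT` are hypothetical names.

```bash
#!/bin/bash
# Sketch: check that something answers on the control plane's API port.
# Connecting to MASTER_IP:API_PORT exercises both the DNAT rule and the SSH hops.
# Relies on bash's /dev/tcp redirection and coreutils' timeout.

MASTER_IP="${MASTER_IP:-192.168.1.15}"
API_PORT="${API_PORT:-6443}"

# Succeeds if a TCP connection to host $1, port $2 opens within 3 seconds.
tcp_port_open() {
    timeout 3 bash -c 'exec 3<>"/dev/tcp/$1/$2"' _ "$1" "$2" 2>/dev/null
}

# Only probe when called with "check"; otherwise just define the helper.
if [ "${1:-}" = "check" ]; then
    if tcp_port_open "${MASTER_IP}" "${API_PORT}"; then
        echo "API server port reachable; try: kubectl get nodes"
    else
        echo "Nothing answers on ${MASTER_IP}:${API_PORT}; is fw_kubeadm running?" >&2
        exit 1
    fi
fi
```

A raw TCP probe keeps the check independent of kubeconfig and credentials, so a failure points at the DNAT rule or the tunnel rather than at authentication.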

And ofc copy the control plane's `~/.kube` to your lappy :)
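A sketch of that copy step, plus a sanity check that the copied kubeconfig points at the control plane's LAN IP, which is the address the DNAT rule in `fw_kubeadm` rewrites. Assumptions: it copies only `~/.kube/config` (the file kubectl reads by default), it is run once while you are on the home LAN (with the DNAT rule active, scp to `MASTER_IP` would be redirected too), and `kubeconfig_server` and `targets_master` are hypothetical helper names.

```bash
#!/bin/bash
# Sketch: copy the control plane's kubeconfig and check that it targets the
# LAN IP that fw_kubeadm's DNAT rule rewrites.
# ASSUMPTION: the kubeconfig lives at ~/.kube/config on the control plane.
# Run "fetch" while on the home LAN, NOT while fw_kubeadm's DNAT rule is active.

MASTER_IP="${MASTER_IP:-192.168.1.15}"
MASTER_USER="${MASTER_USER:-pi}"

# Prints the first "server:" URL found in a kubeconfig file.
kubeconfig_server() {
    awk '$1 == "server:" { print $2; exit }' "$1"
}

# Succeeds if the URL points at MASTER_IP (any port).
targets_master() {
    case "$1" in
        "https://${MASTER_IP}:"*) return 0 ;;
        *) return 1 ;;
    esac
}

if [ "${1:-}" = "fetch" ]; then
    mkdir -p ~/.kube
    # Overwrites any existing local ~/.kube/config.
    scp "${MASTER_USER}@${MASTER_IP}:.kube/config" ~/.kube/config || exit 1
    server="$(kubeconfig_server ~/.kube/config)"
    if targets_master "${server}"; then
        echo "kubeconfig uses ${server}; while fw_kubeadm runs, DNAT sends it through the tunnel"
    else
        echo "kubeconfig uses ${server}, not ${MASTER_IP}; the DNAT rule won't catch it" >&2
    fi
fi
```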
