
Comments

-

@asgs @tosensei @sidtheitguy I'm referring to system designs where the cache is an INTEGRAL part. Meaning, if I evict the cache, all sessions are dropped and part of the business data is lost. Meaning, if I'm having cache connectivity issues, I'm having service outages. Meaning, if I use an in-memory local cache and cap its size, the auto-evicted entries will destroy sessions, carts, orders and crucial enums w/o any way to recover them from anywhere else [e.g. the DB].

A cache is meant to be a helper component that speeds up data access. NOT another database.

Yes, Redis can be used as a DB. But then use it as a persistence layer, not as a transparent cache.
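The setup argued for here is usually called cache-aside: the database stays the source of truth and the cache only holds copies that can be rebuilt. Below is a minimal Python sketch under that assumption; `LRUCache`, `cache_aside_get` and the in-memory `db` dict are hypothetical stand-ins, not any particular library's API. Because every evicted entry can be reloaded, capping the cache size costs extra database reads rather than lost carts or sessions.

```python
from collections import OrderedDict
from typing import Any, Callable, Hashable

_MISS = object()  # sentinel so that a cached None is not mistaken for a miss


class LRUCache:
    """Bounded in-memory cache: the least recently used entry is evicted when full."""

    def __init__(self, capacity: int) -> None:
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        self.capacity = capacity
        self._data: "OrderedDict[Hashable, Any]" = OrderedDict()

    def get(self, key: Hashable) -> Any:
        if key not in self._data:
            return _MISS
        self._data.move_to_end(key)  # mark as most recently used
        return self._data[key]

    def put(self, key: Hashable, value: Any) -> None:
        self._data[key] = value
        self._data.move_to_end(key)
        if len(self._data) > self.capacity:
            self._data.popitem(last=False)  # drop the least recently used entry

    def __contains__(self, key: Hashable) -> bool:
        return key in self._data


def cache_aside_get(cache: LRUCache, key: Hashable,
                    load_from_db: Callable[[Hashable], Any]) -> Any:
    """Read through the cache; on a miss, reload from the source of truth."""
    value = cache.get(key)
    if value is _MISS:
        value = load_from_db(key)  # the database, not the cache, owns the data
        cache.put(key, value)
    return value


if __name__ == "__main__":
    db = {"cart:1": ["book"], "cart:2": ["pen"], "cart:3": ["mug"]}
    db_reads = []

    def load(key):
        db_reads.append(key)
        return db[key]

    cache = LRUCache(capacity=2)
    for key in ["cart:1", "cart:2", "cart:3", "cart:1"]:
        print(key, cache_aside_get(cache, key, load))
    print("DB reads:", db_reads)
```

With a capacity of 2, `cart:1` is evicted when `cart:3` arrives, and the next read of `cart:1` simply goes back to the database: the cart is slower to fetch, not gone.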

asgs

@netikras Caching is an integral part of the system architecture in terms of lowering latency, but yeah, not as a point of failure when it goes down.


Caching enjoys the unique privilege of speeding up the system while remaining a non-critical component of it. If it fails, the failure should be graceful, costing only some added latency in the workflows that use it.
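A minimal sketch of that graceful degradation, assuming a remote cache client that raises the built-in `ConnectionError` when it is unreachable. `FlakyRemoteCache` and `read_with_fallback` are hypothetical stand-ins; real clients such as redis-py raise their own exception types, so the `except` clauses would need adjusting. A cache failure turns into a slower database read instead of an outage.

```python
import logging
from typing import Any, Callable, Dict, Hashable, Optional

log = logging.getLogger(__name__)


class FlakyRemoteCache:
    """Hypothetical remote cache client; set `up = False` to simulate an outage."""

    def __init__(self) -> None:
        self.store: Dict[Hashable, Any] = {}
        self.up = True

    def get(self, key: Hashable) -> Optional[Any]:
        if not self.up:
            raise ConnectionError("cache unreachable")
        return self.store.get(key)  # None means "not cached"

    def set(self, key: Hashable, value: Any) -> None:
        if not self.up:
            raise ConnectionError("cache unreachable")
        self.store[key] = value


def read_with_fallback(cache: FlakyRemoteCache, key: Hashable,
                       load_from_db: Callable[[Hashable], Any]) -> Any:
    """Serve from the cache when possible; otherwise fall back to the database."""
    try:
        cached = cache.get(key)
        if cached is not None:
            return cached
    except ConnectionError:
        # Cache outage: degrade to the slower path instead of failing the request.
        log.warning("cache unavailable, reading %r from the database", key)
    value = load_from_db(key)
    try:
        cache.set(key, value)  # best effort; losing this write loses no data
    except ConnectionError:
        pass
    return value


if __name__ == "__main__":
    db = {"user:42": {"name": "Ada"}}
    cache = FlakyRemoteCache()
    print(read_with_fallback(cache, "user:42", db.__getitem__))
    cache.up = False  # simulate the cache going down
    print(read_with_fallback(cache, "user:42", db.__getitem__))
```

Both calls return the same record; the second one just pays for a database round trip.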

https://en.m.wikipedia.org/wiki/...

https://stackoverflow.com/questions...

Using redis for persistence is a bad idea.

Though that has less to do with caching.

Caching *implies* non-persistence by default... But many devs utterly ignore that fact.

A cache is never meant to be persistent.

It's a cache. Not a database, nor a storage medium... Nothing persistent.

Volatile, as some people call it.

But yeah... Take the hammer and break some skulls, 'cause reason seldom works with devs when it comes to caches.

... Specifically regarding Redis: https://redis.io/docs/reference/...

Redis documentation is fairly good.

But I feel you... I've had this discussion so often that my eyes rolled inwards reading the rant.

@tosensei

idiots..

non-idiots..

large FLOSS projects (IDK about their idiocy level), e.g. https://www.keycloak.org/

I've seen cache misuse in various contexts. I've seen devs/archs take pride in the witty solutions they've created, effectively turning some in-memory cache (centralized or otherwise, local or remote) into a non-ACID persistence layer. Or use a cache as a mediator between services (MQ and TOPICS are the right tools!!! Not a f'ing cache...)

“Cache” is usually preceded by “corrupted”, and people then make excuses for it as if corrupted caches were just a fact of life, like rainy days. As a “real time” and “function first” sort of fellow, I consider cache a dirty word. Imagine caching your car’s brake pedal. 💥🚙

-

@xcodesucks I'd like that. Then I wouldn't have to move my foot from the gas pedal

-


@IntrusionCM yeah, it has Infinispan support.

It first got my attention when I scaled it up to 2 replicas and started getting random 401s. That didn't look right.

Then a colleague found that all nodes need to sync their local caches and enabled Infinispan (insp). HOWEVER:

- the most important insp caches are uncapped, with no ttl. Say hi to OOMEs

- if items are evicted, sessions are terminated. Meaning, KC is using the cache as its main datasource

- this point above makes cache size tuning quite complicated

- it also means that a KC crash also destroys all sessions [in 1 replica setup]

- KC does not support centralized cache [redis, memcache, etc.] that I could dedicate plenty of resources to

- insp also means I have to calculate pod memory limits very carefully, because of cache replication across all nodes. So I have to consider mem_needed = cache_size x no_of_instances

- the above also means I can NOT scale horizontally at peak traffic - I need a hybrid scale-up

It sucks.
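The sizing rule from the list above can be written out as a small calculation. This is a sketch under stated assumptions: `cache_memory_mib` is a hypothetical helper, `base_mib` is a hypothetical per-pod baseline for everything except the cache, and the example numbers are made up. With `owners=None` every pod holds a full copy (the `mem_needed = cache_size x no_of_instances` case), so per-pod memory does not drop as pods are added. The optional `owners` parameter models Infinispan's distributed mode, where each entry is stored on a fixed number of nodes, assuming entries spread evenly.

```python
from typing import Optional, Tuple


def cache_memory_mib(cache_size_mib: float, instances: int,
                     base_mib: float, owners: Optional[int] = None) -> Tuple[float, float]:
    """Return (per-pod memory, cluster-wide cache memory) in MiB.

    owners=None models full replication: every node holds every entry.
    An integer owners models a distributed cache in which each entry is
    stored on that many nodes (assumes an even spread across nodes).
    """
    if instances < 1:
        raise ValueError("need at least one instance")
    if owners is not None and owners < 1:
        raise ValueError("owners must be at least 1")
    copies = instances if owners is None else min(owners, instances)
    cluster_cache = cache_size_mib * copies       # total cache memory across the cluster
    per_pod = base_mib + cluster_cache / instances  # what each pod's memory limit must cover
    return per_pod, cluster_cache


if __name__ == "__main__":
    for instances, owners in [(2, None), (4, None), (4, 2)]:
        per_pod, total = cache_memory_mib(2048, instances, base_mib=512, owners=owners)
        print(f"instances={instances} owners={owners}: "
              f"per pod {per_pod:.0f} MiB, cluster cache {total:.0f} MiB")
```

With these example numbers, going from 2 to 4 fully replicated pods leaves each pod at 2560 MiB while the cluster-wide cache doubles from 4096 to 8192 MiB, which is why adding pods does not relieve memory pressure when the session cache grows at peak traffic.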

@netikras

I'm a tad drunk.

But if I remember correctly, our Keycloak setup looks like this (and that's why your comment made me curious):

We have multiple Keycloak clusters, e.g. one that is Pandora's box (loosely speaking: admin / elevated stuff), plus other clusters split by sensitivity level (client, dev, API, ...).

Simply to isolate.

By default Keycloak uses an internal Infinispan blob. I'd assume that's what you mean... And yeah, that sucks.

Switch to an external Infinispan cluster.

It takes a bit of time to set up, but as far as I can remember it should solve most of your trouble.

Otherwise, updates especially are a PITA, because restarting Keycloak with the embedded Infinispan cache means losing all sessions.

Infinispan has autodiscovery, so scaling should be solved too.

Plus it makes it easier to tighten the screws.

E.g. Pandora's box has a completely isolated Infinispan setup that very few have access to.

It's literally Pandora's box. Access is reported, and if you touch it out of curiosity, you'll get your head whacked straight.

@IntrusionCM Even better... Turns out KC explicitly disables cache persistence :)

It will only become possible to enable it in future releases.

Related Rants

-

devmonster84

devmonster84 My friend said an intern designed this UI for an internal site.

No. Just... no

My friend said an intern designed this UI for an internal site.

No. Just... no -

Sefie13

Sefie13 Implementing the application exactly like the client specified, because they won't listen.

Implementing the application exactly like the client specified, because they won't listen. -

htlr79

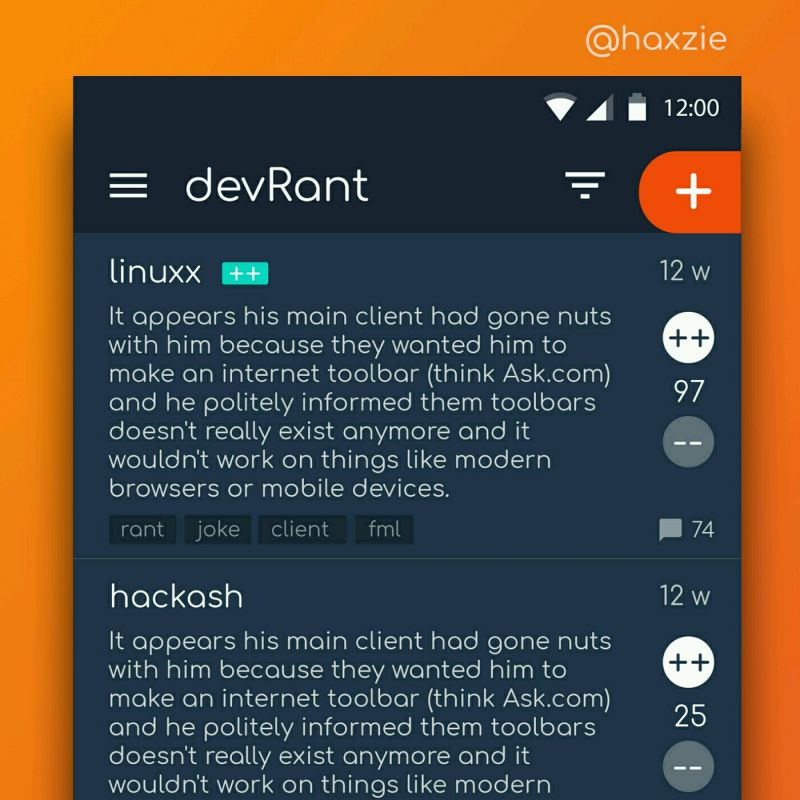

htlr79 Been looking around ways to improve devrant's user experience a little, Idk whether you guys like it or not.. ...

Been looking around ways to improve devrant's user experience a little, Idk whether you guys like it or not.. ...

Please stop using cache as an integral part of the system.

Just... don't...

rant

cache

bad habits

design

architecture