Details

-

Skillsdotnet, webstuff

-

LocationNorway

Joined devRant on 9/24/2017

Join devRant

Do all the things like

++ or -- rants, post your own rants, comment on others' rants and build your customized dev avatar

Sign Up

Pipeless API

From the creators of devRant, Pipeless lets you power real-time personalized recommendations and activity feeds using a simple API

Learn More

-

Just had a code review where I commented that we should use linq's ".Single()" here because we don't expect or tolerate zero or multiple matches in this scenario and their response was "but copilot says -" and I didn't know so few words could make me so irrationally angry.6

-

Setting up some CSS animations and ended up with the value "infinite ease" and now I am having an existential crisis.1

-

Years ago, we were setting up an architecture where we fetch certain data as-is and throw it in CosmosDb. Then we run a daily background job to aggregate and store it as structured data.

The problem is the volume. The calculation step is so intense that it will bring down the host machine, and the insert step will bring down the database in a manner where it takes 30 min or more to become accessible again.

Accommodating for this would need a fundamental change in our setup. Maybe rewriting the queries, data structure, containerizing it for auto scaling, whatever. Back then, this wasn't on the table due to time constraints and, nobody wanted to be the person to open that Pandora's box of turning things upside down when it "basically works".

So the hotfix was to do a 1 second threadsleep for every iteration where needed. It makes the job take upwards of 12 hours where - if the system could endure it - it would normally take a couple minutes.

The solution has grown around this behavior ever since, making it even harder to properly fix now. Whenever there is a new team member there is this ritual of explaining this behavior to them, then discussing solutions until they realize how big of a change it would be, and concluding that it needs to be done, but...

not right now.2 -

When you are blocked by a trivial issue - eg., what's setting this value in the db - that someone can answer in 5 seconds in person, you can figure out on your own in 30 minutes, or you can ask via slack and get an answer after a minute or a day.

During the pandemic I had to take over a project for a guy that was retiring, and my chat log with him is basically me talking to myself, going "nm found it" up to a day after my last message. -

Two years ago we took over this project which has been a nightmare to maintain. It's a set of netcore 2.1 webapps running on an on-prem windows machine. Everyone who has worked on it so far has quit, leading to two episodes of it being passed on with near zero handover.

Its function is fairly simple, so naturally we have been nagging to redo it and cloudlift it.

I was finally given one week to see how far I'd get, and had a poc running in Azure after one day; 4 apps in clean net6, SSO, and managed identities. The only thing lacking was setting up the authentication for third parties.

And... they still don't want "something new" when the old one works. Back to IIS and debugging windows event logs.1 -

We had made an api which had endpoints for each different domain model, so /user, /company, the usual. Beyond being restful they all had basic filtering and pagination.

We also had an endpoint to return an entity from any set based on guid for when you needed to attach the related entity to notifications and logging and such.

We received a bug report on how you couldn't use filtering or pagination on this endpoint, and after weeks of asking what they need it for we just had to implement it.

You can imagine how non-trivial it is to "just" filter across different datasets, but we eventually got it working so now you can get a user via /user/123 or /entity?type=user&id=123. They only use it for one type and id at the time.1 -

My weakest skill is simply organization. I can produce great work in a decent timeframe, just maybe not for the project or task I should be working on, assuming I don't get distracted by that email I was supposed to follow up on yesterday.2

-

The scary thing about burnout is that you usually don't realize you are burning out before it's too late.

Personally, at least, I've worked on projects that just felt a little intense at the time, but after taking a step back due to holidays or hitting some milestone I realize I never want to have anything to do with it ever again. One project makes my stomach drop even today every time I see the code; Not because the code is bad, but how it takes me back to how miserable I was without admitting it to myself.

The biggest red flag I look for is when I'm tempted to work on stuff in my free time. When this starts seeming like a solution there's a serious problem with the project that needs to be addressed.2 -

The only thing I'm getting better at with experience is my ability to say upfront that I'm not able to do the requested task.7

-

My first interaction with a computer was probably playing Parsec on an old TI-99/A we dug out of the attic. After that there were a lot of troubleshooting sessions with my dad on various computers trying to get some game up and running. I still remember the IRQ/DMA combination needed to get sound in Duke.

It really is no mystery why I ended up working with this stuff. -

Triaging this project that's running on IIS makes me feel like my skillset is wasted on such banal garbage - while at the same time hoping nobody realizes what a mistake it was to put me on it because I have no idea how to troubleshoot or fix it.

I try not to be that guy who blames every issue on their tools but I can't believe some of these issues and respective fixes. Our QA was down for 2 months because I hadn't uninstalled a default module which prevents POST requests. -

Just got back from NDC and had a ton of fun. Kinda weird with government restrictions going into effect the last day and having to gtfo of an omicron outbreak.

According to their FAQ "all sessions are recorded and will be made public on our YouTube channel approximately one month after the conference."

https://youtube.com/c/...

https://ndcoslo.com/

Definitely nag your employer about going if it's ever on the table.2 -

New day. New legacy project that needs triage.

The project has existed since before 2000 so it all "works" and has no known business logic bugs. It does however have performance issues which sure I can have a look. It can actually be quite fun and rewarding to optimize performance.

This is a titanic dotnet framework leviathan consisting of over 12,000 cs files using razorpages, entity framework, and... nhibernate? I have my gripes with both EF and NH but they are both fine if used correctly, like any other tool. I've never seen them used together however.

As It Turns Out™, NH was implemented first and at a time when NH did not support async operations. It made sense if you look it up and it's meant to delegate commands via a separate layer, but different story.

Then for... reasons... EF came in and gradually took over.

Because of the way this is all set up, everything will faceplant if you try doing anything async, even if it has nothing to do with calling the db. Any attempt in making this work leads you down a slippery slope of having to rewrite the entire thing, which is out of the question in terms of their budget and expectations.

Sometimes it's a detriment when it works in spite of its issues.1 -

Does it count as a learning experience if I have still not yet fully learned it? Then it's estimating tasks.

Fix this bug where the modal is rendered underneath the message-count-badge on your profile? Heh should be easy, 10 mins to set a higher z-index.

Final cost: two weeks, where I needed two status meetings and drawing on the whiteboard to explain why this happens and why it needs a major restructuring.1 -

A couple of years ago I was working on a fairly large system with a complex (by necessity) access control architecture.

As is usually the case with those projects, it's awkward for developers to repro bugs that have to do with a user's accesses in production when we are not allowed to replicate production data in test, let alone locally.

We had a bug where I ended up making myself a new row in the production database for a thing I could have access to without affecting real data to repro it safely. I identified the bug so I could repro it in dev/test and removed the row and ensured everything worked normally, whew scary.

Have you ever walked into the office one day, and everyone is hunched over in a semicircle around one person's workstation, before one turns around to look at you and says - after a pause - "... ltlian?.."

Turns out I had basically "poisoned the well" with my dummy entity in a way where production now threw 500 for everyone BUT me who had transitive access to this post-non-entity. Due to the scope of the system, it had taken about a day for this to gradually propagate in terms of caching and eventual consistencies; new entities coming in was expected, but not that they disappear.

Luckily I had a decent track record for this to be a one-off. I sometimes think about how I would explain testing in prod and making it faceplant before going home for the day, other than "I assumed it would be fine". I would fire me.3 -

The task: Catch and log this specific error in this one function.

Me: While I'm here, let me just -

git: 12 files changed2 -

Could people kindly stop trying to expand upon the native DI in dotnet!

This is my third project where "you don't just" add new services because you have to carefully conform to hundreds of lines of boilerplate while "remembering to" whatever it demands because someone spent weeks hacking the builtin functionality in order to make it easier and shorten the startup file.

I'm trying to swap out one of the implementations that are used by one other class via DI and so far I've changed 12 files. It's literally more work to do the thing DI is designed to solve compared to not using DI because they "improved" upon it.

Sure, it might be that I'm not using your thing correctly, but that's not much better, is it. Everyone already knows how to use dotnet's DI. Literally noone knows how to use your improved version aside from yourself.

I liked how one of the team members put it after one of the former devs apologetically explained how this was some long-gone dev's baby: The only thing this code does for us is that it needs a diaper change every time we deal with it.2 -

You're kidding. You know how React, GraphQl, and Jest are made by facebook. You would think that Jest then would be framework of choice for mocking gql queries and responses for a React app. And you would be wrong. You "can, but-", depending on your implementation - ours being based on official sources - not without contorting and duplicating everything related to the query implementation at which you are barely even testing the app itself. We're using named imports from .gql files, for those familiar.

Don't you hate it when it turns out the guy going "nah tests were too hard, we didn't bother" was right.3 -

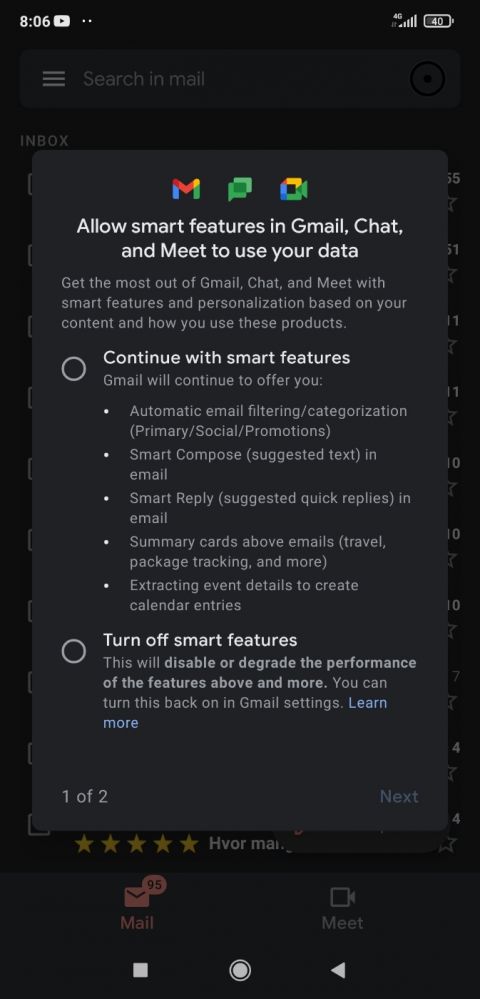

Forced choice between two options which both seemingly have irreversible and potentially destructive consequences. Tapping back or outside the modal doesn't dismiss it. No 'Read more' type link for the first option.

Laws and regulations against dark pattern design when?

edit: okay the readmore link is passable but I still want to be grumpy about it. 4

4 -

Ever have to put your work on hold due to being called into a 2 hour meeting to discuss how important it is that we need this thing finished asap.1

-

Ever have an issue you easily know how to fix while still having exactly 0 patience for it on a monday morning

3

3 -

One user could report that the data they saw didn't make sense. Turns out there was a one-off hardcoded caching detail for one of our services that cached based on a search query (yes, the entire query was the key) and before any auth checks. The system would return the results owned by whoever asked first, no matter who asked after that point.

There's "Oh dear but we all make mistakes" and there's surrender cobra. This is what PRs are for.1 -

One of the biggest reality checks you will run into when starting your first dev related job - and which they don't teach you about in school - is that a lot of the time will be spent working with other people's code, and rewriting it into "your own" is rarely an option.

You might be super into making things, but not everyone manages to maintain that same spark while taking over a 15 year old project with fundamental issues that have to be triaged "for now" because you need a hotfix on this other specific thing out in prod before lunch.

There are no gods now. They left the company years ago and nobody knows why they used the windows registry as a user repo.3 -

The bug: Some string values for an identifier property in the data objects are being sent from our frontend prefixed with a '0'. Sometimes. When it happens, it usually gets stripped away again by the time it's passed to our backend. But not always.

This 0 is never explicitly set anywhere. I even searched for a few variants of " = 0" in both the frontend and backend projects without receiving any results. You might already be suspecting where this is going.

So it turns out.

The data object which holds this value is being initialized in the aspnet (don't ask) backend and passed to the frontend, which then hydrates it. This value is always an integer number, albeit incidentally so which is why string is used as the actual type. When this object is initialized, it's hardcoded with an anonymous type where this property is set as int because I guess someone figured "it's always an int though". Being a typed language, primitive scalars can't be null objects which means the property's value becomes the concrete int 0.

Okay weird. I can think of better ways of doing this but let's just set it to string as I can't start overhauling things right now. Let's just go find where this value is somehow concatenated into the incoming parameter.

You see, this happens because at the point where the frontend sets this value, it may be an int or string depending on where it came from, and I guess someone figured that in order to cast it to string you just go prop += arg seeing as the prop is empty string and all. Because explicitly casting it or - as much as I get a rash whenever I see it - going prop = "" + arg would be too verbose and unoriginal.

Bonus round: How come the 0 only sometimes made it all the way to our backend? The thing is that this bug has been fixed before. The fix is that because this string is "always" an int, you can parse it to int before passing it to the backend in case it has leading zeroes. This path is only taken in certain views because someone forgot to copypaste their fix into all the places this is repeated.

Sometimes you find a bug and you are just somehow more grumpy after fixing it.1 -

My biggest influence on coding style is working with other people's code. I know the temptation to write "clever" code and I've been (and probably still occasionally am) guilty of it myself, but it's not until you have to debug someones oneliner iterator which has !(i-j) as the stop condition that you start to appreciate dumb, boring, obvious code.

If having a series of if checks in a long list makes it readable, keep it that way. If it makes it more readable to rewrite it into a nested switchcase with a couple of ternary bits, go ahead. Just don't spend half a day wrapping it up into two layers of abstraction that will require an onboarding process for the rest of the team.1 -

The Missing Button Paradox: The time it takes for a presenter to find a button on their screen increases based on the amount of participants who can see the button and try to help the presenter find it.