Search - "raytracing"

-

My dream project is to work on a Hollywood-grade raytracing engine (Pixar RenderMan, Disney Hyperion, ...). I have done some hobby-grade ones, like the one that produced the image here.

-

Nvidia is currently running a competition on their Omniverse platform to win a top of the line RTX card

All you need to do is create a visually impressive raytracing tech demo... which requires a powerful RTX card... to win a powerful RTX card

Thanks guys

-

While writing a raytracing engine for my university project (a fairly long and complex program in C++), I hit a subtle bug: under very specific conditions, the ray energy calculation would return 0 or NaN, and the corresponding pixel would be slightly dimmer than it should be.

Now you might think that this is a trifling problem, but when it happened to random pixels across the screen at random times it would manifest as noise, and as you might know, people who render stuff Absolutely. Hate. Noise. It wouldn't do. Not acceptable.

So I worked at that thing for three whole days and finally located the bug, a tiny gotcha-type thing in a numerical routine in one corner of the module that handled multiple importance sampling (basically, mixing different sampling strategies).
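The post doesn't say what the gotcha actually was, so the following is only a sketch of one common way a multiple importance sampling weight can go to 0 or NaN, not the original bug. It is in Python rather than the project's C++, and `power_heuristic` and `mis_contribution` are hypothetical names. The idea: squaring a very small pdf can underflow to zero, and an unguarded `a / (a + b)` then becomes 0/0 (NaN in C++, a `ZeroDivisionError` in Python).

```python
import math


def power_heuristic(pdf_a: float, pdf_b: float) -> float:
    """MIS weight (power heuristic, exponent 2) for a sample drawn from strategy A."""
    a = pdf_a * pdf_a
    b = pdf_b * pdf_b
    denom = a + b
    # Guard: if both squared densities are zero (including by underflow),
    # or the sum is inf/NaN, the plain a / (a + b) is not a usable number.
    # Dropping the sample (weight 0) is one possible policy, assumed here.
    if denom == 0.0 or not math.isfinite(denom):
        return 0.0
    return a / denom


def mis_contribution(f: float, pdf_a: float, pdf_b: float) -> float:
    """Weighted contribution f * w_a / pdf_a of one sample from strategy A."""
    if pdf_a <= 0.0:
        return 0.0  # a sample with zero density should never have been drawn
    return power_heuristic(pdf_a, pdf_b) * f / pdf_a


# 1e-300 squared underflows to 0.0, so without the guard this would be 0/0.
tiny = mis_contribution(1.0, 1e-300, 0.0)
```

With the guard in place, `tiny` is a plain `0.0` instead of a NaN that would later show up as a noisy pixel.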

Frustrating, exhausting, and easily the most gruelling bug hunt I've ever done. Utterly worth it when I fixed it. And what's even better, I found and squashed two other bugs I hadn't even noticed, lol

-

New idea: Fuck raytracing for global illumination because you just need too many rays for it to converge

What if we do surfels (to keep the number of probes down and relevant to our scene) and we update the 4x4-ish sized hemisphere irradiance maps not by tracing a single ray per frame per surfel. I have a fast as shit compute shader rasterizer... What if I just raster each surfel each frame? Should be around the same number of pixels as the primary visibility so totally feasible....

Each frame just jitter the projection a bit and voila. Should have extremely high quality diffuse global illumination at well below 1 ms. Holy shit this might just work

-

can we just get rid of floating points? or at least make it quite clear that they are almost certainly not to be used.

yes, they have some interesting properties that make them good for special tasks like raytracing and very special forms of math. but for most stuff, storing values in much smaller increments and dividing at the end works for almost all tasks (i.e. don't store money as 23.45, store it as 2,345. the math is the same. implement display logic when showing it.)
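a minimal sketch of the "store 2,345 instead of 23.45" idea, assuming two-decimal currency. `to_cents` and `format_cents` are made-up helper names, and extra digits past the cent are simply truncated here, which a real system would want to handle more carefully:

```python
def to_cents(amount: str) -> int:
    """Parse a decimal string like '23.45' into integer cents (2345)."""
    sign = -1 if amount.startswith("-") else 1
    whole, _, frac = amount.lstrip("+-").partition(".")
    frac = (frac + "00")[:2]  # pad to two digits, truncate anything beyond
    return sign * (int(whole or "0") * 100 + int(frac))


def format_cents(cents: int) -> str:
    """Display logic: only here does the decimal point come back."""
    sign = "-" if cents < 0 else ""
    whole, frac = divmod(abs(cents), 100)
    return f"{sign}{whole}.{frac:02d}"


price = to_cents("23.45")     # 2345
total = price * 3             # exact integer math: 7035
label = format_cents(total)   # "70.35"
```

all the arithmetic happens on integers, so nothing can drift. the float only ever exists as text at the edges.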

floating point math is broken! and most people who really, truly, actually need it can explain why, which bits do what, and how to avoid rounding errors or why they are not significant to their task.

or better yet can we design a standard complex number system to handle repeating divisions and then it won't be an issue?

footnote: (I may not be perfectly accurate here. please correct if you know more)

much like 1/3 (0.3333333...) in base 10 repeats forever, that happens with 0.1 in base 2 because of how floats store things.
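a quick way to see this for yourself, using Python's standard `decimal` and `fractions` modules (Python floats are IEEE 754 doubles). `Fraction` is also roughly what an exact number type for repeating divisions looks like, assuming rational numbers are what's wanted:

```python
from decimal import Decimal
from fractions import Fraction

# Decimal(float) shows the exact value of the double nearest to 0.1.
exact_tenth = Decimal(0.1)
print(exact_tenth)
# 0.1000000000000000055511151231257827021181583404541015625

float_sum = 0.1 + 0.2
print(repr(float_sum))    # 0.30000000000000004
print(float_sum == 0.3)   # False

# Rationals keep numerator and denominator, so nothing repeats or rounds.
exact_sum = Fraction(1, 10) + Fraction(2, 10)
print(exact_sum == Fraction(3, 10))  # True
```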

this, among other reasons, is why 0.1+0.2 returns 0.30000000000000004 in double precision

-

So my homegrown raytracing engine somehow managed to render this.

(It's supposed to be a material approximating oldish gold: a texture, a diffuse material model and a specular material model)

Yay!

(Note the reddish bright interior areas. I think energy isn't being conserved in the specular BSDF. Bug. Gah.)

-

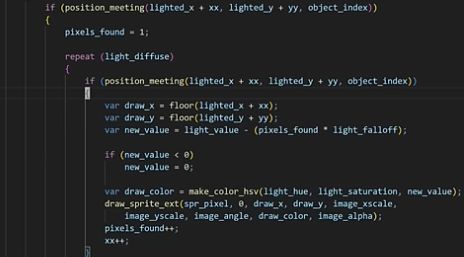

Piratesoftware's "2D Raytracing" code is just shitty radial light diffusion with collision checks. The worst part is he's individually checking each pixel and manually adjusting the lighting pixel by pixel😭 🙏

Does anyone else feel like Piratesoftware's content is just dedicated to people starting out with coding and game dev? Should this piece of shit be the person these newbie devs look up to? What a fucktard. This is the dude who has "DECADES OF INDUSTRY EXPERIENCE" hacking windmills and sending emails like "please let me hack you" at Blizzard

-

I'm excited about Flutter, looks like it will make cross-platform mobile dev more fun.

Also DX12 RTX real-time raytracing. It would be cool to work on it at work. We do animations for short movies at work and render times are huge now.

I'm kinda suspicious of both though. Couldn't check them out yet.

-

I'm currently evaluating the best way to have both a linux distro for work and study and windows for gaming on my PC.

I need as little virtualization as possible on both systems (need to do some high performance computing and access hardware counters for uni, and that sweet Ultra Raytracing 144 fps for games) and as much flexibility as possible in quickly switching between both systems (so dual boot isn't ideal either).

I tried WSL2 but had some issues and am currently trying out a Lubuntu VM on my Windows host, but maybe someone knows the secret super cool project that magically makes this unrealistic wish work.