Details

- About: Coder. A-lot-of-things wannabe.

- Skills: Not JS

- Location: Lima, Peru

- Github

Joined devRant on 1/30/2018


-

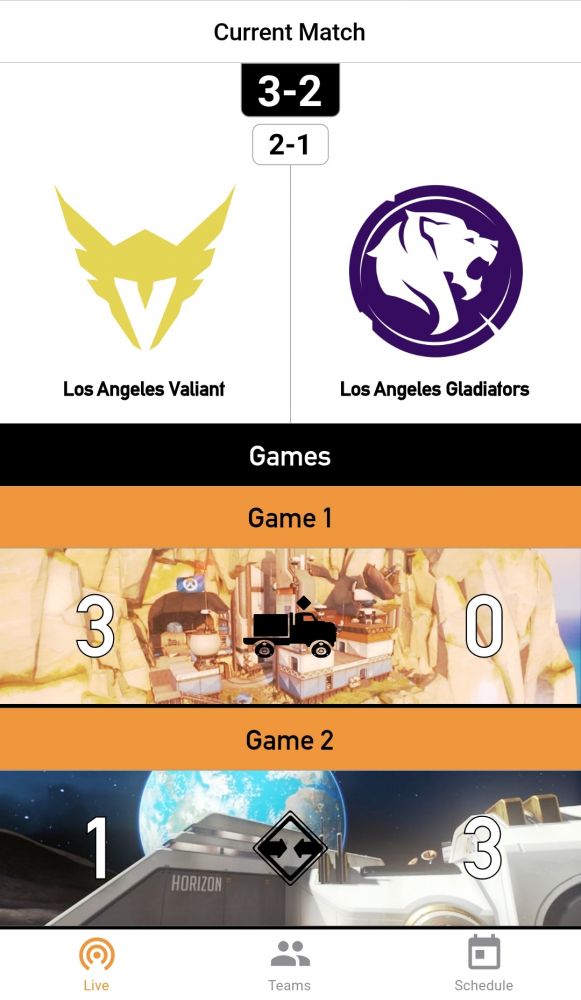

The last one was this Overwatch League Android/iOS client I made in Flutter. Basically wrote two apps in less than a month and learned (and enjoyed) Dart in the process.

I also learned that I'd probably get into trouble if I publish an app which uses a private API without permission. Hee hee.

Really cool and exhausting experience, though. 5/7 😊 -

You know what they say...

When life gives you APIs, make clients.

So I found this API that powers the Twitch overlay extension for the Overwatch League and thought it'd be cool to see a mobile version of it.

Done in Flutter: OWL Live Stats (https://drive.google.com/open/...)

Give it a try if you want. Right now it's pointing to a dummy API I created based on the one I found because there are no matches at the moment.

The goal was to get it done before playoffs start (July 11) so hopefully I'll get some feedback from you in case I want to publish the thing.

Appreciate your time :)

Disclaimer: I didn't bother investigating how legal and/or useful making/publishing this app is. -

Understand problem

Write solution

See it work on browser

Delete/comment solution

Write tests

See them fail

Restore solution

See it work on testing -

Sort of follow up to: https://devrant.com/rants/1351833/...

Now I can turn on/off my PC over the Internet. A little hacky but it works like a charm.

I'm using an Arduino IDE compatible ESP-12E with on board WiFi and a homemade optocoupler (LED + photoresistor).

Hopefully more projects like these are coming 🤗 -

Not a data loss exactly but a loss indeed.

It was my first week at my first junior developer job, I was just learning git and completely messed it all up. I lost around 3 hours of work.

I didn't want to ask anybody for help (because of that useless junior feeling, you know...) and wasn't as good at using Google as I am now.

So I re-did all the work. Thankfully, I have a decent memory.

If there's something to learn here, it's this: ask for help when you've used all your resources and still think you need it. Nobody is going to think less of you ;) -

Fellow Spanish-speaking developer:

https://youtu.be/i_cVJgIz_Cs

Don't forget the WHERE in the DELETE statement. -

What is software development like where you live? Would you say it's good/modern or bad/outdated?

For example, in Peru (this is up to 95% accurate):

- React isn't even a thing (never mind RN)

- Everything uses Angular

- No Django, no Rails, no Express. Everything Laravel, CodeIgniter and .NET

- No NoSQL

- Objective-C >> Swift

- AWS? cPanel!

- No testing

Of course I focused on the "bad" part, but maybe this is what rants are for :) And I haven't said anything about salaries 😪

What about you? And please don't forget to mention your country. -

What's really the matter with meetings?

I mean, we've all been annoyed at some point by some management person scheduling meetings we think of as pointless but I've actually found myself enjoying going to one where people can discuss and share ideas (dev related, mostly).

Sure, it's not great when you're focused and have to stop to talk to some assholes, but other times it doesn't bother me, because it means they value my input/opinion somehow.

Surely the reason for their existence is not to make you waste time, right? 🤔 -

- Naming things in English as a non-native English speaker.

- Maintaining a good sleep schedule as a remote worker. -

I actually learnt this last year but here I go in case someone else steps into this shit.

We were a remote team: every colleague of mine had some kind of OS X device, but I was working on an Ubuntu machine.

Turns out we were testing some Ruby time objects up to nanosecond precision (I think that's the language default, since no further specification was given) and all tests were green on everyone's machine except mine. I always had some kind of inconsistency between times.

After quite a few hours of debugging and beating our heads against any hard enough surface, we discovered this: Ruby's time precision is indeed up to nanoseconds on Linux (but only microseconds on OS X), but when we stored a value in PostgreSQL (whose time precision is up to microseconds) and retrieved it, its precision had already been cut down; hence, when compared with an unprocessed value, there was a difference. THIS JUST DOES NOT HAPPEN ON OS X.

We ended up relying on microseconds. You know, the production application runs on Ubuntu too. Fuck this shit.
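For anyone who wants to see the mismatch without a database, here is a minimal sketch in plain Ruby. The `truncate_to_usec` helper is hypothetical: it only stands in for a round trip through a microsecond-precision column, and it assumes the sub-microsecond digits are simply dropped (I haven't checked whether the database rounds or truncates).

```ruby
# A timestamp with full nanosecond precision, built explicitly so the
# example does not depend on the platform clock's resolution.
# The second argument to Time.at is in microseconds (fractions allowed).
original = Time.at(1_500_000_000, Rational(123_456_789, 1000)).utc

# Hypothetical stand-in for storing and reloading the value through a
# microsecond-precision column: drop everything below the microsecond.
def truncate_to_usec(time)
  time - Rational(time.nsec % 1000, 1_000_000_000)
end

stored = truncate_to_usec(original)

puts original.nsec                          # 123456789
puts stored.nsec                            # 123456000
puts original == stored                     # false: the failing comparison
puts truncate_to_usec(original) == stored   # true: compare at microseconds
```

Truncating both sides before comparing is one way to do what "relying on microseconds" means in a test, whatever the platform clock gives you.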

Hope it helps :)

P.S.: I'm talking about default configs. If anyone knows another workaround for this, or why this is the case, please share.