Search - "hardware design"

-

https://git.kernel.org/…/ke…/... sure some of you are working on the patches already; if you are, then let's connect, because I am an ardent researcher of the same as of now.

So here it goes:

As soon as the kernel page table isolation (KPTI) bug is out of embargo, WhatsApp and FB will be flooded with overnight kernel "shikhuritee" experts who will share shitty advice non-stop.

1. The bug under embargo is a side channel attack, which exploits the fact that Intel chips come with speculative execution without proper isolation between user pages and kernel pages. Therefore, careful scheduling combined with a timing attack can reveal some information from kernel pages while the code is running in user mode.

In easy terms, if you have a VPS, another person with a VPS on the same physical server may read memory being used by your VPS, which will result in unwanted data leakage. To make matters worse, malicious JS from an innocent-looking webpage might be (might be, because JS does not provide language constructs for such fine-grained control; at least none that I know as of now) able to read kernel pages, and pwn you real hard, real bad.

2. The bug comes from too much reliance on Tomasulo's algorithm for out-of-order instruction scheduling. It is not yet clear whether the bug can be fixed with a microcode update (and if not, Intel has to fix this in silicon itself). As far as I can dig, there is nothing that hints that this bug is fixable in microcode, which makes the matter much worse. Also according to my understanding a microcode update will be too trivial to fix this kind of a hardware bug.

3. A software-only remedy is possible, and that is being implemented by all major OSs (including our lovely Linux) in kernel space. The patch keeps kernel pages out of the page tables used in user mode, so every switch between user and kernel mode (a syscall, for example) has to switch page tables, which on CPUs without PCID means flushing the Translation Lookaside Buffer (this is what I understand as of now). The benchmarks suggest that the slowdown will be somewhere between 5% (best case) and 30% (worst case).

4. Regarding point 3, which syscalls you use doesn't matter much. The only thing that matters is how many times they are called. For example, if you are using read() or write() on 8MB buffers, you won't see too much slowdown; but if you are calling the same syscalls once per byte, a heavy performance penalty is guaranteed. All processes which are I/O heavy are going to suffer (hosting and databases are two common examples).

5. The patch can be disabled in Linux by passing an argument to the kernel at boot; however, it is not advised for pretty much obvious reasons.

6. For gamers: this is not going to affect games (because those are not I/O heavy)
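To make point 4 concrete, here is a minimal sketch that counts how many read() syscalls it takes to consume the same file with a 1-byte buffer versus an 8MB buffer. The helper names (`count_reads`, `demo`) and the 64 KiB test file are made up for illustration; the sketch only counts calls and does not measure the KPTI slowdown itself.

```python
import os
import tempfile


def count_reads(path, chunk_size):
    """Read the whole file with os.read() and return (bytes_read, syscalls)."""
    fd = os.open(path, os.O_RDONLY)
    calls = 0
    total = 0
    try:
        while True:
            data = os.read(fd, chunk_size)  # one read() syscall per iteration
            calls += 1
            if not data:  # an empty result means end of file
                break
            total += len(data)
    finally:
        os.close(fd)
    return total, calls


def demo(size=64 * 1024):
    """Compare per-byte reads with large-buffer reads on a temporary file."""
    with tempfile.NamedTemporaryFile(delete=False) as f:
        f.write(b"x" * size)
        path = f.name
    try:
        per_byte = count_reads(path, 1)
        buffered = count_reads(path, 8 * 1024 * 1024)
    finally:
        os.unlink(path)
    return per_byte, buffered


if __name__ == "__main__":
    per_byte, buffered = demo()
    print("1-byte buffer:", per_byte[1], "syscalls")
    print("8MB buffer:   ", buffered[1], "syscalls")
```

Both runs move the same number of bytes; only the number of user/kernel transitions differs, and that is the number the patch makes more expensive.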

Meltdown: "Meltdown" targets desktop and server chips and can read kernel memory via the L1D cache. Mostly Intel is affected by this variant (AMD is not).

Spectre: Spectre is a hardware vulnerability in implementations of branch prediction that affects modern microprocessors with speculative execution, allowing malicious processes access to the contents of other programs' mapped memory. Works on all chips including Intel/ARM/AMD.

For updates refer to the kernel tree: https://git.kernel.org/…/ke…/...

For further details and more chit-chat refer to: https://lwn.net/SubscriberLink/...
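For the boot argument mentioned in point 5, here is a small sketch of how the mitigation state could be checked on a Linux box. It assumes a kernel new enough to expose `/sys/devices/system/cpu/vulnerabilities/meltdown` (4.15 and later) and looks for the `nopti` and `pti=off` boot parameters; the function names are made up for illustration.

```python
from pathlib import Path

# Assumption: the kernel exposes this sysfs file (Linux 4.15 and later).
SYSFS_MELTDOWN = Path("/sys/devices/system/cpu/vulnerabilities/meltdown")


def meltdown_status(path=SYSFS_MELTDOWN):
    """Return the kernel's own report, or None if the file is not available."""
    try:
        return path.read_text().strip()
    except OSError:
        return None


def pti_disabled_on_cmdline(cmdline):
    """True if the boot command line switches page table isolation off."""
    params = cmdline.split()
    # Newer kernels also accept a broader "mitigations=off"; not checked here.
    return "nopti" in params or "pti=off" in params


if __name__ == "__main__":
    print("meltdown:", meltdown_status())
    try:
        cmdline = Path("/proc/cmdline").read_text()
    except OSError:
        cmdline = ""
    print("PTI disabled at boot:", pti_disabled_on_cmdline(cmdline))
```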

~Cheers~

(Originally written by Adhokshaj Mishra, edited by me.)

-

Things I hate about Microsoft (Part 1):

Windows: Does things I don't want it to do. Is not user friendly. It is just user familiar.

Outlook / Hotmail: Silently drops emails which are RFC-compliant and pass every other mail service. No error messages or notifications.

Edge: Does not support, or only partially supports, some modern standards.

IE: No explanation needed.

Design language: border-radius: 0 !important

Business model: Let's make our own hardware, so we can compete with our hardware partners (HP, Dell, ...). Isn't that a perfect idea?

Tracking: Let's track everything our users do. Even how many photos they open in our OS*. What do they get from that? Well, they could get personalised ads on Bing. Isn't that a perfect model?

*: https://blogs.windows.com/windowsex...

-

Every single one of them, and every one that will come after them.

Google, it started out as 2 people in their garage, wanting to make a search engine that was better than the others. Nothing else, nothing evil. Just make the world a little bit better. And look what it's become now. A megacorporation with little to no regard for their user base. Because who cares about users anyway?

Microsoft, it started out with Bill Gates - young high school computer nerd - who wanted to make an operating system for the world to use. Something that's better than the competition. And boy did he do so. Well "better than the competition" aside, he did make it for the world to use. And the world adopted it. And look what it's become now. A megacorporation with little to no regard for their user base. Because who cares about users anyway?

See where I'm going here?

Apple, it started out with Steve Jobs and Steve Wozniak in their garage, just like Google did, wanting to make hardware that was better than the others. Nothing else, nothing evil. Just to make the world a little bit better. And look what it's become now. Planned obsolescence has been baked into it, just like it is in every other piece of technology. Quality control and thinking through the design has become a thing of the past. User choice, yeah who cares about that.

Samsung, it started out many decades ago actually, and I don't really remember the details of it.. ColdFusion has a video on it if memory serves me right. Do watch it if you're interested. Anyway, just like all the others they started out as a company which wanted to make the world a little bit better. And damn right did they do so.. initially. Look what they've become now. Forcing their stupid TouchWiz UI upon their customers (or products?), a Bixby button that can't even be reprogrammed.. and the latest thing.. Knox, advertised as a security feature, but as everyone who likes rooting their devices and mucking with them knows, it is an anti-feature that only serves for lockdown. Why shouldn't you be able to turn in a phone for RMA when a hardware error occurs, when all you've personally modified is the software? Why should changing the software blow that eFuse, so that you can be sure that you can't replace it without specialized equipment and a very steady hand?

I could go on and on forever about more of the tech giants out there, but I feel like this suffices for now. Otherwise I won't have anything else left for future rants! But one thing I know for sure. Every tech company started, starts, and will start out with a desire to make the world a better place, and once they gain a significant customer base, they will without exception turn into the same kind of Evil Megacorp., just like the ones before them. Some may say that capitalism itself is to blame for this, the greed for more when you already have a lot. Who knows? I'd rather say that human nature itself is to blame for it. We're by design greedy beings, and I hate it. I hate being human for that. I don't want humans to be evil towards one another, and be greedy for ever more. But I guess that that's just the way it is, and some things do actually never change...

-

I get so upset when developers start saying that MacBooks are better and faster, even with Windows, just because of what? Magic? The Apple logo? Compare them with a Dell XPS 15 with an i7 HQ and an SSD on PCI Express, and if you care about the display, the 4K version is far better than Retina... And no, OS X is not faster than Windows 10; there are comparisons on the same hardware... Come on developers, you're supposed to know the basics... If you prefer the MacBook for its design, because it's cool, fashionable, light, the Apple logo, the UI, that's OK... Feel free to use it. But don't say things that aren't true.

-

Game Development,

Because it merges together so many interesting fields so well. It has a ton of physics, a lot of art and design, psychology, philosophy, storytelling, music ...

And it really gives you the possibility to make anything work to your rules. The only limits are those of logic and your hardware.

-

Hi,

I'm not a ranty person so I never actually thought I'd post anything here but here it goes.

From the beginning.

We use ancient technologies. PHP 5.2, Symfony 1.2 and a non-RFC-compliant SOAP with NO documentation.

A year ago we were thrown a new temporary project. A VoIP app for every OS.

That being iOS, Android, Mac, PC, Linux, Windows Mobile. With a 3 month deadline. All that thrown at 4 PHP developers. The idea being that they'll take it, sign the delivery protocol, everyone happy. No more updates for the app needed. They get the funds they needed the app for and we get paid.

Fast forward to today...

Our dev team started the year with the great news that we'll most likely have to create a new project. Since the amount of new features would be far greater than the current feature set, we managed to finally force our boss to use newer technologies (i.e. separate backend Symfony 4 on PHP 7+ / frontend React, REST API and so on). So we were ecstatic to say the least. Pre-estimates aimed at a minimum 3 month development period, since we're comfortable with everything that needs to be done.

Two days later our boss came to me and said that one of our most annoying clients needs a new feature. Said client uses an ancient version written on a napkin, because they changed half of the specification 2 weeks before the deadline, in software made not by a developer but by some sysadmin who didn't know anything. His MVC model was practically a VVV model since he even had SQL queries in some views. The feature will take 3 days; fixing everything that will break in the meantime, 1-2 months.

F*** it, fine. A little overtime won't kill me.

Yesterday boss comes again... Apparently someone lost the delivery protocol for a project we ended half a year ago. What's even better, at the time, when we asked for hardware to test on, we never got any. When we asked about any testing environment: nothing. Calling the app SEMI-stable on everything is an overstatement, but it was working on the OSes available at the time. Since the client started testing again now, it turns out that the Android app does not work on 8.1/9 and the iOS app does not work on iOS 12. The client obviously does not want to pay and we can do little about it without the protocol, other than rewriting the apps.

It will take months at least, since all of those apps were written by people that knew neither the OSes nor the languages. For example I started writing the iOS one in Swift, only to learn after half of the development time that Swift doesn't like working by C library rules and I had to use ObjC as well. With some C thrown in due to the library. 3 unknown languages, on an unknown platform, in 3 months. I never had any Apple device in my hand at that time nor do I intend to now. I'm astonished it worked out then. It was a clusterf**k of bad design and sticking everything together with deprecated APIs and gum. So I'll have to basically fully rewrite it.

If boss decides we'll take all those at the same time I'll f***ing jump off a bridge.

-

Help.

I'm a hardware guy. If I do software, it's bare-metal (almost always). I need to fully understand my build system and tweak it exactly to my needs. I'm the sorta guy that needs memory alignment and bitwise operations on a daily basis. I'm always cautious about processor cycles, memory allocation, and power consumption. I think twice if I really need to use a float there and I consider exactly what cost the abstraction layers I build come at.

I had done some web design and development, but that was back in the day when you knew all the workarounds for IE 5-7 by heart and when people were disappointed there wasn't going to be a XHTML 2.0. I didn't build anything large until recently.

Since that time, a lot has happened. Web development has evolved in a way I didn't really fancy, to say the least. Client-side rendering for everything the server could easily do? Of course. Wasting precious energy on mobile devices because it works well enough? Naturally. Solving the simplest problems with a gigantic mess of dependencies you don't even bother to inspect? Well, how else are you going to handle all your sensitive data?

I was going to compare this to the Arduino culture of using modules you don't understand in code you don't understand. But then again, you don't see consumer products or customer-specific electronics powered by an Arduino (at least not that I'm aware of).

I'm just not fit for that shooting-drills-at-walls methodology for getting holes. I'm not against either easy or pretty-to-look-at solutions, but it just comes across as wasteful to me nowadays.

So, after my hiatus from web development, I've now been in a sort of internet platform project for a few months. I'm now directly confronted with all that you guys love and hate, frontend frameworks and Node for the backend and whatever. I deliberately didn't voice my opinion when the stack was chosen, because I didn't want to interfere with the modern ways and instead get some experience out of it (and I am).

And now, I'm slowly starting to feel like it was OKAY to work like this.

-

A PCB I designed on the job over the last weeks shipped today! A benefit of hardware is the haptic element you have at the end of the design process - you made something touchable. (I am proud.)

Also, errors made earlier in the design process are permanent now. But unlike my software, my design got reviewed, so I'm optimistic it won't contain many, if any.

I'm on vacation right now for moving stuff but I'm looking forward to doing the "pick'n place" on Monday. Soldering manually is quite relaxing for me, you should try it, too! ;)

In other news, I'm no longer sleeping on the floor in my home-office while the paint is drying in other rooms.

I already moved most of my stuff - books and tech equipment are the worst - and I moved my furniture yesterday.

My new roommates are considerably quieter and my sleeping rhythm is slowly shifting back to normal.

-

Hardware of laptops today.

Displays: Glossy screens everywhere. "Hurr durr it has better colors". Idgaf what colors it has, when the only thing I can see is the wall behind me and my own reflection. Make it matte or get it out.

Touchpads: Bring back mechanical buttons. Haptic feedback dying with touchscreens/surfaces is a tragedy. "But we can have bigger touchpad area without buttons" ...why? the goal shouldn't be 1:1 touchpad vs. display ratio. It ain't a bloody tablet.

Docking stations: Some bright fucker figured out that they can utilize USB C. That thing keeps falling out with the slightest laptop movement, disconnecting all peripherals (guess why microUSB had those small hooks?). Also it doesn't have sufficient throughput, so the 5-year-old dock can feed 3 full HD monitors just fine and the new one can't.

Keyboards: Personally I hate chiclet. And it's everywhere, because "apple has it so we must too". But the thing I hate even more is retardation of the arrow keys (up and down merged into size of one key), missing dedicated Home/End/PgDwn/PgUp buttons and somebody deciding the F keys are not needed and started replacing them with some multimedia bullshit.

My overall feeling is that this happens when you give the market to designers and customer demand. You end up with eye candy and useless fancy gadgets, with worse ergonomics and worse features than previous generations of the same hardware. My laptop dying is my daily nightmare as I have no idea what on the current market I would replace it with.

-

I hate buying new laptops. HATE IT. The manufacturers are always trying to do something that makes it more complicated to buy a laptop confidently.

Why not name all of the laptops with numbers? Make them really hard to differentiate. Then offer the same model number across multiple years so it is difficult to determine which year the laptop is from.

Oh. And let’s make sure every laptop has a major flaw in the form factor.

Let’s add a numpad that squishes the keyboard to the left in a weird way. Let’s do something to the trackpad to make it awkward to use. Maybe the keyboard should have a weird configuration. Maybe we can put 4 spare characters of various colours on the symbol key caps. How about a battery that only lasts a few hours. Maybe we add specialized hardware so you are stuck with Windows. Maybe we can make it super thick and heavy. Let’s have a screen with terrible viewing angles. Since this laptop has no major flaws we should overprice it. No repairs or upgrades on this one because we filled the computer with glue. Let’s double the amount of useless media keys.

It is like manufacturers are trying to design laptops like RPG game character classes. The fighter has no magic or stealth. The magician is weak and gets fatigued. The rogue is very stealthy but has poor defence and attack. The cleric can use magic but only to heal so it is useless in battle. The ranger is good at distance but has poor defence and no magic.

The only notebooks sold that are trying to make balanced character classes are MacBooks. Those cost a premium and aren’t repairable.

-

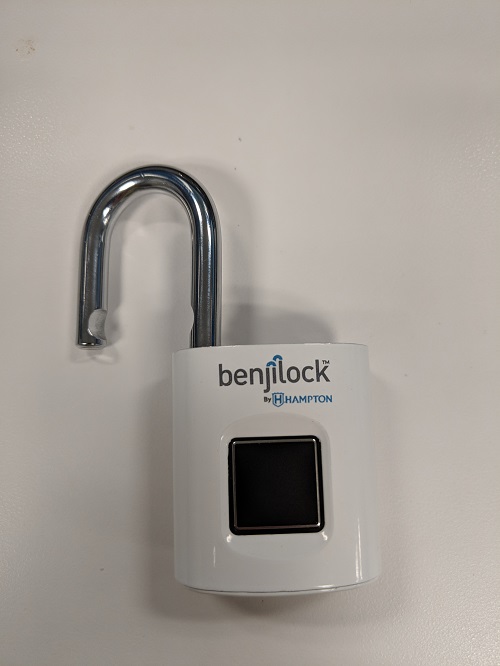

So I bought this biometric padlock for my daughter. She excitedly tried to set it up without following the directions (they actually have good directions online). The first thing you do is set the "master print"; she buggered that up by setting her own print. So when I got home I was thinking, no problem, I'll just do a reset and then we can start again.

NOPE !!! You only have one chance to set the master print! After that, if you want to reset the thing you need to use the master print along with a physical key that comes with it.

What sort of moron designs hardware / software that is unable to be reset? Imagine how much fun it would be if once you set your router admin password it was permanent unless you can log back in to change it. Yeah, nobody has ever forgotten a password.

Well, they are about to learn a valuable financial lesson about how user-friendly design influences your bottom line. People (me) will just return the lock to the store where they bought it, and it will have to be shipped back to the factory, which will be very expensive for them: paying for all of the shipping to and from, the resetting and repackaging of the locks, and finally shipping again to another store. Meanwhile I'll keep getting new locks at no cost until she gets it right.

poor design

-

I haven't ranted for today, but I figured that I'd post a summary.

A public diary of sorts.. devRant is amazing, it even allows me to post the stuff that I'd otherwise put on a piece of paper and probably discard over time. And with keyboard support at that <3

Today has been a productive day for me. Laptop got restored with a "pacman -Syu" over a Bluetooth mobile data tethering from my phone, said phone got upgraded to an unofficial Android 9 (Pie) thanks to a comment from @undef, etc.

I've also made myself a reliable USB extension cord to be able to extend the 20-30cm USB-A male to USB-C male cord that Huawei delivered with my Nexus 6P. The USB-C to USB-C cord that allows for fast charging is unreliable.. ordered some USB-C plugs for that, in order to make some high power wire with that when they arrive.

So that plug I've made.. USB-A male to USB-A female, in which my short USB-C to USB-A wire can plug in. It's a 1M wire, with 18AWG wire for its power lines and 28AWG wires for its data lines. The 18AWG power lines can carry up to 10A of current, while the 28AWG lines can carry up to 1A. All wires were made into 1M pieces. These resulted in a very low impedance path for all of them, my multimeter measured no more than 200 milliohms across them, though I'll have to verify and finetune that on my oscilloscope with 4-wire measurement.
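As a sanity check on those numbers, here is a rough back-of-the-envelope sketch of what 1 m of 18AWG and 28AWG copper should measure. It uses the standard AWG diameter formula and assumes pure copper at about 20 °C with a resistivity of 1.68e-8 Ω·m; real wire and connectors will add a bit on top, and the function names are made up for illustration.

```python
import math

# Assumption: pure copper at roughly 20 degrees C.
COPPER_RESISTIVITY = 1.68e-8  # ohm * metre


def awg_diameter_m(awg):
    """Conductor diameter in metres from the standard AWG formula."""
    return 0.127e-3 * 92 ** ((36 - awg) / 39)


def wire_resistance_ohm(awg, length_m):
    """DC resistance of a single solid copper conductor."""
    area = math.pi * (awg_diameter_m(awg) / 2) ** 2
    return COPPER_RESISTIVITY * length_m / area


def supply_voltage_drop(awg, length_m, current_a):
    """Drop over the power pair: the current goes out on VBUS and back on GND."""
    return current_a * wire_resistance_ohm(awg, 2 * length_m)


if __name__ == "__main__":
    for awg in (18, 28):
        milliohm = wire_resistance_ohm(awg, 1.0) * 1000
        print(f"{awg}AWG, 1 m: {milliohm:.1f} milliohm")
    drop_mv = supply_voltage_drop(18, 1.0, 0.5) * 1000
    print(f"drop at 500mA over 1 m of 18AWG supply pair: {drop_mv:.1f} mV")
```

Under these assumptions the 18AWG power lines come out at roughly 20 milliohms per metre and the 28AWG data lines at roughly 200 milliohms per metre, which fits the multimeter reading of no more than 200 milliohms.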

So the wire was good. Easy too, I just had to look up the pinout and replicate that on the male part.

That's where the rant part comes in.. in fact I've got quite uncomfortable with sentences that don't include at least one swear word at this point. All hail to devRant for allowing me to put them out there without guilt.. it changed my very mind <3

Microshaft WanBLowS.

I've tried to plug my DIY extension cord into it, and plugged my phone and some USB stick into it of which I've completely forgotten the filesystem. Windows certainly doesn't support it.. turns out that it was LUKS. More about that later.

Windows returned that it didn't support either of them, due to "malfunctioning at the USB device". So I went ahead and plugged in my phone directly.. works without a problem. Then I went ahead and troubleshooted the wire I've just made with a multimeter, to check for shorts.. none at all.

At that point I suspected that WanBLowS was the issue, so I booted up my (at the time) problematic Arch laptop and did the exact same thing there, testing that USB stick and my phone there by plugging it through the extension wire. Shit just worked like that. The USB stick was a LUKS medium and apparently a clone of the SanDisk rootfs that I was storing my laptop's Arch Linux on at the time.. an unfinished migration project (SanDisk is unstable, my other DM sticks are quite stable). The USB stick consumed about 20mA so no big deal for any USB controller. The phone consumed about 500mA (which is standard USB 2.0 so no surprise) and worked fine as well.. although the HP laptop dropped the voltage to ~4.8V like that, unlike 5.1V which is nominal for USB. Still worked without a problem.

So clearly Windows is the problem here, and this provides me one more reason to hate that piece of shit OS. Windows lovers may say that it's an issue with my particular hardware, which maybe it is. I've done the Windows plugging solely through a USB 3.0 hub, which was plugged into a USB 3.0 port on the host. Now USB 3.0 is supposed to be able to carry up to 900mA rather than 500mA, so I expect all the components in there to be beefier. I've also tested the hub as part of a review, and it can carry about 1A no problem, although it seems like its supply lines aren't shorted to VCC on the host, like a sensible hub would. Instead I suspect that it's going through the hub's controller.

Regardless, this is clearly a bad design. One of the USB data lines is biased to ~3.3V if memory serves me right, while the other is biased to 300mV. The latter could pose a problem.. but again, the current path was of a very low impedance of 200 milliohms at most. Meanwhile the direct connection that omits the ~200 milliohm extension wire worked just fine. Even 300mV wouldn't degrade significantly over such a resistance. So this is most likely a Windows problem.

That aside, the extension cord works fine in Linux. So I've used that as a charging connection while upgrading my Arch laptop (which as you may know had internet issues at the time) over Bluetooth, through a shared BNEP connection (Bluetooth tethering) from my phone. Mobile data since I didn't set up my WiFi in this new Pie ROM yet. Worked fine, fixed my WiFi. Currently it's back in my network as my fully-fledged development host. So that way I'll be able to work again on @Floydian's LinkHub repository. My laptop's the only one that currently holds the private key for signing commits for git$(rm -rf ~/*)@nixmagic.com, hence why my development has been impeded. My tablet doesn't have them. Guess I'll commit somewhere tomorrow.

(looks like my rant is too long, continue in comments)

-

With every new Apple product it seems that Jony Ive's job is reading the dictionary and finding less and less understandable words to be used in the next commercial to make the production process seem more and more complex although the result is the same device over and over again.

-

Was forced to do some work on Windows this week (CAD tools that run only on Windows). I spent a few days just setting up the tools. There were quite a few things I realized I forgot about Windows (as compared to Linux).

1) Installation times are downright horrific. What exactly is the installer doing for 10 minutes?

2) .NET is a cluster fuck. Not even Microsoft's repair tool can fix it, but rather just hangs. I ended up using another tool to nuke it and reinstall.

3) Windows binary installs are insanely huge, and thus take forever to download.

4) The registry is a pointless database that must have been written in hell with the single intent of destroying users' will to live. The sole existence of the registry is another proof that completely incompetent engineers designed Windows.

5) Rebooting is the only way to solve many problems. This is another sure sign of a fundamentally fucked up OS design.

6) What the heck is wrong with the GUI designers? The control panel must be the worst design ever. There are so many levels to get to a particular setting I'm getting dizzy. Nothing gets better by the illogical organisation.

7) Windows networking. A perversion of the tcp/ip stack that makes it virtually impossible to understand a damn thing about the current network configuration. There are at least 3 different places that affect the settings.

8) Windows command prompt. Why did they even bother to leave it in? The interpreter is as intelligent as retarded donut. You can't do anything with it, except typing "exit" and Google for another solution.

9) Updates. Why does it take hundreds of updates per month to keep that thing safe?

10) Despite all the updates that are flying out of Redmond like confetti, it is still necessary to install antivirus to keep the damn thing safe. That costs extra money, and further costs you by degrading the performance of your hardware.

11) Windows performance. Software runs like it was swimming in molasses. The final stab in the back on your hardware investment, and pretty much sends performance on your hardware back a few hundred bucks more.

12) Closed source is evil. If something crashes consistently, you might find a forum that addresses the issues you have. Otherwise you're out of luck. On the other hand, it might be for the better. I imagine reading the code for Windows can lead to severe depression.

I'm lucky to be a Linux dev, and should probably not complain too much... But really, Windows, go get yourself hit by a truck and die. I won't miss you.

-

Free ebook: For people who are into hardware analysis, hardware/software design failures.

Hacking the Xbox

by Andrew "bunnie" Huang

It's ofc not state of the art, but most techniques still apply today.

Download: http://bunniefoo.com/nostarch/...

Maybe some here have a use for such a book.

-

Worst thing you've seen another dev do? Here is another.

Early into our eCommerce venture, we experienced the normal growing pains.

Part of the learning process was realizing in web development, you should only access data resources on an as-needed basis.

One business object on its creation would populate db lookups, initialize business rule engines (calling the db), etc.

Initially, this design was fine, no one noticed anything until business started to grow and started to cause problems in other systems (classic scaling problems)
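The "as-needed" idea can be sketched roughly like this. It is not the code from that project; `Product`, `FakeDb` and `load_lookups` are hypothetical names, and the point is only that the constructor touches nothing and the lookup query runs once, on first use.

```python
from functools import cached_property


class FakeDb:
    """Hypothetical stand-in for the real database; it only counts queries."""

    def __init__(self):
        self.queries = 0

    def load_lookups(self, sku):
        self.queries += 1
        return {"sku": sku, "tax_class": "standard"}


class Product:
    """Business object that defers its db work until something asks for it."""

    def __init__(self, sku, db):
        self.sku = sku
        self._db = db  # no queries here; construction stays cheap

    @cached_property
    def lookups(self):
        # Runs on first access only; the result is cached on the instance.
        return self._db.load_lookups(self.sku)


if __name__ == "__main__":
    db = FakeDb()
    product = Product("A1", db)
    print("queries after construction:", db.queries)
    product.lookups
    product.lookups
    print("queries after two accesses:", db.queries)
```

Pages that never read `lookups` never pay for the query, which is the kind of back-end load the eager version was generating.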

VP wanted a review of the code and recommendations before throwing hardware at the problem (which they already started to do).

Over a month, I started making some aggressive changes by streamlining SQL, moving initialization, and refactoring like a mad man.

Overall page loads were not really affected, but the back-end resources were almost back to pre-eCommerce levels.

The main web developer at the time was not amused and fought my changes as much as she could.

A couple of months later the CEO was speaking to everyone about his experience at a trade show, where another CEO was complimenting him on the changes to our web site.

The site was much faster, pages loaded without any glitches, checkout actually worked the first time, etc.

CEO wanted to thank everyone involved etc..and so on.

About a week later the VP handed out 'Thank You' certificates for the entire web team (only 4 at the time, I was on another team). I was noticeably excluded (not that I cared about a stupid piece of paper, but they also got a pizza lunch...I was much more pissed about that). My boss went to find out what was going on.

MyBoss: "Well, turned out 'Sally' did make all the web site performance improvements."

Me: "Where have you been the past 3 months? 'Sally' is the one who fought all my improvements. All my improvements are still in the production code."

MyBoss: "I'm just the messenger. What would you like me to do? I can buy you a pizza if you want. The team already reviewed the code and they are the ones who gave her the credit."

Me: "That's crap. My comments are all over that code base. I put my initials, date, what I did, why, and what was improved. I put the actual performance improvement numbers in the code!"

MyBoss: "Yea? Weird. That is why 'Tom' said 'Sally' was put in for a promotion. For her due diligence in documenting the improvements."

Me: "What!? No. Look...let's look at the code"

Open up the file...there it was...*her* initials...the date, what changed, performance improvement numbers, etc.

WTF!

I opened version control and saw that she made one change, the day *after* the CEO thanked everyone and replaced my initials with hers.

She knew the other devs would only look at the current code to see who made the improvements (not bother to look at the code-differences)

MyBoss: "Wow...that's dirty. Best to move on and forget about it. Let them have their little party. Let us grown ups keep doing the important things."

-

!rant

Medium long story about POP!_OS

TL;DR : A true K.I.S.S. OS. Very well designed UI. In general suitable for everyone. Any distro-hoppers MUST try out. If your current OS is already heavily customized to your needs, DON'T bother with POP. (Read till the end if you are on toilet, nothing to lose)

Backstory : I am never a fanboy of anything although I am loyal to the tools I use daily. So OS is also something I picked and use to meet my needs except when I was a student. My first linux experience was about a decade ago with ubuntu. Have tried almost all kinds of light-weight and minimal distros after that (lubuntu, arch, mint, puppylinux, fedora, centos and others I forgot) during my student years.

I like all things minimal. ("Keep It Simple Stupid" is my email signature.) When I started working, Windows became the sole OS I use since it met my needs better than others. Except that one time when I tried Elementary. Although I found it a good OS, it didn't get installed as a dual-boot. I don't find Elementary minimal. It is one of well designed OSs but I still think it can be improved. (Plus I had this weird feeling that it is similar to Mac OS)

At the start of this year, Windows alone was not enough for my needs. Decided to look for a minimal linux distro. My old i7 ASUS has 8GB RAM and roughly 250GB free storage. So I am not that worried about hardware requirements. My main struggle is downloading stuff. (A few of you guys must know by now the speed of my internet LOL.) Well, even if I had a good speed, I would still look for a minimal distro as first priority. So I went with a minimal ubuntu image and the xubuntu environment. Although I do not like the UI design, it is acceptable. Throughout the years, I have configured it to suit my needs and am currently pretty happy with it.

Thoughts on POP!_OS : To me, it is literally like meeting a young girl who is perfect for my life. She has the perfect body, beautiful face, amazing appearance and good manners. And she is young, of course there is a lack of experience issue. But it can be taught and she has a very high chance to become a wonderful lady if she continues like this. Only crap is I already have someone and am in a committed relationship. So I could not go any further than introduction. I do save her contact and will keep in touch with her online. You know? Things change. Things always change somehow.

-

I fucking hate the design and aesthetics of PC gaming hardware in general. Who the fuck do they design those things for, edgy teenagers? Give me something that looks well built and professional, damnit. Heck, most console designs are much better.

-

I use a Mac that implements MAC using MAC and it's got multiple hardware MACs along with a hardware MAC.... btw, I'm eating a Big Mac.

...

Media Access Control - Networking

Mandatory Access Control - Security

Message Authentication Code - Security

Mac - Apple

Multiply ACcumulate - Chip Design

-

Who makes monitors which can only change the input if there is a signal on the currently selected input?

Monitor on -> VGA -> No signal -> Going to sleep

Like, let me change it to something which has a signal instead of directly going to sleep again?

-

Back then, I was just about a "computer guru" and friends would often ask me stuff about hardware.

One of them came to me and asked if I could make a website. I accepted despite knowing nothing about html, css, js or PHP.

I then hopped on a tutorial about html and css, and pretty much learned the basics of html in a day, then added some css and got introduced to PHP "as a way to prevent yourself from copy pasting the same bits of html everywhere".

Turned out the client wanted a CMS, which I couldn't do, so I decided I would go to a design/IT school. Before finishing my 'studies' (accelerated apprenticeship), I had already landed my current job. As I'm not a "real dev" (more a self taught guy), I'm learning stuff every day, and today I am comfortable with back end and front end web development.

Code is addicting, even more than gaming!

-

I've been doing some Codility exercises, and I can't see how it reflects on my skills as an engineer. It's just math riddles that can be solved with those fancy calculators.

It completely misses the aspects of managing resources, good design, understanding other people's code, hardware optimizations...

-

Well shit, now I (re-)learned C,

And all I want to do is program in C,

But all C jobs are like -

"C guru that merged to Linux kernel"

"Driver writing low level must know Assembly"

"Military-grade realtime hardware design"

Isn't there a C job that's like Python - "here I wrote this script in 5 minutes and spent the rest of the day playing Eve Online" :D :D

-

Embedded app design resources?

We have a device that is currently controlled through buttons and knobs. We are rethinking this design. We want to have a mix of buttons and knobs and in addition have a touchscreen. The controls must be redundant. So if we turn off the touch screen it must be able to control everything.

Does anyone know of any design resources for non-touchscreen embedded control?

I have been looking into how gaming console controls work. I like how they have the idea of controlling the game vs breaking the 4th wall. We have a similar constraint as we want the operator focused on what they are controlling and not the controls or even sometimes the screen. So immersion is definitely a concept for us. I have been toying with the idea of adding a d-pad for some controls. We have not solidified the hardware controls yet. That is still up to me to come up with some ideas to make it more intuitive.

-

I really lost my faith in our profession.

A software and hardware solution that costs several tens of thousands of euros is broken after every update.

The vendor even manages to break untouched features in new releases.

No communication at all. If you report Bugs, they are your fault. The whole system has absolutely no security at all.

It is insecure by design.

And even if they listen to your bug report, you have to pray that they will fix it.

Most of the time you have to wait a whole year for a new release to get your bugfixes.

But there are also bugs that are untouched for years.

WHY? WE PAY YOU!

I want to cry

-

Aren't you, software engineer, ashamed of being employed by Apple? How can you work for a company that lives and shits on the heads of millions of fellow developers like a giant tech leech?

Assuming you can find a sound excuse for yourself, pretending it's the market's fault and not your shitty greed that lets you work for a company with incredibly malicious product, sales, marketing and support policies, how can you not feel your coder's pride being melted under BILLIONS of complaints for whatever shitty product you have delivered for them?

Be it a web service that runs on 1980 servers with still the same stack (cough cough itunesconnect, membercenter, bug tracker, etc etc etc etc) incompatible with vast majority of modern browsers around (google at least sticks a "beta" close to it for a few years, it could work for a few decades for you);

be it your historical incapacity to build web UI;

be it the complete lack of any resemblance of valid documentation and lets not even mention manuals (oh you say that the "status" variable is "the status of the object"? no shit sherlock, thank you and no, a wwdc video is not a manual, i don't wanna hear 3 hours of bullshit to know that stupid workaround to a stupid uikit api you designed) for any API you have developed;

be it the predatory tactics on smaller companies (yeah its capitalism baby, whatever) and bending 90 degrees with giants like Amazon;

be it the closeness (christ, even your bugtracker is closed and we had to come up with openradar to share problems that you would anyway ignore for decades);

be it a desktop ui api that is so old and unmaintained and so shitty, but so shitty, that you made that cancer of electron a de facto standard for mainstream software on macos;

be it an IDE that i am disgusted to even name, xcrap, that has literally millions of complaints for the same millions of issues you don't even care to answer or even less try to justify;

be it that you don't disclose your long term plans and then expect us to production-test and workaround-fix your shitty non-production ready useless new OS features;

be it that a nervous breakdown on a stupid little guy on the other side of the planet that happens to have paid to you dozens of thousands of euros (in mandatory licences and hardware) to actually let you take an indecent cut out of his revenues cos there is no other choice in a monopoly regime, matter zero to you;

Assuming all of these and much more:

How can you sleep at night with all the screams of the devs you are exploiting whispering in your mind? Is all the money you earn worth it?

** As someone already told you elsewhere, HAVE SOME FUCKING PRIDE, shitty people AND WRITE THE FUCKING DOCS AND FIX THE FUCKING BUGS you lazy motherfuckers, you are paid more than 99.99% of people on earth, move your fucking greasy little fingers on that fucking keyboard. **

PT2: why the fuck did you remove the ESC key from your shitty keyboards you fuckshits? is it cos autocomplete is slower than me searching the correct name of a function on stackoverflow and hence ESC key is useless? at least your hardware colleagues had the decency of admitting their error and rolling back some of the uncountable "questionable" hardware design choices (cough cough ...magic mouse... cough golden charging cables not compatible with your own devices.. cough).

-

Hey hardware hackers, just wanted to let you know that seeedstudio offers free PCB assembly. I got 5 pieces of the PCB in the picture for 30 USD manufactured, assembled and shipped (including BOM costs), and they also included 5 additional empty PCBs. However I paid another 30$ for customs and DHL customs handling (I'm inside Europe) ... But still, for assembly it's a great price. It took around 4 weeks. Just upload the BOM and you get an instant quote. If you are curious, it's a simple board for an ESP32 with some mosfet drivers and two DC-DC converters.

https://seeedstudio.com/free-assemb...

-

!rant

Remember the Stress ball's TransOceanic Trackable Voyage? (Also called Devvie in a bottle.) Well, we're finalizing the design. But if you are interested in helping us with software, hardware, launching, recovery, or anything else that might help, join us at stotv.herokuapp.com. PS, we're also looking for sponsors, so if you are or know someone who is interested in sponsoring, send a message to @sven on Slack.

-

For me it was not so much a choice.

I started out using basic and simple text display (graphics existed but was quite difficult).

For a long time I was the sole or part of a pair of devs so specializing was not possible and once we grew to such a size I already was quite proficient in all areas from hardware to customer support and education.

But from that time until today I have gravitated towards a more backend role mainly because I lack a good sense of visual design.

I know if something looks good, but doing it myself results in more boring or plain designs where more thought goes into UX than nice looking design.

That said, if we do web applications I can still keep up since it usually is more ux heavy ;)

But when it comes to adding background images, nice color sets and such I gladly defer that to colleagues with a better design sense.

-

1) Learning little to nothing useful in formal post-secondary and wasting tons of time and money just to have pain and suffering.

"Let's talk about hardware disc sector divisions in the database course, rather than what most of you might find useful for industry."

"Lemme grade based on regurgitating my exact definitions of things, later I'll talk about historical failed network protocols, that have little to no relevance/importance because they fucking lost and we don't use them. Practical networking information? Nah."

"Back in the day we used to put a cup of water on top of our desktops, and if it started to shake a lot that's how you'd know your operating system was working real hard and 'thrashing' "

"Is like differentiation but is like cat looking at crystal ball"

"Not all husbands beat their wives, but statistically...." (this one was confusing and awkward to the point that the memory is mostly dropped)

Streams & lambdas in java, were a few slides in a powerpoint & not really tested. Turns out industry loves 'em.

2) Landed my first student job and got shoved on an old legacy project nobody wants to touch. Am isolated and not being taught or helped much, do poorly. Boss gets pissed at me and is unpleasant to work with and get help from. Gets to the point where I start to wonder if he is trying to make a show of how much of a nuisance I am. He meddles with some logo I'm fixing, getting fussy about individual pixels and shades, and makes a big deal of knowing how to use GIMP and how he's sitting with me micromanaging. Monthly one on ones were uncomfortable and had him metaphorically jerking off about his life story, career wise.

But I think I learned in code monkey industry, you gotta be capable of learning and making things happen with effectively no help at all. It's hard as fuck though.

3) Every time I meet an asshole who knows more and has accomplished more than I have (that's a lot of people), with a higher TC than me (also a lot of people), I despair as I realize I might sound like that without realizing it.

4) Everytime I encounter one of my glaring gaps in my knowledge and I'm ashamed of the fact I have plenty of them. Cargo cult programming.

5) I can't do leetcode hards. Sometimes I suck at white board questions I haven't seen anything like before and anything similar to them before.

6) I also suck at some of the trivia questions in interviews. (Gosh I think I'd look that up in a search engine)

7) Mentorship is nigh non-existent. Gosh I'd love to be taught stuff so I'd know how to make technical design/architecture decisions and knowing tradeoffs between tech stack. So I can go beyond being a codemonkey.

8) Gave up and took an ok job outside of America rather than continuing to grind then try to interview into a high tier American company. Doubtful I'd ever manage to break in now, and TC would be sweet but am unsure if the rest would work out.

9) Assholes and trolls on stackoverflow. It sometimes feels quite hard to ask questions now without getting closed, marked as dupe, or downvoted without explanation.

-

Imagine a web way ahead of our time where its size goes beyond our imagination...

This is my first rant, and I'll cut to the chase! I don't like how the web currently stands. Here's what makes me angry the most, although I know there's a myriad of solutions or workarounds:

- A gazillion credentials/accounts/services in your lifetime.

- Everyone tries to reinvent the wheel.

- There's no single source of truth.

- Why the fuck there's so much design in a vision that started as a network of documents? Why is it that we need to spend time and energy to absorb the page design before we can read what we are after?

- What's up with the JS front end frameworks?! MBs of code I need to download on every page I visit, and the worst is the evaluation/parsing of it. Talk about accessibility and the energy bills. I don't freaking need a SPA, just give me a 20-50ms page load and I'm good to go!

- I understand that there's a whole market based on it but do we really need all that developer tools and services?

- Where's our privacy by the way? Why the fuck do I need ads? Can't I have a clue about what I want to buy?

Sticking with these points for now... Got plenty more to discuss though.

What I would like to see:

A unique account where I can subscribe to services/forums/whatever. No credentials. Credentials should be on your hardware or OS. Desktop browser and mobile versions sync everything seamlessly. Something like OpenID.

Each person has his account and a profile associated where I share only what I want with whom I want when I want to.

Sharing stuff individually with someone is easy and secure.

There's no more email system like we know. Email should be just email like it started to be. Why the hell are we allowing companies to send us so much freaking "look at me now, we are awesome", "hey hey buy from me".. Here's an idea, only humans should send emails. Any new email address that sends you an email automatically requests your "permission" to communicate with you. Like a friend request.

Oh by the way did I tell you that static mail is too old for us? What we need is dynamic email. Editing documents on the fly, together, realtime, on the freaking email. Better than mail, slack and google docs combined.

In order for that to work reasonably well, the individual "letter" communication would have to be revamped in a new modern approach.

What about the single source of truth I talked about? Well, here's what we should do. Wikipedia (community) and Larry Page (concept) gave us tremendous help. We just need to do better now.

Take the spirit of Wikipedia and the discoverability that a good search engine provides us and amp that to a bigger scale. A global encyclopedia about everything known to mankind. Content could be curated by us all, just like a true network.

In this new web, new browser or whatever needed to make this happen I could save whatever I want, notes, files, pictures... and have it as I left it from device to device.

Oh please make web simple again, not easy just simple and bigger.

I'm not old by the way and I don't see a problem with being older btw.

Those are just my stupid rants and ideas. They are worth nothing. What I know for sure is that I'll do something about it or fail trying to.

-

As I'm on a research/algorithm improvement project at work I'm working pretty much independently. As such I've set up an automated test framework and writing tests for any piece of code I touch.

Today as I was fixing a bug in production area I was demoing my tests to CTO and principal design engineer. They come from a hardware background and have pushed back against automated tests in the past but they were interested in what I was doing.

I WILL DRAG THEM KICKING AND FUCKING SCREAMING INTO THE WORLD OF AUTOMATED TESTS.

-

So today my company was removing most workspaces with USB 2 connections, DP cables and magsafe 2 power cables. This means that my MBP mid 2014 can't connect to the keyboard and monitors anymore. It already struggled with 4K, so my 2K options were already limited, but now the last few spots are mostly gone. In short: I'm being forced to upgrade.

But tell you what: I don't want to. It feels like a waste to recycle my laptop (even if it's company paid and owned) while it's perfectly acceptably fine. And mind that I will get the latest and greatest i9 for free. Yes, that overheating, throttling failure of hardware design piece of shit. 2 coworkers already own the beast and confirm that it gets really hot really quickly. One of them even has daily crashes (the laptop just turns off) and random reboots. A total waste of money. And my future time. As if it's not enough work to migrate to a new laptop (even with Time Machine).

So, fellow ranters, what do I do? I hope I can leverage the second best MBP (CPU-wise) from this situation, unless there already is a bunch of i9s in the office ready to be used. I really, really don't want one. And I think my current computer is great for what it is, even if it's old. It's a really pro machine for my needs (I'm very efficient, except for Android Studio).

I even consider asking for a Linux machine, but then a whole new world opens to me that may be a step too big (since I barely have hands-on experience).

Enlighten me with your ideas, muggles!

-

My work product: Or why I learned to get twitchy around Java...

I maintain a Java based test system, that tests a raster image processor. The client is a Java swing project that contains CORBA bindings to the internal API of the raster image processor. It also has custom written UI elements and duplicated functionality that became available in later versions of Java, but because some of the third party tools we use don't work with later versions of Java for some reason, it's not possible to upgrade Java to gain things as simple as recursive directory deletion, yes the version of Java we have to use does not support something as simple as that and custom code had to be written to support it.

Because of the requirement to build the API bindings along with the client the whole application must be built with the raster image processor build chain, which is a heavily customised jam build system. So an ant task calls out to execute a jam task and jam does about 90% of the heavy lifting.

In addition to the Java code there's code for interpreting PostScript files, as these can be used to alter the behaviour of the raster image processor during testing.

As if that weren't enough, there's a beanshell interface to allow users to script the test system, but none of the users know Java well enough to feel confident writing interpreted Java scripts (and that's too close to JavaScript for my comfort). I once tried swapping this out for the Rhino JavaScript interpreter and got all the verbal support in the world but no developer time to design an API that'd work for all the departments.

The server isn't much better though. It's a tomcat based application that was written by someone who had never built a tomcat application before, or any web application for that matter and uses raw SQL strings instead of an orm, it doesn't use MVC in any way, and insane amount of functionality is dumped into the jsp files.

It too interacts with a raster image processor to create difference masks of the output, running PostScript as needed. It spawns off multiple threads and can spend days processing hundreds of gigabytes of image output (depending on the size of the tests).

We're stuck on Tomcat seven because we can't upgrade beyond Java 6, which brings a whole manner of security issues, but that eager little Java updater will break the tool chain if it gets its way.

Between these two components we have the Java RMI server (sometimes) working to help generate image data on the client side before all images are pulled across a UNC network path onto the server that processes test jobs (in PDF format), by reading into the xref table of said PDF, finding the embedded image data (for our server consumed test files are just flate encoded TIFF files wrapped around just enough PDF to make them valid) and uses a tool to create a difference mask of two images.

This tool is very error prone, it can't difference images of different sizes, colour spaces, orientations or pixel depths, but it's the best we have.
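For readers unfamiliar with the idea, a difference mask can be sketched in a few lines. This is only an illustration of the concept, not the tool described above: the `Image` type (rows of 8-bit grey values), the `difference_mask` function and its `tolerance` parameter are all made up for the example. It refuses mismatched sizes, which is the same kind of restriction the rant complains about.

```python
from typing import List

# Hypothetical representation: an image is a list of rows of 8-bit grey values.
Image = List[List[int]]


def difference_mask(a: Image, b: Image, tolerance: int = 0) -> Image:
    """Return a mask holding 1 where pixels differ by more than `tolerance`."""
    if len(a) != len(b) or any(len(ra) != len(rb) for ra, rb in zip(a, b)):
        # No resizing, rotation or colour conversion is attempted here.
        raise ValueError("images must have identical dimensions")
    return [
        [1 if abs(pa - pb) > tolerance else 0 for pa, pb in zip(ra, rb)]
        for ra, rb in zip(a, b)
    ]


reference = [[0, 0, 255], [10, 20, 30]]
candidate = [[0, 5, 255], [10, 20, 90]]
mask = difference_mask(reference, candidate, tolerance=4)
```

A real tool would also have to deal with colour spaces and pixel depths, which is presumably where the error-proneness comes from.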

The tool is installed on both the client and the server. If the client can generate images, it'll ask the server which ones it needs to, and if it can't, the server will use the tool itself.

Our shells have custom profiles for linking to a whole manner of third party tools and libraries, including a link to visual studio 2005 (more indirectly related build dependencies), the whole profile has to ensure that absolutely no operating system pollution gets into the shell, most of our apps are installed in our home directories and we have to ensure our paths are correct for every single application we add.

And... Fucking and!

Most of the tools are stored as source bundles in a version control system... Not git or Mercurial, not Perforce or SVN, not even CVS... They use a custom built version control system that is built on top of RCS. It keeps a central database of locked files (using soft and hard locks along with write protecting the files in the file system) to ensure users can't get merge conflicts by preventing other users from writing to the files at all.
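The lock-before-edit model is easy to picture with a toy registry. This is a rough sketch under my own assumptions, not how the in-house system works: the `LockRegistry` class and its `checkout`/`checkin` methods are hypothetical names, and the soft/hard lock distinction and file-system write protection are not modelled at all.

```python
class LockRegistry:
    """Toy central database of file locks: one writer per file at a time."""

    def __init__(self) -> None:
        self._locks = {}  # maps file path -> user holding the lock

    def checkout(self, path: str, user: str) -> None:
        """Take the lock on `path`, failing if someone else already holds it."""
        holder = self._locks.get(path)
        if holder is not None and holder != user:
            raise PermissionError(f"{path} is locked by {holder}")
        self._locks[path] = user

    def checkin(self, path: str, user: str) -> None:
        """Release the lock; only the current holder may do so."""
        if self._locks.get(path) != user:
            raise PermissionError(f"{user} does not hold the lock on {path}")
        del self._locks[path]


registry = LockRegistry()
registry.checkout("src/rip.c", "alice")
try:
    registry.checkout("src/rip.c", "bob")
    bob_blocked = False
except PermissionError:
    bob_blocked = True  # bob cannot write until alice checks the file in
registry.checkin("src/rip.c", "alice")
registry.checkout("src/rip.c", "bob")
```

Nobody can ever hit a merge conflict this way, at the price of everyone else waiting for the lock holder to finish.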

Branching is heavy weight and can take the best part of a day to create a new branch and populate the history.

Gathering the tools alone to build the Dev environment to build my project takes the best part of a week.

What should be a joy come hardware refresh year becomes a curse ("Well fuck, now I lose a week setting up the Dev environment on ANOTHER machine").

Needless to say, I enjoy NOT working with Java. A lot of this isn't Java's fault, but there's a lot of things that Java (specifically the Java 6 version we're stuck on) does not make easy.

This is why I prefer to build my web apps in python or node, hell, I'd even take Lua... Just... Compiling web pages into executable Java classes, why? I mean I understand the implementation of how this happens, but why did my predecessor have to choose this? Why?

-

Was watching the Google live event. Must confess that I'm highly disappointed with the looks of the Pixel 2. I guess 2017 is just not the year for Google! I mean I love the software improvements, but let's admit that in spite of us being software devs, we ourselves love good design! The Pixel 2 just does not make the cut according to me. It looks like a brick. Google does need some hardware devs!

-

What's the point of doing estimates per quarter if you are gonna change the estimates to projects that are being worked on to match the release date?

Also doing estimates per quarter before doing investigations on the requirements is a fucking shit way to do estimates. Arguably doing it per quarter is also trash.

We are not doing hardware design for fuck sake, we work on software, you bunch of retards.

-

Hey Linux users!

I have successfully convinced a friend to change from MacOS to a Linux based system (because she needs new hardware).

Now I am asking myself which distribution would be most qualified for her. She is a relatively old lady and only knows Mac (no Windows or Linux knowledge), so it should be easier for her if the new system would look similar to the Mac environment she knows. (Using console is no problem.)

Another point is compatibility: She needs some (commercial) software (like GitKraken and design stuff), so it would be cool if the Linux versions of them would work on the distro (for one or two programmes Wine is needed).

After my own research I came up with Elementary OS or Gmac.

Because I have no experience with Mac I want to ask you: Does anyone here have experience with these two systems and/or with a change from Mac to Linux, and could you recommend a distribution or desktop environment?

Thank you!

-

Hardware classes for software dev student?

Hey guys. Currently getting into second year of a 5 year curriculum to get an 'Integrated Master of Computer Engineering & Informatics' Degree here in Greece.

I'm already into software, I'm fooling around with java, go and php, making some games, web services and anything I find interesting in general. Recently, with the logic design class, I started liking hardware stuff (I didn't really like them before).

We're getting to a point where we might have to decide between picking hardware-centered or software centered subjects. I'm thinking that I can probably learn whatever is taught on the software side by myself (with a bit more studying of course), whereas hardware would be more difficult to study alone.

That said, I'm considering picking hardware, but I am skeptical. What do you think? I'll certainly miss out on the concurrent processing, data structure and how-a-compiler-works classes.

What do you think?

P.S. University here is free

-

So I ran into a perplexing "issue" today at work and I'm hoping some of you here have had experience with this. I got a story-time from my coworker about the early days of my company's product that I work on and heard about why I was running into so much code that appeared to be written hastily (cause it was). Turns out during the hardware bring-up phase, they were moving so fast they had to turn on all sorts of low level drivers and get them working in the system within a matter of days, just to keep up with the hardware team. Now keep in mind, these aren't "trivial" peripherals like a UART. Apparently the Ethernet driver had a grand total of a week to go from nothing to something communicating.

Now, I'm a completely self-taught embedded systems focused software engineer and got to where I am simply cause I freaking love embedded systems. It's the best. BUT, the path I took involved focusing on quality over quantity, simply because I learned very quickly that if I did not take the time to think about what I was doing, I would screw myself over. My entire motto in life is something to the effect of "If I'm going to do it, I'm going to do it to the best of my abilities." As such, I tend to be one of the more forward thinking engineers on my team despite my relatively small amount of professional experience (essentially I screwed myself over on my projects waaaay too often in the past years and learned from it).

But what I learned today slightly terrifies me and took me aback. I know full well that there is going to come a point in my career where I do not have the time to produce quality code and really think about what I am designing....and yet it STILL has to work. I'm even in the aerospace field where safety is critical! I had not even considered that to be a possibility. Ideally I would like to prepare now so that I can be effective when that time does come...Have any of you been on the other side of this? What was it like? How can I grow now to be better prepared and provide value to my company when those situations come about? I know this is going to be extremely uncomfortable for me, but c'est la vie.

TLDR: I'm personally driven to produce quality code, but heard a horror story today about having to produce tons of safety-critical code in a short time without time for design. Ensue existential crisis. Help! Suggestions for growth?!

Edit: Just so I'm clear, the code base is good. We do extensive testing (for lots of reasons), but it just wasn't up to my "personal standards".

-

Dude in my Calc 2 class just bitched about iPhones having "shitty software" referencing that bug from around ~6 years ago, when a specific iMessage text would reboot your phone. IMO, 99% of what Apple does well is software. UI is subjective, but final cut pro is unbelievable in terms of functionality for its price, their software is so well optimized that iPhones have been able to use comparably tiny batteries and still compete. They are consistent throughout their company with software design, while companies like Google are so stratified it took years before their material design had been implemented in all their services, there are still a few that aren't (not to mention the meme of Google killing off all their projects). I hate tablets, but the iPad pro has the best software/hardware implementation of any I've ever seen. Apple's interconnectivity between devices is unbelievable, whether it's Continuity features or the setup process just recognizing group devices around and pulling data to create consistent account info and saving you taps. Siri is shit, but apart from that their software isn't bad enough that you should complain about that instead of...

Their Macs are fucking pressure-cookers, and their fuckin marketing department is like a different company altogether, and their anti-fix-it-yourself policies are so user hostile that they're toe-to-toe with being as abusive to customers as Oracle.

TL;DR the biggest scam Apple has pulled off is not that the sheep still think Android and PC users are living in 2010, but they've convinced the sheep that they know what shitty software is. At that point they're too many levels deep and there is no red-pill strong enough for them.

-

Anytime I operate with hardware RAIDs on prod servers, I still sweat from the nerves of sending a wrong command that would wipe the RAID metadata clean and make all the data disappear.

Doesn't help that the CLI tools (MegaCli / StorCli) are both kinda terrible. The former has terrible documentation / switch design and the latter cannot do everything the former can...

-

Design in Motion: Real-Time Rendering's Impact on Architecture

Architecture, a discipline that once relied heavily on blueprints, models, and lengthy render times, has undergone a revolutionary transformation in recent years. The advent of real-time rendering technology has fundamentally altered the way architects visualize, present, and interact with their designs. This paradigm shift has not only enhanced the creative process but has also empowered architects to make more informed decisions and create immersive experiences for clients and stakeholders.

Real-time rendering, a technological marvel that harnesses the power of high-performance graphics hardware and advanced software algorithms, allows architects to generate photorealistic visualizations of their designs in a matter of milliseconds. Gone are the days of waiting hours or even days for a single rendering to complete. This acceleration in rendering time has not only expedited the design process but has also encouraged architects to explore multiple design iterations rapidly.

One of the most significant impacts of real-time rendering on architecture is the ability to visualize a design in various lighting conditions and environmental settings. Architects can now instantly switch between daytime and nighttime lighting scenarios, experiment with different materials, and observe how their designs respond to different seasons or weather conditions. This level of dynamic visualization offers insights into how a building's appearance and functionality evolve throughout the day, contributing to more holistic and thoughtful design solutions.

Moreover, real-time rendering has transformed client presentations. Architectural concepts can now be communicated with unprecedented clarity and realism. Clients can virtually walk through spaces, observing intricate details, exploring different angles, and even experiencing the play of light and shadow in real-time. This immersive experience fosters a deeper understanding of the design intent, enabling clients to provide more targeted feedback and make informed decisions.

The impact of real-time rendering on collaboration within architectural teams cannot be overstated. Traditionally, architects and designers would need to wait for a rendering to complete before discussing design changes or improvements. With real-time rendering, team members can make adjustments on the fly, observing the immediate effects of their decisions. This seamless collaboration not only enhances efficiency but also encourages interdisciplinary collaboration as architects, engineers, and other stakeholders can work together in real-time to refine designs.

The integration of virtual reality (VR) and augmented reality (AR) into the architectural workflow is another transformative aspect of real-time rendering. Architects can now create VR environments that allow clients to step inside their designs and explore every nook and cranny. This not only enhances client engagement but also enables architects to identify potential design flaws or spatial issues that might not be apparent in 2D drawings. AR, on the other hand, overlays digital information onto the physical world, facilitating on-site decision-making and construction supervision.

Real-time rendering's impact extends beyond the design phase. It has proven to be a valuable tool for public engagement and community involvement in architectural projects. By creating virtual walkthroughs of proposed structures, architects can offer the public an opportunity to experience the design before construction begins. This transparency fosters a sense of ownership and allows for constructive feedback, contributing to the development of designs that resonate with the community's needs and aspirations.

The environmental implications of real-time rendering are also noteworthy. The ability to visualize designs in various environmental contexts contributes to more sustainable architecture. Architects can assess how natural light interacts with interior spaces, optimizing energy efficiency and reducing the need for artificial lighting during the day.

In conclusion, real-time rendering has ushered in a new era of architectural design, propelling the industry into a realm of dynamic visualization, immersive experiences, and enhanced collaboration. The ability to witness designs in motion, explore different lighting conditions, and interact with virtual environments has redefined how architects approach their craft. From facilitating client presentations to fostering sustainable design solutions, real-time rendering's impact on architecture is profound and multifaceted. As the technology continues to evolve, architects have an unprecedented opportunity to push the boundaries of creativity, efficiency, and sustainability in the built environment.

-

Software or hardware design solutions that are retrofitted for legacy systems. I understand the value of backwards compatibility, but Gah damn!

-

Hunter Kitchen & Bath, LLC

We are a boutique renovation firm that will custom design your kitchen, bath, library, outdoor kitchen or other home project. Come to our brand-new showroom in Bryn Mawr to select from cabinetry, countertops, tile, wood, hardware, & plumbing fixtures.

Address: 1042 W Lancaster Ave, Bryn Mawr, PA 19010

Phone: (484) 872-8801

Work Hours: Mon-Fri: 9:30AM-4:30PM, Sat: 10AM-2PM

rant kitchen remodeling bathroom remodeling kitchen designer kitchen cabinet contractors bath remodeling