Search - "web tracking"

-

I absolutely HATE "web developers" who call you in to fix their FooBar'd mess, yet can't stop themselves from dictating what you should and shouldn't do, especially when they have no idea what they're doing.

So I get called in to a job improving the performance of a Magento site (and let's just say I have no love for Magento for a number of reasons) because this "developer" enabled Redis and expected everything to be lightning fast. Maybe he thought "Redis" was the name of a magical sorcerer living in the server. A master conjurer capable of weaving mystical time-altering spells to inexplicably improve the performance. Who knows?

This guy claims he spent "months" trying to figure out why the website couldn't load faster than 7 seconds at best, and his employer is demanding a resolution so he stops losing conversions. I usually try to avoid Magento because of all the headaches that come with it, but I figured "sure, why not?" I mean, he built the website less than a year ago, so how bad can it really be? Well...let's see how fast you all can facepalm:

1.) The website was built brand new on Magento 1.9.2.4...what? I mean, if this were built a few years back, that would be a different story, but building a fresh Magento website in 2017 in 1.x? I asked him why he did that...his answer absolutely floored me: "because PHP 5.5 was the best choice at the time for speed and performance..." What?!

2.) The ONLY optimization done on the website was Redis cache being enabled. No merged CSS/JS, no use of a CDN, no image optimization, no gzip, no expires rules. Just Redis...

3.) Now, to say the website was poorly coded is an understatement. This wasn't the worst code I've seen, but it was far from acceptable. There was no organization whatsoever. Templates and skin assets were being called from 12 different locations on the server, making tracking down a snippet to fix downright annoying.

But not only that, the home page itself ran 83 custom database queries to load the products on the page. He said this was so he could load products from several different categories and custom tables to show on the page. I asked him why he didn't just write a few JOIN queries, and he had no idea what I was talking about.

4.) Almost every image on the website was a .PNG file, 2000x2000 px and lossless. The home page alone was 22MB just from images.
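For readers wondering what "a few join queries" would look like here: rather than one query per category, the products for all the needed categories can come back in a single round trip. The sketch below only builds the parameterized SQL string in JavaScript; the table and column names (`catalog_category_product`, `catalog_product_entity`, `category_id`, `product_id`, `entity_id`, `position`, `sku`) are assumptions modeled on a stock Magento 1 schema, and the custom tables from the story are left out.

```javascript
// Build ONE parameterized query that loads products for many categories,
// instead of issuing a separate query per category.
// Table/column names are assumptions based on a stock Magento 1 schema.
function buildCategoryProductsQuery(categoryIds) {
  if (!Array.isArray(categoryIds) || categoryIds.length === 0) {
    throw new Error("need at least one category id");
  }
  // One "?" placeholder per id, so values are bound by the driver, never concatenated.
  const placeholders = categoryIds.map(() => "?").join(", ");
  const sql =
    "SELECT ccp.category_id, p.entity_id, p.sku " +
    "FROM catalog_category_product AS ccp " +
    "JOIN catalog_product_entity AS p ON p.entity_id = ccp.product_id " +
    `WHERE ccp.category_id IN (${placeholders}) ` +
    "ORDER BY ccp.category_id, ccp.position";
  return { sql, params: [...categoryIds] };
}

const q = buildCategoryProductsQuery([3, 7, 12]);
console.log(q.sql);
console.log(q.params); // [ 3, 7, 12 ]
```

Grouping the returned rows by `category_id` afterwards would replace the per-category loop; each custom table would presumably become one more JOIN rather than yet another query.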

There were several other issues, but those 4 should be enough to paint a good picture. The client wanted this all done in a week for less than $500. We laughed. But we agreed on the price, only because of a long relationship and because they had sent us some referrals that got us in the door. But we told them it would get done on our time, not theirs. So I copied the website to our server as a test bed and got to work.

After numerous hours of bug fixes, recoding queries, disabling Redis in favor of a larger InnoDB buffer pool (more on that later), image optimization, JS/CSS/HTML combining, render-unblocking and minification, lazy-loading images, tweaking Magento to work with PHP 7, installing OPcache and setting up basic .htaccess optimizations, we smashed the loading time down to 1.2 seconds total, and most of that time was spent on external JavaScript plugins deemed "necessary". Time to First Byte went from a staggering 2.2 seconds to about 45ms. Needless to say, we kicked its ass.
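The original site had no gzip (item 2 above), and on Apache enabling it is typically one of those basic .htaccess optimizations, via `mod_deflate`. As a rough illustration of why it matters, here is a sketch using Node's built-in `zlib` module on made-up, repetitive markup; real savings depend entirely on the actual assets.

```javascript
const zlib = require("zlib");

// Hypothetical page body: repetitive markup, similar to a product grid.
const html =
  "<ul>" +
  Array.from({ length: 200 }, (_, i) =>
    `<li class="product-item"><a href="/product/${i}">Product ${i}</a></li>`
  ).join("") +
  "</ul>";

const original = Buffer.byteLength(html);
const gzipped = zlib.gzipSync(html).length;

// Round-trip to make sure compression is lossless.
const restored = zlib.gunzipSync(zlib.gzipSync(html)).toString();

console.log(`original: ${original} bytes, gzipped: ${gzipped} bytes`);
console.log(`saved: ${(100 * (1 - gzipped / original)).toFixed(1)}%`);
console.log(`round-trip ok: ${restored === html}`);
```

Images like the 2000x2000 lossless PNGs mentioned above are already compressed, so gzip does little for them; those need resizing and re-encoding instead.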

So I show their developer the changes and he's stunned. He says he'll have the hosting provider set up a new server to migrate the optimized site to and cut over, because taking the live website down for maintenance for even an hour or two in the middle of the night is "unacceptable".

So trying to be cool about it, I tell him I'd be happy to configure the server to the exact specifications needed. He says "we can't do that". I look at him confused. "What do you mean we 'can't'?" He tells me that even though this is a dedicated server, the provider doesn't allow any access other than a jailed shell account and cPanel access. What?! This is a company averaging 3 million+ per year in revenue. Why don't they have an IT manager overseeing everything? Apparently for them, they're too cheap for that, so they went with a "managed dedicated server", "managed" apparently meaning "you only get to use it like a shared host".

So after countless phone calls arguing with the hosting provider, they agree to make our changes. Then the client's developer starts getting nasty out of nowhere. He says my optimizations are not acceptable because I'm not using Redis cache, and now the client is threatening to walk away without paying us.

So I guess the overall message from this rant is not so much about the situation, but the developer and countless others like him that are clueless, but try to speak from a position of authority.

If we as developers stop turning everything into a measuring contest and learn to let go when we need help, we can get a lot more done and stop losing clients. </rant>

-

Job interview goes really well. Senior Dev 90-100k.

Ok, so for your "test", write up a proposal for a web-based bulk email sending system with its own admin panel for building lists, tracking emails, and reporting.

I write up an estimate. Low ball the absolute fuck out of it because I'm trying to get a job. Know a few good libraries I can use to save some time. Figure I can just use sendmail, or PHPMailer, or NodeMailer for the emailing, and DataTables Editor for a simple admin CRUD with reporting. Write the thing up. Tell them they can have it in LAMP or Node.

Come in at 36 hours.

Then these fucking wanks told me they wanted me to actually do the project.

My exact response was:

"I bill $50 an hour, let me know"

They did not let me know.

Young devs, jobless devs, desperate devs: I've seen a fair amount of this. For the right job I might go as high as 4-6 hours of unpaid work for some "programming test". But please be careful. There are those who will try to exploit inexperience or desperation for free work.

-

What kind of supercomputer you have to use to get these fucking websites to work smoothly????

I'm on a fucking gigabit connection, Ryzen 7 7700X, 32GB RAM, and a fucking NVMe drive, and all it takes is opening a fucking recipe site and I'm instantly transported back to the 80s. I swear if I see another 4K asset I'm gonna punch something.

WHAT THE FUCK HAPPENED TO FUNCTION OVER FORM????

Oh, do you want me to disable my adblocker??? How about: you make a site that works, you fuck. No, I will not fucking subscribe to your brain-dead newsletter, why the fuck would I???

And since when are cookies needed for a fucking plaintext site you asshat??? Tracking??? I swear if you could you would generate metadata from my clipped fingernails if it meant you could stick "Big data" next to that zip-bomb you call a website.

I WOULD like to read your article, possibly even watch a couple of ads on my sidebar for you, but noooooo you had to have the stupid fucking google vinegrette or however the fuck they are calling the fucking thing now.

The age of the web sucks the happiness out of life, and despite having all of this processing power, I am jealous of my father's RSS feeds.

I'm sorry web people, I know it's not your fault, I know designers and management don't give a shit how long a website takes to load. I just wanted to make a fucking omelette.

-

I got arrested multiple times under cybercrime laws...

Yeah, so what if I did? Why is it a problem that I take down CP sites? "Because it's partaking in cyber warfare." Well then, the police and the federal government should do their job and keep such content off the web. Now, whenever I get a job, I have to disclose it because of the judge's final ruling. And not just that: whenever I have to talk to a police officer who has seen my record, all they can think to do is escalate.

What fantastic horse crap! You get arrested for tracking down child molesters and taking them off the web exclusively...

Some say I'm a social justice warrior, only I don't think that I am. I reckon I am merely an overly eccentric programmer who wants to see the real criminals go to jail.

-

Would the web be better off if there were zero front-end scripting? There would be HTML5 video/audio, but zero client-side JS.

Browsers wouldn't understand script tags, they wouldn't have javascript engines, and they wouldn't have to worry about new standards and deprecations.

Browsers would be MUCH more secure, and use way less memory and CPU resources.

What would we really be missing?

If you built less bloated pages, you wouldn't really need Ajax calls; page reloads would be cheap. Animated menus don't add anything functionally, and could be done in CSS as well. Complicated web apps... well, maybe those should just be desktop/mobile apps.

Pages would contain less annoying elements, no tracking or crypto mining scripts, no mouse tracking, no exploitative spam alerts.

Why don't we just deprecate JS in the browser, completely?

I think it would be worth it.

-

A big FUCK YOU to Chrome, and a big FUCK YOU to Google in general. First the hell that is code.org, then Chrome. I genuinely want to open a dictionary in Google to see if the word "privacy" is in there. Sure, first it was tracking users by making them agree to a long-ass TOS no one wants to read except lawyers, then barely giving any info and asking for consent with YOUR data, but this is too far.

For all of you who don't know, LanSchool is an application that allows teachers to see students' screens, internet history and more. It's the reason kids can't play games in English class. But most importantly, it's a Chrome extension. We have to do assignments from home, right? So when we log on to the school account from home, LANSCHOOL GETS DOWNLOADED AND TRACKS EVERYTHING I DO. It pains me how teachers can view so much information unfairly because of some unknowing students; my friend's privacy was unfairly in the hands of Google and the school system. Right when I found out about it (~2 mins after I first logged on), I made an Ubuntu VM just for goddamn Google Docs.

Back to my friend: he went on some websites not considered appropriate, and got in huge trouble. He was completely unaware that they could see his screen, and I resent Google for allowing a third party to manipulate my PERSONAL COMPUTER without my consent. Die, Google. You ruined Android, which had so much potential, and now the web and virtual privacy. You should be <strike>ashamed</strike> dead, and I hope in the future you realize that one day people will have common sense.

-

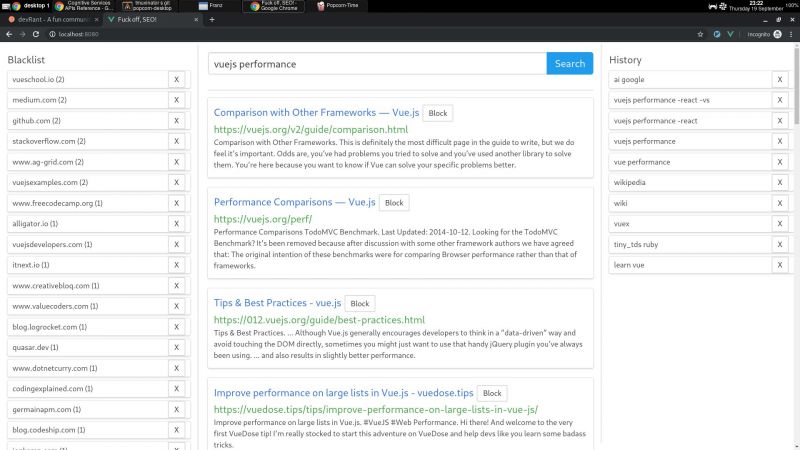

I wrote a Node + Vue web app that consumes the Bing API and lets you block specific hosts with a click, and I have some thoughts I need to post somewhere.

My main motivation for this is that the search results I've been getting from the big search engines are lacking a lot in quality. The SEO situation right now is very complex, but the bottom line is that there is a lot of white hat SEO abuse.

Commercial companies are fucking up the internet very hard. Search results have become way too profit-oriented and thus not neutral. Personal blogs are becoming very rare. Information is losing quality and sites are losing identity. The internet is consolidating.

So, I decided to write something to help me give this situation the middle finger.

I wrote this because I consider the ability to block specific sites a basic universal right. If you were ripped off by a website or you just don't like it, then you should be able to block said site from your search results. It's not rocket science.
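Filtering after the fact boils down to comparing each result's hostname against a block list. A minimal sketch in JavaScript; the `{ url, title }` result shape is an assumption rather than Bing's actual response format, and blocking a domain here also blocks its subdomains.

```javascript
// True if `hostname` equals a blocked domain or is a subdomain of it.
function isBlocked(hostname, blockedDomains) {
  const host = hostname.toLowerCase();
  return blockedDomains.some((domain) => {
    const d = domain.toLowerCase();
    // The leading dot keeps "notcontentfarm.example" from matching "contentfarm.example".
    return host === d || host.endsWith("." + d);
  });
}

// Drop every result whose URL points at a blocked host.
// Results are assumed to look like { url, title }.
function filterResults(results, blockedDomains) {
  return results.filter((r) => {
    let hostname;
    try {
      hostname = new URL(r.url).hostname;
    } catch {
      return false; // skip malformed URLs
    }
    return !isBlocked(hostname, blockedDomains);
  });
}

const results = [
  { url: "https://www.contentfarm.example/top-10", title: "Top 10" },
  { url: "https://blog.friend.example/post", title: "A real blog" },
  { url: "https://contentfarm.example/more", title: "More spam" },
];
console.log(filterResults(results, ["contentfarm.example"]).map((r) => r.title));
// [ 'A real blog' ]
```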

Google used to have this feature integrated but they removed it in 2013. They also had an extension that did this client side, but they removed it in 2018 too. We're years past the time where Google forgot their "Don't be evil" motto.

AFAIK, the only search engine on earth that lets you block sites is millionshort.com, but if you block too many sites, the performance degrades. And the company that runs it is a for profit too.

There is a third party extension that blocks sites called uBlacklist. The problem is that it only works on google. I wrote my app so as to escape google's tracking clutches, ads and their annoying products showing up in between my results.

But aside from that, uBlacklist does the same thing as my app, including the limitation that it isn't an actual search engine: it just filters search results after they are generated.

This is far from ideal, because filtering results before they are generated would be much preferable.

But building a search engine, which means both indexing and ranking pages, is prohibitively expensive for a single person. Which is sad, but I can't do much about it.

I'm also thinking of implementing the ability to promote certain sites, the opposite of blocking, so these promoted sites would get more priority within the results.

I guess I would have to move the promoted sites from all the pages I fetched to the first page(s), but client side.
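Moving promoted sites to the front of the already fetched results amounts to a stable partition: promoted results first, everything else after, with each group keeping the search engine's original order. A sketch assuming results shaped like `{ url, title }` with well-formed URLs (not Bing's actual format); it only reorders what was fetched and does not touch the ranking itself.

```javascript
// Stable partition: promoted results first, the rest after,
// each group keeping the order the search engine returned.
function promoteResults(results, promotedDomains) {
  const domains = promotedDomains.map((d) => d.toLowerCase());
  const isPromoted = (r) => {
    const host = new URL(r.url).hostname.toLowerCase();
    return domains.some((d) => host === d || host.endsWith("." + d));
  };
  const promoted = [];
  const rest = [];
  for (const r of results) {
    (isPromoted(r) ? promoted : rest).push(r);
  }
  return promoted.concat(rest);
}

// Results merged from several fetched pages (hypothetical data).
const merged = [
  { url: "https://bigsite.example/a", title: "A" },
  { url: "https://smallblog.example/b", title: "B" },
  { url: "https://bigsite.example/c", title: "C" },
  { url: "https://docs.smallblog.example/d", title: "D" },
];
console.log(promoteResults(merged, ["smallblog.example"]).map((r) => r.title));
// [ 'B', 'D', 'A', 'C' ]
```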

But this is suboptimal compared to having actual access to the rank algorithm, where you could promote sites in a smarter way, but again, I can't build a search engine by myself.

I'm using Mongo to cache the results, so with a click of a button I can retrieve the results of a previous query without hitting Bing. So far, with a couple of queries cached, there haven't been noticeable performance or storage issues.
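The caching idea reduced to its core: normalize the query, use it as the key, and only call the API on a miss. This sketch uses an in-memory `Map` as a stand-in for the MongoDB collection; `fetchFromApi` is a hypothetical placeholder for the Bing call, and the one-day expiry is an arbitrary assumption.

```javascript
// In-memory stand-in for a MongoDB cache collection.
class SearchCache {
  constructor(fetchFromApi, ttlMs = 24 * 60 * 60 * 1000) {
    this.fetchFromApi = fetchFromApi; // hypothetical: (query) => Promise<results>
    this.ttlMs = ttlMs;
    this.entries = new Map(); // normalized query -> { results, storedAt }
    this.apiCalls = 0;
  }

  // "Rust  Async" and "rust async" should hit the same entry.
  static normalize(query) {
    return query.trim().toLowerCase().replace(/\s+/g, " ");
  }

  async search(query, now = Date.now()) {
    const key = SearchCache.normalize(query);
    const hit = this.entries.get(key);
    if (hit && now - hit.storedAt < this.ttlMs) {
      return hit.results; // served from cache, no API call
    }
    this.apiCalls += 1;
    const results = await this.fetchFromApi(key);
    this.entries.set(key, { results, storedAt: now });
    return results;
  }
}

// Usage with a fake API function.
const cache = new SearchCache(async (q) => [{ url: "https://example.com", title: q }]);
(async () => {
  await cache.search("Rust  Async");
  await cache.search("rust async");
  console.log(cache.apiCalls); // 1
})();
```

With MongoDB the same shape would map to one document per normalized query plus a timestamp field.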

On using Bing: Bing is basically the only reliable API option I could find that was affordable for a hobby project. Most Microsoft products are usually my last choice.

Bing is giving me a 7-day free trial of their search API until I register a CC. They offer a free tier, but I'm not sure if that's only for these 7 days. Otherwise, I'm gonna need to pay like $5.

Paying or not, having to use a CC to use this software I wrote sucks balls.

So far the usage of this app has resulted in me becoming more critical of sites and finding sites of better quality. I think overall it helps me to become a better programmer, all the while having better protection of my privacy.

One downside is that I'm the only one doing the curating, whereas I could benefit from the block/promote lists of other people I trust.

I will git push it somewhere at some point, but it does require some more work:

I would want to add a docker-compose script to make it easy to start, and I didn't write any tests unfortunately (I did use eslint for both apps, though).

The performance is not excellent (the app has not experienced blocks so far, but it does make the coolers spin after a bit) because the algorithms I wrote were very POC.

But it took me some time to write it, and I need to catch some breath.

There are other more open efforts that seem to be more ethical, but they are usually hard to use or just incomplete.

commoncrawl.org is a free index of the web. One problem I found is that it doesn't seem to index everything (for example, it doesn't seem to index a friend's blog that has been running for years and is indexed by Google).

It also requires knowing how to read WARC files, which will surely take some time to learn.

It also seems kinda slow to respond.

It is also generated only once a month, and I would still have little idea how to implement a PageRank algorithm, let alone code it.
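For reference, the textbook PageRank computation itself is short once a link graph exists; crawling and storing the graph is the hard part. A minimal power-iteration sketch in JavaScript with the commonly cited damping factor of 0.85; the graph is made up, and pages with no outgoing links spread their rank evenly over all pages, which is one common convention among several.

```javascript
// PageRank by power iteration.
// graph: { page: [pages it links to] }; every linked page must also be a key.
function pageRank(graph, damping = 0.85, maxIterations = 100, tolerance = 1e-10) {
  const pages = Object.keys(graph);
  const n = pages.length;
  let rank = Object.fromEntries(pages.map((p) => [p, 1 / n]));

  for (let it = 0; it < maxIterations; it++) {
    // Teleport term: with probability (1 - d) the surfer jumps to a random page.
    const next = Object.fromEntries(pages.map((p) => [p, (1 - damping) / n]));
    let danglingRank = 0;
    for (const p of pages) {
      const out = graph[p];
      if (out.length === 0) {
        danglingRank += rank[p]; // no outlinks: handled below
      } else {
        // Split this page's rank evenly across its outlinks.
        for (const q of out) next[q] += (damping * rank[p]) / out.length;
      }
    }
    // Dangling pages spread their rank evenly over all pages.
    for (const p of pages) next[p] += (damping * danglingRank) / n;

    // Stop early once the ranks barely change.
    const delta = pages.reduce((sum, p) => sum + Math.abs(next[p] - rank[p]), 0);
    rank = next;
    if (delta < tolerance) break;
  }
  return rank; // sums to 1 (up to rounding)
}

const ranks = pageRank({
  a: ["b", "c"],
  b: ["c"],
  c: ["a"],
  d: ["c"],
});
console.log(ranks); // c ends up highest: three pages link to it
```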

-

There you are, fiddling with next.js webpack settings, because your isomorphic JS-in-CSS-in-JS SSR fallback from react-native-web to react-dom throws a runtime error on your SSR prerendering server during isomorphic asynchronous data prefetching from Kubernetes backend-for-frontend edge-server with GraphQL.

You have all that tech to display a landing page with an email form, just to send spam emails with ten tracking links and five tracking beacons per email.

Your product can be replaced by an Excel document made in two days.

It was developed in two years by a team of ten developers crunching every day under twelve project managers that can be replaced with a parrot trained to say “Any updates?”

Your valuation is $5M+. You have 10,000 dependency security warnings, 1000 likes on Product Hunt, 500 comments on Hacker News, and a popular Twitter account.

Your future looks bright. You finish your coffee, crack your knuckles and carry on writing unit tests.

-

me vs marketing guy, again

me: yeah, the database server is not responding, so you cannot log in to post your blog. Wait for it to come back online.

MG: But, the website is online.

me: web host and database server are two distinct things, they are not the same, *share a screenshot of the error*

MG: Oh okay.

Literally 3 hours later this fucking idiot sends an email, and I quote:

"Hi Dev,

@CTO FYI, Someone has removed this code So there is some tracking issue on it.

Please add below google analytics code on the website.

Note: Copy and paste this code as the first item into the <HEAD> of every web page that you want to track. If you already have a Global Site Tag on your page, simply add the config line from the snippet below to your existing Global Site Tag.

<!-- Global site tag (gtag.js) - Google Analytics -->
<script async src="https://googletagmanager.com/gtag/..."></script>
<script>
  window.dataLayer = window.dataLayer || [];
  function gtag(){dataLayer.push(arguments);}
  gtag('js', new Date());
  gtag('config', 'UA-xxxxxxxx-1');
</script>

"

The fucking issue was that he couldn't post his shitty blog, and he sends an email like this, FOR FUCK'S SAKE!

-

I think that two criteria are important:

- don't block my productivity

- author should have his userbase in mind

1) Some simple anti examples:

- Windows popping up a big fat blue screen screaming for updates. Like... Go suck some donkey balls you stupid shit that's totally irritating you arsehole.

- Graphical tools having no UI concept. E.g. Adobe's PDF reader, whose UI was minimalized until it became just unbearable pain. When the concept is to castrate the user in their abilities and call that intuitive, it's not a concept, it's shit. Another example is GEdit, which was severely massacred in Gnome 3 if I remember correctly (never touched Gnome ever again; their concept just alienated me).

- Having a UI concept but no consistency. E.g. a lot of large web apps, especially Atlassian software.

Too many times I've had e.g. a simple HTML form. In menu 1 you could use Enter. In menu 2 Enter does not work. In another menu Enter works, but instead of submitting the form it submits the whole page... which can end in a clusterfuck.

Yaaayyyy.

- Keyboard usage not possible at all.

It's become the sad majority... Pressing Tab doesn't switch between form fields. Looking for keyboard shortcuts, not finding any. Yes, it's a graphical interface. But the charm of 16-bit interfaces (YES. I'm praising DOS interfaces) was that once you memorized the necessary keystrokes... you were faster than lightning. Ever seen e.g. a good pharmacist, receptionist or warehouse clerk at work? Most of their software is completely based on short keystrokes, e.g. for a receptionist at a doctor's office, the ICD code / pharmaceutical search et cetera.

- don't poop rainbows. I mean it.

I love colors. When they make sense. But when I use some software, e.g. netdata, I think an epilepsy warning would be fair. Too. Many. Neon. Colors. -.-

2) It should be obvious... But it's become a burden.

E.g. when asked for a release because there were some fixes... don't point to the install-from-master script. Maybe you like it rolling-release style, but don't enforce it, please. It's hard to use a commit hash as a version number, and shortening the hash might be a bad idea.

Don't start experiments. If it works, don't throw everything overboard without good reason. E.g. my previous example of GEdit: turning a valuable text editor into a minimalistic, unusable piece of crap and calling it a genius idea for the sake of simplicity... Nope. You murdered a successful product.

Gnome 3 felt like a complete experiment, and judging from the news over the last few years it was a rather unsuccessful one... as they gave up quite a few of their ideas.

When doing design stuff or other big changes, make it a community event or at least put up a poll on the GitHub page. Even if it's a small user base, listen to them instead of just randomly fucking them over.

--

One of my favorite projects is a text editor called Kate from KDE.

It has a ton of features; it could even be seen as a small IDE. The reason I love it is because one of the original authors still cares for his creation and... it never failed me. I've been using Kate for over 20 years now, I think... Oo

Another example is the git CLI. It's simple and yet powerful. git add -i, for example, is a thing I really really really love (memorize the keyboard shortcuts and you'll chunk up large commits faster than flash).

Curl. Yes. The (HTTP) download tool. Its author still cares. It's another tool I've been using for 20 years. And it has given me deep insight into how HTTP works, new protocols, and again: it never failed me. It is such a fucking versatile thing. TLS debugging / performance measurements / what the frigging fuck is going on here. Take curl. Find out.

My worst enemies....

Graphical Git clients. I just hate them. Mostly because they fill the niche of explaining things (good) but completely nuke the learning of git (very bad). You can do any git action without understanding what you're doing, and even worse... they encourage bad workflows.

I've seen great devs completely fucking up git and crying because they really had no fucking clue what git actually does. The UI led them down the worst and darkest path imaginable. :(

Atlassian products. On the one hand... They're not total shit. But the mass of bugs and the complete lack of interest of Atlassian towards their customers and the cloud movement.... Ouch. Just ouch.

I had to deal with a lot of completely borked up instances and could trace it back to a bug tracking entry / atlassian, 2 - 3 years old with the comment: vote for this, we'll work on a Bugfix. Go fuck yourself you pisswads.

Microsoft Office / Windows. Oh boy.

I could fill entire days of monologues.

It's bad, hmkay?

XEN.

This is not bad.

This is more like kill it before it lays eggs.

The deeper I got into XEN, the more I wanted to lie in a bathtub full of acid to scrub off the feelings of shame... How could anyone call this good?!?????

-

Those GDPR nag screens are actually more damaging than useful. Nobody has the energy to jump through the hoops all the different sites set up for you to opt out of tracking. Yet you will constantly see those screens if you have opted out.

If you use some privacy extensions that block tracking cookies and stuff, you will keep getting those nag screens, because they have no idea whether you have seen it or not (because of no tracking)
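Why blocking cookies brings the banner back every time: the site's only memory of your choice is usually a consent cookie, so if that cookie is blocked or wiped, every visit looks like the first one. A minimal sketch of that check in JavaScript; the cookie name `consent` and its values are assumptions, real consent tools use their own names and formats.

```javascript
// Parse a raw Cookie header ("a=1; b=2") into an object.
function parseCookies(header = "") {
  const cookies = {};
  for (const part of header.split(";")) {
    const idx = part.indexOf("=");
    if (idx === -1) continue;
    const name = part.slice(0, idx).trim();
    const value = part.slice(idx + 1).trim();
    if (name) cookies[name] = decodeURIComponent(value);
  }
  return cookies;
}

// The banner is shown whenever no recorded choice comes back with the request.
// "consent" is a hypothetical cookie name.
function shouldShowConsentBanner(cookieHeader) {
  const choice = parseCookies(cookieHeader).consent;
  return choice !== "accepted" && choice !== "rejected";
}

console.log(shouldShowConsentBanner("consent=rejected; theme=dark")); // false
console.log(shouldShowConsentBanner("")); // true: cookie blocked, banner again
```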

So browsing the web has become a constant loop of:

1) Search something

2) Deal with nagscreens

3) See the page

4) Go to other page

5) Repeat from step two

I wonder what this will lead to? People are less likely to visit random pages and stick to ones they have account on? Will darknet become more popular? Will somebody design some standard way to get rid of this nagscreen wave?

-

Ladies and gents, it was a 🍺 day, today.

I spent more hours than I care to say today tracking down an issue in our web workflow, even looping in our only web dev to help me debug it from his side. There ended up being multiple bugs, but the most annoying of them was that the JSON data being pulled back was truncated because a certain someone, in their migration script, set their varchar column to a size of 1000 and then proceeded to store a JSON string that was 2800+ characters long.
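What bit them: an oversized value in a `VARCHAR(1000)` column gets cut off or rejected depending on the database and its settings (MySQL, for example, only truncates with a warning when strict SQL mode is off), and a truncated JSON document no longer parses. A small JavaScript sketch that simulates the truncation and adds the length check a save routine could run before writing; the 1000-character limit mirrors the story, the payload is made up.

```javascript
const VARCHAR_LIMIT = 1000; // the column size from the migration

// Simulate what a non-strict database does with an oversized value.
function storeInVarchar(value, limit = VARCHAR_LIMIT) {
  return value.slice(0, limit);
}

// Guard to run before saving: refuse values the column cannot hold.
function assertFits(value, limit = VARCHAR_LIMIT) {
  if (value.length > limit) {
    throw new RangeError(`value is ${value.length} chars, column holds ${limit}`);
  }
  return value;
}

// A JSON payload well over the limit (hypothetical content).
const payload = JSON.stringify({
  items: Array.from({ length: 150 }, (_, i) => ({ id: i, name: `item-${i}` })),
});

const stored = storeInVarchar(payload);
try {
  JSON.parse(stored);
} catch (e) {
  console.log("truncated JSON no longer parses:", e.name); // SyntaxError
}

try {
  assertFits(payload);
} catch (e) {
  console.log(e.message);
}
```

The real fix is of course a column type that fits the data (a `TEXT` or native `JSON` column); the guard just makes the failure loud instead of silent.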

C'mon man!

I got nothing productive done today. Hate, hate, hate days like this!

Beer me.

-

RANT

We use Exact for our time sheets/hour tracking. How it's supposed to work:

- Manager plans my hours in Exact.

- I work those hours on the given projects

== All fine till here ==

But then ... there is a button (don't know the correct translation) "realise" which books the planned hours for me. So I don't have to do it manually.

This simply didn't work!! No one seems to know why not... Not even the guys at Exact.

Since it's web based I opened the developers window and looked for the call behind the button. You would think it would be at least an Ajax call thingy (I'm not completely into JS)

Turns out it's a readable JS function!

It doesn't stop there... It first does all the calculations for what to display, and only at the very end, the fucking end, does it check a setting to decide whether to proceed with the booking or not!!!!

So I found and switched the setting and tried the button again.... Now it fucking works...
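Going only by the description above, the function does all its work first and checks the deciding setting last, so with the setting off the button silently does nothing. A hypothetical JavaScript reconstruction of that pattern next to a guard-clause version that checks the setting first and reports why nothing happens; the names (`allowBooking`, `plannedHours`, the hour records) are invented and are not Exact's actual code.

```javascript
// Hypothetical reconstruction of the pattern: expensive work first, gate last.
function realiseHoursGateLast(plannedHours, settings) {
  const total = plannedHours.reduce((sum, h) => sum + h.hours, 0); // all the calculations...
  const rows = plannedHours.map((h) => `${h.project}: ${h.hours}h`);
  if (!settings.allowBooking) return null; // ...then silently give up
  return { booked: rows, total };
}

// Same logic with a guard clause: check the setting first and say why nothing happens.
function realiseHours(plannedHours, settings) {
  if (!settings.allowBooking) {
    throw new Error("Booking planned hours is disabled in settings");
  }
  const total = plannedHours.reduce((sum, h) => sum + h.hours, 0);
  const rows = plannedHours.map((h) => `${h.project}: ${h.hours}h`);
  return { booked: rows, total };
}

const week = [
  { project: "Website", hours: 24 },
  { project: "Support", hours: 16 },
];
console.log(realiseHoursGateLast(week, { allowBooking: false })); // null, no hint why
console.log(realiseHours(week, { allowBooking: true }).total); // 40
```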

No fucking way I am going to tell Exact what the problem is 😫

-

There's little irritations that happen when working with clients over time that let you know that they're stuck in the past and definitely not the kind of client you want to have long term.

My personal favorite example:

"Can we put an icon that shows the weather on the banner of the website?"

Note: I don't make "websites," information portals, content pieces, etc.

It doesn't matter what type of application it is: time tracking, HR, mortgage application, industrial control system, etc. I don't know why, but every single client I've ever had where I've been saddled with one or more people who have no business being anywhere near the term "stakeholder" asked for this stupid, banal, 1995 web portal fuckery. Their shitty little mushroom stamp contribution wasting everyone's time.

What's worse, they want it to be prominent in the screen real estate. It can't just be a responsibly sized waste of space like the screenshot's top example (from a company whose entire business is weather, no less). No, it has to be the busiest fucking thing in the control space, as in the example inferior.

Or maybe I'm just wrong and people desperately want to know if the sky is going to piss on them if they leave the cave.

Anyone else have a pet peeve in regards to recurrent, pointless functionality?

-

* Developing a new "My pages" NBV offer/order solution for customer

_Thursday

Customer: Are we ready for testing?

Me: Almost, we need to receive the SSL cert and then do a full test run to see if your sales services get the orders correctly. At this point, all orders made via this flow are tagged so they will not be sent to the Sales services. We also still need to implement the tracking to see who has been exposed to what in My Pages.

Customer: Ok, great!

_Friday

Customer: My web team needs these customers to have fake offers on them, to validate the layout and content

Me: Ok, my colleague can fix this by Tuesday - he has all the other things with higher prio from you to complete first

Customer: Ok! Good!

_Sunday

Me: Good news, got the SSL cert installed and have verified the flow from my side. Now you need to verify the full flow from your side.

Customer: Ok! Great! Will do.

_Monday

*quiet*

_Tuesday

Customer: Can you see how things are going? Any good news?

Me: ???

*looks into the system*

WTF!?!

- Have you set this into production on your side? We are not finished with the implementation on our side!

Customer: Oh, sorry - well, it looked fine when we tested with the test links you sent (3 weeks ago)

Me: But did you make a complete test run, and make sure that Sales services got the order?

Customer: Oh, no they didn't receive anything - but we thought that was just because of it being a test link

Me: Seriously - you didn't read what i wrote last Thursday?

Customer: ...

Me: Ok, so what happens if something goes wrong - who gets blamed?

Customer: ...

Me: FML!!!

-

I'll just leave this here:

No tracking, no revenue: Apple's privacy feature costs ad companies millions

https://theguardian.com/technology/...

-

Today I came across a very strange thing, or a coincidence (maybe).

I was working on my predictive analytics project; I had registered on Kaggle (a repository for datasets) a long time ago, and was searching for how to scrape websites, as I couldn't find any relevant dataset. So, while I was searching for ways to scrape a website, suddenly, after visiting a few websites, I got a notification of a new email. And it was from Kaggle, with the subject line

"How to Scrape a Tidy Dataset for Analysis"

Now I don't know how to feel about it. Mixed feelings! It is either a wild coincidence, or Kaggle is tracking all the pages visited by the user. The latter makes more sense. By the way, Kaggle wasn't open in any of the tabs on my browser.

-

As I settled into my armchair with a steaming cup of tea, I thought back to the time I almost lost my heart—and a small fortune—to a smooth-talking scam artist. It all began innocently enough when I joined a dating site after my children encouraged me to put myself out there again. That’s when I met David. With his charming smile and heartfelt messages, he made me feel seen and cherished. We talked for hours about everything—from our favorite books to our dreams of traveling the world. I felt like a teenager again, butterflies in my stomach as we planned our future together.

But soon, the conversation took a troubling turn. David claimed he was stuck overseas due to a sudden medical emergency and needed money to pay for treatment. My heart ached for him, and against my better judgment, I sent him several wire transfers, believing I was helping the love of my life. Weeks passed, and suddenly, the sweet messages turned into silence. It dawned on me that I had been scammed. Just as I was drowning in despair, I heard about a group called Specter Lynx. I reached out, sharing my story with them. They sprang into action, tracking down David’s digital trail and uncovering the web of deceit. With their help, I was able to recover a significant portion of my lost funds. Now, I not only have my money back, but I also have a newfound appreciation for caution—and the strength of community. I often share my story, reminding others that love online can be a double-edged sword, but with a little vigilance, you can find your way back.

-

Please, dear god, is there a browser extension to answer all these shitty cookie/data storage/privacy popups with MY SPECIFIC ANSWER?

As a web dev I understand that websites need cookies, and as a tech company employee I understand that essential cookies as well as functional cookies are okay-ish (most of the time). I just don't want marketing cookies/tracking.

All those extensions just block the popup or block all cookies. This is not what I want!

And why the hell on earth didn't they come up with one single solution for all websites beforehand, so we don't have 6.388.164.341 different popups/bars/notifications/flyouts/drop-ins/overlays???

THIS. IS. JUST. ANNOYING.

Thank you for your attention.

-

personal projects, of course, but let's count the only one that could actually be considered finished and released.

which was a local social network site. i was making and running it for about three years as a replacement for a site that its original admin took down without warning because he got fed up with the community. i loved the community and missed it, so that was my motivation to learn web stack (html, css, php, mysql, js).

first version was done and up in a week, single flat php file, no oop, just ifs. was about 5k lines long and was missing 90% of features, but i got it out and by word of mouth/mail it started gathering the community back.

right as i put it up, i learned about include directive, so i started re-coding it from scratch, and "this time properly", separated into one file per page.

that took about a month, got to about 10k lines of code, with about 30% of planned functionality.

i put it up, and then i learned that php can do objects, so i started another rewrite from scratch. two or three months later, about 15k lines of code, and 60% of the intended functionality.

i put it up, and learned about ajax (which was a pretty new thing since this was 2006), so i started another rewrite, this time not completely from scratch i think.

three months later, final length about 30k lines of code, and 120% of originally intended functionality (since i got some new features ideas along the way).

put it up, was very happy with it, and since i gathered quite a lot of user-generated data already through all of that time, i started seeing patterns, and started to think about some crazy stuff like auto-tagging posts based on their content (tags like positive, negative, angry, sad, family issues, health issues, etc), rewarding users based on auto-detection whether their comments stirred more (and good) discussion, or stifled it, tracking user's mental health and life situation (scale of great to horrible, something like that) based on the analysis of the texts of their posts...

... never got around to that though, missed two months of hosting payments and in that time the admin of the original site put it back up, so i just told people to move back there.

awesome experience, though. worth every second.

to this day probably the project i'm most proud of (which is sad, i suppose) - the final version had its own built-in forum section with proper topics, reply threads, wysiwyg post editor, personal diaries where people could set per-post visibility (everyone, only logged-in users, only my friends), mental health questionnaires that tracked users' results over time and showed them in cool flash charts, a questionnaire editor where users could make their own tests/quizzes, an article section, like/dislike voting on everything, a page-global ajax chat of all users that would stay open in the bottom right corner, hangouts-style, private messages, even a "pointer" system where sending special commands to the chat aimed at a specific user would cause page elements to highlight on their client, meaning if someone asked "how do i do this thing on the page?", i could send that command and the button to the subpage would get highlighted; after they clicked it and the subpage loaded, the next step in the process would get highlighted, with a custom explanation text, etc...
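The "pointer" mechanism described above can be sketched roughly as follows. This is a minimal TypeScript sketch under stated assumptions, not the original site's PHP/JS code: the `/point <user> <elementId> <text>` command syntax, the `PointerGuide` class and the `PageState` map standing in for the DOM are all hypothetical. Every client sees the chat message, but only the targeted user queues the step, and each page load highlights the first queued step whose element exists on that page.

```typescript
// Hypothetical chat command format (an assumption, not the original site's syntax):
//   /point <username> <elementId> <explanation text...>
interface PointerCommand {
  target: string; // user whose page should react
  elementId: string; // id of the page element to highlight
  message: string; // custom explanation shown next to the element
}

// Stand-in for the DOM: element id -> highlight state on the currently loaded page.
type PageState = Map<string, { highlighted: boolean; hint?: string }>;

// Turn a chat line into a pointer command, or null for ordinary chat text.
function parsePointerCommand(line: string): PointerCommand | null {
  const match = /^\/point\s+(\S+)\s+(\S+)\s*(.*)$/.exec(line.trim());
  if (match === null) return null;
  return { target: match[1], elementId: match[2], message: match[3] };
}

// Runs on each client; pointer commands aimed at other users are ignored.
class PointerGuide {
  private readonly currentUser: string;
  private readonly steps: PointerCommand[] = [];

  constructor(currentUser: string) {
    this.currentUser = currentUser;
  }

  // Called for every incoming chat message.
  receive(line: string): void {
    const cmd = parsePointerCommand(line);
    if (cmd !== null && cmd.target === this.currentUser) {
      this.steps.push(cmd);
    }
  }

  // Called after each (sub)page load: highlight the first pending step whose
  // element exists on this page, and drop it from the queue.
  onPageLoad(page: PageState): PointerCommand | null {
    const idx = this.steps.findIndex((step) => page.has(step.elementId));
    if (idx === -1) return null;
    const step = this.steps.splice(idx, 1)[0];
    const el = page.get(step.elementId)!;
    el.highlighted = true;
    el.hint = step.message;
    return step;
  }
}

// Usage: a helper walks "alice" through two pages.
const guide = new PointerGuide("alice");
guide.receive("/point alice nav-diary Open your diary here");
guide.receive("/point alice new-post-btn Then click here to write a post");
guide.receive("/point bob nav-chat this one is for bob");
guide.receive("hello everyone"); // plain chat, not a command

const home: PageState = new Map([["nav-diary", { highlighted: false }]]);
const diary: PageState = new Map([["new-post-btn", { highlighted: false }]]);
console.log(guide.onPageLoad(home)?.elementId); // nav-diary
console.log(guide.onPageLoad(diary)?.elementId); // new-post-btn
```

Keeping the queue on the client means the helper can send the whole walkthrough at once, and a step for a subpage simply waits until that subpage is actually loaded.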

dammit, now i got seriously nostalgic. it was an awesome piece of work, if i may say so. and i wasn't the only one thinking that, since showing the page off landed me my first two or three programming jobs, right out of high school. 10 minutes of small talk, then they asked about my knowledge, i whipped up that site and gave a short walkthrough talking a bit about how the most interesting pieces were implemented, done, hired XD

those were good times, when I still felt like the programmer whiz kid =D

as i said, worth every second, every drop of sweat, every torn hair, several times over, even though "actual net financial profit" was around minus two hundred euro paid for those two or three years of hosting.

Sometimes in our personal projects we write crazy commit messages. I'll post mine because it's a weekend and I hope someone has a well-deserved start. Feel free to post yours: regex out your username, time and hash, and paste them chronologically. ISSA THREAD MY DUDES AND DUDETTES

--

Initialization of NDM in Kotlin

Small changes, wiping drive

Small changes, wiping drive

Lottie, Backdrop contrast and logging in implementation

Added Lotties, added Link variable to Database Manifest

Fixed menu engine, added Smart adapter, indexing, Extra menus on home and Calendar

b4 work

Added branch and few changes

really before work

Merge remote-tracking branch 'origin/master'

really before work 4 sho

Refined Search response

Added Swipe to menus and nested tabs

Added custom tab library

tabs and shh

MORE TIME WASTED ON just 3 files

api and rx

New models new handlers, new static leaky objects xd, a few icons

minor changes

minor changesqwqaweqweweqwe

db db dbbb

Added Reading display and delete function

tryin to add web socket...fail

tryin to add web socket...success

New robust content handler, linked to a web socket. :) happy data-ring lol

A lot of changes, no time to explain

minor fixes ehehhe

Added args and content builder to content id

Converted some fragments into NDMListFragments

dsa

MAjor BiG ChANgEs added Listable interface added refresh and online cache added many stuff

MAjor mAjOr BiG ChANgEs added multiClick block added in-fragment Menu (and handling) added in-fragment list irem click handling

Unformatted some code, added midi handler, new menus, added manifest

Update and Insert (upsert) extension to Listable ArrayList

Test for hymnbook offline changing

Changed menuId from int to key string :) added refresh ...global... :(

Added Scale Gesture Listener

Changed Font and size of titlebar, text selection arg. NEW NEW Readings layout.

minor fix on duplicate readings

added isUserDatabase attribute to hymn database file added markwon to stanza views

Home changes :)

Modular hymn Editing

Home changes :) part 2

Home changes :) part 3

Unified Stanza view

Perfected stanza sharing

Added Summernote!!

minor changes

Another change but from source tree :)))

Added Span Saving

Added Working Quick Access

Added a caption system, well text captions only

Added Stanza view modes...quite stable though

From work changes

JUST a [ush

Touch horizontal needs fix

Return api heruko

Added bible index

Added new settings file

Added settings and new icons

Minor changes to settings

Restored ping

Toggles and Pickers in settings

Added Section Title

Added Publishing Access Panel

Added Some new color changes on restart. When am I going to be tired of adding files :)

Before the confession

Theme Adaptation to views

Before Realm DB

Theme Activity :)

Changes to theme Activity

Changes to theme Activity part 2 mini

Some laptop changes, so you wont know what changed :)

Images...

Rush ourd

Added palette from images

Added lastModified filter

Problem with cache response

works work

Some Improvements, changed calendar recycle view

Tonic Sol-fa Screen Added

Merge Pull

Yes colors

Before leasing out to testers

Working but unformated table

Added Seperators but we have a glithchchchc

Tonic sol-fa nice, dots left, and some extras :)))

Just a nice commit on a good friday.

Just a quickie

I dont know what im committing...

!rant

Frontend people, I need your opinion.

I will soon start building the next version of a rather large project's frontend but I am mostly a backend guy.

It is a custom made web application (PHP, Postgres) that handles all business aspects of a shipping company of about 50 people (trucks, truck free space in shipments for new packages, package tracking via gps on the truck, invoicing, reselling shipping services to other businesses, everything).

The existing frontend is using an ancient version (1.x) of the YUI framework and uses AJAX heavily to refresh the user interface. It's usable, but maintaining and extending it is getting really hard as the project grows larger and larger and more systems are integrated.

So the question is, given this use case, what JS framework do I use and what is a good resource to start learning it?

Google researchers have exposed details of multiple security flaws in the Safari web browser that allowed users' browsing behavior to be tracked.

According to a report, the flaws, which were found in an anti-tracking feature known as Intelligent Tracking Prevention, were first disclosed by Google to Apple in August last year. In a published paper, researchers in Google's cloud team identified five different types of attacks that could have resulted from the vulnerabilities, allowing third parties to obtain "sensitive private information about the user's browsing habits."

Apple rolled out Intelligent Tracking Prevention in 2017, with the specific aim of protecting Safari users from being tracked around the web by advertisers and other third-party cookies.

The analytics guy just sent me updated tracking specs for a web site.

There are two sheets in the file: "Custom Events LATEST" and "Custom Events updated". This is already confusing enough.

One of them has comments like "I'd like this to be amended", but the event specs described are the same as the ones implemented.

I asked him for clarification; it turns out he wants the ones marked in black to be updated, and the ones that don't have any label, he says, don't need to be updated.

This is also a guy who for at least 2 years has been making columns in spreadsheets wider not by just widening them, or merging multiple cells, but by just letting text overflow into other cells.

I do wonder how some people manage to keep a job.

BIG QUESTION TIME:

I want to start a small web-dev project. Basically a website with different gigs like a time tracking app. Maybe extend it in the future with other apps.

First I thought of starting with a CMS (I am quite good with Joomla!) but realized it may hit its limits too soon, and custom extensions are quite a pain with a CMS.

So I had this genius idea of building the frontend with ReactNative, which would give me the opportunity to build for mobile at the same time, and the backend with Python (maybe the Django framework).

Here are my questions:

1) Could this be a good solution or combination? (Considering it is more of a fun project)

2) Does anyone know a good tutorial for ReactNative besides the facebook github tutorial?

TL;DR: need suggestions for a small team: an ALM, or at least requirements, issue and test case tracking.

Okay my team needs some advice.

So the powers that be decided a year or so ago to move our requirements tracking, test case and issue tracking from Word, Excel and Visio to an ALM.. they chose Siemens Polarion for whatever reason, I’m assuming because some divisions use it alongside Teamcenter..

Ohhh and by the way, we’ve been engineering shit perfectly fine with the process we had with Word, Excel and Visio.. it wasn’t any extra work, because we needed to make those documents regardless, and it’s far easier to write the shit in the raw format than fuck around with the mouse and all the config fields on some web app.

ANYWAY, before anyone asks or suggests a process to match the tool, here’s some background info. We are a team of about 10-15, split between mech, elec, and software, with more on the mech and elec side.

But regardless, for each project there is only 1 engineer of each concentration working on it. So one mech, one elec and one software per project/product. Which doesn’t seem like a lot, but it actually works out perfectly. (Although that might be a surprise to most of you)..

ANYWAY... it’s kinda self-managed; we have a manager that directs the project and decides which features go in when, during development and pre-release.

The issue is we hired a guy as a requirements/Polarion secretary (DevOps) who claims to be the expert.. Polarion is taking too long, it’s too slow, and it needs too much config....

We want to switch, but don’t know what to. We don’t wanna create more work for us. We do peer reviews across the entire team. I think we are pseudo-agile/Scrum, but not structured.

I like Jira but it’s not great for true requirements... we get PDFs from OEMs and converting to Word for any ALM sucks.. we use Helix QAC for MISRA compliance so part of me wants to use Helix ALM... Polarion does not support us unless we pay thousands for a “support package”, and I just don’t see the value added. Especially when our “DevOps” secretary is subpar.. plus I don’t believe in DevOps.. no value added for someone who can’t engineer and can only pseudo-direct. Hell, we almost wanna use our interns for requirements tracking/record keeping. We as the engineers know what to do and have been doing shit the old way for decades without issues...

Need suggestions for a small team per project (1 software, 1 elec, 1 mech) but a large team overall across many projects.

Sorry for the long rant.. at the bar.. kinda drunk ranting tbh but do need opinions...

General inquiry and also I guess spreading awareness (for lack of a better category as far as I can tell) considering nothing turned up when I searched for it on here: what do you guys think about Sourcehut?

For those who don't know about it, I find it a great alternative to GitHub and GitLab, considering it uses more federated collaboration methods (mostly email) that are mostly already built into Git and in fact predate pull requests and the like (all while providing a more modern web interface to those traditional utilities than what currently exists), on top of many other cool features (for those who prefer Mercurial, it offers first-class repo support too, and it also has issue tracking, pastebins, CI services, and an equivalent to GitHub Pages over HTTP as well as Gemini, to name a few; it's all on its website: https://sourcehut.org/). It's very new (2019) and currently in public alpha (it seems fairly stable, though), but it will be paid in the future on the main instance (it seems easy enough to self-host, especially compared to GitLab, so I'll probably do that soon). I usually prefer not to have to pay, but considering it seems to be done mostly by 1 guy (who also maintains the infrastructure) and considering how much I like it and everything it stands for, here I actually might 😅

Was working on a record-keeping system for the airport, tracking departures, arrivals and some COVID-19 data

ended up realizing that the stack I had gone with wasn't gonna cut it

Had to port the whole thing to a new web framework after realizing that the one I had gone with made some operations a bit complicated




