Search - "opengl"

-

Microsoft is investing in Git, VSCode, Electron, Github, Bash-on-Windows. Things that decentralize and help prevent lock-in.

Apple is taking away the only universal cross platform graphics system (OpenGL), locking developers into Metal, and taking away our escape keys. -

Me: I know so many languages, I don't need to learn C++ to follow along with this 3D OpenGL tutorial!

Narrator: Oh, but he did need to know C++ to follow along with the 3D OpenGL tutorial. -

Y'all are ranting about Microsoft GitHub when Apple decides to deprecate OpenGL support on macOS.

https://developer.apple.com/macos/...

Gaming on Mac? Pah, Linux is where the fun happens. -

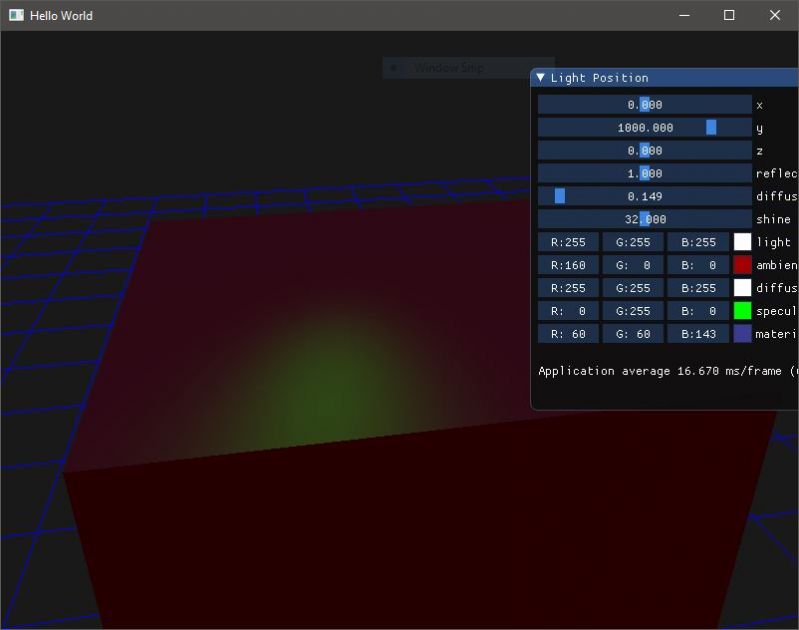

Seeing someone prototype a 3D game with complex lighting using OpenGL in a 15 minute video (It was sped up about 4x but, still, fuck me)

Using C. Not C++.

He also did 3D graphics in BASIC from scratch to explain how they work, generally. -

After almost 3 years of hard work, here’s my bedroom setup. I produce music as a hobby in addition to dev’ing and 3D graphics (Blender/VR/OpenGL) stuff. Sorry for the unmade bed 😅. Built my first custom PC last October:

- I7 7700K

- Asus OC 1080ti

- 32 GB DDR4

- 2 512GB Samsung 960 pro (one for each os)

Feel free to ask about anything else. -

Holy shit! Apple deprecates OpenGL.

How dumb can they be? They really want to be this arrogant kid in the corner that only plays with itself, don't they? -

Still trying to get good.

The requirements are forever shifting, and so do the applied paradigms.

I think the first layer is learning about each paradigm.

You learn 5-10 languages/technologies, get a feeling for procedural/functional/OOP programming. You mess around with some electronics engineering, write a bit of assembly. You write an ugly GTK program, an Android todo app, check how OpenGL works. You learn about relational models, about graph databases, time series storage and key value caches. You learn about networking and protocols. You void the warranty of all the devices in your house at some point. You develop preferences for languages and systems. For certain periods of time, you even become an insufferable fanboy who claims that all databases should be replaced by MongoDB, or all applications should be written in C# -- no exceptions in your mind are possible, because you found the Perfect Thing. Temporarily.

Eventually, you get to the second layer: Instead of being a champion for a single cause, you start to see patterns of applicability.

You might have grown to prefer serverless microservice architectures driven by pub/sub event busses, but realize that some MVC framework is probably more suitable for a 5-employee company. You realize that development is not just about picking the best language and best architecture -- It's about pros and cons for every situation. You start to value consistency over hard rules. You realize that even respected books about computer science can sometimes contain lies -- or represent solutions which are only applicable to "spherical cows in a vacuum".

Then you get to the third layer: Which is about orchestrating migrations between paradigms without creating a bigger mess.

Your company started with a tiny MVC webshop written in PHP. There are now 300 employees and a few million lines of code, the framework more often gets in the way than it helps, the database is terribly strained. Big rewrite? Gradual refactor? Introduce new languages within the company or stick with what people know? Educate people about paradigms which might be more suitable, but which will feel unfamiliar? What leads to a better product, someone who is experienced with PHP, or someone just learning to use Typescript?

All that theoretical knowledge about superior paradigms won't help you now -- No clean slates! You have to build a skyscraper city to replace a swamp village while keeping the economy running, together with builders who have no clue what concrete even looks like. You might think "I'll throw my superior engineering against this, no harm done if it doesn't stick", but 9 out of 10 times that will just end in a mix of concrete rubble, corpses and mud.

I think I'm somewhere between 2 and 3.

I think I have most of the important knowledge about a wide array of languages, technologies and architectures.

I think I know how to come to a conclusion about what to use in which scenario -- most of the time.

But dealing with a giant legacy mess, transforming things into something better, without creating an ugly amalgamation of old and new systems blended together into an even bigger abomination? Nah, I don't think I'm fully there yet. -

I like playing tf2.

I play every video game with max brightness on the lappy.

The problem is that when I alt tab back to anything else, eg chrome, I get dazzled and my eyes hurt.

I'm on linux and accidentally noticed that I can connect to the X server and do stuff.

So I'm listening for events with the PropertyChangeMask, and when the active window has the name "Team Fortress 2 - OpenGL", I run "light -S 100", otherwise I use what I already had.
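The decision step of that hack can be sketched in Python like this. It is only a sketch under assumptions: the X11 event loop (the PropertyChangeMask part) is not shown and is assumed to hand over the active window's name, and `brightness_args`, `on_active_window_changed` and `previous_level` are made-up names. Only the window title and the `light -S 100` command come from the rant.

```python
import subprocess

GAME_TITLE = "Team Fortress 2 - OpenGL"  # window name quoted in the rant


def brightness_args(active_title, previous_level):
    """Return the `light` command line for the currently focused window."""
    # Full brightness for the game, otherwise whatever was set before.
    level = 100 if active_title == GAME_TITLE else previous_level
    return ["light", "-S", str(level)]


def on_active_window_changed(active_title, previous_level, run=subprocess.run):
    """Hypothetical hook, meant to be called from the X11 property-change handler."""
    args = brightness_args(active_title, previous_level)
    run(args, check=False)  # a failing `light` call should not kill the listener
    return args
```

Keeping the decision in a pure function means it can be checked without an X server or a backlight.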

Very happy with this hack, instant brightness changes on alt tab. -

"OpenGL, OpenCL deprecated in favor of Metal 2 in macOS 10.14 Mojave". Another reason I'm never buying another Apple computer.

-

DirectX. Just plainly because DirectX is _used_ and propagated commercially. That lured game developers to it and glued them to Windows.

If I could change something in the past, I'd want to switch Win to Linux as a game platform. There's practically no sane reason to fix yourself to a single platform, especially to Windows. Hell, I'd even go for Mac because it uses OpenGL!

And don't give me that fancy DirectX 12 description you've seen on some Microsoft or "professional" gaming website. DirectX is evil. -

College student.

Had Computer Graphics project to complete with OpenGL and C🙄

Night before submission * eyes are drooping*

How to stop the transition of the character?

Solution: Write an empty function.

😎 Problem solved. -

All the time while I'm programming I hate Java.... Don't hate me now :D I'm learning Java in high school. I really love very fast programming languages such as C and C++, so this is why I don't like Java, but there are some reasons why I like Java. I just started learning how to create my own window. What the heck is this? This is so simple. I tried to create a window in C/C++ with OpenGL, just a blank window with a color. Complicated..... But with Java it's a fairy tale.

You can add me to the Java family now, but remember I also love C++.

So here you are, a Hello World JavaFX app :D

Final goal:

Create a window application similar to Scratch. -

Just installed linux (Ubuntu 16.04.3 LTS x64) because windows update was being a cunt. Instantly, it all fell into place and I got it fully running with minecraft (using the generic driver, but it actually works pretty well, don't worry I will get the proper one tomorrow) and a desktop icon for it within two hours, compared to windows (update) taking 4 days to do barely any updates and not accepting java or graphics drivers, which it requires because fuck opengl with the default drivers.

Fuck windows. Hooray for linux!

Now back to programming...

Thanks for putting up with me but I just need to vent because I felt like I couldn't program (and I didn't) because of FUCKING DOOLALY WINDOWS 8!

Btw thanks to the local charity shop for introducing me to (SUSE) linux when I was like 11, giving me a hope in hell of using linux. I now have around 11 bootable linux disks and 1 bootable flash. -

'yes' in linux shell has become my favourite command when i discovered it. it has a careless touch to it, like "yeah whatever just do the thing".

also, i like glutMainLoop. a saw doll inside my head says "let the game begin!" each time i type this function. -

Fuck android studio updates, fuck OpenGL libraries, fuck nvidia graphics on Linux, fuck it all to hell.

These bullshit updates keep breaking stuff in apps, and then the emulator itself gets broken somehow, then I need to run a bullshit shell script I found on some esoteric Arch Linux forum to symlink OpenGL .so libraries on my Linux machine every time I update because fucking OpenGL and nvidia crappy drivers on Linux, which also broke my xrandr configs and segfaulted the xfce4-display app.

Seriously that's horseshit. Linux will never ever ever break through the glass ceiling and become idiot-proof non-tech user friendly like the botnets Winblows and macPrison. -

Was interested to learn about OpenGL and WebGL. Just found out no fucking drivers exist for my graphics card on Ubuntu.

WTF AMD !!! At least provide some compatibility for your product if I am purchasing it !!! -

Ditched macOS in favour of Fedora.

First thing that I noticed is the increased framerate and more OpenGL extensions in OpenGL Extensions Viewer.

Never imagined a day when the video drivers in Linux would surpass those of macOS. -

So I'm doing some OpenGL stuff in C++, for debugging I've created a macro that basically injects my error check code after every OpenGL API call. Basically I don't want any of the code in release builds but I want it to be in debug. Also it needs to be usable inline and accept any GL function return type. From what I can tell I've satisfied all requirements by making the macro generate a generic lambda that returns the original function call result but also creates a stack object that uses the scope to force my error check after the return statement by using the destructor.

Basically I can do:

Log(gl(GetString(...)));

gl(DeleteShader(...));

Etc where the GL call can be a function parameter or not.

So my question is, is the code shown in the picture the best way to achieve my goals while providing the behaviour I'm going for?
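The picture itself is not part of the text, so the actual macro can't be reproduced here. As a rough Python analogue of the idea (return the call's result, but force an error check after the return value has been decided), `try`/`finally` plays the role that the stack object's destructor plays in the C++ version. Everything named here (`make_gl`, `get_error`, the `DEBUG` switch) is hypothetical, and `get_error` merely stands in for a `glGetError`-style function.

```python
DEBUG = True  # stand-in for a debug-build switch


def make_gl(get_error, debug=DEBUG):
    """Build a gl(...) wrapper; get_error is a hypothetical glGetError-like callable."""
    def gl(func, *args, **kwargs):
        if not debug:
            return func(*args, **kwargs)  # "release": plain call, no checking at all
        try:
            return func(*args, **kwargs)  # the return value is decided here...
        finally:
            code = get_error()            # ...but this still runs before the caller gets it
            if code != 0:
                raise RuntimeError(f"GL error {code:#06x} after {func.__name__}")
    return gl
```

Because the wrapper returns whatever the wrapped call returns, it works both as a statement and inline as an argument, which mirrors the two usages listed above.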

-

One of the coolest projects I've worked on recently was this little adventure game I made for a game jam a while back. It was made from scratch with Golang and C over two days. It also features procedural level generation (that technically should allow the user to walk in one direction for at least 7 decades). -

Discovered pro tip of my life :

Never trust your code

Achievements unlocked :

Successfully running C++ GPU accelerated offscreen rendering engine with texture loading code having faulty validation bug over a year on production for more than 1.5M daily Android active users without any issues.

History : Recently I was writing a new rendering engine that uses our GPU pipeline engine.. and our prototype Android app benchmark test always failed with a black rendering frame detection assertion.

Practice:

Spend more than a month to debug a GPU pipeline system based on directed acyclic graph based rendering algorithm.

New abilities added :

Able to debug OpenGL ES code on Android using print statement placed in source code using binary search.

But why?

I was aware of the issue for over a month and just ignored it, thinking it was a driver bug in my Android device.. but when the API was used by one of our Android devs, he reported the same issue. That same night at 2:59AM ....

Satan came to me and told me that " ok listen man, here is what I am gonna do with you today, your new code will be going production in a week, and the renderer will give you just one black frame after random time, and after today 3AM, your code will not show GL Errors if you debug or trace. Buhahahaha ahhaha haahha..... Puffff"

And he was gone..

Thanks satan for not killing me.. I will not trust stable production code anymore even though every line is documented and peer reviewed. -

Me: oh cool, using OpenGL and GLFW makes it nice and easy to draw a triangle! Might look into using GLEW to start making things a bit more cross platform..

* 48 hours later *

Me: Oh joy, of course everyone uses fucking visual studio, why can't people just offer tutorials or documentation for people using meson or you know... literally anything else that isn't visual studio!

It's fairly easy for me to port C++ to C with my limited knowledge but fuck me am I sick of documentation and articles always targeting a single method... -

Is it just me or does it seem like everyone is "making their own game" but they mean they got opengl to render a triangle.

-

In a class i attended we learned about advanced image synthesis and had to write OpenGL in the exercises.

It was incredibly difficult and i put incredible amounts of time into it and solved the tasks and was incredibly happy when it started to work ☺️ -

Protip: always account for endianness when using a library that does hardware access, like SDL or OpenGL :/

I spent an hour trying to figure out why the fucking renderer was rendering everything in shades of pink instead of white -_-

(It actually looked kinda pretty, though...)

I'd used the pixel format corresponding to the wrong endianness, so the GPU was getting data in the wrong order.

(For those interested: use SDL_PIXELFORMAT_RGBA32 as the pixel type, not the more "obvious" SDL_PIXELFORMAT_RGBA8888 when making a custom streaming SDL_Texture) -
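Why the two format names differ can be shown without SDL at all. In SDL2, `SDL_PIXELFORMAT_RGBA8888` describes a packed 32-bit value with R in the top bits, while `SDL_PIXELFORMAT_RGBA32` is an alias for whichever packed format gives R, G, B, A byte order in memory on the current machine. The Python sketch below (the helper name `rgba8888_memory_bytes` is made up) packs one pixel the "RGBA8888" way and shows how it lands in memory on each kind of machine:

```python
def rgba8888_memory_bytes(r, g, b, a, byteorder):
    """Pack a pixel as a 32-bit value with R in the top bits, then lay it out in memory."""
    packed = (r << 24) | (g << 16) | (b << 8) | a
    return packed.to_bytes(4, byteorder)


# One orange-ish pixel: R=0xFF, G=0x80, B=0x40, A=0xFF
print(rgba8888_memory_bytes(0xFF, 0x80, 0x40, 0xFF, "big").hex(" "))     # ff 80 40 ff
print(rgba8888_memory_bytes(0xFF, 0x80, 0x40, 0xFF, "little").hex(" "))  # ff 40 80 ff
```

On a little-endian machine the bytes come out as A, B, G, R, so code that writes R, G, B, A bytes into a buffer declared as RGBA8888 gets its channels swapped.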

Writing an efficient, modern renderer is truly an exercise of patience. You have a good idea? Hah, fuck you, GPUs don't support that. Okay but what if I try to use this advanced feature? Eh, probably not going to support exactly what you would like to do. Okay fuck it I'm gonna use the most obscure features possible. Congratulations, it doesn't work even on the niche hardware that supports that extension

If I sound jaded, ya better believe I f*cking am! I cannot wait for more graphics cards to support features like mesh shaders so we can finally compute shader all the things and do things the way we want to god dammit -

Apparently "in" and "out" are the new "varying" and "attribute" in OpenGL's shader language...
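For reference, the mapping is: in a vertex shader `attribute` becomes `in` and `varying` becomes `out`; in a fragment shader `varying` becomes `in`. A deliberately naive Python sketch of that rename follows. The function name `modernize_qualifiers` is made up, it rewrites whole words only (including inside comments), and it does not touch other changes that came with newer GLSL versions, such as replacing `gl_FragColor` with a user-declared output.

```python
import re


def modernize_qualifiers(source, stage):
    """Naively rewrite legacy GLSL storage qualifiers for GLSL 1.30 and later.

    stage is "vertex" or "fragment". Only the whole words `attribute` and
    `varying` are replaced; nothing else in the shader is changed.
    """
    if stage not in ("vertex", "fragment"):
        raise ValueError("stage must be 'vertex' or 'fragment'")
    if stage == "vertex":
        source = re.sub(r"\battribute\b", "in", source)  # per-vertex inputs
        return re.sub(r"\bvarying\b", "out", source)     # values passed down the pipeline
    return re.sub(r"\bvarying\b", "in", source)          # interpolated inputs
```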

Even buying a new graphics card can f*ck up your whole day! 😥 -

OK so after working with SDL for a bit, we have a circle rendering!

Next step is to start working on keyboard input and then onto importing sprites, first time building a game engine from the ground up and working with Vala in this capacity...

EDIT: Gif in comments because it doesn't want to work .-. -

So I'm struggling to finish this library which among other things is supposed to write flowing text. And this one's taking foreeeever and I'm hating it so much already.

I just keep daydreaming of starting a "simple" platformer. And then I go, "hm the parallax must be nice, it needs to have as many layers as possible, oh and look at this video, here they're even zooming and each layer rescales differently, good effect, I need to add that too. Also a plain platformer is just boring, it needs to have adventure elements, and even RPG too, yeah why not. Hm, it needs to have some motion blur, but oh I need this 1/48 shutter speed to make it look cinematic. Okay how do I go about adding this blur effect? What? Libgdx doesn't provide one out of the box? I need to use opengl shaders? A shader, eh... I'm not even sure what that is. Okay, let's see how to do it. Wow that's a total mess and resource hungry, and how will I calculate it all as to make it match the 1/48 thing?"

You know... Simple. And in the end, I'll abandon the library and won't get anywhere with the platformer (as usual).

Tsk tsk tsk -

- Continue working through my CS degree

- Finish my current game I'm working on

- get an Internship

- Learn OpenGL or Vulkan

- Learn C (I already know C++ and C#)

I got some work ahead of me, but this seems doable! I'm excited for the next 100 weeks! -

hey everyone.

guess what - it’s my anniversary of first joining devRant. I joined exactly 0x16d days ago, and it’s been great.

ive learnt so much, made more stuff, and y’all are great.

id like to follow up on my resolutions from last year ( https://devrant.com/rants/364344/... ), and im proud to say i did everything except the 3d game (i was looking at opengl and got distracted by opencl).

when i first saw devRant, i was on a plane, coming home. i saw a news article and decided to check it out, and when i joined i quickly realized i was the second youngest on the platform. one year, 316 rants, 2181 comments, and a score of 18594++ later, here i am.

thanks for this wonderful platform! -

How old were you guys when you started writing code? What language and what inspired you?

My first programming memories come from writing some QBasic text-based RPG and graphics demos when I was like 12. I remember moving into C/C++ soon after with Borland's C++ compiler and playing around with WinAPI and OpenGL 1.16 -

I've been working on my term paper and writing an SDL-OpenGL program for it. I wanted to implement GLSL shaders. Took me only 6 hours and three cocktails.

And after the ordeal I ended up with this unholy flickering mess which just hurts to look at.

It's frustrating, doing all this shit for something that doesn't even work properly.

Fuck this -

I made a game out of boredom and I think it looks cute.

Full size: https://dl.dropboxusercontent.com/s... -

Every time you use OpenGL in a brand new project you have to go through the ceremonial blindfolded obstacle course that is getting the first damn triangle to show up. Is the shader code right? Did I forget to check an error on this buffer upload? Is my texture incomplete? Am I bad at matrix math? (Spoiler alert: usually yes) Did I not GL enable something? Is my context setup wrong? Did Nvidia release drivers that grep for my window title and refuse to display any geometry in it?

Oh. Needed to glViewport. OK. -
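What `glViewport` controls is the last step from normalized device coordinates (-1 to 1) to window pixels. Here is a small Python sketch of that mapping; the function name `ndc_to_window` is made up, and the depth range is left out. If the viewport rectangle is stale after a resize, or zero-sized, everything lands in the wrong place or collapses to a point, which is one plausible way to end up with an invisible triangle.

```python
def ndc_to_window(x_ndc, y_ndc, viewport):
    """Map normalized device coordinates (-1..1) to window pixels.

    viewport is (x, y, width, height), the same four values glViewport takes.
    """
    x, y, width, height = viewport
    return (x + (x_ndc + 1.0) * width / 2.0,
            y + (y_ndc + 1.0) * height / 2.0)


print(ndc_to_window(0.0, 0.0, (0, 0, 800, 600)))  # (400.0, 300.0)
```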

Be me, wanting to try out PyOpenGL.

Let's start by drawing a rectangle.

Nice.

And now add some text?!

NOPE CANT RENDER FROM TTF OR SOME OTHER STUPID SHIT! YOU NEED TO CREATE A BITMAP FONT. WHICH IS THE MOST INEFFECTIVE BS IVE EVER SEEN.

K but there is surely a library for Python which enables the usage of text in OpenGL? Yes but its last support was 12 years ago.

FFS WHAT IS THIS GARBAGE?

In the end I found out C++ projects use FreeType to convert each character of a font to a bitmap with the corresponding spacing.
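The "bitmap plus spacing" part boils down to walking a pen position along the baseline. A minimal Python sketch follows, assuming the glyph metrics have already been extracted and converted to whole pixels (FreeType reports advances in 1/64 pixel units) and assuming a y-up coordinate system. The `Glyph` fields and `layout_text` are made-up names, not FreeType's API.

```python
from dataclasses import dataclass


@dataclass
class Glyph:
    width: int      # bitmap width in pixels
    height: int     # bitmap height in pixels
    bearing_x: int  # offset from the pen position to the bitmap's left edge
    bearing_y: int  # offset from the baseline up to the bitmap's top edge
    advance: int    # how far the pen moves after this glyph, in pixels


def layout_text(text, glyphs, pen_x=0, baseline_y=0):
    """Return one (char, left, bottom, width, height) quad per character."""
    quads = []
    for ch in text:
        g = glyphs[ch]
        left = pen_x + g.bearing_x
        bottom = baseline_y - (g.height - g.bearing_y)  # descenders dip below the baseline
        quads.append((ch, left, bottom, g.width, g.height))
        pen_x += g.advance
    return quads
```

Each quad would then be drawn as a textured rectangle using that glyph's bitmap.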

THIS SHOULD BE WAY SIMPLER! -

On Monday I had to present my 3D graphics assignment to a teacher at uni. I was very nervous at the beginning, but the presentation turned out very well. They liked my project so much that they told me that I could help with one of their research projects, and they even offered me a teacher/demonstrator position. Is this reality or am I dreaming?

-

A few days ago I decided to learn C by writing a simple Minecraft clone. Guess who has less than 26¼ hours to do university exercises for two weeks. And it's not like I had double that time because of Christmas Holidays.

-

I hate Apple since they dropped OpenGL and Nvidia support. My question to them:

WHAT THE FUCK IS THE PURPOSE OF DROPPING IT? YOU ARE JUST GETTING MORE HATE -

I'm very angry at C# 😡 (and Java to some degree). Recently I decided to create a huge project in C#. (It is my favorite language now because of the great VS2017, its features, libs and such.) I used a Windows Forms app in order to make a pretty GUI for this program. Everything worked fine, but I decided to implement some 3D rendering system in order to display graphs in 3D. Oh, how foolish was I.

Ok so what are my options?

1. DirectX9 -> abandoned by Microsoft, they say it's ded so nope.

2. DX11 -> great! I can even use SharpDX or simpledx to use it! Oh wait, what is that? INVALID DX CALL

(in demo code) Dammit!

3. OpenGL -> obsolete, lib non-existent.

4. Library that comes with .NET -> WPF only, sorry!

(I found some dodgy tutorials on YT for DX11 but they need .NET 2.0, really?) 😐

In that moment I decided to switch to Java. (because Java c#_language = new Java("microsoft");)

After 1 day of installing Eclipse and 2 more to install the newest JDK MANUALLY I realized that Java isn't as easy to use as C#, because:

- no dynamic type -> HUGE PAIN, I can't use a single list to store everything, buuuu!

- console? yes, but it's buried inside some random lib and it's not consistent with every Java version!

- GUI editor similar to the VS one? oh, you need to create it from scratch! 😫

Well, at least I can render things. So maybe Java will render stuff as another tool in my app? Nope, pipes NON-existent, we need to use sockets! (the Unity pipe plugin was easier! worked, but it was SLOW)

Ok so after a few more days of struggling I managed to render a simple graph using DirectX9 in my original C# project, and that works fine.. 😥 I only need to create a lib to wrap it and we are done!

Why can't companies create a language that will have ALL the features I need? Or at least give me something like pipes that work in every language, that would be helpful!

I know it is sometimes stressful to be a dev. But when your program works 😀 that is a great feeling! Especially when you learned to code yourself like me 😁. (a student before university, who lives in a small abandoned town) -

!rant

How to earn a lot of money as a programmer?

So this question might sound a little naive and too simple, but earning a lot of money is what we all want after all right? Collecting experiences from people in the business should be a good idea.

So this is the position I am in:

I am a German student in my 13th year of school (which means I will graduate this summer) and I am very interested in information technology. I know C++ pretty well by now and I have built a rendering engine for a game I want to make using openGL already, which I am very proud of.

I would love to turn this passion into my profession and that's why I plan to attend a dual course of computer science next year (dual means that I will be employed at a company (or similar) in parallel to the studying course).

But what direction should I be going in if I want to make big money later on? I am ready to spend a lot of time and work on this life project but I don't know which directions are the most promising. I hate being a tiny gear in a huge machine that just has to keep spinning to keep the machine alive, I want to be part of a real project (like most people probably) and possibly sell a product (because I think that is how you really make money).

Now I know there is no magic answer to this, but I bet many people here have made experiences they can share and this could help a lot of people directing their path in a more success oriented way.

I personally am especially interested in fields which are relatively low-level and close to memory (C++), go hand in hand with physics and 3D simulation and are somewhat creative and allow new solutions. (These are no hard lines, I just thought I should give a little direction to what I know already and what I am interested in)

But really, I am interested in any work you are likely to earn a lot of money with. -

Apple: Mojave update breaks OpenGL code and causes a black screen? It's ok, no rush, nothing to fix here. Anyways OpenGL is deprecated right?

I literally spent a couple of hours debugging my engine because it would show a black screen until the rendering window was moved or resized. Only to find out it's a known OpenGL bug after the Mojave update. No biggie. -

Definitely not a rant.

After the OpenGL ranting (here and by myself), I wanted to share with you my joy to have achieved a little goal:

https://instagram.com/p/...

My TARDIS is spinning and moving into space! -

I have a local variable that is completely unused, can be of any name or type as long as it's assigned.

Yet, if I delete it the program no longer works.

Huh? -

Android development is so frustrating. Seriously, Android Studio is such a bloated piece of... I found that it is easier to make apps in open source game engines rather than that headache of a software. On the other hand, most of these engines are not supported by my budget laptop because it doesn't have OpenGL...

I just want to give up sometimes. -

I have a dream that someone will write completely free linux driver for AMD videocards

and for Nvidia too

and fixes OpenGL -

Anyone who wants to contribute to an opensource C++ game engine?

https://github.com/sebbekarlsson/... -

Debugging is fun sometimes.

Especially when said bug fills up RAM, sends a bunch of guff to VRAM, and pins a core at 100%, rendering my computer near unusable in mere seconds.

(I accidentally load the same texture maybe a hundred or so times) -
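The usual guard against this kind of bug is a cache keyed by file path, so a repeated request returns the handle that already exists. A minimal Python sketch, where `TextureCache` and the `loader` callable are made-up names standing in for whatever actually decodes the file and uploads it to the GPU:

```python
class TextureCache:
    """Load each texture path once and hand back the same handle afterwards."""

    def __init__(self, loader):
        self._loader = loader  # hypothetical callable: path -> texture handle
        self._textures = {}

    def get(self, path):
        if path not in self._textures:
            # Only the first request for a path reaches the loader.
            self._textures[path] = self._loader(path)
        return self._textures[path]
```

With this in place, asking for the same file a hundred times costs one load and ninety-nine dictionary lookups.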

Me: "I wanna mod Ikemen so it can have tag presets, a replay recording manager, a player card for online games, a gallery,..."

Windows: takes a whole day to update

Me: "I wanna mod Ikemen so it runs on Ubuntu" -

WHY DOES IT FUCKING THINK I AM NOT WRITING TO GL_POSITION FOR FUCK'S SAKE I JUST WANT TO RENDER MY OBAMA PRISM WITHOUT WORRIES! THIS IS A HUGE PROBLEM THAT I HAVE BEEN WORKING ON FOR 3 FUCKING DAYS NOW WITH NOTHING BUT HEADACHES AS THE REWARD! FUCK YOU WHO INVENTED OPENGL

-

Today, I have installed/uninstalled a combination of [windows 7, arch linux, dual-boot] a total of 9 times...

I wouldn't be surprised if my 120G SSD fails next week

It all started when I had to whip up a GUI-wrapped youtube-dl based program for a windows machine.

Thinking a handy GUI python library will get it done in no time, I started right away with the Kivy quick-start page in front of me.

Everything seemed to be going fine, until I decided it would be "wise" to first check if I can run Kivy on said windows machine.

Here I spent what felt like a day (5 hours) trying to install core pip modules for kivy.. only before realizing my innocent cygwin64 setup was the reason everything was failing, and that sys.platform was NOT set to "win32" (a requirement later discovered when unpacking .whl files)
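The mismatch is easy to see from inside Python: a Cygwin build of Python reports `sys.platform` as `"cygwin"`, while a native Windows build reports `"win32"` (also on 64-bit Windows). A small sketch, with `describe_platform` as a made-up helper name:

```python
import sys


def describe_platform(platform=None):
    """Classify a sys.platform string; defaults to the running interpreter's value."""
    platform = sys.platform if platform is None else platform
    if platform == "win32":
        return "native Windows"
    if platform == "cygwin":
        return "Cygwin (POSIX layer on Windows, not win32)"
    return f"other ({platform})"


print(describe_platform())
```

Running this first would have shown that the interpreter was not a native Windows one before any time went into installing wheels.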

"Okay.. you know what? Fuck........ This."

In a haze of frustration, I decided it was my fault for ever deciding to do Python on windows, and that "none of this would've happened if I were installing pip modules on a Linux terminal"...

I then had the "brilliant" idea of "Why don't I just use Linux, and make windows a virtual machine within, for testing."

And so I spent the next hour getting everything set up correctly for me get back to programming.... And so I did.

But uh... you're doing GUI stuff, right? -> Yeah...

And you uh.. Kivy uses OpenGL on windows, doesn't it? -> Yeah..?

OpenGL... 2.

-> Fuck.

That's when I realized my "brilliant" idea, was actually a really bad prank. Turns out.. I needed a native windows environment with up-to-date non-virtual graphics drivers that supported at least OpenGL2 for Kivy GUI programs!

Something I already had from square 1.

And at this point, it hurts to even sigh knowing I wasted hours just... making... poor decisions, my very first one being cygwin64 as a substitution for windows cmd.

But persistent as any programmer should be in order to succeed, I dragged my sorry ass back to the computer to reinstall windows on the actual hardware... again.

While the windows installer was busy jacking off all over my precious gigabytes (why does it need that much spaaace for a base install??? fuck.). I had "yet another brilliant idea" YABI™

Why not just do a dual-boot? That way, you have the best of both worlds, you do python stuff in Linux, and when it's time to build and test on the target OS, you have a native windows environment!

This synthetic harmony sounded amazing to the desperate, exhausted, shell of a man that I had become after such a back-breaking experience with cygwin

Now that my windows platter with a side of linux was all set-up and ready-to-go, I once again booted up windows to test if Kivy even worked.

And... It did!

And just as I began raising my victory flags, I suddenly realized there was one more thing I had to do, something trivial, should take me "no time" to do, being in a native windows environment and all.................... -.- (sigh)

I had to make sure it compiles to a traditional exe...

Not a biggy, right? Just find one of those py2exe—sounding modules or something, and surprisingly enough, there was indeed a py2exe—sounding module, conveniently named... py2exe.

Not a second thought given, I thought surely this was a good enough way of doing it, just gonna look up the py2exe guide and...

-> 3 hours later + 1 extra coffee

What do you meeeeean "module not found"? Do I need to install more dependencies? Why doesn't it say so in the DAMN guide? Wait I don't? Why are you showing me that error message then????

-------------------------------

No. I'm not doing this.

I shut off my computer and took a long... long.. break.

Only to return sometime the next day and end up making no progress, beating my SSD with more OS installs (sometimes with no obvious reason to do so).

Wondering whether I should give up Kivy itself as it didn't seem compatible with py2exe.. I discovered pyInstaller, which seemed to be the way Kivy wants exe's to be made on windows..

Awesome! I should've looked up how Kivy developers make exe's instead of jumping straight into py2exe land, (I guess "py2exe" just sounded more effective to me then)

More hours pass, and you'd think I'd have eliminated all of my build environment problems by now... but oh, how wrong you'd be...

pyInstaller was failing, and half the solutions I found online were to download some windows update KB32946..whatever...

The other half telling me to downgrade from Python 3.8.1 to Python 3.8.0000.009 (exaggeration! But you get the point)

At the end of all that mess, I decided it wasn't worth some of my lifespan, and that maybe.. just maybe.. it would've been better to create WINDOWS GUI with the mother fuc*ing WINDOWS API.

Alright, step 1: Get Visual Studio..

Step 2: kys

Step 3: kys again. -

Just found my first little game I wrote in c++ and opengl(like 5yrs ago). Need to rename over 300 file names, class names and for each class their members names and function names now because ewww how can you call vars in programming like that. Porting it to Linux now. Library linking is working yet. I remember how awful it was to do that shit in vs. In Linux its ez. Also wrote a makefile because vs always compiled my whole project every time I ran it (for whatever reason).

I think that's what I'm going to be doing as a side project this week. -

Last internship : I learned modern opengl, libav and ffmpeg. I even was the only dev on some big contracts. Was a fucking cool internship because I learned everything I wanted to. But my manager had really low social skills. So being able to teach myself all of that was a good thing for everybody, but not for him. When the internship was over I got the worst mark of my class for the business part, with the comment that I didn't ask for help enough Oo wtf dude. Still got the best final mark of the class and was the only one who didn't work on web technologies :p but fuck, you should have told me sooner man...

-

Anyone else getting a bug with every opengl application on Linux? Gives me an error something like "X error of failed request: badvalue"

Weird- maybe happened in the latest kernel update? I'm using 4.147 -

So, in OpenGL 4.x, there is no circle primitive, and the only ways to draw an almost perfect circle are the following:

Draw a triangle fan and fk up your memory for a circle

Draw a rectangle and use the fragment shader and distance equation to discard the bit that is not used

But you will need to add an if statement and potentially increase the frame time (from what i have heard)

And it will be more complicated than just using a triangle fan -
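Both options can be sketched on the CPU side in a few lines of Python. `circle_fan` builds the vertex list for a triangle fan, and `inside_circle` is the same distance test a fragment shader would run before discarding. Both names are made up, and the 32 segments used in the example are an arbitrary choice.

```python
import math


def circle_fan(cx, cy, radius, segments):
    """Vertices for a triangle fan: the centre, then segments + 1 rim points."""
    if segments < 3:
        raise ValueError("need at least 3 segments")
    verts = [(cx, cy)]
    for i in range(segments + 1):  # the last rim point repeats the first to close the fan
        angle = 2.0 * math.pi * i / segments
        verts.append((cx + radius * math.cos(angle), cy + radius * math.sin(angle)))
    return verts


def inside_circle(px, py, cx, cy, radius):
    """The per-fragment test: keep the fragment only if it lies within the radius."""
    dx, dy = px - cx, py - cy
    return dx * dx + dy * dy <= radius * radius  # squared distance, no sqrt needed


print(len(circle_fan(0.0, 0.0, 1.0, 32)))  # 34
```

A 32-segment fan is 34 two-component vertices, so the fan's memory cost stays small unless a great many circles are on screen.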

My Dev goals for 2018:

- build a raycaster in OpenGL and Vulkan

- finish my game

- actually write a Dev blog -

I was so close to getting my macOS virtual machine fully working on my Razer Blade... But then I discovered that something with OpenGL/GLX doesn’t work properly, so I can’t use any graphics-heavy applications until I somehow figure out what’s wrong 😞5

-

I wrote some 3d opengl application years back on a windows machine. wanted to get back into it except now i have a macbook and apple no longer supports opengl in favor of their own metal graphics library

why cant people just let other technologies be. why do we have to keep deprecating stuff. why cant we just chill...3 -

I started building a voxel engine with openGL and Clojure during December, so my goal is to make a game out of it. Long way to go but seems fun.1

-

in the workplace, i have no access to internet, am not admin to my own computer and am not allowed to install anything (due to security reasons). i also happen to have quite some spare time so i'm writing nokia's good old snake game in visual studio and opengl so i can amuse myself both coding and playing. in a way, company pushes creativity and productivity even for slacking.6

-

I've decided to switch my engine from OpenGL to Vulkan and my god damn brain hurts

Loader -> Instance -> Physical Devices -> Logical Device (Layers | Features | Extensions) | Queue Family (Count | Flags) -> Queues | Command Pools -> Command Buffers

Of course each queue family only supports some commands (graphics, compute, transfer, etc.) and everything is asynchronous so it needs explicit synchronization (both on the cpu and with gpu semaphores) too4 -
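
The queue family step in that chain can be sketched without touching the Vulkan headers. This is only an illustration: `QueueFamily` is a hypothetical stand-in for `VkQueueFamilyProperties`, `findQueueFamily` is a made-up helper, and the flag constants are assumed to mirror `VK_QUEUE_GRAPHICS_BIT`, `VK_QUEUE_COMPUTE_BIT` and `VK_QUEUE_TRANSFER_BIT`.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Bit values assumed to match VK_QUEUE_GRAPHICS_BIT / COMPUTE / TRANSFER.
constexpr std::uint32_t kGraphics = 0x1;
constexpr std::uint32_t kCompute  = 0x2;
constexpr std::uint32_t kTransfer = 0x4;

// Hypothetical stand-in for VkQueueFamilyProperties (only the fields used here).
struct QueueFamily {
    std::uint32_t flags;       // which kinds of commands the family accepts
    std::uint32_t queueCount;  // how many queues the family exposes
};

// Returns the index of the first family that has every `required` flag and
// none of the `excluded` flags, or -1 if no family qualifies.
int findQueueFamily(const std::vector<QueueFamily>& families,
                    std::uint32_t required, std::uint32_t excluded = 0) {
    for (std::size_t i = 0; i < families.size(); ++i) {
        const QueueFamily& f = families[i];
        bool hasAll  = (f.flags & required) == required;
        bool hasNone = (f.flags & excluded) == 0;
        if (f.queueCount > 0 && hasAll && hasNone) return static_cast<int>(i);
    }
    return -1;
}
```

The `excluded` mask is there for the common case of wanting a dedicated transfer family, i.e. one with the transfer flag but without graphics or compute.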

I like rants that are thought provoking and push a message forward regardless of whether they may sting a little, so for my first post on here I'd like to hit at home with many of you.

HTML5 "native" applications are not needed. Let's cover mobile first. The misconception that apps have to be written either in JavaScript or in a native Android/iOS environment, or even with some paid third-party tool like Xamarin, is quite strange to me. OpenGL ES is on both iOS and Android; there is no difference. It's quite easy to write once and run everywhere with native performance, without having to jump through JS when it's not needed. Personally, I never want to see HTML or CSS if I'm working on a mobile or desktop app.

Which brings me to desktop. I can't begin to describe how poorly thought out an Electron app is: memory usage, storage space for embedding Chromium, web views gained at the expense of literally everything else. Cross-platform desktop development has been around for decades, and OpenGL is everywhere. Enough said.

Finally, what about targeting the browser? If you're writing a native app for mobile and desktop in, let's say, C++, how can it turn back into JavaScript? Luckily C++ has Emscripten, which, simply put, does exactly that. Or you could use a cross-compiling language like Haxe, which is what I use. It gives you type safety while exporting both C++ and JavaScript code.

Conclusion: I do see the appeal of the JS ecosystem. It's large and filled with big companies trying to make JS cross-platform development stronger every day. However, development in my mind should be a series of choices, and choices that are invisible don't help anyone, regardless of the popularity of the choice or the skill required.8 -

So this was a conversation.

tl;dr You can't just FUCKING RECOMPILE for an older OpenGL version you dimwit!

Context: Person Y has OpenGL 3.1, my program requires OpenGL 2.1, but refused to launch with "Pixel format not accelerated"

--------

Person X - Today at 9:28 PM

Nope

or optionally compile it for old opengl

Or just use my old junk.

Me - Today at 9:29 PM

No

Person X - Today at 9:29 PM

Why?

Me - Today at 9:29 PM

You don't just "compile it for old opengl"

Person X - Today at 9:29 PM

I can

Btw

Me - Today at 9:29 PM

For one, Person Y has an OGL version new enough so... /shrug

Person X - Today at 9:29 PM

shrug

Me - Today at 9:30 PM

And there is no way I'm ripping the rendering code apart and re-doing everything with glBegin, glVertex, glEnd guff

Person X - Today at 9:30 PM

You don't have to

Me - Today at 9:30 PM

You do

Person X - Today at 9:30 PM

Just use a vbo

Than a vba

Me - Today at 9:30 PM

I ALREADY USE FUCKING VBOS

Person X - Today at 9:30 PM

....

There's two typws

Types

Btw one with indacys and one with out

Ones 3.0 ones 4.0

Me - Today at 9:31 PM

tl;dr. I am not rewriting half of everything for worse performance just for the sake of being compatible with even more legacy OGL, that might not even work anyway for Person Y. idc

Person X - Today at 9:32 PM

Plus if your using glut you can set the version I want to say

Also it's not worse

<Some more conversation>

Person X - Today at 9:33 PM

Btw crafted [Me] taking th lazy way as normal

Btwx500

Me - Today at 9:33 PM

Taking the lazy way eh.

You have no idea do you

Person X - Today at 9:33 PM

Yes you are

I have more of one :p

Than you think2 -

My friend makes fun of me for liking Java, php and the fact i like coding OpenGL instead of using game engines.

Where would holy devrant stand ?3 -

Turns out the reason the selection of RA cores on the PS3 sucks so much dick is that it has a half-complete OpenGL implementation.

Fuck it, we're doing it live. I've got CFW, i'll dynarec if I damn well please. If it won't compile i'll fucking make it compile, I don't give a fuck about "sanity restraints" and "needing OpenGL 2" and "LV1 required" I can fucking replace all of LV1 and LV2 if I fucking want FUCK YOU

The Cell processor is pretty beefy, I could prolly do it all-CPU if I wanted.5 -

!rant && question

So, I am doing a complete burn and rebuild of a C++ library into a C# library. My question: this thing uses GDI/OpenGL to do graphics things (Mostly rendering lines and shapes over images). Is it worth trying to use WPF for that, or does GDI/OpenGL work in C#?2 -

So I just found out that GL_MAX as a blend equation is supported pretty much universally (~92%). Pretty nice considering I kinda gave up on my barycentrics-based anti-aliasing technique months ago7

-

When I finally learned how to use vertex lists with OpenGL bindings in Python and optimized my game's tilemap rendering. I think it is my best written code snippet so far. It took me weeks to finish it.1

-

I'm trying out Picolisp. Cool, I think, an OpenGL library. I'll try the example program.

(Clicks mouse on program window)

(A wild SIGILL appears!)

Two hours later, still trying to figure out why it's doing that, with Google and DuckDuckGo returning no helpful results whatsoever. This is very annoying.2 -

When they decided to deprecate the old app that went back to early DOS, they decided to use VB.NET because they'd used some VBA and were familiar with it. Except they had a vague idea that C# was faster and decided to write the OpenGL code in that. Also they had some C++ code and decided to write more of it, accessed by the main program via COM.

I come in and the decision is made to integrate some third-party libs via a C++/CLI layer. On one hand screw COM, but on the other we're now using two non-standard MS C++ extensions. Then we decide we need scripting, so throw in some IronPython.

I'm the build engineer for all this, by the way. No fancy package managers since almost all the third-party dependencies are C++; a few of them are open source with our own hacks layered on top of the regular code, a few are proprietary. When I first started here you couldn't build on a fresh SVN checkout (ugh) without repeatedly building the program, copying DLLs manually, building again, ad nauseam. I finally got sick of being called in to do this process and announced that I was fixing it, which took a solid week of staring at failed compiler output.

Every so often someone wants to update that damn COM library and has to sacrifice a goat to figure out how the hell you get it to accept a new method. Maybe one day I'll do a whole rant just based on COM. -

There was this question I came up with that was very good at inducing hallucinations on what at the time I thought was a *lobotomized* LLM.

I can't recall the exact wording right now, but in essence you asked it to perform OpenGL batched draw calls in straight x86_64 assembly. It would begin writing seemingly correct code, quickly run out of registers, and then immediately start making up register names instead of moving data to memory.

You may say: big deal, it has nowhere to pull from to answer such an arcane fucking riddle, so of course it's going to bullshit you. That's not the point. The point is it cannot realize that it's running out of registers, and more importantly, that it makes up a multitude of register names which _will_ degrade the context due to the introduction of absolute fabrications, leading to the error propagating further even if you clearly point out the obvious mistake.

Basically, my thought process went as follows: if it breaks at something fundamental, then it __will__ most certainly break in every other situation, in either subtle or overt ways.

Which begged the question: is it a trait of _this_ model in particular, or is it applicable to LLMs in general?

I felt I was on to something, but I couldn't be sure because, again, I was under the impression that the model on which I tested this was too old and stupid so as to consider these results significant proof of anything; AI is certainly not my field, so I had to entertain the idea that I could be wrong, albeit I did so begrudgingly -- for obvious reasons, I want at least "plausible based on my observations" rather than just "I can feel it in my balls".

So, as time went on, I made similar tests on other models whenever I got a chance to do so, and full disclosure, I spent no money on this so you may utilize that fact in your doomed attempt to disprove me lmao. Anyway, it's been a long enough while, I think, and I have a feeling you folks can guess the final answer already:

(**SLIGHTLY OMINOUS DRUM ROLL**)

The "lobotomy" in question was merely a low cap on context tokens (~4000), which I never went over in the first place; newer/"more advanced" models don't fare any better, and I have been _very_ lenient in what I consider a passable answer.

So that's that, is what I'm starting to think: I was right all along, and went through the burdensome hurdle of sincerely questioning the immaculate intuition of my balls entirely for naught -- learn from this mistake and never question your own mystical seniority. Just kidding, but not really.

The problem with the force of belief is it can work both ways, by which I mean, belief that I could be wrong is the reason I bothered looking further into it, whereas belief to the contrary very much compels me to dismiss doubt entirely. I don't need that, I need certainty, dammit. And though I cannot in good faith say that I am _certain_, "sufficiently convinced" will have to do for the time being.

TL;DR I don't know but the more I see it just seems shittier.7 -

Just finished a little proof of concept of a reprojected multisample antialiasing technique and daaaaamn it's looking good. First time ever a rendering technique I invented isn't complete shit so that's an improvement

It still has some (pretty big) issues with both spatial and temporal ghosting but I have some ideas in the pipeline

I wish I could show you guys comparison images but as it turns out most anti aliasing techniques look pretty good on still frames but only the good ones stand up under motion and I don't know of a good way right now to capture pixel perfect clips like that4 -

isRant <- false

I finally got a tiny white dot displayed, using modern OpenGL, in F#, through OpenTK. There would be a picture, but picture compression made the dot invisible.1 -

There's nothing quite like an app force closing for no apparent reason, and no error log info.

Just spent an hour figuring out that one of the devices I test on doesn't like linking GLSL shader programs if they contain a loop, even though every other device I've tested on is totally fine with it. 😑 -

Migrating from OpenGL to Vulkan. In the meanwhile, DevRant, let's talk about the Ballmer Peak!

Share your stories!3 -

Just spent a full fucking day working on a C binding for Vala to use GLFW as the main OpenGL entry point... Only to now discover that SDL has an already implemented OpenGL window class and allows for basic 2D operations...

Well that was time well fucking spent1 -

So I was wondering if any of you know of any good ways to inject additional functionality into a function in C++. My use case is injecting a counter into an OpenGL draw function to see how many times per frame it's called. I know I can do this using assembly in a more hacky manner, as you might do for cheats in games (code caves), but I'm more interested in adding this for debugging/statistics for the game engine I'm working on. Basically I'm looking for a portable, stable way of doing it so that when I compile a debug build, the code gets added to various functions, and when I compile a release build, it doesn't.

Example:

glDraw();

Would call

glDraw() {

drawCount++; //some debug stuff

glDraw(); //call the real one internally

}

I should mention with code caves you can do this by saving the original address of the function, patching the vTable to point to your new function that has the same parameters etc, then all calls to that function are redirected to yours instead and then you simply call the original function with the address of the function you originally saved. That said, I'm not sure how to access vTable, etc the "normal" way...2 -
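
One portable way to get that behaviour, sketched under a few assumptions: the switch is a build macro (here the hypothetical `ENGINE_DEBUG_STATS`), the draw calls go through a wrapper macro (`COUNTED_DRAW`, also made up), and `fakeGlDraw` stands in for the real GL call so the sketch builds without OpenGL.

```cpp
// Hypothetical build switch: define as 0 (e.g. -DENGINE_DEBUG_STATS=0) for release builds.
#ifndef ENGINE_DEBUG_STATS
#define ENGINE_DEBUG_STATS 1
#endif

// Stand-in for the real GL draw call so the sketch builds without OpenGL.
static int g_realDraws = 0;
void fakeGlDraw(int vertexCount) { (void)vertexCount; ++g_realDraws; }

#if ENGINE_DEBUG_STATS
static int g_drawCalls = 0;
// Debug builds: bump the counter, then make the real call.
#define COUNTED_DRAW(call) (++g_drawCalls, (call))
int  drawCallsThisFrame() { return g_drawCalls; }
void resetDrawStats()     { g_drawCalls = 0; }
#else
// Release builds: the wrapper expands to the bare call, nothing is counted.
#define COUNTED_DRAW(call) (call)
int  drawCallsThisFrame() { return 0; }
void resetDrawStats()     {}
#endif

// Example frame: three draw calls routed through the wrapper.
void renderFrame() {
    resetDrawStats();
    COUNTED_DRAW(fakeGlDraw(3));
    COUNTED_DRAW(fakeGlDraw(6));
    COUNTED_DRAW(fakeGlDraw(36));
}
```

This only counts calls that are written through the wrapper, so it is less thorough than patching the function itself. The upside is that it needs no platform-specific tricks and costs nothing in release builds.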

*starts reviewing code that I wrote at some ridiculous hour*

var glPassiveDebugging bool = !true

and to think people say that I'm too serious, and never do anything silly... -

So I think I need more goats or I need to get my computer in to a therapist's office. Either way, I have decided that my problem is fixed. In that rather than addressing the root cause I have attached a bandaid that will work as long as the customer has much less time than me.3

-

on a 5 day rock festival vacation... a band with songs i barely know is on and i'm a bit high... there's a cool set of animations playing at the stage background and i spent the whole concert trying to figure how i could write each animation in opengl. i'll give them a shot back home, if i don't forget.3

-

Is it a good idea to switch from learning openGL to learning Vulkan now?

I was learning openGL in the past months and now that Vulkan is out I am thinking about learning that instead. I've heard that it's harder to learn though, so roughly how long do you think it would take to learn it as an openGL novice?

In openGL I have used instanced rendering with different textures, specular maps in the shader all in perspective 3D of course.3 -

guys i need serious help. I am planning to make driving simulator using c# but the problem is I don't know which API, library will be necessary for making driving simulator environment graphics. Should I use openGL or something else?2

-

If you run into occasional graphics driver crashes and you use Firefox on Windows & Radeon Graphics, then you might want to disable hardware acceleration setting in Firefox. It reduced the frequency of crashes for me

Seems like Firefox screws up Radeon OpenGL driver somehow 🤔💭🦊

It is prone to crashing when I have a 3D game open and Firefox with hw.acc=enabled open at the same time2 -

Graphics devs, I have one hour a day i can spare for learning opengl and the math it needs to be understood. Is it possible or should i look for another hobby that is doable in that amount of time.1

-

You know I'm looking around at a museum of 3d graphics programming right now.

Not my first time, but the same arcade machines are playing the same tunes over and over again in an eerie way, and strangely there's a basketball game up there on several large screen TVs too...

I remember my first detailed look at opengl.

For some reason it just never worked for me.

But I see all these incredible sources of past fortune sitting unplayed, and think.. wow... what a waste.

these brought me many hours of joy and gave me an opportunity or so I thought to try to make friends and meet other teens when I was younger.

They represent countless hours of lovingly crafted mind-crack, and no one smokes them anymore.

Aliens Armageddon sits right in front of me, holstered faux guns glowing in red alluringly.

The huge box of unclaimed mooks and stuffed sheep sits there sadly, robotic arms that can never reach them just hanging, rusting, unloved by a new generation to curse them for never grasping anything and stealing their quarters, and a HUGE 96 inch or more screen for Tomb Raider, FUCKING TOMB RAIDER, hums in a corner just slightly out of my full view.

and no one is here. why?

and yet the gaming industry supposedly continued to thrive.

in a way arcades were better they kept people from being addicted to wowcrack.

just like raising gasoline prices would prompt the creation of cleaner more efficient mass transit.2 -

TIL that WebGL treats IBOs and VBOs differently from actual GL, supposedly because it makes security validation easier.

That means you can't `glBindBuffer` to some target and fill in the data, because when you later `glBindBuffer` to `GL_ELEMENT_ARRAY_BUFFER` you'll get 0x0502 GL_INVALID_OPERATION.

One problem though. This is not documented *anywhere*, either in WebGL `.bindBuffer()` or in the GL ES `glBindBuffer` :V2 -
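
A tiny model of that behaviour in plain C++, for anyone who wants to see the rule spelled out. `BufferBindingModel` is entirely hypothetical and only covers the two WebGL 1 targets; the constants are the usual GL enum values. As far as I can tell, the WebGL 1.0 spec does mention this restriction in its section on differences from OpenGL ES 2.0, but it is easy to miss.

```cpp
#include <cstdint>
#include <unordered_map>

// Enum values as used by OpenGL ES and WebGL.
constexpr std::uint32_t kNoError            = 0;
constexpr std::uint32_t kInvalidOperation   = 0x0502;
constexpr std::uint32_t kArrayBuffer        = 0x8892;
constexpr std::uint32_t kElementArrayBuffer = 0x8893;

// Hypothetical model of the WebGL 1 rule: the first target a buffer is bound
// to decides its kind for the rest of its lifetime.
class BufferBindingModel {
public:
    // Returns the error code a bindBuffer(target, buffer) call would raise.
    std::uint32_t bind(std::uint32_t target, std::uint32_t buffer) {
        if (buffer == 0) return kNoError;       // unbinding is always allowed
        auto it = firstTarget_.find(buffer);
        if (it == firstTarget_.end()) {
            firstTarget_[buffer] = target;      // first bind fixes the buffer's kind
            return kNoError;
        }
        // Rebinding to the same target is fine; switching targets is not.
        return it->second == target ? kNoError : kInvalidOperation;
    }

private:
    std::unordered_map<std::uint32_t, std::uint32_t> firstTarget_;
};
```

The practical takeaway is to bind an index buffer to the element array target the very first time, including when uploading its data.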

I'd like to make a simple-ish game with OpenGL, but I'm not sure if I should use the older (fixed function pipeline) versions or the newer (programmable pipeline thingy, with shaders and stuff) ones. Thoughts?3

-

yo so wtf opengl doing over there

all I can find on "APIENTRYP" is that it's "macro magic that makes everything work" but it's breaking fucking everything. I remove it and it works again (well, kinda, i still gotta fix some typing horseshit)

why is opengl in shambles i just wanna compile gdi -

Where to start learning OpenGL??? I know, there's something like Google, but I would like to hear things like good practices and stuff...3

-

I'm porting an OpenGL project to work with WebAssembly, using emscripten to compile/generate the 'glue' to JS. So far I'm able to render my GL code properly through the glfw3 framework. I know you can use emscripten callbacks for input, but I was hoping to keep my existing glfw3 callback setup. That said, the only callback that seems to be working properly is mouse position; window resize, keyboard, etc. never get called. If anyone knows how to enable these I'd be super grateful!1

-

Are there solutions out there to create cross-platform GUIs within a GUI (like Blend in Visual Studio) that interface with C++? (leave out Qt)

Searching the web I only found GUI libraries in C++, which are a big turnoff for designers.

Further research led me to a viable solution that doesn't seem to have been built anywhere yet. I'm talking about OpenGL/Vulkan as the engine for a cross-platform GUI builder within a GUI.4 -

Ok so slowly learning C also figuring out how to get a few Legacy Opengl code examples to compile. (yeah yeah it's old yada yada) maybe I should try finding unconventional ways to help aid with my learning.

-

So doing some OpenGL crap and glSelectBuffer is occasionally returning all 0s. Not a problem, except that right before the select buffer I push the names on to the name stack. So they should be on there. But they aren't. Ideas?1

-

Fuck windows for not implementing a software backend for modern OpenGL

I just wanted to run minecraft and my game, i have to install a linux vm to use mesa for OpenGL because my computer is from 2008 or something and doesn't have any form of gpu except for the chipset,

I know that mesa dlls are available for windows, but for some reason they will randomly work sometimes and sometimes they won't, because apparently i need to take ownership of system32 to replace default openGL dlls and those take precedence no matter what3 -

Not finding what I want via google so I'll ask here: What's the deal with opengles android shaders freezing my phone's screen?

Is it normal unavoidable behaviour for a shader with an infinite loop to fuck up the visual output irreversibly (until phone restart)? -

I remember Code::Blocks with pain: the .h and .cpp files, the pointers that sometimes got a memory address that crashed the program. And programming with OpenGL on Code::Blocks was death.1

-

The coolest project I've worked on I did in an hour's time, for my CG Lab's mini project in college! I used OpenGL to recreate Atari Breakout from scratch :)

-

I have an idea I need to get out of my head but am not sure where to start. I want to figure out a good way to learn about real-time water simulation. I’m looking at either OpenGL, or Openframeworks, or Cinder. I have basic experience with c++ and a bit less with OpenGL but am not worried about learning. Could someone point me in the right direction? Any good resources to learn or just general advice would be greatly appreciated.4