
Search - "s3"


In one of our first C programming classes today in college, I booted up Ubuntu on the dual boot systems to practice our first few programs which we were supposed to be doing in Turbo C on Windows.

I successfully compiled it using gcc on the first try, which looked like magic to my neighbor. Soon our teacher came to check my program and said that I had made a mistake. I asked her what the mistake was. She said that I was supposed to be using conio.h!!

I argued that it is not a standard header file and that using it makes the code non-portable. She tried to edit the code to include conio.h but couldn't, since I was using vim. I was asked to switch to Windows, use Turbo C instead, and also use conio.h. I refused, and she told me to follow her instructions or leave the class.

The weather was nice.

Morning conversation with wife.

As she puts a stainless steel water bottle on the counter

She: can you make a water bottle for our daughter before school.

Me: I'm not sure. Does it have to look like this one? I don't have any training working with metals. But if I have full control over the design, I may be able to come up with something.

She: That's not funny. Why do you always do that?

Me: Do what? That is exactly what you told me to do.

A little later.....

She: I'm running late, can you make sure "everything" upstairs is unplugged..... (She means her curling iron)

I can't wait until she comes home.........;-)

Well, I was searching for an internship and there was this remote one posted online paying Rs 1000 ($14) for a month.

All he wanted was a fully functional clone of Coursera, hosted on AWS, with around 2-3 TB of videos streaming from S3, in a month :)

I asked him about his AWS costs and he replied: "Use the internship money I'm giving you, it won't cost much."

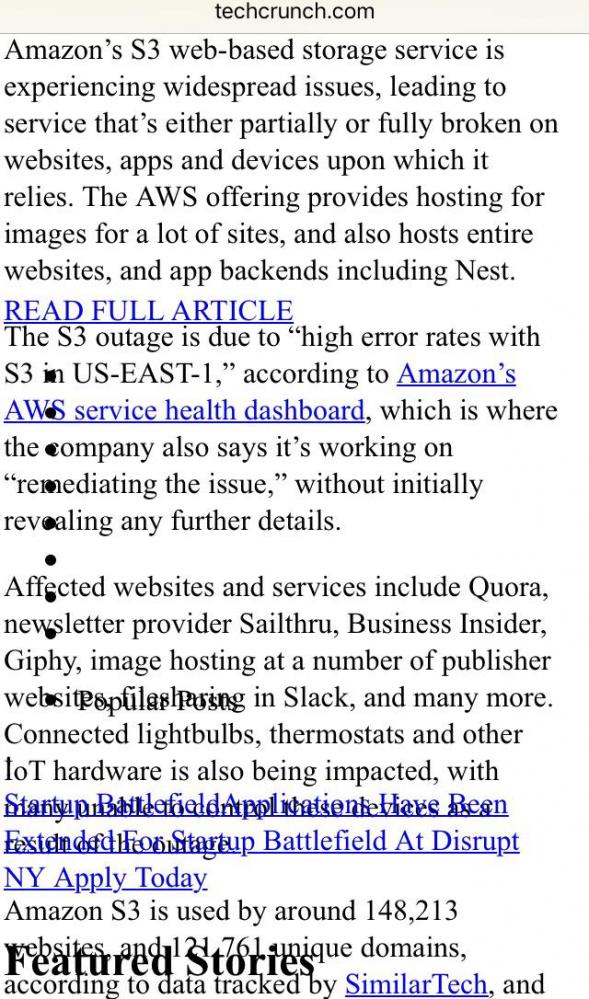

Hey everyone - in case it isn't obvious, unfortunately due to the major S3 outage, no images can currently be uploaded :/

Sorry about that.

Currently on an internship, PHP mostly, little bit of Python and the usual web stuff, and I just had the BEST FUCKING DAY EVER.

Wake up and find out I'm out of coffee, oh boy here we go.

Bus leaves 10 minutes late, great, gonna miss my train.

Trains just don't wanna run today, so back on a bus I go: what's normally a 10-minute train ride is now a 90-minute bus ride.

Arrive at the internship, coffee machine is broken. No problem, I'll just slowly lose it.

NOW HERE COMES THE FUCKING GOOD PART!!

Alright, so I'm working on a CMS that can be used on just about any device you want, mobile or desktop. It's huge: billions of rows of scientific data, very specific requirements and low error margins. I was really enjoying myself here until today, when the project manager walks in, comes to my desk and hands me a Samsung Gear S3, an Apple Watch and some cheap knockoff. He tells me that before the Friday deploy, THE ENTIRE CMS SHOULD WORK ON THOSE WATCHES!

I mean, don't get me wrong, I like a challenge, but this just isn't right. I'm still not sure what the right way to handle tables on phones is, but smartwatches? Just no. Besides that, I've never worked with any Apple devices, let alone watchOS, nor have I worked with Android Wear.

Also, Project Manager is a total dickhead, he's the kinda guy that prefers a light theme, doesn't clean up his code, writes 0 documentation for an API, 1 space = tab, pure horror.

So after almost flipping my desk, I just called my school coach to announce I'm leaving this internship. After a brief explanation he decides to come over, and guess what, according to the Project Manager I wasn't supposed to do that, I was supposed to test if it would be possible.

FUCKING ASSFUCKFACE

I'm getting ridiculously pissed off at Intel's Management Engine (etc.), yet again. I'm learning new terrifying things it does, and about more exploits. Anything this nefarious and overreaching and untouchable is evil by its very nature.

(tl;dr at the bottom.)

I also learned that, as I suspected, AMD has their own version of the bloody thing. Apparently theirs is a bit less scary than Intel's since you can ostensibly disable it, but I don't believe that, because spy agencies exist and people are power-hungry and corrupt as hell when they get it.

For those who don't know what the IME is, it's hardware godmode. It's a black box running obfuscated code on a coprocessor that's built into Intel CPUs (all Intel CPUs from 2008 on). It runs code continuously, even when the system is in S3 (suspend-to-RAM) sleep or powered off. As long as the PSU is supplying current, it's running. It has its own MAC and IP address, transmits out-of-band (so the OS can't see its traffic), some chips can even communicate via 3G, and it can accept remote commands, too. It has complete and unfettered access to everything, completely invisible to the OS. It can turn your computer on or off, use all hardware, access and change all data in RAM and storage, etc. And all of this is completely transparent: when the IME interrupts, the CPU stores its state, pauses, runs the SMM (system management mode) code, restores the state, and resumes normal operation. Its memory always returns 0xff when read by the OS, and all writes fail. So everything about it is completely hidden from the OS, though the OS can trigger the IME/SMM to run various functions through interrupts, too. But this system is also required for the CPU to even function, so killing it bricks your CPU. Which, ofc, you can do via exploits. Or install ring -2 keyloggers. Or do fucking anything else you want to.

tl;dr IME is a hardware godmode, and if someone compromises it (and there have been many exploits), their code runs at ring -2 privileges (above the kernel (0) and above the hypervisor (-1)). They can do anything and everything on/to your system, completely invisibly, and can even install persistent malware that lives inside your bloody CPU. And guess who has keys for this? Go on, guess. You're probably right. Are they completely trustworthy? No? You're probably right again.

There is absolutely no reason for this sort of thing to exist, and its existence can only make things worse. It enables spying of literally all kinds, CPU-resident malware, bricking your physical CPU, reading/modifying anything anywhere, taking control of your hardware, etc. Literal godmode. And some of it cannot be patched, meaning more than a few exploits require replacing your CPU to protect against them.

And why does this exist?

Ostensibly to allow sysadmins to remote-manage fleets of computers, which it does. But it allows fucking everything else, too. And keys to it exist. And people are absolutely not trustworthy, especially those in power, who are the most likely to have access to said keys.

The only reason this exists is because fucking power-hungry doucherockets exist.

So apparently the Amazon S3 outage happened because of one setting being wrong in a looooong string of commands issued to shut down just a few servers.

Am I the only Linux user who totally gets how that could happen to just about anyone, regardless of how awesomely competent they might be?

Let's take bets on the root cause of the S3 outage!

I'm guessing a bad deploy of a server-side Java application with a garbage collection problem.

Dear recruiters,

if you are looking for

- Java, Python, PHP

- React, Angular

- PostgreSQL, Redis, MongoDB

- AWS, S3, EC2, ECS, EKS

- *nix system administration

- Git and CI with TDD

- Docker, Kubernetes

That's not a Full Stack Developer

That’s an entire IT department

Yours truly #stolen

*Me feeling productive on a day

Today I am going to start working on the complex part of my project. *Spends 1 hour deciding which technologies to use, how to implement it, which design patterns to use.

Let's do it

*15 min later

Making some tiny css corrections

*3 hrs later

Making some tiny css corrections

*An eternity later

REALISED DIDN'T SET THE SIZE OF THE PARENT CONTAINER TO 100%

So much for thinking about being productive today :(((

I can't figure out what's worse in the new system we got handed over by a client:

1. that they have an every-minute cron job running "sudo chmod -R 777 /var"

2. that the backup of their database hasn't worked since 2014 because the S3 bucket is full

3. that it's written in PHP, by one guy, who didn't know PHP when he started working on it (all MySQL queries are built by string concatenation, etc.)
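For point 3, the usual fix for string-concatenated queries is parameter binding. The system in question is PHP (where PDO prepared statements do this job), but as a minimal, self-contained sketch here is the same idea in Python with the built-in `sqlite3` module; the `users` table and the `find_user` helper are hypothetical.

```python
import sqlite3


def find_user(conn: sqlite3.Connection, email: str):
    # The "?" placeholder makes the driver bind `email` as data, so quotes or
    # SQL fragments inside it are never parsed as part of the statement.
    cur = conn.execute("SELECT id, email FROM users WHERE email = ?", (email,))
    return cur.fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT)")
conn.executemany(
    "INSERT INTO users (email) VALUES (?)",
    [("a@example.com",), ("b@example.com",)],
)

print(find_user(conn, "a@example.com"))  # [(1, 'a@example.com')]
print(find_user(conn, "' OR '1'='1"))    # [] : matched as a literal string, not as SQL
```

With string concatenation, the second call would have turned into `WHERE email = '' OR '1'='1'` and returned every row.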

LONELINESS IS REAL

I am a freshman at a university (about to complete my first year) with a girl-to-boy ratio of around 1:10. During my first semester I spent a lot of time with friends, chatting with people and making connections. Because of this, my productivity as a dev (if I can even be called that) dropped. (I was not a developer before joining, but I aimed to become one, ideally the best in my batch.) In retrospect, I did nothing productive for 3 of the 4 months of my first semester, and the guilt hit me hard. During the last month I had to catch up on my much-neglected studies, and all I had done was a little bit of HTML and CSS and barely scratched the surface of JS (please don't judge me for this :) I had to start somewhere, although I also learned a little bit of C++). BUT I WAS A HAPPY CUNT, and showed no sign of loneliness.

This semester I have made progress (learned JS with ES6 syntax and am still learning, did C++ and extended my knowledge). Currently I am working on my Vue full-stack app (along with Express and some WebSocket library, TBD; yeah, I learnt some backend too), and improving my DSA knowledge using CLRS. Although my productivity has increased manifold, I now feel the need for closeness. I am kinda happy that I know a lot of people around here (thanks to my extroverted first semester), but sometimes it hits me hard at night when I don't have a monitor to drown my eyes and thoughts in. My academic performance has improved too, but I need someone to share and express my feelings with. I could have found a girlfriend earlier, but now most of them are taken and I have lost touch. But believe me, all I want is a companion to spend these lonely days and nights with (and not as a friend). Staying away from home isn't easy, you know... :(

KUDOS TO DEVRANT FOR DEVELOPING A COMMUNITY WHERE PEOPLE LIKE ME CAN FEEL SAFE IN OUR NATURAL HABITAT. I COULDN'T HAVE EXPRESSED MY FEELINGS ANYWHERE ELSE EXCEPT IN A PERSONAL BLOG (where no one would have read it)

PS1: I apologise if I sounded arrogant about any of my skills; I didn't mean it that way. I ain't even that good, just kinda proud of myself for achieving something I couldn't have imagined.

PS2: Any suggestions and help are much appreciated (considering I am a college student who got into serious development 4 months ago, I am pretty impressionable ;) )

PS3: Please don't confuse this with depression. I am HAPPY BUT LONELY

PS4: Is there a way I can change my username?

When you're a visual learner

Learning Bézier curves 📈📉. I like learning things visually; it helps me figure things out easily.

Do you too?

I just deleted 3.5TB of junk data from S3, effectively saving my company about 88 dollars.

I feel so fucking good.

Think I'm going to ask for a raise 😂

1. talked to a dev and found out he had never used git

2. saw a guy formatting code in Eclipse line by line, even though Eclipse provides automatic formatting.

Bought myself a Samsung S3 and, while choosing which apps I want to receive notifications from, I noticed everything was off by one.

I hate it when your non-dev friend uses top-notch hardware and I am stuck with a piece of shit junk

Does balding scare the shit out of anyone else here? I am 19 and have started showing signs of male pattern baldness *sigh*. Just hope to make it to 25 without balding completely.

Thanks to devRant, now I can be one of those people trying to look social at one of those so-called meetups with a personal touch where everyone is (still) scrolling their Facebook

!rant

When people listen to a complete story and then ask who the villain was..

DEVS: It's like reading JavaScript and then asking what "this" is

Pro tip for job candidates:

If you push a code challenge to a live hosting service like GitHub Pages or S3, don't give the reviewers a link to the repo!! Instead, put the live link at the top of the repo's README and send the reviewer only a link to the live hosted page.

Why?

Because if you host with GitHub Pages, a project site is served from the repository path (username.github.io/repo-name/) rather than from the domain root. If the reviewer pulls your project and doesn't bother to read your README with the link at the top, he'll complain that he couldn't figure out why your project isn't hosted from the root domain, and he'll pass on your application.

True story.

So there I was, productively coding away in my office since early in the morning. It was about noon when my coworkers kept saying, "Hey, have you seen how nice it is outside?", "Wow, it's really nice out there" and "Hey, you should really go outside and get some fresh air."

So I'm all, OK, cool, it's lunchtime, I'll check it out. So I go outside, and I'm out there for 30 seconds when a bee lands on my face and stings me just under my eye.

Ouch! WTF! No no no, it is not nice outside at all. In fact, it is painful outside.

So now the rest of my day is ruined; all I can feel is my face throbbing, and I can't think about anything but the pain. Amazing how one little insect can ruin a day of coding.

Don't listen to the muggles, stay inside.

A few years ago I found a public AWS S3 bucket owned by a Fortune 500 company containing a database dump backup with all of their users' unsalted MD5-hashed passwords.

I didn't report it because I didn't want to get sued or charged. I don't know whether it's still public or not.
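For contrast with unsalted MD5, here is a minimal Python sketch of salted, deliberately slow password hashing using the standard library's `hashlib.pbkdf2_hmac`. The iteration count is an assumption; pick it from current guidance and your hardware. With a random per-user salt, two users with the same password get different hashes, so one precomputed table can't crack the whole dump at once.

```python
import hashlib
import hmac
import os

# Assumption: tune this to current recommendations and your hardware.
ITERATIONS = 600_000


def hash_password(password: str) -> tuple[bytes, bytes]:
    """Return (salt, digest); store both alongside the user record."""
    salt = os.urandom(16)  # fresh random salt for every user
    digest = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS)
    return salt, digest


def verify_password(password: str, salt: bytes, digest: bytes) -> bool:
    """Recompute with the stored salt and compare in constant time."""
    candidate = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS)
    return hmac.compare_digest(candidate, digest)


salt_a, digest_a = hash_password("hunter2")
salt_b, digest_b = hash_password("hunter2")
print(digest_a != digest_b)                           # True: same password, different hashes
print(verify_password("hunter2", salt_a, digest_a))   # True
```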

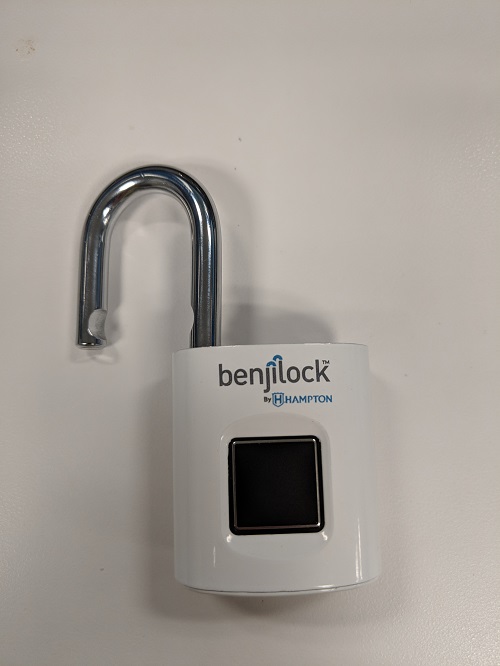

So I bought this biometric padlock for my daughter. She excitedly tried to set it up without following the directions (they actually have good directions online). The first thing you do is set the "master print", and she buggered that up setting her print. So when I got home I was thinking, no problem, I'll just do a reset and then we can start again.

NOPE!!! You only have one chance to set the master print! After that, if you want to reset the thing, you need to use the master print along with a physical key that comes with it.

What sort of moron designs hardware/software that can't be reset? Imagine how much fun it would be if, once you set your router admin password, it was permanent unless you could log back in to change it. Yeah, nobody has ever forgotten a password.

Well, they are about to learn a valuable financial lesson about how user-friendly design influences your bottom line. People (me) will just return the lock to the store where they bought it, and it will have to be shipped back to the factory, which will be very expensive for them: paying for all of the shipping to and from, resetting and repackaging the locks, and finally shipping them again to another store. Meanwhile I'll keep getting new locks at no cost until she gets it right.

Poor design.

Full HD 27" monitor 😍😍😍 when you can split your screen into two halves easily and there's no need to Alt+Tab

Wanted to try Fedora on my laptop and instead it removed Windows from it,

now installing Ubuntu 😪😪😪

Such a start to Sunday

Everybody still using Windows 7 is waking up to this, this morning.

Thank you, Microsoft, for doing sales for us 😁

Diary of an insane lead dev: day 447

pdf thumbnails that the app generates are now in S3 instead of saved on disk.

when they were on disk, we would read them from disk into a stream and then create a stream response to the client that would then render the stream in the UI (hey, I didn't write it, I just had to support it)

one of my lazy ass junior devs jumps on modifying it before I can; his solution is to retrieve the file from the cloud now, convert the stream into a base64 encoded string, and then shove that string into an already bloated viewmodel coming from the server to be rendered in the UI.

i'm like "why on earth are you doing that? did you even test the result of this and notice that rendering those thumbnails now takes 3 times as long???"

jr: "I mean, it works doesn't it?"

seriously, if the image file is already hosted on the cloud, and you can programmatically determine its URL, why wouldn't you just throw that in the src attribute in your html tag and call it a day? why would you possibly think that the extra overhead of retrieving and converting the file before passing it off to the UI in an even larger payload than before would result in a good user experience for the client???

it took me all of 30 seconds to google and find out that the AWS SDK has a GetPreSignedURL method for private files uploaded to S3, and you can set when the URL expires, and the application is dead at the end of the year.

JFC. I hate trying to reason with these fuckheads by saying "you are paid for your brain, fucking USE IT" because clearly these code monkeys do not have brains.
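For the curious: the AWS SDKs expose this directly (in boto3 it is `generate_presigned_url` on an S3 client). The idea behind it can be shown with a minimal standard-library Python sketch: the server signs the path plus an expiry timestamp with a secret, and whoever serves the file checks both the signature and the clock. This is a hypothetical HMAC scheme for illustration only, not AWS's actual SigV4 signing; `SECRET`, `sign_url` and `verify_url` are made-up names.

```python
import hashlib
import hmac
import time
from typing import Optional
from urllib.parse import parse_qs, urlencode, urlsplit

# Hypothetical server-side secret; it never leaves the server.
SECRET = b"server-side-secret"


def _signature(path: str, expires: int) -> str:
    # Sign the path together with the expiry so neither can be altered.
    return hmac.new(SECRET, f"{path}:{expires}".encode(), hashlib.sha256).hexdigest()


def sign_url(base_url: str, path: str, expires_in: int, now: Optional[float] = None) -> str:
    """Return base_url + path with an expiry timestamp and signature appended."""
    current = time.time() if now is None else now
    expires = int(current + expires_in)
    query = urlencode({"expires": expires, "sig": _signature(path, expires)})
    return f"{base_url}{path}?{query}"


def verify_url(url: str, now: Optional[float] = None) -> bool:
    """Accept the URL only if it has not expired and the signature matches."""
    parts = urlsplit(url)
    params = parse_qs(parts.query)
    try:
        expires = int(params["expires"][0])
        sig = params["sig"][0]
    except (KeyError, ValueError):
        return False
    current = time.time() if now is None else now
    if current > expires:
        return False
    return hmac.compare_digest(_signature(parts.path, expires), sig)


url = sign_url("https://cdn.example.com", "/thumbs/42.png", expires_in=300, now=1000)
print(verify_url(url, now=1200))  # True: still within the 5-minute window
print(verify_url(url, now=1400))  # False: expired
```

The signed URL goes straight into the `<img src>` attribute, so the thumbnail bytes never pass through the server's view model at all.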

Damn, how have I only just discovered localstack?

The ability to spin up and use SQS queues, S3 buckets, lambdas, Kinesis streams etc. for development without worrying about bankrupting myself if I screw something up is really quite liberating.

Been developing with AWS EC2 since 2015. Have deployed multiple apps in my Ubuntu servers. And have deployed Dockerized apps into Elastic Beanstalk. And used S3 to store files.

But just because I don't know AWS Lambda, the recruiter thought I wasn't qualified enough for the job.

FML.

When you realise that you no longer interpret S3 as a smartphone model, fork as an eating tool, or Eclipse as a natural phenomenon... :( :|

Can someone explain the pricing for DO, AWS, or any other cheap hosting? DO: $15 for 1 database, or for multiple databases on one server? AWS S3 or EC2? Or should I stick with Heroku at $7 (web app server) and $9 for a Postgres database?

Spend a summer building a neat webapp for Father, who subsequently receives an invoice of $2 for the S3 bucket. Father exclaims "What is this!?" then proceeds to block the account. Love you, Dad.

Only touching the topic slightly:

Back in school we had a Windows domain that everyone would log in to on every computer. You also had a small private storage space, accessible as a network share, that was mapped to a drive letter so everyone could find it. The whole folder containing everyone's private subfolders was shared, so you could see all the names, but each folder was only accessible to its owner.

At some point, though, I tried opening them again, but this time I could see the contents. That was quite unexpected, so I tried reading some generic file, which also worked without problems. Even the write command went through successfully. Beginning to grasp the severity of the misconfiguration, I verified with other user folders and even borrowed someone else's account.

Skipping the "report a problem" form, which would have been read in the next couple of hours at the earliest (I figured this was too serious), I went straight to the admin and told him what I had found. You can't believe how quickly he ran off to the admin room to look at and fix the permissions.

When you are trying to be supportive of a colleague but he sees it as condescension. BITCH I DON'T GIVE A SHIT ABOUT YOU, BUT UNFORTUNATELY I HAVE TO WORK WITH YOU, SO PLEASE KNOW THE BARE MINIMUM TO DO THE TASK. He also complained to others that he was offended. He was tryna learn React before knowing ES6 and Node.js, doesn't understand asynchronous code, and was strongly suggesting that our whole fucking team move to React, and I just suggested some topics to look into. I carried his ass once, and it seems like now I'll have to carry it once more :(

!rant

I just watched the new Mr. Robot Trailer.

I'm sure that s3 will be awesome.

The 2017-10-11 countdown has started.

Was interested to learn about OpenGL and WebGL. Just found out no fucking drivers exist for my graphics card on Ubuntu.

WTF AMD!!! At least provide some compatibility for your product if I am purchasing it!!!

EoS1: This is the continuation of my previous rant, "The Ballad of The Six Witchers and The Undocumented Java Tool". Catch the first part here: https://devrant.com/rants/5009817/...

The Undocumented Java Tool, created by Those Who Came Before to fight the great battles of the past, is a swift beast. It reaches systems unknown and impacts many processes, unbeknownst even to said processes' masters. All from within its lair, a foggy Windows Server swamp of moldy data streams and boggy flows.

One of The Six Witchers, the Wild One, scouted ahead to map the input and output data streams of the Unmapped Data Swamp. Accompanied only by his animal familiars, NetCat and WireShark.

Two others, bold and adventurous, raised their decompiling blades against the Undocumented Java Tool beast itself, to uncover its data processing secrets.

Another of the witchers, of dark complexion and smooth speech, followed the data upstream to find where the fuck the limited Excel sheets that feed The Beast come from, since its handlers only know that "every other day a new one appears on this shared Active Directory location". WTF, why do people so often have NPC levels of unawareness about their own fucking jobs?!?!

The other witchers left to tend to the Burn-Rate Bonfire, for The Sprint is dark and full of terrors, and some bigwigs always manage to shoehorn their whims/unrelated stories into an otherwise lean sprint.

At the dawn of the new year, the witchers reconvened. "The Beast breathes a currency conversion API" - said The Wild One - "And its claws and fangs strike mostly at two independent JIRA clusters, sometimes upserting issues. It uses a company-deprecated API to send emails. We're in deep shit."

"I've found The Source of Fucking Excel Sheets" - said the smooth witcher - "It is The Temple of Cash-Flow, where the priests weave the Tapestry of Transactions. Our Fucking Excel Sheets are but a snapshot of the latest updates on the balance of some billing accounts. I spoke with one of the priestesses, and she told me that The Oracle (DB) would be able to provide us with The Data directly, if we were to learn the way of the ODBC and the Query"

"We struck at The Beast" - said the bold and adventurous witchers, now deserving of the bragging rights to be called The Butchers of Jarfile - "It is actually fewer than twenty classes and modules. Most are API drivers. And less than 40% of the code is ever even fucking used! We found fucking JIRA API tokens and URIs hard-coded. And it is all synchronous and monolithic - no wonder it takes almost 20 hours to run a single fucking Excel sheet".

Together, the witchers figured out that each new billing account was morphed by The Beast into a new JIRA issue, if none was open yet for it. Transactions were used to update the outstanding balance on the issues for those billing accounts. The currency conversion API was called far too often, and its only purpose was to give a rough estimate of the total balance of each JIRA issue in USD, since each issue could have transactions in several currencies. The Beast would consume the Excel sheet, do some cryptic transformations on it, and for each resulting line call the currency API and upsert a JIRA issue. The secrets of those transformations were still hidden from the witchers. When and why The Beast would send emails was still a mystery.
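Going by the witchers' description (one currency API call and one upsert per line), a leaner transformation might group transactions per billing account and cache each currency's rate so it is fetched only once. A minimal Python sketch; everything here is hypothetical: the rates are made up, `usd_rate` stands in for the currency API (or The Red Port of Redis), and the actual JIRA upsert is left out.

```python
from collections import defaultdict
from functools import lru_cache

# Made-up rates standing in for the external currency API.
RATES_TO_USD = {"USD": 1.0, "EUR": 1.1, "BRL": 0.2}
api_calls = 0  # counts "API" hits so the effect of caching is visible


@lru_cache(maxsize=None)
def usd_rate(currency: str) -> float:
    """Hypothetical currency lookup; cached so each currency is fetched once."""
    global api_calls
    api_calls += 1
    return RATES_TO_USD[currency]


def plan_upserts(transactions):
    """Group (account_id, amount, currency) rows into one USD estimate per account."""
    balances = defaultdict(float)
    for account_id, amount, currency in transactions:
        balances[account_id] += amount * usd_rate(currency)
    # One upsert per billing account instead of one per spreadsheet line.
    return {account: round(total, 2) for account, total in balances.items()}


rows = [
    ("ACC-1", 100.0, "EUR"),
    ("ACC-1", 50.0, "USD"),
    ("ACC-2", 500.0, "BRL"),
    ("ACC-2", 10.0, "EUR"),
]
print(plan_upserts(rows))  # {'ACC-1': 160.0, 'ACC-2': 111.0}
print(api_calls)           # 3: EUR was fetched once, not twice
```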

As the Witchers Council approached an end and all were armed with knowledge and information, they decided on the next steps.

The Wild Witcher, known in every tavern in the land and by the sea, would create a connector to The Red Port of Redis, where every currency conversion is already updated by other processes and can be quickly retrieved inside the VPC. The Greenhorn Witcher is to follow him and build an offline process to update balances in JIRA issues.

The Butchers of Jarfile were to build The Juggler, an automation that should be able to receive a parquet file with an insertion plan and asynchronously update the JIRA API with scores of concurrent requests.
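The Juggler's core idea, many concurrent JIRA requests without flooding the API, can be sketched in Python with `asyncio` and a semaphore that caps how many requests are in flight. This sketch rests on assumptions: `upsert_issue` is a hypothetical placeholder for the real JIRA call, the insertion plan is a plain list of dicts rather than a parsed parquet file, and the limit of 10 is arbitrary.

```python
import asyncio

# Assumption: choose a limit the JIRA instance's rate limits tolerate.
MAX_CONCURRENCY = 10


async def upsert_issue(issue: dict) -> str:
    # Hypothetical placeholder for the real HTTP request to the JIRA API.
    await asyncio.sleep(0.01)
    return issue["key"]


async def juggle(plan: list[dict]) -> list[str]:
    sem = asyncio.Semaphore(MAX_CONCURRENCY)

    async def bounded(issue: dict) -> str:
        async with sem:  # at most MAX_CONCURRENCY requests in flight at once
            return await upsert_issue(issue)

    # gather runs all tasks concurrently and returns results in input order.
    return await asyncio.gather(*(bounded(issue) for issue in plan))


if __name__ == "__main__":
    plan = [{"key": f"BILL-{n}"} for n in range(25)]
    print(asyncio.run(juggle(plan))[:3])  # ['BILL-0', 'BILL-1', 'BILL-2']
```

The semaphore, rather than batching, keeps the pipe full: as soon as one request finishes, the next one starts.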

The Smooth Witcher, proud of his new lead, was to build The Oracle Watch, an order that would guard the Oracle (DB) at the Temple of Cash-Flow and report every qualifying transaction to parquet files in AWS S3. The Data would then be pushed to cross The Event Bridge into The Cluster of Sparks and Storms.

This Witcher Who Writes is to ride the Elephant of Hadoop into The Cluster of Sparks and Storms, to weave the signs of Map and Reduce and, with speed and precision, transform The Data into The Insertion Plan.

However, how exactly The Data is to be transformed is not yet known.

Will the Witchers be able to build The Data's New Path? Will they figure out the mysterious transformation? Will they discover the Undocumented Java Tool's secrets on notifying customers and aggregating data?

This story is still afoot. Only the future will tell, and I will keep you posted.

Me: We need to allow the team in the newly acquired subsidiary to access our docker image repositories.

Sec Guy: Why?

Me: So they can run our very expensive AI models that we have packaged into container images.

Sec Guy: There is a ban on sharing cloud resources with the acquired companies.

Me: So how are we supposed to share artifacts?!?

Sec Guy: Can't you just email them the Dockerfiles?

Me: Those images contain expensively trained AI models. You can't rebuild them from the Dockerfiles.

Sec Guy: Can't you email the images themselves?

Me: Those are a few gigabytes each. Won't fit in an email and won't even fit the Google drive / onedrive / Dropbox single file size limit.

Sec Guy: Can't you store them in an object storage like S3/GCS/Azure Storage?

Me: Sure

Proceed to do that.

Can't give access to the storage for shit.

Call the sec guy

Me: I need to share this cloud storage directory.

Sec Guy (with apparent amnesia): Why?

Me: I just told you! So they can access our AI docker images!

Sec Guy: There is a ban on sharing cloud resources with the acquired companies.

Me: Goes insane

Is there a law or something that you must attempt several alternative methods before the sec people realize that they are the problem?!?! I mean, frankly, one can get an executable artifact by fucking email and run it, but can't pull it from a private Docker registry? Why the fuck would they call it "security"?

well, there's an interesting error 😂😂😂😂😂

Stack Overflow clears our errors, and then I get a StackOverflowError

java 😂

I've kinda ghosted DevRant so here's an update:

VueJS is pretty good and I'm happy using it, but it seems I need to start with React soon to gain more business partnerships :( I'm down to learn React, but I'd rather jump into TypeScript or stick with Vue.

Webpack is cool and I like it more than my previous Gulp implementation.

Docker has become much more usable in the last 2 years, but it's still garbage on Windows/Mac when running an application that runs on Symfony...without docker-sync. File interactions are just too slow for some of my enterprise apps. docker-sync was a life-saver.

I wish I had swapped ALL links to XHR requests long ago. This pseudo-SPA architecture that I've got now (still server-side rendered) is pretty good. It allows my server to do what servers do best, while eliminating the overhead of reloading CSS/JS on every request. I wrote an ES6 component for this: https://github.com/HTMLGuyLLC/... - Frankly, I could give a shit if you think it's dumb or hate it or think I'm dumb, but I'd love to hear any ideas for improving it (it's open source for a reason). I've been told my script is super helpful for people who have Shopify sites and can't change the backend. I use it to modernize older apps.

ContentBuilder.js has improved a ton in the last year and they're having a sale that ends today if you have a need for something like that, take a look: https://innovastudio.com/content-bu...

I bought and returned a 2019 MacBook Pro with an i9. I'll stick with my 2015 until we see what's in store for 2020. Apple has really stopped making great products since Jobs died, and I can't imagine that he was THAT important to the company. Any idiot on the street can tell you several ways they could improve the latest models... for instance, how about feedback when you click buttons on the Touch Bar? How about a skinnier trackpad so your wrists aren't constantly on it? How about always-available audio and brightness buttons? How about better ports... How about a bezel-less screen? How about better arrow keys so you can easily click the up arrow without hitting shift all the time? How about a keyboard that doesn't suck? I did love Touch ID though, and the laptop was much lighter.

The Logitech MX Master 3 mouse was just released. I love my 2s, so I just ordered it. We'll see how it is!

PHPStorm still hasn't fixed a couple things that are bothering me with the terminal: can't reorder tabs with drag and drop, tabs are saved but don't reconnect to the server so the title is wrong if you reopen a project and forget that the terminal tabs are from your last session and no longer connected. I've accidentally tried to run scripts locally that were meant for the server more than once...

I just found out this exists: https://caniuse.email/

I'm going to be looking into Kubernetes soon. I keep seeing the name (docker for mac, digitalocean) so I'm curious.

AWS S3 Glacier is still a bitch to work with in 2019... wtf? Having to set up a Python script with a bunch of dependencies in order to remove all items in a vault before you can delete it is dumb. It's like they said "how can we make it difficult for people to remove shit so we can keep charging them forever?". I finally removed almost 2TB of data, but my computer had to run that script for a day.... so dumb...

So at the old job, I needed support for an issue relating to Amazon S3. We used a third-party Python plugin for sending files to our buckets, but had some pretty severe performance issues when trying a two-way sync.

Naturally, I sought help on StackOverflow, and was asked to share my config. Without much thought, I pasted the config file.

The next comment made me aware that our API ID and key were listed in this config (pretty ridiculous to keep such private info in the same file as the configuration, but oh well).

I edited my question and removed the keys, and didn't think about the fact that revisions are stored.

Two weeks later, my boss asks me if I know why the Amazon bill is $25,000 when it used to be under $100 😳

I've never been so scared in my life. Luckily, Amazon was nice enough to waive the entire fee, and I learned a little about protecting vital information.
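Two cheap habits would have prevented this. A minimal Python sketch, assuming the keys live in the `AWS_ACCESS_KEY_ID` / `AWS_SECRET_ACCESS_KEY` environment variables (the names the AWS SDKs read) and the config file keeps only non-secret settings; `redact` and `SECRET_MARKERS` are hypothetical helpers for masking anything key-like before pasting a config publicly. Since Stack Overflow keeps edit history, keys that were ever posted should be revoked and rotated, not just edited out.

```python
import os


def load_s3_credentials() -> tuple[str, str]:
    """Read the keys from the environment instead of the shareable config file."""
    try:
        return os.environ["AWS_ACCESS_KEY_ID"], os.environ["AWS_SECRET_ACCESS_KEY"]
    except KeyError as missing:
        raise RuntimeError(f"missing credential environment variable: {missing}") from None


# Hypothetical substrings that mark a config field as secret.
SECRET_MARKERS = ("key", "secret", "token", "password")


def redact(config: dict) -> dict:
    """Return a copy of `config` that is safer to paste publicly."""
    return {
        name: "***" if any(m in name.lower() for m in SECRET_MARKERS) else value
        for name, value in config.items()
    }


print(redact({"bucket": "my-bucket", "sync_mode": "two-way", "api_key": "AKIA...", "secret": "abc"}))
# {'bucket': 'my-bucket', 'sync_mode': 'two-way', 'api_key': '***', 'secret': '***'}
```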

It's rant time again. I was working on a project that exports data to a zipped CSV and uploads it to S3. I asked colleagues to review it; I guess that was a mistake.

Well, two of my lesser-known colleagues reviewed it, and one of their complaints was that it wasn't TypeScript. Well yes, good thing you have EYES. I'm not comfortable with TypeScript yet, so I wrote it in plain JavaScript on Node.js (which is absolutely fine).

The other guy said that I could stream into the zip file, which I didn't know was possible, so I asked, "That's impossible, right?" (I didn't know some compression algorithms work on streams.) He kept brushing over it and talking about why I should use streams. I have obviously used streams before, and if he had read my code, he would have seen that it streamed everything to the filesystem and afterwards to S3. He continued to behave like I was literally a child who had used Node.js for 2 seconds (I'm probably half his age, so fair enough). He also assumed that my code stored everything in memory, which also isn't true, as he would have known if he had read my code...

I never got an answer out of him and had to google it myself and research how zlib works, while he kept sending me obvious examples of how streams work. That annoyed me, because I had asked him a very simple question.
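What the colleague meant can be shown in a few lines. Compression formats like gzip use DEFLATE, the algorithm zlib implements, and DEFLATE consumes input incrementally. That means rows can be compressed as they are produced instead of after the whole CSV is written.

This is a minimal Python sketch with a hypothetical function name. It produces a single gzip stream (`.csv.gz`), not a multi-file `.zip` archive. In Node.js the same idea is `zlib.createGzip()` placed in a stream pipeline.

```python
import csv
import io
import zlib
from typing import Iterable, Iterator, Sequence


def gzip_csv_chunks(rows: Iterable[Sequence[str]]) -> Iterator[bytes]:
    """Yield a gzip-compressed CSV piece by piece, never holding the whole file.

    wbits=31 (16 + 15) makes zlib emit a gzip header and trailer, so the
    concatenated chunks form a valid .csv.gz that can be written to disk or
    handed to an uploader as they arrive.
    """
    compressor = zlib.compressobj(level=6, wbits=31)
    line = io.StringIO()
    writer = csv.writer(line)
    for row in rows:
        writer.writerow(row)
        chunk = compressor.compress(line.getvalue().encode("utf-8"))
        line.seek(0)
        line.truncate(0)
        if chunk:  # zlib buffers input, so many rows produce no output yet
            yield chunk
    yield compressor.flush()  # remaining compressed data plus the gzip trailer
```

Memory use stays bounded by one row plus zlib's internal window, whatever the size of the export.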

Now the worst part: we had a dev meeting, and both colleagues started talking about how they want solutions to be checked and discussed beforehand, while talking about my project as if it were a failure. But it literally wasn't, lol. I use streams for everything except the zipping part, because I didn't know that was possible.

I was super motivated for this project, but fuck this shit. I'm not sure why it annoys me so much. I wanted good feedback, not people assuming that because I'm young I can't fucking read documentation. I also hate that they brought it up by specifically pointing to my project; it could have been raised as a general thing. Fuck me. -

Three weeks into a new job, I learned that my predecessor (who resigned and was out the door two days after I started) didn't know how to secure S3 buckets, when all of our production image assets got replaced with elder porn.

The jury's still out on whether it was actually him the whole time. -

I'm thinking of buying a Samsung Galaxy S7 as a replacement for my Nexus 6P. From what I can tell, it ticks all the boxes: nice battery (3000mAh), 5GHz Wi-Fi, octa-core CPU, kernel source available, QHD display... However, it being sold at €350 (and only in the Netherlands) does introduce quite a few hurdles. For anyone who's owned this device: how long did you own it, and did any issues show up, especially hardware-related ones? The last Samsung device I owned was a Galaxy S3 Mini, which was a delight to use. Other than that, I don't really have any experience with the brand.

Another thing that piqued my curiosity: I still have 3 unused Raspberry Pis, as well as one LCD display (without touch). It got me thinking: the only things I really use my phone for on the go are calling, texting and listening to music via Bluetooth. Perhaps a Raspberry Pi or even an Arduino could take care of that? The devices I consume and produce most content on are my tablet and my PC anyway. -

So a typo brought down large swaths of S3. Programming is a merciless profession. No wonder I am stressed all the time.

-

Does anyone here just wake up and feel shitty for absolutely no reason? When I say absolutely no reason, I mean none whatsoever from the previous day, etc. I wanna know if it's just me 😅

-

When you want only 10 rows of a query result:

MySQL: select * from foo limit 10.. 😁

SQL Server: select top 10 * from foo.. 😁

PostgreSQL: select * from foo limit 10.. 😁

Oracle: select * from foo FETCH NEXT/FIRST 10 ROWS ONLY. 🌚

Oracle, are you trying to be more expressive/verbose? Because if that's the case, your understanding of verbosity is fucked up, just like your understanding of clean code, user experience, open source, productivity...

Etc. -
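For the record, the dialects in that list split like this:

- `FETCH FIRST n ROWS ONLY` is the SQL-standard spelling (SQL:2008). PostgreSQL accepts it too, and Oracle supports it from 12c.
- `LIMIT n` is the MySQL/PostgreSQL/SQLite dialect.
- `TOP n` is SQL Server's.

Whatever the syntax, without an `ORDER BY` the database may return any 10 rows it likes. Here is a small runnable sketch using Python's built-in `sqlite3` module and a throwaway table `foo`.

```python
import sqlite3
from typing import List, Tuple


def first_rows(conn: sqlite3.Connection, n: int) -> List[Tuple[int]]:
    """Return the first n rows of foo; ORDER BY makes "first" well defined."""
    return conn.execute("SELECT id FROM foo ORDER BY id LIMIT ?", (n,)).fetchall()


# Throwaway in-memory table with ids 1..25 for the demonstration.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE foo (id INTEGER PRIMARY KEY)")
conn.executemany("INSERT INTO foo (id) VALUES (?)", [(i,) for i in range(1, 26)])
```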

A friend came to me asking whether I wanted to do a project in C++ (someone had asked him to find a C++ guy).

Needing money, I didn't refuse. I'm a Java developer with zero C++ skills, but I wanted to give it a try.

So the project started; it was a plugin for Rhinoceros (a 3D graphics app).

The plugin was simple: some views, some services to upload a file to S3 and some API calls, nothing complex..

So I ended up working on the project together with my friend (a web dev).

So, long story short, we had a lot of issues, but considering we both had no knowledge of C++, we were really lucky to finish the product almost on time (3 days late).

I did no memory management, even though I'd read that we have to do that ourselves and that C++ doesn't have a garbage collector.

But the plugin worked great even without a garbage collector.

Had a lot of issues with string manipulation, which almost drove me crazy.

PS: I posted here before taking the project to ask whether it was a good idea, and got some positive and some negative replies, but I deleted the post since I thought I was breaking the NDA I signed 😂😂

PS2: just passed OCAJP 8 last week with a great score 😃 -

>Get Java "From zero to hero" book at the age of 12

>Follow along and despair at all the Java jargon

>write small programs for fun

>ff to 14yo

>Get my first Android phone (Galaxy S3)

>Get Android 4.0 book

>Follow along and despair at all the Android jargon

>Develop small apps for fun

>Learn Java, C and Python for the rest of high school

>discover functional programming (Erlang/Elixir) towards the end of high school

>love_at_first_sight.jpg

>Learn said language

>Find first job and current job right after that

>happy

-

What the hell is it with WordPress people? Just read a rant where this dude calls himself a "developer". What the hell, you're not a developer, stop calling yourself a developer. All you do is click and drag pictures into squares and type plain English into text boxes, using software that an actual developer actually did develop. You don't see me on a cook rant calling myself a cook. You know why? Cuz I can't cook. Leave, go learn a respectable language and get back to me. And no, HTML is not a language.

-

I have a big progress/update meeting to lead with my team tomorrow.

Our investor has "ideas" on features and things that will significantly change the information we have to include in our code.

We are supposed to launch Jan 1, 2019.

He says, "I'll call you tonight to give you the details so you'll be ready for tomorrow's meeting." .........

............

...........

Yep, never calls.

Fucking awesome! Can't wait to tell my team tomorrow: "Glad you all came in today. Looks like we have to change some things, I'm just not sure what yet."

Maybe I'll order pizza and beer to the office and we'll all play video games until he shows up, and then say, "If you aren't going to take this seriously, why should we?"

Fuckers!!!!!!!!!! -

Now my client does not want to rely on Amazon S3 because of the one outage it ever had, a couple of weeks ago or whenever, I've already forgotten. So my dumbass blurts out, "Well, we could always just back up to some other image or file storage service." But now I'm expected to implement this right away, when I really haven't thought about it at all. I mean, I would have to write some sort of failover and some sort of daily syncing mechanism. I guess I should forget about any direct-upload-to-S3 code I have written; really, I have to wrap all of the image and file handling stuff in my own solution. That will actually be very nice when it's done, and I could use it on other projects, but it's quite a lot of work for something I don't feel we really need at this stage of development. Just because you're using stuff in production that has an enormous red TEST label in the way of the UI doesn't mean I can code bulletproof software any faster.
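The "wrap all the file handling in my own solution" plan usually means coding against a small storage interface and putting a failover layer behind it. This is a hedged Python sketch of that shape.

- All class names are hypothetical.
- The in-memory backends stand in for S3 and the backup provider.
- Writes go to every reachable backend.
- Reads fall back to the next backend.
- Keys a backend missed are recorded for the later sync job.

```python
from typing import Dict, List, Protocol


class BlobStore(Protocol):
    """The small interface the app codes against instead of calling S3 directly."""

    def put(self, key: str, data: bytes) -> None: ...

    def get(self, key: str) -> bytes: ...


class InMemoryStore:
    """Stand-in backend for the sketch; real ones would wrap S3 and the backup provider."""

    def __init__(self) -> None:
        self.objects: Dict[str, bytes] = {}
        self.available = True

    def _check(self) -> None:
        if not self.available:
            raise ConnectionError("store unavailable")

    def put(self, key: str, data: bytes) -> None:
        self._check()
        self.objects[key] = data

    def get(self, key: str) -> bytes:
        self._check()
        return self.objects[key]


class FailoverStore:
    """Writes to every reachable backend; reads from the first one that has the key."""

    def __init__(self, backends: List[BlobStore]) -> None:
        self.backends = backends
        self.missed: List[str] = []  # keys some backend missed, for a later sync job

    def put(self, key: str, data: bytes) -> None:
        written = 0
        for backend in self.backends:
            try:
                backend.put(key, data)
                written += 1
            except ConnectionError:
                self.missed.append(key)
        if written == 0:
            raise ConnectionError(f"no backend accepted {key}")

    def get(self, key: str) -> bytes:
        last_error: Exception = KeyError(key)
        for backend in self.backends:
            try:
                return backend.get(key)
            except (ConnectionError, KeyError) as exc:
                last_error = exc
        raise last_error
```

Mirroring every write keeps the backup current. The cost is one extra upload per file; the alternative is a nightly copy with a window of possible data loss.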

-

Just saw some production-level code: all the fucking variables are in capital letters 🤪😵😵😵

-

My Unix class

👨‍💻 Using a nice-looking theme in VS Code to edit my bash script

Prof: That's a nice-looking theme (he thought it was a Vim theme)

Me: Um.. um.. It's VS Code, the new guy in town

Prof: Uh! 🤔

Me: (5 sec silence) Um, it's from Microsoft

Prof: GET OUT! -

Today was a SHIT day!

Working as ops for my customer, we maintain several tools in different environments. Today was the day my fucking Kubernetes cluster made me rage quit, AGAIN!

We have MongoDB running on Kubernetes with daily backups, and the main node crashed due to a full PVC on the cluster.

Full PVC => Pod doesn't start

Pod doesn't start => You can't get the live data

No live data? => Need Backup

Backup is in S3 => No Credentials

Got Backup from coworker

Restore Backup? => No connection to new MongoDB

3 FUCKING HOURS WASTED FOR NOTHING

Got it working in the end... Now we need to file an incident in the incident management software. Tbh that's the worst part.

And the team responsible for the cluster said monitoring won't be supported because it's unnecessary.... -

It finally came! Super excited.

Yes, it takes amazing pictures even in the dark, and the radar is super cool. Can't wait to dig into what I can do with the radar.

-

So Mr. Robot S3 has started and is already 6 episodes in. Why hasn't the hype built up this time like last season?

-

My Gear S3's charger all of a sudden decided not to stop charging when the watch was at 100%, so I came home to a watch that was flaming hot and going crazy with error messages saying it had overheated, its battery going down WHILE charging.

Tried it a few more times; it never stops at 100% anymore.

Samsung, you fucking retards, thanks for trying to burn my house down (again) -

What to do with this Android Studio, taking up 2.3 gigs of RAM 😪😪😪

Good thing I upgraded my RAM from 4 gigs to 8 gigs before getting into Android development 😪 -

--- s3-r-w.us-west-2.amazonaws.com ping statistics ---

44 packets transmitted, 16 received, 63,6364% packet loss, time 43544ms

rtt min/avg/max/mdev = 258.995/280.765/377.149/37.359 ms

Sounds like a good day to grab a ball and go outside. -

So the library we used to interact with S3 had this very cool delete function (pseudocode):

function delete(bucket, file)

request = buildRequest(bucket, file)

sendRequest(request)

OK

Now imagine how many problems we ran into without the delete permission, because we assumed this library actually returned an error code. -
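The bug in that delete pseudocode is that the request is built and sent but the response is never inspected. As a result, a 403 looks exactly like success.

A minimal Python sketch of the fix, where `send_request` and `Response` are hypothetical stand-ins for the library's internals:

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Response:
    status: int
    body: str = ""


class DeleteFailed(Exception):
    """Raised when the storage service rejects a delete."""


def delete(send_request: Callable[[str, str], Response], bucket: str, key: str) -> None:
    """Send a delete and fail loudly unless the service reports success.

    S3 answers a successful DeleteObject with 204 No Content and a missing
    s3:DeleteObject permission with 403 Access Denied.
    """
    response = send_request(bucket, key)
    if not 200 <= response.status < 300:
        raise DeleteFailed(
            f"delete of {bucket}/{key} failed with HTTP {response.status}: {response.body}"
        )
```

A success status still does not prove the object existed, since S3 also returns 204 for a key that was never there. For comparison, boto3 raises `botocore.exceptions.ClientError` on error responses instead of returning quietly.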

Awesome, at least devRant is up and running. Where else would we all go when the Internet is falling apart? Go @dfox!

-

Upvote if you are one of the lucky devs whose company uses Google Cloud Storage or any other AWS S3 competitor :)

-

What cloud service does devRant use?

AWS or Azure?

And how much do you pay a month in maintenance costs?

What do you use to store data? MongoDB, DynamoDB, Oracle?

Or S3 streams?

Just wondering. -

Can we please make an Over-Engineered section....

This happened a couple of weeks ago...

Hey platform engineering team, we need an environment spun up. It's a static site, THAT'S IT!

PE team's response: Okay, give us 2 weeks. We need to write some Terraform, update some Terraform module, need you to sign your life away as the AWS account owner, then use this internal application to spin up a static site, then customize the YAML file to use Nuxt, then we will need you to use this other internal tool to push to prod...

ME: IT'S a static site... all I need is an S3 bucket, CloudFront and CircleCI. -
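The "just a bucket" deploy step from the static-site rant, which CI would run, can indeed be small. This is a hedged Python sketch, assuming a boto3 S3 client and an already-built site directory; the bucket name and paths are placeholders.

It uploads every file with an explicit `Content-Type`. Without one, S3 stores the file as `binary/octet-stream`, and browsers may download it instead of rendering it. CloudFront setup and cache invalidation are out of scope here.

```python
import mimetypes
from pathlib import Path
from typing import Any, List, Tuple


def plan_site_upload(build_dir: str) -> List[Tuple[str, str, str]]:
    """List (local path, S3 key, Content-Type) for every file in a built site."""
    root = Path(build_dir)
    plan = []
    for path in sorted(p for p in root.rglob("*") if p.is_file()):
        key = path.relative_to(root).as_posix()
        content_type, _ = mimetypes.guess_type(path.name)
        plan.append((str(path), key, content_type or "application/octet-stream"))
    return plan


def upload_site(s3_client: Any, bucket: str, build_dir: str) -> int:
    """Upload the site with explicit Content-Type headers; returns the file count."""
    plan = plan_site_upload(build_dir)
    for local_path, key, content_type in plan:
        s3_client.upload_file(local_path, bucket, key, ExtraArgs={"ContentType": content_type})
    return len(plan)
```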

When you know your product sucks and even you won't support it. Too bad for those two Windows Phone users left in the world.

Working on a project. Forced to use XAML. I hate XAML; C# is so much more efficient/easier. Now at least I have an excuse. 😤

-

If you're going to build an open-source command-line tool, please, for fuck's sake, publish the Linux x64 artifact to the world. I don't want to waste half my day setting up a box just to compile your project.

I know you build the artifact; I see it in your public CI system. The badge at the top of your GitHub repo even says it's good today. So seriously, why can't you just publish that binary to S3 so I don't have to waste my day ranting? -

It's been a while DevRant!

Straight back into it with a rant that no doubt many of us have experienced.

I've been in my current job for a year and a half and accepted the role at lower pay than I normally would, as it's in my home town and development jobs are scarce.

My background is in full-stack development, and I have a wealth of AWS experience, secure SaaS stacks, etc.

My current role is PHP Systems Developer, a step down from the senior role I was in before, but at a much bigger company, closer to home, with seemingly a lot more career progression.

My job description lists the following as desired:

PHP, T-SQL, MySQL, HTML, CSS, JavaScript, jQuery, XML

I am also well versed in various JS frameworks, PHP frameworks, Java and C#, as well as other things such as:

Xamarin, Unity3D, Vue, React, Ionic, S3, Cognito, ECS, EBS, EC2, RDS, DynamoDB, etc. etc.

A couple of months in, I took on all of the external web sites/apps, which historically sit with our Marketing department.

This was all over the place, and I brought it into some sort of control. The previous marketing developer hadn't left an AWS access key, so our GitLab instance was buggered... that's one example of many, many, many things I had to work out and piece together, above and beyond my job role.

Done with a smile.

I did a handover to the new marketing dev, who still avoids certain work, meaning it gets put onto me. I have had many a conversation with my line manager about how this is above and beyond what I was hired for, and he agrees.

For the last 9 months, I have been working on a Java application with ML on the back end, completely separate from what my teammates do daily (tickets, reports, BI, MI, etc.), in a multi-threaded language, doing much more complicated work.

This is a prototype that had been in development for 2 years before I got my hands on it. I needed to redo the entire UI, as well as add so many new features it was untrue (in 2 years there had been no proper requirements gathering).

I was initially tasked with optimising the original code, which used a single model and controller :o Then, after the first discussion with the product owner, it was clear they wanted a lot more features added, and that no requirements gathering had ever been done effectively.

Throughout the last 9 months, arbitrary deadlines have been set, and I have pulled out all the stops, often working in my own time without compensation to meet deadlines set by our director (who sits below the C-suite: CEO, CTO, etc.).

During this time, it became apparent that they want to take this product to market as a SaaS solution. Given my experience, I was excited about this, and I have developed quite a robust, high-level view of the infrastructure we need, the Lambda/serverless functions and services we would want to set up, how we would use API Gateway and Cognito with custom claims, etc. etc. etc.

Tomorrow, I go to London to speak with a major cloud company (one of the big ones) to discuss potential approaches & ways to stream the data we require etc.

I love this type of work; however, it is 100% far above my current job role, and the level of pay we are given (junior/mid-level PHP dev at best) is nowhere near suitable for what I am doing and have been doing all this time: proven, consistent work.

In every conversation I have had with my line manager, he tells me I'm his best employee, that he doesn't want to lose me, and that I am worth the pay rise (carrot dangling, maybe?).

Generally I do believe him, as I too have lived in the culture of this company, and there is A LOT of technical debt, especially so with our director, who has no technical background at all.

Appraisal/review time came around, and I put in a request for a pay rise, along with market rates, lots of details and rates sourced from multiple places.

As well as that, I also had a job offer, which I rejected despite it paying a lot more for the same role as my job description (I rejected it due to certain things that didn't sit well with me during the interview).

I used this in my review and stated that I had already rejected it, as this is where I want to be, but that I wanted to use the offer as part of my market-rate research for the role I am employed to do, not the one I am actually doing.

The pay rise I asked for was only a small one, really (5k, and we bring in millions), to bring me in line with what is suitable for my skills in the job I was employed to do alone.

It was rejected due to a period of sickness, despite my having made up ALL that time without compensation, as mentioned.

I'm now unsure what to do, as this was rejected by my director after my line manager had agreed to it, before it even got to the COO etc.

Even though he sits behind me, sees all the work I put in and creates the arbitrary deadlines that I work without compensation to meet, because I was sick I'm not allowed a pay rise (doctor's notes etc. supplied).

What would you do in this situation? -

Around 7 months ago, my friend and I started working for a startup as interns. It was a work-from-home internship. They first told us to research CDNs and study REST APIs. When we finished with that, they told us to make a personal storage portal ( ) using CDNs, which we did. After 3 months of work, when we presented it, they told us to scrap the project. What they wanted was cloud storage space, which I am now implementing using Amazon S3. FUCK THIS FUCKING STARTUP

-

mfw

> 1 year into a React.js project with a 10+ member team

> PM panics over the latest Apache statement

> PM: "fuck, rewrite it in Angular 4 : /" -

Time for a rant about shitstaind, suspend/hibernate, and if there's room for it at the end probably swappiness, and Windows' way of dealing with this.

So yesterday I wanted to suspend my laptop like usual, to get those goddamn fans to shut up when I'm sleeping. Shitstaind.. pinnacle of init systems.. nope, couldn't do it. Hibernation on the other hand, no problem mate! So I hibernated the laptop and resumed it just now. I'm baffled by this.

I'll oversimplify a bit here (but feel free to comment how there's more to it regardless) but basically with suspend you keep your memory active as well as some blinkenlights, and everything else goes down. Simple enough.. except ACPI and I will not get into that here, curse those foul lands of ACPI.

With hibernation you do exactly the same, but on top of that, you also resume the system after suspending it, and freeze it. While frozen, you send all the memory contents to the designated swap file/partition. Regarding the size of the swap file, it only needs to be big enough to fit the memory that's currently in use. So in a 16GB RAM system with 8GB swap, as long as your used memory is under 8GB, no problem! It will fit. After you've moved all the memory into swap, you can shut down the entire system.
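The hibernation rule of thumb (the memory in use has to fit into swap) is easy to check on a running Linux box from `/proc/meminfo`.

This rough Python sketch makes two simplifications:

- It approximates "in use" as `MemTotal - MemAvailable`, since page cache can be dropped instead of written out.
- It ignores that the kernel compresses the hibernation image and tries to keep it under `/sys/power/image_size`.

Treat the result as a conservative estimate. The function names are hypothetical.

```python
from typing import Dict


def parse_meminfo(text: str) -> Dict[str, int]:
    """Parse /proc/meminfo-style text into {field: value}; most values are in kB."""
    values: Dict[str, int] = {}
    for line in text.splitlines():
        name, _, rest = line.partition(":")
        parts = rest.split()
        if parts:
            values[name.strip()] = int(parts[0])
    return values


def hibernation_fits(meminfo_text: str) -> bool:
    """True if the memory currently in use would fit into the free swap space."""
    info = parse_meminfo(meminfo_text)
    used_kb = info["MemTotal"] - info["MemAvailable"]
    return used_kb <= info["SwapFree"]
```

On a real system you would call it as `hibernation_fits(open("/proc/meminfo").read())`.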

Now here's the problem with how shitstaind handled this... It's blatantly obvious that hibernation is an extension of suspend (sometimes called S3, see e.g. https://wiki.ubuntu.com/Kernel/...) and that therefore the hibernation shouldn't have been possible either. The pinnacle of init systems.. can't even suspend a system, yet it can hibernate it. Shitstaind sure works in mysterious ways!

On Windows people would say it's a hardware issue though, so let's talk a bit about that clusterfuck too. And I'll even give you a life hack that saves 30GB of storage on your Windows system!

Now, I only use Windows 7, next to my Linux systems. The reason is that it's the least fucked-up version of Windows in my opinion, and while it's falling apart in terms of web browsing (not that you should browse on an EOL system), it's good enough for le games. With that out of the way... When you install Windows, you'll find that out of the box it uses around 40GB of storage. Fairly substantial, and only ~12GB of it is actually system data. The other 30-ish GB are used by a hibernation file (C:\hiberfil.sys, sized relative to your RAM; on Windows 7 the default is about 75% of it) and the page file (C:\pagefile.sys, a little less than your total RAM.. don't ask me why). Disable both of those and on a 16GB RAM system, you'll save around 30GB of storage. You can thank me later.

What I find strange, though, aside from this obscene amount of consumed storage, is that the pagefile and the hibernation file are handled separately. In Linux both of those are handled by the swap, and it's easy to see why. Both are enabled by the concept of virtual memory. When hibernating, the "real" memory locations are simply being changed to those within swap. And what is the pagefile? Yep.. virtual memory. It's one thing to take an obscene amount of storage, but only Windows would go the extra mile and do it twice. Must be a hardware issue as well.

Oh, and swappiness. This is a concept that many Linux users seem to misunderstand. Intuitively you'd think that swappiness sets the percentage of memory use at which the kernel starts swapping, but this is not true. Instead, it's a ratio of sorts: it tells the kernel the rough relative cost of swapping out anonymous memory versus evicting file-backed page cache. Under memory pressure, pages get reclaimed from one or the other depending on how likely they are to be used again soon, and with swappiness you're tilting that choice toward keeping either the page cache or anonymous memory in RAM. A value of 100 means both are treated as equally expensive (the range is 0-100, or 0-200 on newer kernels), so the default of 60 already takes into account that swap lives on a slower disk. When your system is swapping only and exactly the memory that's unlikely to be used again, you know you've succeeded. And even on large-memory systems, having some swap is usually not a bad idea. Although I'd definitely recommend putting it on an SSD in a partition, so that there's no filesystem overhead and it's still sufficiently fast, even when several GB of memory are being dumped in. -

1. Working on a 20-day-old version of a repo without pulling the changes first

2. Then blaming me for not telling him

3. Ultimately sending me a screenshot of his code to incorporate into mine (code he didn't even write himself, but asked a coworker to write)

WTF DUDE. At least you could have realised your mistake and not blamed me for it -

Learn:

Docker, Kubernetes, Ceph, S3 and AWS in general. Not really dev per se, but still worthy technical goals. -

After working with a coworker on some odd issues, I finally decided to check on the actual ticket he needed assistance with.

From now on, we will optimize our HTML for aesthetic appeal in Chrome's dev tools. display:none is verboten.

Sometimes I wonder if I've had a stroke or if I've died and am in purgatory. -

!rant

After 4-5 months of learning web dev, I am trying to build my first full-stack web application (a simple chat app).

My stack:

Frontend:

Vue.js + Materialize

Backend:

Express (handling routes)

Mongoose/MongoDB (database)

Socket.IO (WebSockets for real-time connections)

JWT

I dreamt of this 2 months ago, when I built a basic front end using HTML and CSS, and now porting it to Vue is a breeze.

Wish me luck, and let's hope it doesn't become one of my unfinished projects (my university semester exams are coming up, so I'll have to complete this as fast as possible). I am also learning DSA + STL and aim to learn basic Python syntax before the holidays so that I can focus my time on ML during them. It's so fucking overloaded that I have my doubts ::(( -

0100100101100110001000000111100101101111011101010010000001110101011100110110010101100100001000000110000100100000011101000110111101101111011011000010000001110100011011110010000001100011011011110110111001110110011001010111001001110100001000000111010001101000011010010111001100100000011110010110111101110101001000000110000101110010011001010010000001101100011000010111101001111001001000000111000001101001011001010110001101100101001000000110111101100110001000000111001101101000011010010111010000100000001010000110101001110101011100110111010000100000011011000110100101101011011001010010000001101101011001010010000001111000010001000010000000001101000010100100100101100110001000000110111001101111011101000010000000101000011101110110100101100011011010000010000001101001011100110010000001101001011011010111000001101111011100110111001101101001011000100110110001100101001000000110001101100001011101010111001101100101001000000111010001101000011010010111001100100000011010010111001100100000011101000110111101101111001000000110110001101111011011100110011100101001001000000110111001101001011000110110010100100000011101110110111101110010011010115

-

Now... I understand 2FA is there to make things more secure, and I do appreciate it. BUT can we please work out a damn solution for people who work at an agency for corporate clients that only have one shared account across the agency, tied to one phone number or mobile app?

What if people are on leave or sick? I need the stupid 2FA to be able to log in and work. uhhhhhhh..... -
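Whether the 2FA complaint above has a workaround depends on the app. If the shared account's "mobile app" is a standard authenticator (TOTP, RFC 6238), the codes are computed from a shared seed plus the clock. The seed is shown once during enrollment as a QR code or base32 text.

That is why some teams keep the seed in a shared password vault instead of on one phone. Whether that is acceptable under the client's security policy is a separate question, since it weakens the second factor.

A minimal Python sketch of the computation follows, using the HMAC-SHA1 / 30-second / 6-digit defaults most apps use. The function name is hypothetical.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def totp(secret: bytes, for_time: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    """RFC 6238 time-based one-time password with HMAC-SHA1."""
    t = time.time() if for_time is None else for_time
    counter = int(t // step)  # number of 30-second steps since the Unix epoch
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # RFC 4226 dynamic truncation
    code = (struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF) % (10 ** digits)
    return str(code).zfill(digits)
```

Seeds exported from an authenticator are usually base32 text, which `base64.b32decode` turns into the bytes this function expects.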

OK, so I'm a back-end kind of guy and have had to deal with making our site again and again and again and fu#@ing again.

Who the hell thought up using SVG icons? I had my first look at them today and I'm not sure if I hate them or love them. Any thoughts? -

I can't believe this shit happened in time for this week's rant!

Here it goes.

I have a partitioned table on AWS Athena. In earlier versions of this project, whenever I wrote something to a new partition, a simple `MSCK` query worked (and keep in mind I am NOT deleting anything)!

Now, in the PR for the latest (major) release, my so-called team lead tells me to change it to an `ALTER TABLE`. I was like, fine, but I did not add the S3 location to it, because it was NOT NEEDED. The TL asks me to add the location as well. I try to convince this person that it's not needed, but I lose. So there it is in production, all wrong.

Today I notice that the table is all fucked up. I bring this up in the stand-up. The main boss asks me to look into it, which I do, and I figure out what the issue is. This TL looks at it and says I need to change the location. I put my foot down.

"NO. What I need is to remove the bloody location. IT'S NOT NEEDED!"

TL's like, "Okay. Go ahead"

Two things:

1. It's your fault that there's this problem in production.

2. Why the fuck are you looking into this when I was clearly told to do so? It's not like you have nothing to do! -
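For context, the two Athena approaches in that story differ like this:

- `MSCK REPAIR TABLE` makes Athena scan the table's S3 location for Hive-style `key=value` folders.
- `ALTER TABLE ... ADD PARTITION` registers one partition explicitly and takes an optional `LOCATION`.

A small Python sketch that builds both statements follows. Table and column names are placeholders, and values are interpolated without escaping, so only pass trusted input.

My understanding is that leaving out `LOCATION` makes the partition point at the Hive-style path under the table location, which matches the author's position. That default is worth confirming in the Athena documentation for your own table layout.

```python
from typing import Dict, Optional


def msck_repair(table: str) -> str:
    """Ask Athena to discover Hive-style (key=value) partition folders by itself."""
    return f"MSCK REPAIR TABLE {table}"


def add_partition(table: str, partition: Dict[str, str], location: Optional[str] = None) -> str:
    """Register one partition explicitly; LOCATION is only emitted when given.

    Values are interpolated as-is, so only pass trusted input.
    """
    spec = ", ".join(f"{column} = '{value}'" for column, value in partition.items())
    statement = f"ALTER TABLE {table} ADD IF NOT EXISTS PARTITION ({spec})"
    if location is not None:
        statement += f" LOCATION '{location}'"
    return statement
```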

Who wants to build the European search engine with me?

- Rust or Go for high-performance crawling

- LLM (Mistral / Mixtral / Zephyr) for reasoning and answering

- Vector DB (Qdrant / Weaviate) for semantic memory

- Retrieval-Augmented Generation (RAG) instead of classic indexing

- Postgres / SQLite / S3 for smart buffer storage

- LLM-powered garbage filter (kill SEO sludge)

- Nightly retraining & hot-swappable models

- Minimalist frontend (SvelteKit or Next.js)

- No chatbot behavior. No endless replies. Just answers. -

Robbery of the near future:

A broke dev decided to commit a robbery by stealing the whole DAVE-2 system from the Tesla S3 model.

When asked why he chose such a drastic path, he said, "My client wanted the training to be ready within 2 days and I couldn't arrange that much GPU at such short notice, so I decided to do what I did. *ignored(But I reinstalled it back in the car)*

As you can see, clients have turned into money-hungry, cock-sucking, fist-fucking, God-knows-what-fetish-wanting pieces of shit."

Over to you, Clara

-

So it turns out that a lot of writes to S3 are slow, regardless of whether you spent the time rewriting your code from SAX to JAXB, then to Go, then finally to C++, thinking the problem was always your code.
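One likely reason rewriting the client code didn't help with the slow S3 writes: each S3 write is a separate HTTP request. With many objects, the per-request round trip tends to dominate regardless of language.

Two common mitigations are writing fewer, larger objects and issuing the writes concurrently. This hedged Python sketch shows the latter.

- `put_object` is a hypothetical callable, for example a thin wrapper around a boto3 client's `put_object`; boto3 documents its low-level clients as thread-safe.
- The worker count is arbitrary.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List


def upload_all(put_object: Callable[[str, bytes], None],
               objects: Dict[str, bytes],
               workers: int = 16) -> List[str]:
    """Issue many independent PUTs concurrently instead of one after another.

    Returns the uploaded keys in sorted order; an exception raised by any
    put_object call is re-raised here instead of being silently dropped.
    """
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = {key: pool.submit(put_object, key, body) for key, body in objects.items()}
    # Leaving the with-block waited for every upload; result() surfaces failures.
    for future in futures.values():
        future.result()
    return sorted(futures)
```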

-

Back in college I started a project to manage my MTG and Yu-Gi-Oh! cards. I wanted a database for them with a graphical manager (there are already some older ones on GitHub, but I don't like the feel of them).

But between college work, the difficulty of building a SQL database schema for them and the fact that I had hundreds of cards I'd need to enter into the database manually, I dropped the project after the 2 friends working with me also dropped out of it.

But recently I found this Hackster project (https://hackster.io/mportatoes/...), and I'm fairly sure I could retrofit it to use OpenCV to at least read the card title reliably, allowing me to scrape the rest of the information from some wikia page as each new card is scanned. I'd just have to pick up a bin of Legos at Walmart lol

And I previously learned about MongoDB, which would make storing the cards in a DB a lot easier than dealing with SQL.

I might pick this back up again, but when I first started I had 2 friends working on it with me who both dropped out before I finally gave up, so starting by myself might be a little demotivating. -

My workstation, can't imagine working without it.

Waiting for devRant stickers, even left some space for them.

Proudly running Ubuntu GNOME 16.04

-

Today from 9am till now, 1am (picture this time in your head), I was getting cumganged ✌️ by Amazon

Tryna set up an AWS S3 bucket

And CloudFront

I died at least 17 times in the process

I can't anymore -

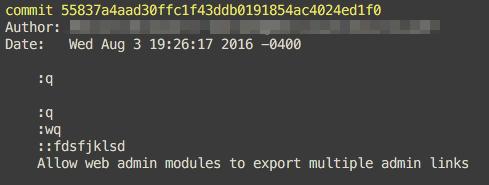

Those people who don’t even understand the commit message

Who commits using the commit message “commiting”? -

Don't understand file perms?

No prob...just sudo chmod -R 0777 /

and don't forget to make all your S3 buckets public! -

Amazon's stock price is a better uptime indicator than their fucking status page.

https://google.co.uk/search/... -

I don't like it when clients decide which tech to use in a project. I've gotten some weird tech requests, like:

1. Move the existing database from PostgreSQL to Hadoop because Hadoop is Big Data (it's kind of like moving from Amazon RDS to Amazon S3; just why? Have you indexed and clustered your PostgreSQL tables?)

2. Move from MySQL to PostgreSQL because MySQL causes deadlocks (maybe their previous developer was just a fucking moron)

In these situations we just explain why we won't use that and propose an alternative solution. If they insist on their solution, we either ignore it or decide not to continue the project. -

Here, code reviews are not happening 🙁☹️

When an actual error hits prod, we dig into the logs and figure out the reason.

The project is unstable and still in the development phase, so far too many requirements come in every month, each with deadlines.

Deadlines are mostly 1 to 2 weeks.

Senior devs mostly merge PRs without reviewing them.

I did get lots of opportunities thanks to the various requirements, though; I learnt AWS DynamoDB, S3 and a few things about EC2.

But I think I'm lacking in coding standards, which need to be learned, because my code is not getting reviewed.

And it's not only about coding: we have to create a Jira ticket for each task decided in the scrum and assign it to ourselves.

In the name of scrum, there are 1- to 2-hour meetings where they start brainstorming about new requirements and how we are going to implement them.

What should I do to make my code cleaner and more professional? -

Not the biggest hurdle, but I felt like THE BOSS on finishing the task.

I had to create a branch in a repository for each folder in an S3 bucket and commit each folder into its respective branch. There are around 29 folders in the bucket, so the task would have taken my entire day. Instead, I completed it in less than half an hour. Shell scripting is the coolest tool; it saved my entire day, and I indeed felt like THE BOSS. -
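The branch-per-folder script itself isn't shown, but the loop it automates might look like this Python sketch. It rests on three assumptions:

- The bucket's object keys have already been listed, for instance with `aws s3 ls --recursive` or a boto3 paginator.
- Each folder has been synced into the working tree, e.g. with `aws s3 sync s3://<bucket>/<folder> <folder>`.
- `main` is the base branch.

The helper names are hypothetical.

```python
from typing import List


def top_level_folders(keys: List[str]) -> List[str]:
    """Distinct first path segments of S3 keys, i.e. the bucket's top-level "folders"."""
    return sorted({key.split("/", 1)[0] for key in keys if "/" in key})


def branch_commands(folder: str, base: str = "main") -> List[List[str]]:
    """git commands (as argv lists) that commit one synced folder onto its own branch."""
    return [
        ["git", "checkout", base],          # start every branch from the same base
        ["git", "checkout", "-b", folder],  # one branch per S3 folder
        ["git", "add", folder],
        ["git", "commit", "-m", f"Add {folder} from S3"],
    ]
```

Running it is then a double loop: `for folder in top_level_folders(keys): for cmd in branch_commands(folder): subprocess.run(cmd, check=True)`.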

When you accidentally send a screenshot of a chat to the very person in it. A shitload of trouble to get out of.. FYI, I blocked the person. I'll have to deal with it 1.5 months from now, when I actually meet her after the vacation. I need luck :(

-

Infrastructure took away our read access in S3 to data that we own, along with our ability to manually delete/upload to S3 in that prefix (which we own), without waiting for us to confirm that we had alternative means to read and change what is in there. I had no warning about this, so here I am doing a midnight modification of an existing solution of mine, hoping I can finish it before tomorrow morning for some legal reporting deadline.

Things would be so much easier if the infrastructure team let the emergency support role have those permissions for emergencies like this, but they didn't. I guess "least privilege" means "most time spent trying to accomplish the most trivial of things, like changing a file". -

Amazon S3 down: can't pull any Docker images >:/ ....but I wanted to update my GitLab instance. So good that I had already deleted the old container and image. FML

-

I have to transfer about 2 GB of data from one remote server to another. Any suggestions?

My idea was to do multiple curl requests while compressing the data using gzcompress.

Preliminary testing shows that won't work. Now I'm considering putting the data in a file in our S3 bucket for the other server to fetch. -
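Before hand-rolling anything for the 2 GB transfer, note that `rsync` over SSH already compresses in transit (`-z`) and can keep partially transferred files (`--partial`).

If the data has to go over HTTP as planned, the usual shape has three parts:

- Split the data into chunks.
- Compress each chunk. PHP's `gzcompress` produces the same zlib format as Python's `zlib.compress`.
- Send a checksum with each chunk so the receiver can verify and reassemble.

Below is a minimal Python sketch of both ends. The 8 MiB chunk size is arbitrary, the names are hypothetical, and the actual HTTP calls are left out.

```python
import hashlib
import zlib
from typing import BinaryIO, Iterator, List, Tuple

CHUNK_SIZE = 8 * 1024 * 1024  # 8 MiB per request; arbitrary for this sketch

Chunk = Tuple[int, bytes, str]  # (sequence number, compressed payload, sha256 of raw bytes)


def make_chunks(stream: BinaryIO, chunk_size: int = CHUNK_SIZE) -> Iterator[Chunk]:
    """Sender side: read the source incrementally and yield compressed, numbered chunks."""
    index = 0
    while True:
        raw = stream.read(chunk_size)
        if not raw:
            break
        yield index, zlib.compress(raw), hashlib.sha256(raw).hexdigest()
        index += 1


def reassemble(chunks: List[Chunk]) -> bytes:
    """Receiver side: order by sequence number, decompress and verify each chunk."""
    parts = []
    for index, payload, digest in sorted(chunks, key=lambda chunk: chunk[0]):
        raw = zlib.decompress(payload)
        if hashlib.sha256(raw).hexdigest() != digest:
            raise ValueError(f"chunk {index} failed its checksum")
        parts.append(raw)
    return b"".join(parts)
```

A real receiver would append each verified chunk to a file rather than joining 2 GB in memory.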

TFW the wife calls asking about her website being down, and you realize Amazon S3 is having trouble.

-

What programming language did you study in high school? In my country they teach us Pascal for whatever reason; coming from C++, I can't stand it.

-

I've never been a big fan of the "Cloud hype".

Take today for example. What decent persistent storage options do I have for my EKS cluster?

- EBS -- does not support ReadWriteMany, meaning all the pods mounting that volume will have to be physically running on the same server. No HA, no HP. Bummer.

- EFS -- expensive. On top of that, its performance is utter shit. Sure, I could buy more IOPS, but then again.. even more expensive.

- S3 -- half-assed filesystem. It does not support O_APPEND, so basically any file modification will have to be done in a

`createFile(file+"_new", readAll(file) + new_data); removeFile(file); renameFile(file + "_new", file);`

way.
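A toy model of that rewrite-to-append pattern, using a hypothetical in-memory `ObjectStore` in place of a real bucket. Every "append" re-uploads the whole object, so N appends of k bytes upload k·N(N+1)/2 bytes in total. On S3 itself a single PUT replaces the whole object, so the `_new`-then-rename step is not needed, but two writers appending at the same time can still silently lose each other's data.

```python
from typing import Dict


class ObjectStore:
    """Toy in-memory stand-in for an S3-like store: whole-object get/put only, no append."""

    def __init__(self) -> None:
        self._objects: Dict[str, bytes] = {}
        self.bytes_written = 0  # total bytes uploaded, to show the cost of "appending"

    def get(self, key: str) -> bytes:
        return self._objects.get(key, b"")

    def put(self, key: str, body: bytes) -> None:
        self._objects[key] = body
        self.bytes_written += len(body)


def append(store: ObjectStore, key: str, new_data: bytes) -> None:
    # Without O_APPEND the only option is read-modify-write of the entire object.
    store.put(key, store.get(key) + new_data)


if __name__ == "__main__":
    store = ObjectStore()
    for _ in range(3):
        append(store, "app.log", b"x" * 10)
    print(len(store.get("app.log")), store.bytes_written)  # 30 bytes stored, 60 uploaded
```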

ON TOP of that, the S3 CSI driver has even more limitations, limiting my ability to cross-mount volumes across different applications (permission issues).

I'm running out of options. And this does not help my distrust of cloud infra...

-

"feel free to choose which ever size pixel you prefer because you get the same great experience on both we don't set aside better features for the larger device"

-Google

-

After having struggled with trying to set up a server for my static files, I finally gave in and signed up for AWS S3. Why did I wait so long?

-

Watch your shell. Someone did it again.

A sysadmin grilled S3 with a typo in his command, shutting down whole subsystems of Amazon's infrastructure.

-

In my project, to process a CSV file it first needs to be transformed into an XML file. Basically, a 100 MB CSV turns into a 3 GB XML. The XML files could be deleted afterward, but that is not implemented and no one cares. Our storage grows by around 1-2 TB of data each month. Right now we have ~200 TB of XML in our S3.
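The real schema isn't shown, so this is a hypothetical illustration of why CSV-to-XML conversion inflates data so much (3 GB / 100 MB is roughly a 30x blow-up). In a naive element-per-cell mapping, every value gets wrapped in an opening and a closing tag that both repeat the column name. The tag names and the assumption that header names are valid XML element names are mine, not the post's.

```python
import csv
import io
import xml.etree.ElementTree as ET


def csv_to_xml(csv_text: str, root_tag: str = "rows", row_tag: str = "row") -> str:
    """Convert CSV (with a header row) to one XML element per cell.

    Assumes the header names are valid XML element names.
    """
    root = ET.Element(root_tag)
    for record in csv.DictReader(io.StringIO(csv_text)):
        row = ET.SubElement(root, row_tag)
        for column, value in record.items():
            # The column name is written twice per cell (open and close tag): that is the blow-up.
            ET.SubElement(row, column).text = value
    return ET.tostring(root, encoding="unicode")


if __name__ == "__main__":
    sample = "id,name\n1,alice\n2,bob\n"
    xml = csv_to_xml(sample)
    print(xml)
    print(f"{len(sample)} CSV chars -> {len(xml)} XML chars")
```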

I think I can add Big Data to my CV *sarcasm*

-

!rant

Anyone here experienced with Route53?

I have a small issue I'm trying to think through: how to solve it with minimum effort and maintenance, essentially a set-once, walk-away, never-care-about-it-again solution.

Basically what I have is:

sub.domain.com

and I need to get it to redirect over to

otherdomain.com/folderToGetTo/

Using a 301 would be ideal, but how for the life of me do I serve a 301 redirect from a DNS entry? Short answer: I can't, unless I'm missing something!

Both domains are owned by the same company so no issue in hijacking a subdomain... well besides internal politics but that's just another day 😏

My first thought is to set up an S3 bucket with static website hosting, point the DNS at it, and then redirect out of the bucket... seems overkill, but it will work.
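A sketch of that bucket-based idea, written as the website configuration S3 would need. `RedirectAllRequestsTo` only accepts a host name and protocol, so to land on `/folderToGetTo/` this uses a routing rule instead. Treat the rule semantics as an assumption to verify against the S3 website docs, in particular that a rule with no `Condition` matches every request. The bucket also has to be named `sub.domain.com` for a Route 53 alias to its website endpoint, and that endpoint only serves plain HTTP.

```python
import json


def redirect_website_config(target_host: str, target_key: str) -> dict:
    """S3 static-website configuration that 301-redirects every request to one URL.

    target_host / target_key are placeholders, e.g. "otherdomain.com" and "folderToGetTo/".
    """
    return {
        # An index document is required when RedirectAllRequestsTo is not used.
        "IndexDocument": {"Suffix": "index.html"},
        "RoutingRules": [
            {
                # Assumption: a rule with no "Condition" applies to every request.
                "Redirect": {
                    "Protocol": "https",
                    "HostName": target_host,
                    "ReplaceKeyWith": target_key,  # send every path to the same folder
                    "HttpRedirectCode": "301",
                }
            }
        ],
    }


if __name__ == "__main__":
    config = redirect_website_config("otherdomain.com", "folderToGetTo/")
    # Could then be applied with boto3, e.g.:
    # boto3.client("s3").put_bucket_website(Bucket="sub.domain.com", WebsiteConfiguration=config)
    print(json.dumps(config, indent=2))
```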

I'm hoping to find a smaller solution so I don't have to justify an S3 bucket being used for a single file; audits suck, alright🤷♂️

Oh, and setting up a redirect at the originating domain would take longer than it's worth to set up and get approvals for, so it's not worth the effort internally.

Yes I will accept "fuck off @C0D4" as an answer.

-

Story of a first-time hackathon.

So, I took part in the COVID-19 Global Hackathon.

Long story short, I got excited about OCR and just went with the most challenging challenge - digitizing forms with handwritten text and checkboxes, ones which say whether you have been in contact with someone who could have Coronavirus.

And, unsurprisingly, it didn't work within 4 days. I joined up with 2 people, who both left halfway through - one announced, one silently - and another guy joined, said he had something working and then disappeared.

We never settled on a stack - we started with a local docker running Tesseract, then Google Cloud Vision, then we found Amazon Textract. None worked easily.

Timezone differences were annoying too. There was a 15-hour difference across our zones. I spent hours in the Slack channel waiting.

We didn't make the deadline, and the people who set the challenge needed the solution within 10 days, a deadline we also missed. We ended up with a basic-bitch Vue app to take pictures with mock Amazon S3 functionality, empty TDD in Python and also some OCR work.

tbh, that stuff would've worked if we had 4 weeks. I understand why everyone left.

I guess the lesson from this is not to be over-ambitious with hackathons. And not to over-estimate computers' detection abilities.

-

This is a part rant-part question.

So a little backstory first:

I work at a small company (5 people including me) which is mostly into consulting (we have many tech partners whose products we resell, or, if one of our clients has a requirement, we get our partners to develop it and fulfill the client's requirements), so as you can see there are a lot of external dependencies. I act as a one-hat-fits-all tech guy, handling the company websites, social media channels, technical documentation, tech support and quick POCs (so anything to do with anything technical, I handle it). I am a bit fed up now, since the CEO expects me to do some absurd shit (and sometimes micromanages me, like WTF, I am the only one who works there with 100% commitment) and expects me to deliver it by yesterday.

So anyway, long story short, our CEO finally had the brains to understand that we should start having our own product (which I had been subtly suggesting to him for a while now!).

Now he came up with a fairly workable concept that would have good market reach (I at least give him credit for that), and he wanted me to suggest the best way to move forward (from both a business and a technical point of view). The concept is to have an auction-based platform for users to buy everyday products.

I suggested we build a web app as opposed to a mobile one (which is obvious, since I didn't want to develop a separate website and mobile app, and anyway, just because we can doesn't mean we have to make a mobile app for everything), and recommended a Node/React-based JS tech stack to build it.

At first he wanted me to single-handedly build the whole platform within a month. I almost flipped (but me being me) then somehow calmed down, and was finally able to explain to him how complicated it would be to single-handedly build a platform of such complexity (especially given my limited experience; did I mention that this is my first job and I am still in college, yeah!!), and convinced him to get an experienced back-end dev and another dev to help me with it.

Now comes the problem: I was to prepare a scope document outlining all the business and technical requirements of the project along with a tentative cost, which was fairly straightforward. I am currently stuck on deciding the server requirements and the system architecture for the proposed solution (I am thinking of either going with AWS - which looks a bit complicated to set up - or going with DigitalOcean or Heroku):

I have assumed that at peak times we would have around 500-1000 users concurrently

And a daily user base of 1000 users (at least for the first few months of the platform running)

What would be the best way forward guys?

I did some extensive (I mean I read through some Medium blogs! and AWS documentation) research and put together the following specs (if we go with AWS):

One AWS t3.medium EC2 instance for the Node server (two if we want high availability, by coupling them with the AWS load balancer and Elastic Beanstalk)

The db.t3.small postgres database

The S3 storage bucket (100 GB) for hosting the React front end

AWS SNS for email/sms OTP and notification

And AWS CloudWatch for logging and monitoring.
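Before settling on instance sizes, one common back-of-the-envelope check (not something from the post) is to turn "500-1000 concurrent users" into a request rate with the interactive response-time law, a consequence of Little's law. The think time and response time below are pure assumptions; replace them with numbers from a prototype.

```python
def required_throughput(concurrent_users: int, think_time_s: float, response_time_s: float) -> float:
    """Interactive response-time law (from Little's law): X = N / (R + Z), in requests per second.

    N = users active at once, R = server response time, Z = user "think time" between requests.
    """
    return concurrent_users / (response_time_s + think_time_s)


if __name__ == "__main__":
    # Assumptions, not figures from the post: one request every 10 s per user, 200 ms responses.
    for users in (500, 1000):
        rps = required_throughput(users, think_time_s=10.0, response_time_s=0.2)
        print(f"{users} concurrent users -> ~{rps:.0f} requests/s")
```

With those assumed numbers, 500 and 1000 users work out to roughly 49 and 98 requests per second. That figure is something you can load-test a single t3.medium against before deciding whether a second instance behind the load balancer is needed.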

Am I estimating the requirements properly? What have I missed??

Can you guys suggest the best specification for such a requirement (how do you guys decide which plan to go with)?

Any suggestions, corrections and advice are welcome.

-

One CDN goes down and the whole world thinks it’s the end of the world!

It’s an opportunity to improvise and come up with something better.

-

Every time I check my old code I start insulting myself..... How the fuck was I that stupid..... Still stupid tho, but I'm progressing :D

I'm learning to code by myself without any instructor :').... I wanna use Unreal Engine, but I forgot how to code in C++ since I'm only using C# now.. I've made WinForms apps and I'm installing Xamarin to learn about cross-platform development :)

-

So I just acquired a Samsung Galaxy S3 running Android 4.3 with ClockworkMod recovery off a friend, and last night it ran out of battery. Then I tried charging it. It's not charging or turning on. Any ideas what's going on?

-

So I have purchased the domain studioentropy.com. It's taken me all afternoon, but I've set up and configured AWS S3 buckets and the Route 53 zone and entries, and my shite-ass site is now online, hooray... My question is with regard to HTTPS. Given that my site is really only going to be a single-page site with relatively static content, no e-commerce of any kind, and no passing of sensitive information, is it really worth going through the pain of configuring CloudFront so the site uses HTTPS instead of HTTP?

-

Been working on a COVID app during this quarantine. Right now it’s only on Android, but you can still get a view of it from the page below. Do give it a go..

https://neo7337.github.io/cvkavach_...

Open to suggestions and bug reports!

-

Wanted to do a poll for my team: which should we use for Spring Boot development?

I personally recommend VSCode, what about you guys?

-

I found a good method of testing everything before I add it to the project so rn I’m calm

On the other side of things, Stranger Things S3 is killing me

-

"We're excited to announce that we've disabled the deprecated Storefront Toolkit by default for new....."

I am also so freaking "excited" that you have also disabled the close button on the popup when you log in to Salesforce BM, genius.

How the hell am I supposed to use SFBM now?!! Use the developer tools to remove the popup markup?!

Learn more?? I don't need an FAQ doc, I need the popup closed!!!

-

So all my code is Lambda serverless funcs, hurray!

But I still need a NAT gateway / VPC endpoints that cost $50 per month to reach S3 from a private VPC, so what's the fucking point?!

-

The pain of creating a data pipeline in AWS to dump all your DynamoDB tables into S3 is something I don't want anyone else to go through.. Why don't you have a copy button.. 😭😭

-

Complaints about how FE rendering is so slow when the BE APIs take forever to return. Working on performance projects and feeling like you've done nothing at all at the end of each day.

-

Fuck Oracle, fuck you, Oracle! The stupidest, shittiest, worst-nightmare company with the most user-unfriendly, productivity-killing, illogical, stupid pile of software garbage products ever! And unfortunately I want to extend my worm-fucks to all Oracle employees and maintainers, to the whole fucking community of shit that makes up the Oracle community, and to every conscious being who has ever liked, enjoyed or found the slightest genuine interest in any product tagged "oracle".

I installed the pile of shit a.k.a. Oracle 18c and imported a dump file locally; everything was working, in the slightest sense of the word (fine), before it turned into a nightmare. I created a C# client to call a stored procedure in that shit of a database engine. I kept getting errors related to the parameter types, specifically one which is a custom type: a table of numbers. It turns out that the only way of doing this is through that shit they call the (unmanaged driver); the "managed" one doesn't support custom types. So I had to install another package of shit they call (ODBC universal install), "universal my a$$" by the way, and at that moment everything just crashed and stopped working. I spent 3 hours trying to connect to the fucking database to no avail. I shockingly found a folder in my desktop folder called (OracleInstallation), and all Windows services related to the Oracle installation had "suddenly" somehow been (re-routed) to that folder.

In conclusion, fuck Oracle.

-

Looking for a nice side project very badly.. 😖😖 It sucks when you have nothing interesting to work on.

-

* Create S3 Bucket

* Enable versioning

* Set up a lifecycle rule to delete small temporary objects after 7 days

* Wait 7 years

* Say "Wow, I was fucking stupid, and I've learned a lot since then."

* Write devRant post

* Profit with a lower monthly AWS bill

-
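For the lifecycle list above: the post doesn't say what the seven-year mistake was. One common trap with exactly this versioning-plus-expiration setup, offered as a guess rather than the author's story, is that on a versioned bucket an `Expiration` action only adds a delete marker. The old version stays (and keeps being billed) unless a `NoncurrentVersionExpiration` action also removes it. A sketch of a lifecycle configuration covering both; the size threshold and rule ID are hypothetical.

```python
import json


def small_temp_objects_lifecycle(max_size_bytes: int, days: int = 7) -> dict:
    """Lifecycle configuration expiring small objects, written for a *versioned* bucket."""
    return {
        "Rules": [
            {
                "ID": "expire-small-temporary-objects",  # hypothetical rule name
                "Status": "Enabled",
                # Only objects strictly smaller than max_size_bytes match.
                "Filter": {"ObjectSizeLessThan": max_size_bytes},
                # With versioning on, this only places a delete marker on the current version...
                "Expiration": {"Days": days},
                # ...so the version that becomes noncurrent must be expired too, or it is kept and billed.
                "NoncurrentVersionExpiration": {"NoncurrentDays": 1},
            }
        ]
    }


if __name__ == "__main__":
    config = small_temp_objects_lifecycle(max_size_bytes=128 * 1024)
    # Could be applied with boto3, e.g.:
    # boto3.client("s3").put_bucket_lifecycle_configuration(Bucket="my-bucket", LifecycleConfiguration=config)
    print(json.dumps(config, indent=2))
```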