Search - "format"

-

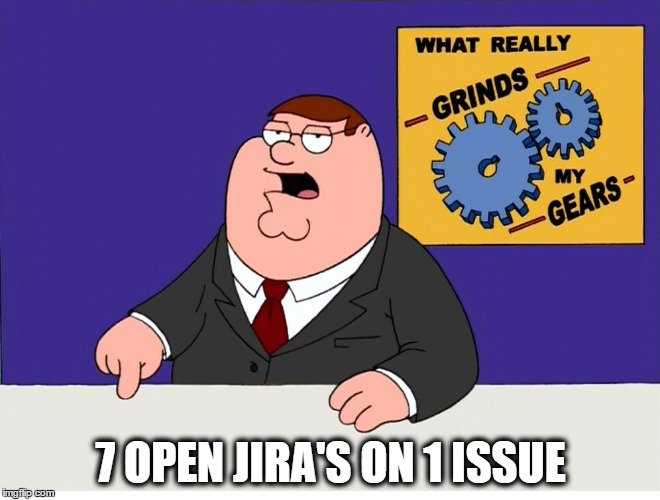

Seriously, why? WHHHYYYY?

US-date-format sucks, every FUCKING TIME!

The only time I really notice is when the "month" is larger than 12:

05/13/2017

"5th of Dec... oh. Fuck. Not this shit again..."

(It makes no sense. Absolutely none.)

-

7/4/2018

I can never read this date properly

Is this 7th April

Or is this 4th July????

Fuck your American date format

-

About to install a new linux distro.

Re-checking for the 25th+ time that I am about to format the right partition.

I really hope I won't fuck this up.

-

Someone: how do I convert cute girls to .gf format ?

Me : Sorry, this feature is not available in the beta version

-

Found this gem on GitHub:

// At this point, I'd like to take a moment to speak to you about the Adobe PSD format.

// PSD is not a good format. PSD is not even a bad format. Calling it such would be an

// insult to other bad formats, such as PCX or JPEG. No, PSD is an abysmal format. Having

// worked on this code for several weeks now, my hate for PSD has grown to a raging fire

// that burns with the fierce passion of a million suns.

// If there are two different ways of doing something, PSD will do both, in different

// places. It will then make up three more ways no sane human would think of, and do those

// too. PSD makes inconsistency an art form. Why, for instance, did it suddenly decide

// that *these* particular chunks should be aligned to four bytes, and that this alignement

// should *not* be included in the size? Other chunks in other places are either unaligned,

// or aligned with the alignment included in the size. Here, though, it is not included.

// Either one of these three behaviours would be fine. A sane format would pick one. PSD,

// of course, uses all three, and more.

// Trying to get data out of a PSD file is like trying to find something in the attic of

// your eccentric old uncle who died in a freak freshwater shark attack on his 58th

// birthday. That last detail may not be important for the purposes of the simile, but

// at this point I am spending a lot of time imagining amusing fates for the people

// responsible for this Rube Goldberg of a file format.

// Earlier, I tried to get a hold of the latest specs for the PSD file format. To do this,

// I had to apply to them for permission to apply to them to have them consider sending

// me this sacred tome. This would have involved faxing them a copy of some document or

// other, probably signed in blood. I can only imagine that they make this process so

// difficult because they are intensely ashamed of having created this abomination. I

// was naturally not gullible enough to go through with this procedure, but if I had done

// so, I would have printed out every single page of the spec, and set them all on fire.

// Were it within my power, I would gather every single copy of those specs, and launch

// them on a spaceship directly into the sun.

//

// PSD is not my favourite file format.

Ref : https://github.com/zepouet/...

-

Just noticed the date format mm/dd/yy. Can we switch (or get an option to switch) to a superior format like dd.mm.yy or ISO 8601?

@dfox

-

The ignorance of 12-year-old me destroyed 2 years of family memories.

...I wonder what this format option does...

-

"Yeah, we didn't send out a notice about changing the format. We figured you'd notice when your code stopped working."1

-

People sending text documents in .doc format... what is fucking wrong with you?! Word has even had a built-in .pdf converter for decades!?

-

So my local job center had me convert my LaTeX CV into Word format, as apparently PDF is too techy for them.

-

Telling someone you don't like the way they format their code is like... Telling them their girlfriend is ugly.

-

Finally put tiger inside grub!

Had to do a lot of circus!

Had grub without any background twice!

What picture formats does grub support?

-

I love the JSON format. It is so simple, powerful, easy to read and all that good stuff. There is only one thing I wish JSON could support: comments.

-

Elixir:

solution = problem |> process |> process_more |> finalize |> format

Java:

New class() implements quantum mechanics; get(); set(); get(); set(); super.fuckshitup(); throw new nonsense 300 line error and crash

-

Sure, WhatsApp. It's not like humans have been using the 12-hour format for literal millennia. Change it to this weird English format with no apparent way to change it back. Thanks.

-

Today I'm trying to study how to encode data in idx-ubyte format for my machine learning project.

Professors I'm going to astonish you!

Good day and good coding to all of you! :)

-

When you are a Dev at UBER & cannot figure out the date/year format..

You are the right person for the right company !!

-

For a social media platform for ranting developers, it sure seems strange that we can't format code snippets... 🤔

At least something like `foo(bar){}` would be awesome!

-

- Can you format my computer ?

- I am a developer, bro, this is not my kind of business

- I know you studied IT, so when will you format my computer ?

-...

-

Fun fact: a 4TB HDD replaces about 2,744,000 1.44MB floppy disks. (Floppy size based on free space left on floppy after format in DOS 6.22 as FAT12.)
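The figure can be checked with a quick calculation. This is only a sketch: it assumes a decimal 4 TB (4 × 10^12 bytes) and 1,457,664 bytes free on a freshly formatted 1.44MB floppy. Both constants are assumptions, not values stated in the rant, and the function name is made up for illustration.

```python
# Assumed constants (not given in the rant itself).
HDD_BYTES = 4 * 10**12          # "4TB" as drive makers count it (decimal)
FLOPPY_FREE_BYTES = 1_457_664   # free space on a freshly formatted 1.44MB floppy


def floppies_needed(hdd_bytes: int, floppy_bytes: int) -> int:
    """Number of whole floppies whose free space fits into the drive."""
    return hdd_bytes // floppy_bytes


count = floppies_needed(HDD_BYTES, FLOPPY_FREE_BYTES)
print(f"{count:,} floppies")
```

Rounded to the nearest thousand, the result agrees with the number quoted in the rant.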

-

It took me around 10 hours, but I finally got a new feature into my discord-bot. The best thing about it is that I can basically transfer it to any meme format.

-

We work remotely; the only way I can collaborate with other devs, the team lead and the product owner is Slack. Respond to my f***ing messages, people!

-

Connect a pen drive, format it successfully. Connect to a new machine to copy data and see the data exists.

Crap! Which drive did I format? :(

-

New format!!!

Junior: We have a problem

Senior: Well what is it you're working on? Maybe I can-

Junior: Nevermind, got it!

Senior: ...

Junior: ...

-

Thanks so much for the clarification Pikepass....such a big help. Now I know exactly what format my account number should look like and where to find it!

-

I just got asked to print out last week's production data in the "pristine squiggly brackets format".

😐

WHAT DO YOU MEEEEAAAANNN!

-

A long time ago, back in the days of Microsoft Office 95 and 97, I was contracted to integrate a simple API for a payment service provider.

They sent me the spec and I read it. It was simple enough: 1. payment OK, 2. payment FAILED. A few hours later the test environment was up and happily crediting and debiting fake accounts. Then came the push to prod.

I worked with two other guys. We shut down the servers, made a backup and connected the new provider. All looked perfectly fine. The first customers were paying, the first shops were sending their products... until two days later it turned out the money wasn't coming through, even though all we were getting from the API was "1" after "1"! I shut it off. We had 7 conference calls, 2 meetings, 3 days of trying and failing. Finally, by sheer luck, I found out what was what.

You see, Microsoft, when you invent your own file format, it's really nice to make it consistent between versions... so that list numbering made in Microsoft Word 97 that was supposed to start from "0" doesn't start from "1" when you open the file in Microsoft Word 95.

Also, if you're a moron who edits documentation in Microsoft Word, at least export it to a fucking PDF before sending it out. Please.

-

Me: I fucking hate people using proprietary data formats when there is something more than capable already...

Also me: *Spends an hour designing file structure for a proprietary image format* Hmmm... How can we trim even more bytes off this...

(Designing the format to be smaller than a typical PNG and to make it easier to load data programmatically)

-

Always triple check which drive you are about to format. It may sound dumb, but there are some people (like me) who had to reinstall the whole OS because of that.

-

I want to stab the person that decided to make dates in JavaScript so fucking complicated to format.

-

Customer: can you fix my flashdrive? I think it's corrupted or something.

Me: sure no problem

*plugs in flashdrive in pc*

*tries to format*

*Disk is write protected*

Me: ... not you again.

-

Ages ago, back in the last millennium (which at that point was not going to end any time soon), I had a 10MB HDD in my first computer. It was a second-hand gift with DOS 3.2 installed, and my younger self, unable to speak or write English, had that cool game on it (Pitfall, if I remember correctly). But that game was not enough, so I tried typing in all the filenames in all the folders to find other games on that machine. There were some commands I did not understand properly, and one of them was 'format'. I typed in 'format' and pressed enter, and an error message told me that I had to enter a drive letter as an argument. Because I only knew A: for the floppy drive and C: for the HDD, I tried the floppy first. Nothing happened, because there was no disk in the drive. Then I entered C: ...

Poof, everything deleted...

I was unable to set up that PC again and my beloved game was gone too... I'm still sad about it, because that machine would be a real treasure today, but it has been gone for a long time.

-

Can someone please build an SVG vector file format equivalent that uses JSON instead of XML? k thnx! 😆

-

Me: why are we paying for OCR when the API offers both JSON and PDF format for the data?

Manager: because we need to have the data in a PDF format for reporting to this 3rd party

Me: sure, but can we not just request both JSON and PDF from the vendor (it’s the same data), send the JSON to the automated workflow (save time, money and get better accuracy) and send the PDF to the 3rd party?

Manager: we made a commercial decision to use PDF, so we will use PDF as the format.

Me: but ...

-

Company logos in jpg format... Compressed maybe 100000 times because it looks blurry as fuck...

Sure, dear blind incompetent moron, it will look great on your website.

-

day 1: try not to use an ad blocker, and install a few programs from several websites.

day 2: format my pc

-

Clients keep asking if our software will support XYZ format.

XYZ format is a proprietary format that we are not the proprietors of. Unfortunately, it has become something of a de facto standard in our industry.

It is not practical to support the format because being able to figure it out is difficult, time consuming and not even a certainty. In fact while we have historically done so for previous versions, it has been upgraded several times so this becomes something of an arms race for us (whether intentional or not).

Responses from clients when we try to explain this vary, but a not insignificant number of them intimate that this is a failing or fault on our part.

It is pretty annoying, and considering the damage in perception it can do, is a pretty interesting and subtle form of economic moat I had not previously considered.

-

Received screen designs for a new website in Adobe Illustrator format with all the measurements in centimetres.

-

I'm addicted to the Visual Studio shortcut Ctrl+K, Ctrl+D to automatically format my code and hate it when I pull down the latest version and it's not formatted properly. 😲

-

I'm currently removing hard-coded DB creds from our modules, which are in production. I thought this format was the worst:

db_dsn = 'db_dsn_conn_1'

conn = pyodbc.connect('DSN=%s'%(db_dsn))

Behold!!

conn = pyodbc.connect('DSN=db_dsn_conn_1')

-

JSON: "Ok fine you can use our syntax and everything else but make sure you change the name of the format so people know there is a difference"

MongoDB : "K"

-

Forget all the arguments about tabs and spaces: I just write my code in a non-formatted clusterfuck and then let the IDE format it.

-

FUCK! The fucking previous dev on this project who set up the fucking web service that he knew would be shared among multiple platforms set it up to use an audio format that's only supported on one platform. Now I'm faced with either doing some fucking JS black magic to decode the fucking base64 audio, convert it to another audio format, and then possibly re-encode it, or attempting to re-write the fucking web service and an app that is already in production! Fucking hell!

-

External Dev. Job application website - please attach resume. CLICK. Unknown document format: (your format .pdf, acceptable format .PDF). 🤔

-

After doing a regular CV update, I realised I started coding more than a quarter of a century ago... I then remembered the first command that succeeded on my first PC.

format C:

There was a book that explained how to format a floppy disk (format A:) but it didn't work. At that time I had no idea what a floppy was, but I knew that C: worked, so I thought I'd give it a try...

Oh, was there laughter in the repair shop :)

-

Me: *spends three days trying to format a string to replace two backslashes with one*

Python: Here you go!

str = bytes(str, "utf-8").decode("unicode_escape")

-

Roommate's laptop.

Only 1 Chrome tab open, so why so many processes in Task Manager? Should I tell him to format it?

-

!rant

<curious>

How do you guys format data like this? Using a JS framework? Or pure HTML tags?

HTML labels? Invisible table?

</curious>

-

I've been working as a programmer for 16 years now, and would say I'm not inexperienced, so it's frustrating to feel like a noob after months at a new company when they have poor internal documentation, hundreds of repos with default READMEs, pretty much no use of Docker, substandard equipment and their own weird software for deployment. It's hard to meet expectations under these conditions.

-

Petition for devRant to start using ISO dates. Or at least pick up the format from the device's locale. But preferably ISO. Seeing m/d/y does my head in.

-

Managers should not communicate with a customer then sit on it for a week or two before passing it to a Dev as high priority/fix it now. They also should not say our 'team' is working on it when that team is one person who is also busy with other tasks.

-

This is Slack, bro. No need to formally address me every message, and definitely no need to format your messages like an email. Just say what you need to say.

-

For the love of Jeebus and all his holiness!!

These fuckers that I've been studying with for the last semester need to get their shit together!

It's one thing that they want to discuss every single thing and NOT come to a different conclusion after a couple of hours....

BUT I fucking draw the fucking line in the dirt, when you shit eating wimps "forget" to format your code and do the worst half-assed job I have ever seen!

Why the fuck would you only indent half of the lines, without any sort of system?!?

And what is this? A huge fucking bunch of random spaces and tabs at the end of a line? Jeebus, save me!

-

Me to customer: I need that image in either svg or png format.

Customer sends a zipped .docx file containing nothing but an image.

-

Just started my exams training! (Doing a study called Application Development).

The application doesn't sound that complicated but I have to implement a data exporting feature. Sounds alright, doesn't it?

THE 'CLIENT' DOES NOT KNOW WHAT DATA FORMAT THE FICTITIOUS CUSTOMERS CAN PARSE/HANDLE BUT I HAVE TO MAKE IT GENERIC SO THAT THEY CAN USE IT ANYWAY. HOW THE FUCKING FUCK AM I SUPPOSED TO KNOW WHAT FUCKING FORMAT I SHOULD CHOOSE?!? SHOULD I TRY TO SMELL IT OR SOMETHING?

FML.

-

LINUX TO THE RESCUE!

I was helping my ComTech teacher with stuff. For some reason his Mac refused to format an SSD.

Don't worry though, mkfs.hfsplus had no problems :D

-

If 3D design software could agree on the same fucking format being used the same fucking way, that'd be greaaaaaaaattttt!

-

The process of making my paging MIDI player has ground to a halt IMMEDIATELY:

Format 1 MIDIs.

There are 3 MIDI types: Format 0, 1, and 2.

Format 0 is two chunks long. One track chunk and the header chunk. Can be played with literally one chunk_load() call in my player.

Format 2 is (n+1) chunks long, with n being defined in the header chunk (which makes up the +1.) Can be played with one chunk_load() call per chunk in my player.

Format 1... is (n+1) chunks long, same as Format 2, but instead of being played one chunk at a time in sequence, it requires you play all chunks

AT THE SAME FUCKING TIME.

65534 maximum chunks (first track chunk is global tempo events and has no notes), maximum notes per chunk of ((FFFFFFFFh byte max chunk data area length)/3 = 1,431,655,765d)/2 (as Note On and Note Off have to be done for every note for it to be a valid note, and each eats 3 bytes) = 715,827,882 notes (truncated from 715,827,882.5), 715,827,882 * 65534 (max number of tracks with notes) = a grand total of 46,911,064,418,988 absolute maximum notes. At 6 bytes per (valid) note, disregarding track headers and footers, that's 281,466,386,513,928 bytes of memory at absolute minimum, or 255.992 TERABYTES of note data alone.

All potentially having to be played

ALL

AT

ONCE.
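The worst case can be reproduced with a few lines of integer arithmetic. This is only a sketch of the calculation described above: the constant names are made up for illustration, and it keeps the rant's simplifying assumptions (3 bytes per event, one Note On plus one Note Off per note, 65534 note-carrying tracks).

```python
MAX_CHUNK_DATA_BYTES = 0xFFFFFFFF  # 32-bit chunk length field
BYTES_PER_EVENT = 3                # simplification used in the rant
EVENTS_PER_NOTE = 2                # Note On + Note Off
NOTE_TRACKS = 65534                # tracks that may contain notes

# Integer division truncates, so a trailing unpaired event is dropped.
events_per_chunk = MAX_CHUNK_DATA_BYTES // BYTES_PER_EVENT
notes_per_chunk = events_per_chunk // EVENTS_PER_NOTE
total_notes = notes_per_chunk * NOTE_TRACKS
total_bytes = total_notes * BYTES_PER_EVENT * EVENTS_PER_NOTE
total_tib = total_bytes / 2**40

print(f"{total_notes:,} notes, {total_bytes:,} bytes, {total_tib:.3f} TiB")
```

The total lands just under 256 TiB (binary terabytes) of note data.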

This wouldn't be so bad I thought at the start... I wasn't planning on supporting them.

Except...

>= 90% of MIDIs are Format 1.

Yup. The one format seemingly deliberately built not to be paged of the three is BY FAR the most common, even in cases where Format 0 would be a better fit.

Guess this is why no other player pages out MIDIs: the files are most commonly built specifically to disallow it.

Format 1 and 2 differ in the following way: Format 1's chunks all have to hit the piano keys, so to speak, all at once. Format 2's chunks hit one-by-one, even though it can have the same staggering number of notes as Format 1. One is built for short, detailed MIDIs, one for long, sparse ones.

No one seems to be making long ones.

-

QMS admin: you only finished the code review, you didn't complete it!

Me: opens review clicks complete

QMS: you didn't export the code review comments!

Me: opens code review again. Clicks Export. Attaches *.txt

QMS: you exported the comments in the wrong format, I can't read them

Me: what is the right format?

QMS: SOP document <random alphanumeric> clearly states the format

Me: spends 20 minutes navigating the piece of crap QMS software with no search function folder by folder.

Finds document.

It's 120 pages and 4 years old.

On page 68, I find "template to be implemented"

Reply to QMS, that document doesn't actually give a reference to a template

QMS: Email my line manager "Please teach your staff how to do a code review"

-

!rant

Been here since 2/2017 and didn't make myself an avatar. I feel ashamed.

Edit: I hate the MM/DD/YYYY format. More shame on me that I did not notice it was in retard format.

-

sr: XML is a difficult format, parse PDF instead.

me: (-_-)ゞ゛

checks code...

foo.fooBar = element

.match("<XMLELement>(.*)</XMLElement>")[1]

?.trim();

me: (☉_☉)

-

Manager:

Hey this client sent us a list with all of their employees in this format... we would tell them to input it themselves but they're a pretty big client, so could you do it?

Me: Sure

*3 hours later*

... why am I taking so long...?

I look back at my code, and see that I've done a whole framework to input data into our system, which accepts not only the client's format but it's actually pretty abstract and extensible for any format you'd like, all with a thorough documentation.

*FACEPALM*

Why can I do this with menial jobs and not for our main code?

-

Just sent an id_rsa private key file to our corporate Linux administrator and he asked me to send him the private key in .ppk format. ==)))

-

Recruiter: «I have an opportunity [...] J2EE development [...] please send CV in .doc format»

Where is my flamethrower?

-

Microsoft Excel, what a beautiful beautiful tool. Absolutely wonderful and insanely powerful.

But it annoys the fuck out of me whenever I see a poorly formatted sheet.

How fucking difficult is it to format a few cells and be consistent with it?!!

Presentation does matter, and if you cannot format a single spreadsheet, I am judging you for sure.

On the other hand, a well formatted email/excel or anything is a huge turn on.

Aesthetically pleasing things are good (which don't serve any underlying functional purpose).

-

When I asked the client if they have an existing API with XML or JSON, they said that they will send me Excel xlsx files by email.

-

I sometimes write song lyrics as comments in my code while I listen to music, so my boss will think that I'm actually writing some badass lines of code.

-

can't we already get to a point where we have one single fat datetime format in CS...

ISO this, RFC that, UNIX those

-

Wrote some docs in a simple format. Really KISS here with the docs and the tech they describe. How's the readability?

https://algorythm-dylan.github.io/q...

-

Hey guys, this is my first rant. I like this friendly community very much so far and hope it stays that way. So here it goes...

I have this Trello app on my Android phone. It has this nice feature - calendar... But week starts on Sunday. So I started investigating, how I could change it to Monday. Googled and found that you have to change the language, which I did. Now I wish I had this nice ISO date format yyyy-MM-dd, but this motherfrakker doesn't allow me to!

How much I hate this little piece of shit! What does he want from me? Download the sources, add the functionality, compile for a week and flash it into my Xiaomi?!

-

Me: Gets my friend's code and opens it in Eclipse to study it

Me: Sees code and tries to study it and actually understands it

Inner me: (OCD kicks in and realizes code is not formatted well)

Me: alt + shift + f

Code format changes

Me: how the fuck does this work now

-

*using random JS for website*

*finds stuff not working*

*opens JS file*

(mesh of code)

*select all*

*Format Selection*

I love you, Visual Studio :')

-

Hey support, if you just copy and paste entire paragraphs from me of informal language which I've written with you as the intended recipient to help you understand what's happening, and send that to the customer, tell the customer you're waiting on me, tell my boss you're waiting on me, when the messaging clearly demonstrates that I'm waiting for you to liaise with the customer and get specific information, then really you're just making our company look incompetent and me look lazy, when those things apply to you.

-

OpenCV is like a USB port: You never have the right input format and it only works after converting the damn thing multiple times!

-

Whenever I clone a GitHub repo, the first thing I do is reformat the code from tabs to spaces.

Tried not to do it, but the itch is unbearable.

-

Mozilla and Wikimedia thankfully encourage Internet users into choosing the open and patentless VP9 format by denying H.265 support until H.265 is patent-free.

Well done, Mozilla and WMF! I don't think H.265 is a bad format, but so long as it is encumbered by patents, it is in the public interest that it does not become the dominant format.

https://developer.mozilla.org/en-US...

> "Mozilla will not support HEVC while it is encumbered by patents."1 -

Solving a competitive coding problem.

Expected date format dd.mm.yyyy

My format dd:mm:yyyy

After spending some time on it, the self-cursing begins

-

Okay. I don't quite get why the Project Manager doesn't want to enhance or revise the current format into a proper clinical prescription format. I deeply understand that we have lots of bug fixes and enhancements to do. But, you know, one suggestion from a client can literally make a difference compared to the previous one, and can benefit everyone.

-

I honestly treat JavaScript as a binary executable format nowadays. It's an output format for me.

I choose you, TypeScript.

-

Who tf are these people who write files called things like handlers.js which are over 1000 lines long?

-

my scrum master keeps changing the format of retro and it works worse every time. We're at the 2.5 hour mark right now. It is aggressively hot and humid in the office. Everyone is asleep.

I don't think there's a way to explain to him that the purpose of a retro is not to magically fix things within the format but to financially commit to the preference for conversation, from which the hidden work of resolving conflicts can be billed.

-

Wondering whether there will ever be a day where I will not have to google for "how to auto format code in [some IDE]" 😌

-

Classmate from final year of computer engineering class: my computer is acting strange, I think I'm gonna have to give it a formation.

😧

-

This should put an end to those code formatting rants. Now everyone can be on the same page.

https://prettier.io/

-

What does projektaquarius do when he doesn't have a working IDE? Reformat code (that I am already refactoring) to an industry standard format and prepare for the arguments that are going to come from the other group, which has its own coding standard that isn't industry standard.

Already preparing for the Pascal case versus Camel case argument. Emotionally, that is. Mentally, the argument basically just amounts to "your group didn't want to refactor the code so we did it. Live with it or you do it."

-

App: You have only 15GB left, would you like to download your video (2GB) in our compressed format?

Me: Ok let's give it a try....

WOW... now only needs 400MB!

**download finishes and I look for the video... It's in its own folder... ok**

Opens the folder... and finds 800 video files in some unheard-of format... each 10s long

WTF?!?!?!?!?!

-

Intel, wtf kind of drugs is your stupid site on?

Trying to make an account, the password requirement says "at least one special character".

Ok, no problem.

"Password format is invalid"

Wut? Hmm, maybe it doesn't like that one. Let's try one from their suggested ones.

"Password format is invalid"

WTF? The fuck is your problem?!

*reloads the page, tries again*

"Password format is invalid"

ARE YOU FUCKING RETARDED?

*adds the special at the end of the password instead of the beginning*

It works.

https://youtube.com/watch/...

And then we wonder why bugs like Meltdown and Spectre come up. These guys can't even do fucking password validation properly.

And I've just lost 30 minutes because of this shit.

FUCK!

-

Is there some way to format code on devRant or, say, surround a section of text with (at minimum) italics?

I mean we *are* on a site dedicated to developers.

-

That moment when all of your code has reached maximum distortion in its formatting and you forget the shortcut to format the code again.

-

As a moron, I write all tasks in this format even though it makes no sense to do so, so I won't have to put up with business colleagues moaning.

-

Ok, guys, which configuration format is your favorite? (something like toml, ini, json, yaml, etc)

-

I'm the guy who downvotes and flags your questions on Stack Overflow for poor formatting and incomplete background.

P.S. I'm not a hothead.

-

When the date format is hard-coded and the application goes international: the application works OK until the day turns 13. The error is seen a couple of days later, then it's the 1st again and everything is OK. Just to mention one of many strange errors. And just to make it harder, the app works well when running in other countries that use the same format. Daahh
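A minimal sketch of how this kind of bug hides, using Python's `datetime.strptime` as a stand-in. The rant does not say which language or library was involved, and `HARD_CODED_FORMAT` and `parse_date` are hypothetical names. Input written day-first is fed to a parser hard-coded to month-first:

```python
from datetime import datetime

HARD_CODED_FORMAT = "%m/%d/%Y"  # month first, baked into the application


def parse_date(text: str) -> datetime:
    """Parse a date string with the hard-coded month-first format."""
    return datetime.strptime(text, HARD_CODED_FORMAT)


# A user in a day-first locale enters 12 January 2018 as "12/01/2018".
# No error is raised, but the result is silently wrong (1 December).
wrong = parse_date("12/01/2018")
print(wrong.date())

# One day later the same user enters "13/01/2018"; there is no month 13,
# so only now does the problem become visible.
try:
    parse_date("13/01/2018")
    failed = False
except ValueError as exc:
    failed = True
    print("parse failed:", exc)
```

Days 1 to 12 are the dangerous ones, since they parse without complaint and store the wrong date.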

-

I was using SimpleDateFormat and everything was great but then next thing I find out, the time offset for GMT is stored as Z and not +00:00 which is the format I needed for API calls.

Every other time zone follows a similar format, yet somebody thought it was a good idea to switch it up for GMT.

-

I can't imagine any worse decision than the one to make document.cookie accessed as the string format it's actually in.

-

FUCKING PIECE OF SHIT USB STICK. What the actual fuck how hard can it be to format a usb-stick? Excuse me?

Basically, I flashed an Arch .iso onto my USB stick. After that was done I wanted to format the USB stick again so I could put files on it. Normally that's a super easy process. I tried a shitload of things.

1) On windows: Quick format -> Windows was unable to format.

2) Went to Linux. Opened GParted. Gparted didn't detect the usb drive? Wtf. Rebooted then it showed up. Tried to delete all partitions, tried to clear the entire drive. Gparted just freezes. Ok... wtf is going on?

3) Tried to go the bruteforce way and zero out the entire drive with dd. After a few seconds dd freezes and is not doing anything anymore.

Wth is going on lol? Why can I not wipe my usb drive? Any ideas?

-

No matter how many years of experience you have, a part of your job will always be copying and pasting malformed client data into a usable format.

-

I spent the week working on an adapter to a specific format, and the client came this morning to tell us JSON would also have worked. Then why didn't you tell me earlier?!?

-

Still waiting for the Windows 10 October 2018 Update to be re-released or declared stable to go ahead, format and start over on my laptop.

Also still having trouble when using ZIP files 😑

-

Learning Dart for the first time is driving me insane!!! I'm so not used to its format.

PS: Send help.

-

can't fcking post a question on Stack Overflow!! it says i need to format my code but i dont have any fckin code in my post!

-

Format must be png so we have a transparent background and image must be animated.

Me: Do you need to pause on mouseOver?

Dumb fuck.

-

I need to link two APIs such that data sent on one API gets processed and it hits a second API with the processed data.

The first API needs to format the data and decide, according to the data format, which second API needs to be used. I checked Zapier and it's working, but it is not exactly what I need to do. So are there any options other than setting up my own server?

-

Let's pick a datepicker for the project. Me: jQuery UI? Supervisor: No, something better.

jQuery UI: any CSS selector, standard date format masks (dd/mm/yyyy or mm-dd-yyyy....), opens if the field is accessed using the Tab key

Something better: only ID selector, custom date format mask ( %d/%m/%Y ...), Tab does not work

-

Fucking excel...

I opened up my CSV and changed values in one column... You fucking didn't need to take it upon yourself to change all of my dates in another column to a format you prefer, they were fucking fine!

-

What is this format? Spaces are replaced by + signs, columns are separated by & signs, and each column name is followed by an = sign and its value. Can I directly convert it to a Spark dataframe?
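The description matches URL-encoded form data (`application/x-www-form-urlencoded`), though that is an inference from the wording, since no sample is shown. Assuming so, Python's standard `urllib.parse` can turn such a string into field/value pairs. The sample string below is invented for illustration, and loading the resulting records into Spark is left out of this sketch.

```python
from urllib.parse import parse_qsl

# Invented sample: '+' stands for a space, '&' separates fields,
# '=' separates a field name from its value, '%26' is an escaped '&'.
raw = "name=John+Smith&city=New+York&note=a%26b"

# parse_qsl splits on '&' and '=', then decodes '+' and percent-escapes.
pairs = parse_qsl(raw, keep_blank_values=True)
record = dict(pairs)
print(record)
```

Each decoded record is an ordinary dictionary, so a list of them can be handed to whatever tabular tool is in use.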

-

Why is it, I've handled data in JSON format quite a few times, but every time I try to use it again, I completely forget how JSON works

-

When the new guy changes the format of the code and fucks it up and you have to go back and fix it... slowly raises gun to head

-

Grrrr

I love JS, but I hate browsers.

Universal ES5 way to initialize a date from an input value in "dd.mm.YYYY" format:

var split = input.value.split('.');

var from = {};

// always pass radix 10 so values like "08" are never read as octal

from.day = parseInt(split[0], 10);

from.month = parseInt(split[1], 10) - 1; // Date months are zero-based

from.year = parseInt(split[2], 10);

var myDate = new Date(from.year, from.month, from.day);

// if a timestamp format is needed:

var myDateTimestamp = +new Date(from.year, from.month, from.day);

No, I won't use moment.js or other bloat-braries just for fucking dates.

-

I forgot to store times in the MySQL database in UTC, so now I have to rebuild lots of application code. Damn...

-

Why is it that when people are anal about linting they don't like the default/mainstream conventions?

-

view-source:https://www.google.com/?gws_rd=ssl

“Oh my GOD! I've heard of obfuscation, but this is just hell in text format!” -

Me to co-worker: The tests are failing because you didn't format your code before submitting your PR

-Co-worker changes the test command to run the format command just before running the tests-

Co-worker: The tests are passing now!

-facepalm- -

Me when a client who won't listen to reason insists on me sending files as email attachments in .zipx format. :(

-

The age-old question between `DD/MM/YYYY` or `MM/DD/YYYY`.

After some shower thoughts, my new preference is `YYYY/MM/DD`. For Americans and Lithuanians (the only two that I know of), it just looks like the year has been placed first; otherwise, they read it as normal. For everyone else, the date is reversed and therefore will be read in reverse, leading to the same answer.

In addition, `YYYY/MM/DD/some-dated-file` as a file path works exceptionally well for storing files, as it uses the least amount of repetition. -
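A side benefit of year-first, zero-padded paths is that a plain string sort puts them in chronological order. A small sketch; the `datedPath` helper and the file name are made up for illustration:

```javascript
// Build a "YYYY/MM/DD/<name>" path from a Date, using UTC so the result
// does not depend on the machine's time zone.
function datedPath(date, name) {
  const yyyy = String(date.getUTCFullYear()).padStart(4, "0");
  const mm = String(date.getUTCMonth() + 1).padStart(2, "0");
  const dd = String(date.getUTCDate()).padStart(2, "0");
  return `${yyyy}/${mm}/${dd}/${name}`;
}

const paths = [
  datedPath(new Date(Date.UTC(2024, 10, 2)), "report.txt"),
  datedPath(new Date(Date.UTC(2023, 11, 31)), "report.txt"),
  datedPath(new Date(Date.UTC(2024, 0, 15)), "report.txt"),
];

// Zero-padded year-first paths sort chronologically with a plain string sort.
paths.sort();
console.log(paths);
```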

List of things one of my Python projects needs:

- cross-platform IMA/VFD/VHD/VHDX/qcow/VMDK/IMG/DSK/others image read/extract support that doesn't need admin/root privs (so no, can't use dd or mount)

- custom DB format (for speedups when indexing files and retrieving info based on hash) and converter from previous DB format

- GUI or actually good CLI

- massive speedups

kill me now -

Another dev concept butchered by business people:

BDD, but does not integrate into any tests, uses arbitrary language and format, covers only happy path...

Kill me now, please -

Command --help prints there's a --format=xml option but I never got it to work. Downloaded the source code and found:

case XML:

/* NYI */ -

rust, where pressing autoformat won't format your code but will format your comments which are just there because you're trying to keep track of the data you're going to parse and now that shit is off the page motherfucker can you not

-

How is FBX the main format of blender when it is so damn bad

For the last 10 minutes I have tried exporting a model as fbx so that it has the same scale and coordinate system as in blender. That's literally the only job a model file format has to do

I still haven't managed to do it. How can a model format be this frustratingly bad??? -

"Hi X, I'm getting some push back on a bigger hard drive seeing as you'll be on the only person in the company who has something bigger than 250GB. Are you able to give me the headline values of how you've used up 249GB so far please?"7

-

Try inserting today's date into an Access DB using SQL (01/03/2017 in dd/MM/yyyy format). Open the DB and check what was inserted instead.

-

I don't understand people that say

'I like the current format of the readme more'

...

What formatting??

This was the PR:

https://github.com/SakuraNoKisetsu/... -

Devrantron leads to a deb in the AUR? makepkg isn't handling it, and it literally just tosses me an error saying "file format not recognized"

-

New feature request that could be made unnecessary by the client just sticking to one of 4 different, very similar input formats instead of many off-the-cuff formats that conflict and that I can't guess, let alone a computer. But I presented an outline idea of the solution with his specs.

I didn't complain, just told him what needs to change, what our constraints will be, how the info is interpreted, etc.

Client says "don't spend time on code for that feature.. stick to other original work for now"! OMG, he's getting it! Sweet. I only wasted an hour this time, and if he does want the feature, we have an agreed spec for it. We can get back to handling the customer-level shit and maybe he can finally make some more money.

Scope creep plus 0, me plus one. Scope creep still in the lead by a lot. Oh well. Still, this guy is getting more tolerable -

To the experienced coders here on devrant: Any valuable tip(s) for newbie programmers (independent of language)?

My suggestion: Enable 'format on save' in your IDE. Seriously, how did I survive without this?! -

question1: Are you a programmer?

answer: yes

question2: Can you repair my computer or format it?

answer: ...

What would you answer then? Please help me. -

I'm gonna write a book and call it "Errata Obscura" and it's just going to be greentext format of all the weird ass coupling problems I've come across in the last few years.

-

Here's something that should be a standard rule for writing APIs:

When you offer a date filter for your API, the date format passed in should be a UNIX timestamp and not a literal date. For example,

Incorrect API URL format: '?start_date=2024-03-01&end_date=2024-04-01'

Correct API URL format: '?start_date=1709251200&end_date=1711929600' -
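For reference, the two timestamps in the example correspond to midnight UTC on those dates. A short sketch of producing them; treating the dates as UTC is an assumption (the rant does not name a time zone), and `toUnixSeconds` is a made-up helper name:

```javascript
// Convert a calendar date (interpreted as midnight UTC) to a UNIX timestamp in seconds.
function toUnixSeconds(year, month, day) {
  // Date.UTC takes a zero-based month and returns milliseconds.
  return Date.UTC(year, month - 1, day) / 1000;
}

const query = new URLSearchParams({
  start_date: String(toUnixSeconds(2024, 3, 1)),
  end_date: String(toUnixSeconds(2024, 4, 1)),
});

console.log("?" + query.toString()); // ?start_date=1709251200&end_date=1711929600
```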

I'm facing a strange problem, I have a 400GB microsd, it is formatted as exFAT

I tried formatting it again to either NTFS or ext4, on either Linux or macOS, but every tool says the format is complete, and then when I scan again it still shows the files the storage had, and that it's exFAT.

I tried gparted, Disk Utility (macOS), Disks (Ubuntu), and mkfs. All show the same result: the card was formatted successfully, but after a refresh it still shows the old filesystem and the old contents; no file was removed.

Can anyone help? -

Prettier is absolute cancer.

Just logged in here to say this.

esLint is annoying as fuck, but Prettier is absolute cancer.

Are you webdev guys too stupid to format code or agree as a team on how to format, so that you got those annoying horror tools with default bullshit rules like a comma after the last element in arrays or that shit about using ' instead of "? What the fuck is wrong with web devs? -

Implement a rest API for elasticsearch.

Follow the client's index's mapping.

Generate json document from Java pojos, given by the client.

Jsons don't match the schema mapping, one (at least) field, for geographic coordinates, is in another format.

Ask the client for explanation.

Client response, after 6 hours:

"We build it in this shape so you have to convert them to another format before posting into ES".

What the hell is wrong with you?! -

Difference between security threat and programming bug ?

Found a cool paper about format string attacks which mentioned buffer Overflow is a security threat while format string is a programming bug.

Had no idea what that really meant.

Tnx -

For some unfathomable fucking reason, rust-analyzer decided to dump formatter crash reports into the worktree alongside the file that failed to format. How do I even begin to investigate this?

-

"It's really important this gets done."

"Also in fixing one of the APIs we changed the format of the data returned and now things are broken on the front end ...."

🤔 -

Am i allowed to name an app/project starting with lowercase "i"?

E.g.

iDriveCar

iPoopShit

iEatFood

Etc...

Or has this been patented by Apple so only they can name words in such format? -

`load pubkey "/Users/karunamon/.ssh/id_rsa": invalid format`

The fuck? I've been using this keyfile for ages. And that's the private key, not the public key.

Maybe I'll try converting it to a different format.

(20 minutes of ssh-keygen command attempts)

Same error. I don't freaking get it. It works. I mean, I know my public key is..

(public key is actually completely mangled with newlines everywhere)

..yknow what, my fault, but you could have at least given me the public key filename, ya jerk. -

“X” and “Meta” is exactly how a serial killer disguises themselves. Same piece of shit, different identity.

https://mashable.com/article/... -

Not a fan of legacy products which make use of a tonne of TSQL stored procedures, especially when they include a mess of logic, spelling errors, no version control of course, all squirreled away for some unlucky person to find and unpick in years to come.

-

What is it with companies putting me on their half assed legacy products that are critical but they won't commit enough staff and resources to improve them properly?

-

So I apparently forgot to encrypt some parameters when sending error reports from our app to the server.

Which means the server tried to decrypt them but couldn't and just threw an error...

No error logs for the app this week I guess. Yay!

I need "git reset --hard HEAD~1" for my brain this weekend, to get rid of this week... -

Can somebody please explain to me how in the fuck does :

{

"src" : "./template.html",

"format" : "plain",

"input" : {

"noun" : "World"

},

"replace" : "templateExampleSkeleton"

}

Result in:

format: "plain"

input: "[object Object]"

replace: "templateExampleSkeleton"

src: "./template.html"

When put through JSON.parse()??? -
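`JSON.parse` by itself keeps nested objects intact, so the `"[object Object]"` most likely comes from a later step that coerces the value to a string. That is a guess, since the rant does not show the surrounding code. A minimal sketch of both behaviours:

```javascript
const text = '{"src": "./template.html", "format": "plain", "input": {"noun": "World"}, "replace": "templateExampleSkeleton"}';

const parsed = JSON.parse(text);

// JSON.parse keeps the nested object intact.
console.log(typeof parsed.input); // "object"
console.log(parsed.input.noun); // "World"

// "[object Object]" is what you get when a plain object is coerced to a string,
// for example by concatenation or by a store that only holds strings.
console.log(String(parsed.input)); // "[object Object]"
console.log("" + parsed.input); // "[object Object]"
```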

Whyyy does the price in the XML import have to be in a format that's impossible to parse? Please use a fucking dot next time, thanks!
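If the prices use a comma as the decimal separator, a small normalizing helper may be enough. This is a sketch under an assumed input convention ("." for thousands, "," for decimals, as in "1.234,56"); the rant does not say what the actual format was, and `parseCommaDecimal` is a made-up name.

```javascript
// Parse a price written with "." as thousands separator and "," as decimal
// separator, e.g. "1.234,56". The input convention is an assumption.
function parseCommaDecimal(text) {
  const normalized = text.trim().replace(/\./g, "").replace(",", ".");
  if (!/^-?\d+(\.\d+)?$/.test(normalized)) {
    return NaN;
  }
  return Number(normalized);
}

console.log(parseCommaDecimal("1.234,56")); // 1234.56
console.log(parseCommaDecimal("12,5")); // 12.5
```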

-

I'll be truly happy if, just for once, a girl contacts me about stuff unrelated to computers.

e.g. format their laptop, install a printer, hack into fb =.= -

Void Linux: weeee youw thinkpad hdd is bwoken, can’t instaww, gwub ewwow 17😭

Windows XP: hold my “Format NTFS (quick)”, wimp -

fuck Jira's 5 days ago date format.

I hate that I fucking have to inspect it every time to get a normal date. -

They want me to document Nifi flows.... In a spreadsheet

Like... Just...

And the existing format is just awful -

argghh no auto format standards on JavaScript

also, libraries breaking for no reason because no types _I guess_ -

Open question:

Do you and your dev team openly embrace using a formatter for your projects, or do you not? And why?

My senior dev is trying to argue that it creates too much noise for commits, especially on older files and it's driving me nuts because I religiously use it (default TS formatter in VSCode btw, nothing crazy or super opinionated!) -

University asks for a uml diagram as a companion for the project files (which need to be in bluej format, FML cancerous ide..)

Yeah right.... -

rust can't even do rustfmt properly

it just does things unadvertised

like reorder_impl_lines which is described as putting type and const on top of files adds new lines between fn declarations and that's not disclosed anywhere. ffs took me a while to figure it out

and chain_width should be different for fn calls and match statements. because newlining multiple fn calls makes it readable, but newlining match statements and wrapping them in {} does not / makes it ugly. there is match_arm_blocks but it still newlines random stuff awkwardly, raaghh

I thought hey so cool I can write without caring about formatting and just press Ctrl + shift + i and all done but now I'm arguing with the formatter and the settings available suck and are poorly described. please don't write a formatting documentation with no examples, wtf? And disclose everything it does, preferably with consistent language so I can search the page (some of the descriptions say new line others call a new line a break. thanks) -

I have a bunch of numbers and I need to draw a chart. How to do it....hey, I have Excel! So, I'll just select the lines from the text file....and copy/paste that to Excel...Clickety-click through the import wizard and voilà!

"I imported the numbers and set them to dates!"

No. Just numbers. But ok, I'll select to format to "general".

"Ok! You numbers are now 0.33343, 0.939393 etc.!"

No no, I just want the original numbers. Let's delete everything and import again. I'll pre-set the cells to "text" just to be sure...

"Ok! I imported your dates and set the cell format to shit!"

WTF you dumb fuck. Just paste the numbers like I wanted! They are *not* dates...Click-click-click....

"Dates added and the format is your local format that you never set and never wanted!"

<tearing hair from my head> God damn holy fuck.

And every time you go through the same import "wizard" tabs. More like import retard. -

My father lost the password to his Google account =_= TFA needs the phone number ... but the phone is locked ... cannot format the phone because of FRP ... technology is so shit these days...

-

I really look forward to getting rid of end user and front end crap!

Just wasted 3 hours because of a bug report of a client stating, that "the printouts always have a useless empty page after the desired content".

Well, yeah. There actually is content on the site that's meant to be printed.

After 3 hours of fine-tuning and debugging I found out that the content is in A4 (European default paper format: 210x297mm) and the customer tried printing in some weird ~215.9x279.4mm format. Apparently that's the US 8.5x11" letter format.

FML -
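The arithmetic behind the stray page, as a quick sketch: US Letter is 8.5 × 11 inches, and one inch is 25.4 mm. The `inchesToMm` helper is made up for illustration.

```javascript
const MM_PER_INCH = 25.4;

// Convert inches to millimetres, rounded to one decimal place.
function inchesToMm(inches) {
  return Math.round(inches * MM_PER_INCH * 10) / 10;
}

const letter = { width: inchesToMm(8.5), height: inchesToMm(11) };
const a4 = { width: 210, height: 297 };

console.log(letter); // { width: 215.9, height: 279.4 }
// Letter is wider but shorter than A4, so A4-height content spills onto a second page.
console.log(a4.height > letter.height); // true
```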

When you have to do an assignment for university but the sheet with the instructions is so badly formatted that you have to read the whole sheet again to find a line. BTW the prof also has a philosophy degree.

-

The Todo App is such a simple example that for a lot of libraries/frameworks it does very little to give me confidence that it's useful in large scale applications. So the solution is always get stuck in using the thing, hope the community are active enough to help answer questions and that the whole thing isn't a waste of time.

-

Hey guys ,

I just finished the first specification of a format I call CommandFile.

https://github.com/thosebeans/...

It's a configuration file format, largely designed after dockerfile with bits of TOML.

Can anyone of you, who is more well versed in writing specifications than me, read over the spec and check if it's concrete enough and if restrictions are reasonable? -

I will always fill out dates following ISO 8601 in the margins of forms that require the American format.

2018-02-27

2018-02-27

2018-02-27 -
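For completeness, a minimal sketch of producing that ISO 8601 calendar date in code. The `isoDate` name is made up, and the date is taken in UTC:

```javascript
// Format a Date as an ISO 8601 calendar date (YYYY-MM-DD) in UTC.
function isoDate(date) {
  return date.toISOString().slice(0, 10);
}

console.log(isoDate(new Date(Date.UTC(2018, 1, 27)))); // 2018-02-27
```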

How should I format my Indian Address so that I get stickers from devrant...

What should be the Format?? -

json encode...... helps when the need for integration with other platforms arises. JSON is a good format for sending streams

-

I don't know how to tell Visio to print my drawings in A3 format so I print them in A4, put the papers on the copy machine and copy them to A3

-

The best explanation why we need Static Import Libraries to load a dll and wtf they contain

https://cnblogs.com/adylee/p/...

and a lot of interesting stuff about the PE file format -

I'm gonna do it, no one can stop me! I will do it now, I will create yet another file format using YAML for Biblical software!!

-

Why tf are you telling me that I get to choose the format of the text that I'll need to parse later if you keep using any other freakin possible format on earth except for the one I chose 😠

-

Two days working on company server, format and reinstall ubuntu.

Using cd is much better than using thumb drive -

Depends on what style means...

How I format the code: language, team/style-check rules, IDE auto format settings

How I structure my code and design programs: experience... Mainly from blowing stuff up, having to rewrite monolith code, trying to understand other people's shitty code and why they can't seem to organize it better so you don't need to be a surgeon or God to even attempt to figure out wtf it's doing and how it works... Or supposed to work. -

MP3 did not get re-licensed by the Fraunhofer institute - they want to push a new format :0

MPEG-H? -

Using input type time and the format is set by chrome language.

Is there any way to force 24h format other than changing the locale, if using an external library such as a date picker isn't an option?

I have found out that South African English is a locale with 24h-format time. -

Accidentally started mkfs.ntfs without --fast.

Is there a way to kill a program without waiting for writes to finish? -

if you guys have an hour or two to burn, mind helping fix pages on my new wiki, https://malwiki.org? We need to upload images and such from our old wiki, https://malware.wikia.com, and turn the somewhat-broken Wikia format back into proper MediaWiki format.

-

I know there is this huge argument about whether to use tabs or 4 spaces and while I'm on neither side, just sitting there using tabs, in this new project I'm FORCED to use a 1 space indentation and no line breaks in Android layout XML files format.

I sat there for about 10 minutes trying to wrap my head around this absurd specification they agreed upon with the client. The code looks SHIT and every time I copy some beautifully formatted reference code into this project it turns into a piece of unreadable garbage.

But since I'm just a part-timer and the senior developer working on this project for some years now is much more experienced than me, I'm hesitant to criticise it more than I already did.

Maybe I'll start arguing with industry standards and how much easier it would be for new developers to read our code... -

Convert any SlideShare slide into PDF format in one click. Time pass project

https://slidesharepdfdownloader.herokuapp.com/...

-

Twitter now allows adding up to 4 images and doesn't count them toward the symbol count. I have no idea what to do with such a great power.

-

Mad how Jobs proposed that apps should basically follow the PWA format on the original iPhone yet Safari is the one lagging behind now.

-

You know what I think they should extend chatgpt to do? Output some of its results in math latex or circuit diagrams in jpg or some popular xml format

-

Code examples with dozens of lines in the project documentation (docx) are screenshots... too lazy to format, or to prevent simple copy and paste? That's the question.

-

Everything I did was wrong with a CTO because I didn't format identically to him, balls were busted daily.

-

I wish sophos had some sort of out of the box mode for development which avoided slowing down IDEs and build servers

-

HERO GRAPHICS: Your Trusted Partner for Wide Format Printing in Burbank

Located in the vibrant city of Burbank, HERO GRAPHICS specializes in delivering top-tier wide format printing solutions tailored to meet the diverse needs of businesses, artists, and event organizers. With cutting-edge technology and a commitment to quality, we bring your big ideas to life with vivid, high-impact prints that stand out.

Expert Wide Format Printing in Burbank

Wide format printing is essential for creating large-scale visuals that grab attention — from banners and posters to signage and vehicle wraps. At HERO GRAPHICS, we combine advanced printing equipment with premium materials to produce sharp, durable, and vibrant prints. Whether you need promotional materials for your business or eye-catching displays for an event, our team in Burbank ensures your project is handled with care and precision.

Why Choose HERO GRAPHICS for Your Printing Needs?

Local Expertise: Proudly serving Burbank and the surrounding areas, we understand the unique needs of our community.

High-Quality Prints: Our wide format printing guarantees crisp images and long-lasting results.

Customized Solutions: We tailor every project to your specifications, ensuring your vision is realized perfectly.

Fast Turnaround: Need it quickly? We offer efficient service without sacrificing quality.

Dedicated Support: Our experienced team is here to guide you from concept to completion.

Contact HERO GRAPHICS Today

Ready to make a big impression with wide format printing in Burbank? Contact HERO GRAPHICS at:

Address: 119 E Graham Pl, Burbank, CA 91502, United States

Phone: +1 (818) 768-8437

Let HERO GRAPHICS be your go-to resource for stunning, professional wide format printing that elevates your brand and message. -

Is it bad practice to write a line of code longer than the screen, meaning you need to scroll on the X? I've had one lecturer who hates it and one who doesn't give a shit and I'm not sure of the standard

-

I was once formatting a pendrive and Windows decided to shutdown and killed the pendrive... It ain't mine you know?

-

How does FS-based locking,

Eg. What sqlite does, work on the inside?

I'm working on a file-format for storing binary data and I want it to be safe for concurrent use. -
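For context: SQLite itself relies on OS-level advisory locks on the database file (`fcntl` byte-range locks on POSIX systems). Node.js has no built-in binding for those, so the sketch below swaps in a different and simpler technique, a lock file created with an exclusive open. The `tryLock` name and the idea of a sidecar lock file are assumptions for illustration, not how SQLite does it.

```javascript
const fs = require("fs");

// Try to take the lock by creating the lock file exclusively.
// Returns a release function on success, or null if someone else holds the lock.
function tryLock(lockPath) {
  let fd;
  try {
    // "wx" fails with EEXIST if the file already exists, so creation is the atomic test-and-set.
    fd = fs.openSync(lockPath, "wx");
  } catch (err) {
    if (err.code === "EEXIST") {
      return null;
    }
    throw err;
  }
  // Record the owner's PID to help with debugging stale locks.
  fs.writeSync(fd, String(process.pid));
  fs.closeSync(fd);
  return function release() {
    fs.unlinkSync(lockPath);
  };
}
```

The main weakness of a lock file is that it stays behind if the process crashes, whereas OS-level advisory locks are dropped automatically when the holding process exits.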

How would one approach writing an ai that writes code given an objective and some data sources and some output format ? Theoretically

-

You know that you are working with a DB guy when he provides you a "REST" interface that outputs table data in JSON format and not even the JSON syntax is correct.

-

Pigment 0.2

🎨 A lightweight utility for color manipulation and conversion.

Features

Color Conversion: Convert colors between HEX, RGB, HSL, HSLA, RGBA and Tailwind CSS formats.

Lightness Control: Lighten or darken a color by a specified percentage.

Random Color Generation: Generate random colors in HEX, RGB, HSL, HSLA, RGBA or Tailwind CSS format.

Opacity Control: Set the opacity of color in any format.

Blend Colors: Blend two colors in any format together in a specified ratio. -
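To make the conversion and blending features concrete, here is a rough sketch of how such helpers can work. None of these function names come from Pigment; they are hypothetical stand-ins, limited to 6-digit HEX input.

```javascript
// Convert "#rrggbb" to { r, g, b }. Hypothetical helper, not Pigment's real API.
function hexToRgb(hex) {
  const match = /^#?([0-9a-f]{6})$/i.exec(hex);
  if (!match) {
    throw new Error("expected a 6-digit hex color, got: " + hex);
  }
  const value = parseInt(match[1], 16);
  return { r: (value >> 16) & 255, g: (value >> 8) & 255, b: value & 255 };
}

// Convert { r, g, b } back to "#rrggbb".
function rgbToHex({ r, g, b }) {
  return "#" + [r, g, b].map((c) => c.toString(16).padStart(2, "0")).join("");
}

// Blend two hex colors; ratio 0 gives the first color, 1 gives the second.
function blend(hexA, hexB, ratio) {
  const a = hexToRgb(hexA);
  const b = hexToRgb(hexB);
  const mix = (x, y) => Math.round(x + (y - x) * ratio);
  return rgbToHex({ r: mix(a.r, b.r), g: mix(a.g, b.g), b: mix(a.b, b.b) });
}

console.log(hexToRgb("#ff8000")); // { r: 255, g: 128, b: 0 }
console.log(blend("#000000", "#ffffff", 0.5)); // #808080
```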

I need a CV for a small, unimportant side job. Do I need a fancy-shmancy CV, or can I go with just the most important information properly formatted in a simple Word document? I think simplicity is crucial. I have pretty high qualifications, so they should hire me for that if nobody better arrives.

-

so we want to use this software for document management (versioning and stuff). i totally understand why the developer used rtf for document templates. but it took me freaking 6 hours to create a simple document header while finding out 500 designs and methods that didn't work due to the rtf format corset that differs more from word formatting abilities than i expected.

-

!Rant

I've got somewhat of a problem: a client claims that the date format on a website is wrong. I am using Carbon for date output, which extends PHP's DateTime, which uses the Linux locales. Can someone here confirm that they have seen a similar bug where the date is wrong in Romanian, Slovenian and Czech? (The format would be somewhat like Wednesday, 17. January 2017). -

I got android nougat on my phone and of course my root was gone

It was fairly easy, but along the way I had to format some partitions (like /data). After that I flashed some zips and put the backup of my data partition back on my phone

Pictures were missing, some app data was missing, also WhatsApp pictures and videos

Turns out, twrp saves pretty much everything, but leaves out the user data folder, where all of that stuff would've been

I'm just happy that I'm not one of those people who need to keep thousands of pictures

I don't really know what kind of stuff I lost, probably not too important