Search - "proxy"

-

A scammer called me today. They were saying that harmful files were moved to my computer and they needed to remove them. I don't think they are ever going to call me again.

S = scammer; M = me;

S: this is tech support we need access to your computer because we detected harmful files and need to remove them.

M: oh my! Hold on, let me go to my computer now. How can you access it?

S: we can just use RDP and delete the files. They are in a hidden folder that is encrypted so this is the only way.

M: oh ok I believe you. Hm... it looks like my son only allows certain IP addresses to access our computers.. I don't know how to disable this so can you just email me your IP address?

S: Sure...

He then sends me his actual IP address... it doesn't even look like a proxy or VPN.

M: oh my I forgot that you need my password to login. It's really long and complicated... can I just email it to you?

S: Sure!!

I then tell him to hold on, I have to find it because my "son" stored it somewhere.

At this time I'm taking a photo of my bare ass and attaching it to the email. I then say in the email "Please note what my job title is in my signature.. I just sent the FBI your name, phone number, email, and IP address. Please enjoy my bare ass, you'll see a lot of it in prison."

-

Browsing to a porn site while still being in the corporate VPN.

Got a proxy page which said this type of content isn't allowed at work. Nearly had a heart attack ;D

-

So I got the job. Here's a story, never let anyone stop you from accomplishing your dreams!

It all started in 2010. Windows just crashed unrecoverably for the 3rd time in two years. Back then I wasn't good with computers yet so we got our tech guy to look at it and he said: "either pay for a windows license again (we nearly spent 1K on licenses already) or try another operating system which is free: Ubuntu. If you don't like it anyways, we can always switch back to Windows!"

Oh well, fair enough, not much to lose, right! So we went with Ubuntu. Within about 2 hours I could find everything. From the software installer to OpenOffice, browsers, email things and so on. Also I already got the basics of the Linux terminal (bash in this case) like ls, cd, mkdir and a few more.

My parents found it very easy to work with as well so we decided to stick with it.

I already started to experiment with some html/css code because the thought of being able to write my own websites was awesome! Within about a week or so I figured out a simple html site.

Then I started to experiment more and more.

After about a year of trial and error (repeat about 1000+ times) I finally got my first Apache server setup on a VirtualBox running Ubuntu server. Damn, it felt awesome to see my own shit working!

From that moment on I continued to try everything I could with Linux because I found the principle that I basically could do everything I wanted (possible with software solutions) without any limitations (like with Windows/Mac) very fucking awesome. I owned the fucking system.

Then, after some years, I got my first shared hosting plan! It was awesome to see my own (with subdomain) website online, functioning very well!

I started to learn stuff like FTP, SSH and so on.

Went on with trial and error for a while and then the thought occurred to me: what if I'd have a little server ONLINE which I could use myself to experiment around?

First rented VPS was there! Couldn't get enough of it and kept experimenting with server thingies, linux in general aaand so on.

Started learning about rsa key based login, firewalls (iptables), brute force prevention (fail2ban), vhosts (apache2 still), SSL (damn this was an interesting one, how the fuck do you do this yourself?!), PHP and many other things.

Then, after a while, the thought came to mind: what if I'd have a dedicated server!?!?!?!

I ordered my first fucking dedicated server. Damn, this was awesome! Already knew some stuff about defending myself from brute force bots and so on so it went pretty well.

Finally made the jump to NginX and CentOS!

Made multiple VPS's for shitloads of purposes and just to learn. Started working with reverse proxies (nginx), proxy servers, SSL for everything (because fuck basic http WITHOUT SSL), vhosts and so on.

Started with simple, one screen linux setup with ubuntu 10.04.

Running a five monitor setup now with many distro's, running about 20 servers with proxies/nginx/apache2/multiple db engines, as much security as I can integrate and this fucking passion just got me my first Linux job!

It's not just an operating system for me, it's a way of life. And with that I don't just mean the operating system, but also the idea behind it :).

-

Funny story about the first time two of my servers got hacked. The fun part is how I noticed it.

So I purchased two new vps's for proxy server goals and thought like 'I can setup fail2ban tomorrow, I'll be fine.'

Next day I wanted to install NginX so I ran the command and it said that port 80 was already in use!

I was sitting there like no that's not possible I didn't install any server software yet. So I thought 'this can't be possible' but I ran 'pidof apache2' just to confirm. It actually returned a PID! It was a barebones Debian install so I was sure it was not installed yet by ME. Checked the auth logs and noticed that an IP address had done a huge brute force attack and managed to gain root access. Simply reinstalled debian and I put fail2ban on it RIGHT AWAY.

Checked about two seconds later if anyone tried to login again (iptables -L and keep in mind that fail2ban's default config needs six failed attempts within I think five minutes to ban an ip) and I already saw that around 8-10 addresses were banned.
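For anyone wondering what that "N failed attempts within a time window" rule boils down to, here is a minimal sketch of the idea in Python. It is not fail2ban's actual code; the class name `FailedLoginTracker` is made up for illustration, and the defaults of 6 attempts and 300 seconds simply mirror the figures recalled above, which may not match fail2ban's real defaults.

```python
from collections import defaultdict, deque


class FailedLoginTracker:
    """Toy model of a fail2ban-style jail (hypothetical, for illustration only)."""

    def __init__(self, max_retry=6, find_time=300):
        # Assumed values taken from the rant, not from fail2ban's shipped config.
        self.max_retry = max_retry
        self.find_time = find_time  # window length in seconds
        self.failures = defaultdict(deque)
        self.banned = set()

    def record_failure(self, ip, timestamp):
        """Register one failed login and return True if the IP is banned."""
        if ip in self.banned:
            return True
        window = self.failures[ip]
        window.append(timestamp)
        # Forget failures that are older than the sliding window.
        while window and timestamp - window[0] > self.find_time:
            window.popleft()
        if len(window) >= self.max_retry:
            self.banned.add(ip)
        return ip in self.banned
```

A brute-force bot hammering the login trips the threshold within seconds, which would explain a handful of bans showing up almost immediately.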

Was pretty shaken up but damn I learned my lesson!

-

List of things that my fucking corporate proxy blocks

* Maven

* The NPM registry

* Github

List of things that aren't blocked

* Google drive

* Twitter

* Porn

Half my mobile data is burned away by NPM sinkholes. Fuck this place.

-

This is super childish but it's the gameserver industry and karma is a bitch.

TLDR: I hacked my boss

I was working for a gameserver and I did development for about 3 months and was promised pay after the network was released. I followed through with a bunch of dev friends and the guy ended up selling our work. He didn't know that I was aware of this as he tried to tell people to not tell us but one honest person came forward and said he sold our work for about 8x the price of what he owed ALL OF US collectively.

I proceeded to change the server password and when he asked why he couldn't log in I sent him an executable (a crypted remote access tool) and told him it was an "encryption tunnel" that makes ssh and file transfers secure. Being the idiot that he is he opened it and I snagged all of his passwords including his email and I changed them through a proxy on his machine to ensure I wouldn't get two factored with Google. After I was done I deleted system 32 :3

-

We're using a ticket system at work that a local company wrote specifically for IT-support companies. It's missing so many (to us) essential features that they flat out ignored the feature requests for. I started dissecting their front-end code to find ways to get the site to do what we want and find a lot of ugly code.

Stuff like if(!confirm("blablabla") == false) and whole JavaScript libraries just to perform one task in one page that are loaded on every page you visit, complaining in the js console that they are loaded in the wrong order. It also uses a websocket on a completely arbitrary port making it impossible to work with it if you are on a restricted wifi. They flat out lie about their customers not wanting an offline app even though their communications platform on which they got asked this question once again got swarmed with big customers disagreeing as the mobile performance and design of the mobile webpage is just atrocious.

So i dig farther and farther, adding all the features we want into a userscript with a neat little 'custom namespace'. i make pretty good progress until i find a site that does asynchronous loading of its subpages all of a sudden. They never do that anywhere else. Injecting code into the overcomplicated jQuery mess that they call code is impossible to me, so i track changes via a MutationObserver (awesome stuff for userscripts, never heard of it before) and get that running too.

The userscript got such a volume of functions in such a short time that my boss even used it to demonstrate to them what we want and asked them why they couldn't do it in a reasonable timeframe.

All in all I'm pretty proud of the script, but i hate that software companies that write such a mess of code in different coding styles all over the place even get a foot into the door.

And that's just the code part: They very veeeery often just break stuff in updates that then require multiple hotfixes throughout the day after we complain about it. These errors even go so far to break functionality completely or just throw 500s in our face. It really gives you the impression that they are not testing that thing at all.

And the worst: They actively encourage their trainees to write as much code as possible to get paid more than their contract says, so of course they just break stuff all the time to write as much as possible.

Where did i get that information you ask? They state it on their fucking career page!

We also have reverse proxy in front of that page that manages the HTTPS encryption and Let's Encrypt renewal. Guess what: They internally check if the certificate on the machine is valid and the system refuses to work if it isn't. How do you upload a certificate to the system you asked? You don't! You have to mail it to them for them to SSH into the system and install it manually. When will that be possible you ask? SOON™.

At least after a while i got them to just disable the 'feature'.

While we are at 'features' (sorry for the bad structure): They have this genius 'smart redirect' feature that is supposed to throw you right back where you were once you're done editing something. Brilliant idea, how do they do it? Using a callback link like everyone else? Noooo. A serverside database entry that only gets correctly updated half of the time. Multitasking in multiple tabs, which the performance of that thing almost forces you to do, makes it a whole lot worse, but you are not protected from it if you don't. Example: you did work on ticket A and save that. You get redirected to ticket B you worked on this morning even though it's fucking 5 o' clock in the evening. So of course you get confused over whether you selected the right ticket to begin with. So you have to check that almost every time.

Alright, rant over.

Let's see if i need to make another one after their big 'all feature requests on hold, UI redesign, everything will be fixed and much better'-update.

-

Once we were going to present a web service to governmental firm. All is going well so far and my boss asks me to host the web application the day before the presentation.

I hosted it and all was good with demo production tests, but I had a bad feeling.

While it was running on our server, I also ran it locally with a reverse proxy just in case.

* Meeting starts *

* Ice broken and down to business *

"And now our developer will run the demo for you..."

* Run the demo from my laptop to double check --> 500 Internal Server Error *

Holy shit!!!

* Opens reverse proxy link on my laptop. Present demo during meeting. Demo works like a charm. *

Firm representative: "Great! Looking forward to go live."

*Our team walks out*

GM: "Good job guys"

ME: 4

-

So just recently my school blocked the following websites for unknown reasons

Github

Gitlab

Amazons aws

stack exchange

Bitbucket

Heroku

The hacker news

DuckDuckGo

The Debian package repositories yea all of em

And all domains that end in .io

Now some of you out there are probably just saying "well just use a vpn". The answer to that is I can't. The only device I have is a locked down school iPad: I can't install apps, cannot delete apps, cannot change vpn or proxy settings and cannot use Safari private tabs. They have google safe search restricted to "on", they even have "safari restricted mode which lets safari choose what it wants to block", and even when I'm on my home wifi it's still blocked as they use Cisco security connector. THIS IS HELL

Also this is my first post :)

-

It's never enough, is it?

I was going to write a simple dns server/proxy/firewallish thingy in php.

That's working. I'm adding a dashboard and api now 😅

-

Co-worker: I need a proxy to do this task.

Me: Why do you need a proxy?

Co-worker: So all these reviews for the company I'm posting don't look sketchy.

Me: Download the TOR browser.

Co-worker: That's kinda sketchy I don't wanna do that.

So falsifying information about the company is okay, but using a browser to do it anonymously is right out.

-

I was offered to work for a startup in August last year. It required building an online platform with video calling capabilities.

I told them it would be on learn and implement basis as I didn't know a lot of the web tech. Learnt all of it and kept implementing side by side.

I was promised a share in the company at formation, but wasn't given the same at the time of formation because of some issues in documents.

Yes, I did delay at times on the delivery date of features on the product. It was my first web app, with no prior experience. I did the entire stack myself from handling servers, domains to the entire front end. All of it was done alone by me.

Later, I also did install a proxy server to expand the platform to a forum on a new server.

And yesterday after a month of no communication from their side, I was told they are scrapping the old site for a new one. As I had all the credentials of the servers except the domain registration control, they transferred the domain to a new registrar and pointed it to a new server. I have a last meeting with them. I have decided to never work with them and I know they aren't going to provide me my share as promised.

I'm still in the 3rd year of my college here in India. I flunked two subjects last semester, for the first time in my life. And for 8 months of work, this is the end result of it by being scammed. I love fitness, but my love for this is more and so I did leave all fitness activities for the time. All that work day and night got me nothing of what I expected.

Though, they don't have any of my code or credentials to the server or their user base, they got the new website up very fast.

I had no contract with them. Just did work on the basis of trust. A lesson learnt for sure.

Although, I did learn to create websites completely all alone and I can do that for anyone. I'm happy that I have those skills now.

Since, they are still in the start up phase and they don't have a lot of clients, I'm planning to partner with a trusted person and release my code with a different design and branding. The same idea basically. How does that sound to you guys?

I learned that:

. No matter what happens, never ignore your health for anybody or any reason.

. Never trust in business without a solid security.

. Web is fun.

. Self-learning is the best form of learning.

. Take business as business, don't let anyone cheat you.

-

Have multiple and some server related but hereby:

I forcefully quit php on the server I use for devRant related stuffs because I wanted to quit the backgrounded php process I had running for the dns proxy thingy since I somehow couldn't find the pid.

Two days later I noticed that none of my sites on that server were running anymore and started looking at nginx error logs.

It took me way too long to figure out that I had PHP-FPM installed which runs as a service and by forcefully quitting php the other day.... Yeah, you get it I think.

Started the process again and remembered that one 😅

-

When I'm on call and its weekend, I'm often a little nervous the entire weekend and time seems to go slow.

Programming on the dns proxy/firewall now and time is suddenly going quite faster.

This is a damn relief.

-

I just can't understand what would lead a so-called Software Company, that provides for my local government by the way, to use a cloud server (AWS ec2 instance) like it were a bare metal machine.

They have it working, non-stop, for over 4 years or so. Just one instance. Running MySQL, PostgreSQL, Apache, PHP and an f* Tomcat server with no less than 10 HUGE apps deployed. I just can't believe this instance is still up.

By the way, they don't do backups, most of the data is on the ephemeral storage, they use just one private key for every dev, no CI, no testing. Deployments are nightmares using scp to upload the .war...

But still, they are running several apps for things like registering citizen complaints that come in by hot lines. The system is incredibly slow as they use just hibernate without query optimizations to lookup and search things (n+1 query problems).
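To show what an n+1 query problem looks like, here is a small sketch using Python and an in-memory SQLite database instead of Hibernate. The `citizen` and `complaint` tables, the sample rows and the `CountingDb` helper are all hypothetical. The point is only the difference in the number of round trips between a lookup per row and a single JOIN.

```python
import sqlite3


class CountingDb:
    """Thin wrapper that counts how many SQL statements are sent (illustrative only)."""

    def __init__(self):
        self.conn = sqlite3.connect(":memory:")
        self.count = 0
        # Hypothetical schema and data, not taken from the real system.
        self.conn.executescript("""
            CREATE TABLE citizen (id INTEGER PRIMARY KEY, name TEXT);
            CREATE TABLE complaint (id INTEGER PRIMARY KEY, citizen_id INTEGER, text TEXT);
            INSERT INTO citizen VALUES (1, 'Ana'), (2, 'Bruno');
            INSERT INTO complaint VALUES (1, 1, 'pothole'), (2, 2, 'noise'), (3, 1, 'streetlight');
        """)

    def query(self, sql, params=()):
        self.count += 1
        return self.conn.execute(sql, params).fetchall()


def complaints_n_plus_one(db):
    """One query for the list, then one more query per row: 1 + N statements."""
    result = []
    for citizen_id, text in db.query("SELECT citizen_id, text FROM complaint ORDER BY id"):
        name = db.query("SELECT name FROM citizen WHERE id = ?", (citizen_id,))[0][0]
        result.append((name, text))
    return result


def complaints_joined(db):
    """Same data fetched with a single JOIN: 1 statement."""
    return db.query(
        "SELECT c.name, p.text FROM complaint p "
        "JOIN citizen c ON c.id = p.citizen_id ORDER BY p.id"
    )
```

With three complaints the first version sends four statements and the second sends one. With thousands of rows and a network hop to the database, that gap is roughly where "incredibly slow" comes from.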

They didn't even bother to get a proper domain. They use an IP address and expose the port for tomcat directly. No reverse proxy here! (No ssl too)

I've been out of this company for two years now, it was my first work as a developer, but they needed help for an app that I worked on during my time there. I was really surprised to see that everything is still the same. Even the old private key that they emailed me (?!?!?!?!) back then still worked. All the passwords are still the same too.

I have some good rants from the time I was there, and about the general level of the developers in my region. But I'll leave them for later!

Is it just me or this whole shit is crazy af?

-

3 rants for the price of 1, isn't that a great deal!

1. HP, you braindead fucking morons!!!

So recently I disassembled this HP laptop of mine to unfuck it at the hardware level. Some issues with the hinge that I had to solve. So I had to disassemble not only the bottom of the laptop but also the display panel itself. Turns out that HP - being the certified enganeers they are - made the following fuckups, with probably many more that I didn't even notice yet.

- They used fucking glue to ensure that the bottom of the display frame stays connected to the panel. Cheap solution to what should've been "MAKE A FUCKING DECENT FRAME?!" but a royal pain in the ass to disassemble. Luckily I was careful and didn't damage the panel, but the chance of that happening was most certainly nonzero.

- They connected the ribbon cables for the keyboard in such a way that you have to reach all the way into the spacing between the keyboard and the motherboard to connect the bloody things. And some extra spacing on the ribbon cables to enable servicing with some room for actually connecting the bloody things easily.. as Carlos Mantos would say it - M-m-M, nonoNO!!!

- Oh and let's not forget an old flaw that I noticed ages ago in this turd. The CPU goes straight to 70°C during boot-up but turning on the fan.. again, M-m-M, nonoNO!!! Let's just get the bloody thing to overheat, freeze completely and force the user to power cycle the machine, right? That's gonna be a great way to make them satisfied, RIGHT?! NO MOTHERFUCKERS, AND I WILL DISCONNECT THE DATA LINES OF THIS FUCKING THING TO MAKE IT SPIN ALL THE TIME, AS IT SHOULD!!! Certified fucking braindead abominations of engineers!!!

Oh and not only that, this laptop is outperformed by a Raspberry Pi 3B in performance, thermals, price and product quality.. A FUCKING SINGLE BOARD COMPUTER!!! Isn't that a great joke. Someone here mentioned earlier that HP and Acer seem to have been competing for a long time to make the shittiest products possible, and boy they fucking do. If there's anything that makes both of those shitcompanies remarkable, that'd be it.

2. If I want to conduct a pentest, I don't want to have to relearn the bloody tool!

Recently I did a Burp Suite test to see how the devRant web app logs in, but due to my Burp Suite being the community edition, I couldn't save it. Fucking amazing, thanks PortSwigger! And I couldn't recreate the results anymore due to what I think is a change in the web app. But I'll get back to that later.

So I fired up bettercap (which works at lower network layers and can conduct ARP poisoning and DNS cache poisoning) with the intent to ARP poison my phone and get the results straight from the devRant Android app. I haven't used this tool since around 2017 due to the fact that I kinda lost interest in offensive security. When I fired it up again a few days ago in my PTbox (which is a VM somewhere else on the network) and today again in my newly recovered HP laptop, I noticed that both hosts now have an updated version of bettercap, in which the options completely changed. It's now got different command-line switches and some interactive mode. Needless to say, I have no idea how to use this bloody thing anymore and don't feel like learning it all over again for a single test. Maybe this is why users often dislike changes to the UI, and why some sysadmins refrain from updating their servers? When you have users of any kind, you should at all times honor their installations, give them time to change their individual configurations - tell them that they should! - in other words give them a grace time, and allow for backwards compatibility for as long as feasible.

3. devRant web app!!

As mentioned earlier I tried to scrape the web app's login flow with Burp Suite but every time that I try to log in with its proxy enabled, it doesn't open the login form but instead just makes a GET request to /feed/top/month?login=1 without ever allowing me to actually log in. This happens in both Chromium and Firefox, in Windows and Arch Linux. Clearly this is a change to the web app, and a very undesirable one. Especially considering that the login flow for the API isn't documented anywhere as far as I know.

So, can this update to the web app be rolled back, merged back to an older version of that login flow or can I at least know how I'm supposed to log in to this API in order to be able to start developing my own client?

-

Marketing wants to remove the word "sex" from one of my slide decks.

Fuck people who get outraged for others. They are making a bad situation much worse.

Yes, there are people who get triggered by the slightest thing---but those people are going to be triggered no matter what you do. And it seems to me that I'd not want to have them as customers anyway---massive support cost.

We are in danger of washing everything until it becomes an inoffensive shade of beige.

Why do the 99% have to be bored for the 1%?

It's not like I'm doing a live demo...yet...

So, fuck outrage by proxy. If you are personally outraged then say that. If not, shut the fuck up.

-

The overhead on my JS projects is killing me. Today, I went to implement a simple feature on a project I haven't touched in a few weeks. I wasted 80% of my time on mindless setup crap.

- "Ooh, a simple new feature to implement. Let's get crackin'!"

- update 1st party lib

- ....hmm, better update node modules

- and Typescript typings while I'm at it

- "ugh yeah," revert one node module to outdated version because of that one weird proxy bug

- remove dead tsd references

- fix TS "errors" generated by new typings

- fix bug in 1st party lib

- clean up some files because the linter is nagging me

- pee

- change 6 lines of code <-- the work

- commit!

-

FUCK YOU WORDPRESS

Omfg never been so fucking pissed in my life.

I just wasted 3 hours because this fucking bullshit rewrites the fucking URL based on the URL on a config fucking file?!!?

It fucking ignores: apache virtual host configs and nginx reverse proxy

omfg...

-

hey everyone, I'm new to Dev rant. not quite sure what to say. so i guess I'll just observe.

cheers

-

I've been pleading for nearly 3 years with our IT department to allow the web team (me and one other guy) to access the SQL Server on location via VPN so we could query MSSQL tables directly (read-only mind you) rather than depend on them to give us a 100,000+ row CSV file every 24 hours in order to display pricing and inventory per store location on our website.

Their mindset has always been that this would be a security hole and we'd be jeopardizing the company. (Give me a break! There are about a dozen other ways our network could be compromised in comparison to this, but they're so deeply forged in M$ server and active directories that they don't even have a clue what any decent script kiddie with a port sniffer and *nix could do. I digress...)

So after three years of pleading with the old IT director, (I like the guy, but keep in mind that I had to teach him CTRL+C, CTRL+V when we first started building the initial CSV. I'm not making that up.) he retired and the new guy gave me the keys.

Worked for a week with my IT department to get Openswan (ipsec) tunnel set up between my Ubuntu web server and their SQL Server (Microsoft). After a few days of pulling my hair out along with our web hosting admins and our IT Dept staff, we got them talking.

After that, I was able to install a dreamfactory instance on my web server and now we have REST endpoints for all tables related to inventory, products, pricing, and availability!

Good things come to those who are patient. Now if I could get them to give us back Dropbox without having to socks5 proxy through the web server, i'd be set. I'll rant about that next.

http://tapsla.sh/e0jvJck7 -

Just wrote a (PHP based) proxy which can cache resources being requested and serve them to clients.

The idea is that (I'm going to write a firefox add-on for it too, yes) you can install the add-on and any resource (js/CSS, general web resources which would be downloaded off of googleapi's etc) hosted with Google would be proxied through the server running the proxy, meaning that one wouldn't have to connect to the mass surveillance networks directly anymore as for static resources.

I think checksum verify stuff would still work as the proxy is literally a proxy, the content will be identical to the 'real' resource. (Not sure about this one, enlighten me if this isn't true)
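On the checksum question: if "checksum verify stuff" means Subresource Integrity (the `integrity="sha384-..."` attribute on script and link tags), the browser hashes the bytes it receives and compares that with the attribute. A byte-identical proxy should therefore pass, and anything that alters the body (minifying, re-encoding, injecting) should fail. A minimal sketch of that check in Python, with hypothetical helper names:

```python
import base64
import hashlib


def sri_hash(content: bytes) -> str:
    """Build an SRI-style value: 'sha384-' plus the base64 of the SHA-384 digest."""
    digest = hashlib.sha384(content).digest()
    return "sha384-" + base64.b64encode(digest).decode("ascii")


def proxy_preserves_integrity(original: bytes, proxied: bytes) -> bool:
    """True only if the proxied body hashes to the same SRI value as the original."""
    return sri_hash(original) == sri_hash(proxied)
```

Even a trailing newline added by the proxy changes the hash, so the cache would need to store and serve the upstream bytes untouched.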

Input appreciated!

-

In a moment of boredom I decided to pen test the new system I've been writing on the live server. Ran sqlmap but forgot to proxy my connection.

DDOS protection kicked in and blocked the entire office's connection to the server, had to drive home quickly to use my home internet to un-blacklist my office ip. 😂

-

The company I work for (very big IT consultancy) has made the absolutely genius decision to put a block on the corporate proxy for GitHub. GITHUB. Because no fucking software developer ever needs to visit there. Their reason? "We don't want people publishing our intellectual property". Mate, I can fucking guarantee you that if unscrupulous bastards want to publish code against our T&C's, they will do so. Why make every body else's job harder and block it?!

But the best bit, you can submit a request (that is accepted without question) to get yourself an exemption. WHY THE FUCKING FUCK HAVE THE BLOCK IN THE FIRST PLACE THEN

To add to their fucktardery, they blocked the CDN that hosted stackoverflow's css and JavaScript last year (CloudFlare) weeks after the alleged hack was fixed, and seemingly without any research at all. This obviously rendered stackoverflow unusable. Because again, why would a company full of engineers need to go there.

Morons.

-

Every year my team runs an award ceremony during which people win “awards” for mistakes throughout the year. This year's was quite good.

The integration partner award- one of our sysAdmins was talking with a partner from another company over Skype and was having some issues with azure. He intended to send me a small rant but instead sent “fucking azure can go fuck itself, won’t let me update to managed disks from a vhd built on unmanaged” to our jv partner.

Sysadmin wannabe award (mine)- ran “Sudo chmod -R 700 /“ on one of our dev systems then had to spend the next day trying to fix it 😓

The ain’t no sanity clause award - someone ran a massive update query on a prod database without a where clause

The dba wannabe award - one of our support guys was clearing out a prod dB server to make some disk space and accidentally deleted one of the database's devices bringing it down.

The open source community award - one of the devs had been messing about with an apache proxy on a prod web server and it ended up as part of a botnet
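For the missing-where-clause award above, one cheap safety net is a wrapper that refuses to run an UPDATE or DELETE with no WHERE in it. The sketch below is deliberately naive: it only looks at the statement text, `run_guarded` is a made-up helper name, and it assumes Python with a DB-API connection such as `sqlite3`. It won't catch everything, but it would have caught this one.

```python
import re
import sqlite3


def run_guarded(conn, sql, params=()):
    """Refuse UPDATE/DELETE statements that have no WHERE clause (naive text check)."""
    statement = sql.strip().lower()
    is_write = re.match(r"^(update|delete)\b", statement) is not None
    has_where = re.search(r"\bwhere\b", statement) is not None
    if is_write and not has_where:
        raise ValueError("refusing to run UPDATE/DELETE without a WHERE clause")
    return conn.execute(sql, params)
```

Usage would look like `run_guarded(conn, "UPDATE users SET active = 0 WHERE id = ?", (42,))`, while the same statement without the WHERE raises before touching the database.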

There were others but I can’t remember them all

-

> dockerized gitea stops working 502,

> other gitea with same config works just fine

> is the same config the issue? maybe the network names can't be the same?

> no

> any logs from the reverse proxy?

> no

> does it return anything at all on that port?

> no

> any logs inside the container?

> no

> maybe it logs to the wrong file?

> no others exist

> try to force custom log levels

> ignored

> try to kill the running pid

> it instantly restarts

> try to run a new instance with specifying the new config

> ignores config

> check if theres anything even listening

> nothing is listening on that port, but is listening in the other working gitea container

> try to destroy the container and force a fresh container

> still the same issue

> maybe the recent docker update broke it? try to make a new one and move only necessary

> mkdir gitea2

> all files seem necessary

> guess I'll try to move the same folder here

> it works

> it is exactly the same files as in gitea1, just that the folder name is different

-

Are you using socat?

Any interesting use case you would like to share?

I am using it to create fake / proxy docker containers for network testing.

-

I've found and fixed any kind of "bad bug" I can think of over my career from allowing negative financial transfers to weird platform specific behaviour, here are a few of the more interesting ones that come to mind...

#1 - Most expensive lesson learned

Almost 10 years ago (while learning to code) I wrote a loyalty card system that ended up going national. Fast forward 2 years and by some miracle the system still worked and had services running on 500+ POS servers in large retail stores uploading thousands of transactions each second - due to this increased traffic to stay ahead of any trouble we decided to add a loadbalancer to our backend.

This was simply a matter of re-assigning the IP and would cause 10-15 minutes of downtime (for the first time ever), we made the switch and everything seemed perfect. Too perfect...

After 10 minutes every phone in the office started going berserk - calls were coming in about store servers irreparably crashing all over the country taking all the tills offline and forcing them to close doors midday. It was bad and we couldn't conceive how it could possibly be us or our software to blame.

Turns out we made the local service write any web service errors to a log file upon failure for debugging purposes before retrying - a perfectly sensible thing to do if I hadn't forgotten to check the size of or clear the log file. In about 15 minutes of downtime each store's error log proceeded to grow and consume every available byte of HD space before crashing Windows.
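The usual way to avoid this is to cap the log's size so a retry loop can never fill the disk. The original service wasn't written in Python, so the following is only a sketch of the idea using the standard library's `RotatingFileHandler`; the function name, the 1 MB cap and the three backups are assumptions for illustration.

```python
import logging
from logging.handlers import RotatingFileHandler


def make_error_logger(path, max_bytes=1_000_000, backups=3):
    """Return a logger whose files on disk stay below (backups + 1) * max_bytes in total."""
    logger = logging.getLogger(f"pos-upload-{path}")  # hypothetical logger name
    logger.setLevel(logging.ERROR)
    logger.propagate = False  # keep entries out of the root logger
    # When the current file would pass max_bytes it is rotated to path.1,
    # path.2, ... and the oldest backup is deleted.
    handler = RotatingFileHandler(path, maxBytes=max_bytes, backupCount=backups)
    handler.setFormatter(logging.Formatter("%(asctime)s %(message)s"))
    logger.addHandler(handler)
    return logger
```

With a cap like this, 15 minutes of failed uploads would cost a few megabytes of old log lines instead of the whole drive.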

#2 - Hardest to find

This was a true "Nessie" bug.. We had a single codebase powering a few hundred sites. Every now and then at some point the web server would spontaneously die and vomit a bunch of sql statements and sensitive data back to the user causing huge concern but I could never remotely replicate the behaviour - until 4 years later it happened to one of our support staff and I could pull out their network & session info.

Turns out years back when the server was first setup each domain was added as an individual "Site" on IIS but shared the same root directory and hence the same session path. It would have remained unnoticed if we had not grown but as our traffic increased every so often 2 users of different sites would end up sharing a session id causing the server to promptly implode on itself.

#3 - Most elegant fix

Same bastard IIS server as #2. The codebase was the most insecure, unstable travesty I've ever worked with - SQL injection vulns in EVERY URL, SQL statements stored in COOKIES... this thing was irreparably fucked up but had to stay online until it could be replaced. Basically every other day it got hit by bots and ended up sending bluepill spam or mining shitcoin, and I would simply delete the instance and recreate it in a semi un-compromised state, which was an acceptable solution for the business for uptime... until we were DDoS'ed for 5 days straight.

My hands were tied and there was no way to mitigate it except for stopping individual sites as they came under attack and starting them after it subsided... (for some reason they seemed to be targeting by domain instead of IP). After 3 days of doing this manually I was given the go-ahead to use any resources necessary to make it stop, and especially since it was IIS6 I had no fucking clue where to start.

So I stuck to what I knew and deployed a $5 VM running an Nginx reverse proxy with heavy caching and rate limiting, linked to a custom fail2ban plugin, in front of the insecure server. The attacks died instantly, the server sped up 10x and was never compromised by bots again (presumably since they got back a Linux user agent). To this day I marvel at this miracle $5 fix.1 -
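To give a rough idea of what per-client rate limiting does, here is a small token-bucket sketch in Python. It is not how Nginx implements its limiter and not the config from the rant; the class name, parameters and the injectable `clock` are assumptions made so the idea can be shown (and tested) in a few lines.

```python
import time


class TokenBucket:
    """Allow `rate` requests per second per client, with bursts up to `burst`."""

    def __init__(self, rate, burst, clock=time.monotonic):
        self.rate = rate      # tokens refilled per second
        self.burst = burst    # maximum tokens a client can accumulate
        self.clock = clock    # injectable so tests can control time
        self.state = {}       # client key -> (tokens, last_seen)

    def allow(self, client):
        now = self.clock()
        tokens, last = self.state.get(client, (self.burst, now))
        # Refill in proportion to the time elapsed, capped at the burst size.
        tokens = min(self.burst, tokens + (now - last) * self.rate)
        if tokens >= 1:
            self.state[client] = (tokens - 1, now)
            return True
        self.state[client] = (tokens, now)
        return False
```

A proxy would call `allow(client_ip)` per request and answer with an error (or drop the connection) when it returns False; clients that keep tripping the limit are the ones a ban list would then pick up.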

My school just tried to hinder my revision for finals now. They've denied me access just today of SSHing into my home computer. Vim & a filesystem is soo much better than pen and paper.

So I went up to the sysadmin about this. His response: "We're not allowing it any more". That's it - no reason. Now let's just hope that the sysadmin was dumb enough to only block port 22, not my IP address, so I can just pick another port to expose at home. To be honest, I was surprised that he even knew what SSH was. I mean, sure, they're hired as sysadmins, so they should probably know that stuff, but the sysadmins in my school are fucking brain dead.

For one, they used to block Google, and every other HTTPS site on their WiFi network because of an invalid certificate. Now it's even more difficult to access google as you need to know the proxy settings.

They switched over to forcing me to remote desktop to access my files at home, instead of the old, faster, better shared web folder (Windows server 2012 please help).

But the worst of it includes apparently having no password on their SQL server, STORING FUCKING PASSWORDS IN PLAIN TEXT allowing someone to hijack my session, and just leaving a file unprotected with a shit load of people's names, parents, and home addresses. That's some super sketchy illegal shit.

So if you sysadmins happen to be reading this on devRant, INSTEAD OF WASTING YOUR FUCKING TIME BLOCKING MORE WEBSITES THAN THERE ARE LIVING HUMANS, HOW ABOUT TRY UPPING YOUR SECURITY, PASSWORDS LIKE "", "", and "gryph0n" ARE SHIT - MAKE IT BETTER SO US STUDENTS CAN ACTUALLY BROWSE MORE FREELY - I THINK I WANT TO PASS, NOT HAVE EVERY OTHER THING BLOCKED.

Thankfully I'm leaving this school in 3 weeks after my last exam. Sure, I could stay on with this "highly reputable" school, but I don't want to be fucking lied to about computer studies, I don't want to have to workaround your shitty methods of blocking. As far as I can tell, half of the reputation is from cheating. The students and sysadmins shouldn't have to have an arms race between circumventing restrictions and blocking those circumventions. Just make your shit work for once.

**On second thought, actually keep it like that. Most of the people I see in the school are c***s anyway - they deserve to have half of everything they try to do censored. I won't be around to care soon.**2 -

Switched to DuckDuckGo, because Google thought it would be nice to ban the proxy IP of our company from searching (because, you know, many requests) and put us behind these captcha monstrosities. I don't want to captcha myself out of every query I have for goddamn 2 minutes with slow ass fading images.

Turns out, I like their service even more.

9 -

*describes problem that a system doesn't work behind an nginx proxy, routing to another nginx instance*

*some random "expert" jumps in*

hurrdurr: It works behind nginx proxy, with APACHE, I don't even get why you would want to run nginx behind nginx omg9 -

Boss calls: "Can you give me more bandwidth?"

Me: "I can, but the other coworkers will have issues"

Boss: "Doesn't matter, and please, lift up the proxy too"

Me: "I am sorry, but I can't, that could compromise our security"

Boss: "I am giving you an order..."

Me: "Ok then..."

Me: *proceeds to give boss more bandwidth and lifts up proxy (all is lost now)*

I go to see what the boss is doing with the bandwidth...he was downloading League of Legends on his personal notebook...

TL;DR: Boss asks to put company at risk for the sake of a game...2 -

Trying to learn some golang after a break.

Made http / https transparent proxy for personal project.

Mind: You need to add configuration file with domains you allow traffic and block everything else using list of regex.

Me: Ok I can do it, 4 hours later ok done

Mind: Why not make it differently by making list of url you can block and test this shit on fucking ads and stop using adblock that downloads content.

Me: ok that will be handy I can watch websites faster and drop traffic I don’t want to.

Funny fact, it works I broke analytics, logging, quantum shit fucks and even youtube plays ok.

Go is awesome for networking stuff lol.12 -
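The block-list idea above boils down to matching each requested hostname against a list of regexes before forwarding. A minimal sketch of just that check, in Python and not the poster's Go code; the patterns and the function name are made up for illustration, since the rant doesn't list the actual rules.

```python
import re

# Hypothetical patterns; the rant does not say which rules were used.
BLOCK_PATTERNS = [r"(^|\.)ads?\.", r"analytics", r"(^|\.)tracker\."]
BLOCKED = [re.compile(p, re.IGNORECASE) for p in BLOCK_PATTERNS]


def is_blocked(host):
    """Return True if the hostname matches any block pattern."""
    return any(p.search(host) for p in BLOCKED)
```

A proxy would call `is_blocked(host)` on the CONNECT target or Host header and refuse the request on a match. Anchoring patterns on a dot or the start of the name (as in the first rule) keeps `downloads.example.com` from being caught by an `ads.` rule.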

Dear Product Owners,

If you tell me how I need to architect my software again I'm going to ask you to provide a network topology of the architecture you want me to build.

I'll also need you to request the new servers, work with the ops teams to setup credentials, provision the NAT, register the domains and document the routes that the proxy will need to use.

then I'll need you to hook the repo up to our non-existent pipeline so that I can make sure I won't do all that testing I already can't do.

I hope you're paying attention, because that framework you told me I needed to use is going to be a pain to setup correctly.

after you're done with that, please attach any documentation you shit out to the ticket you never created.

Enragedly yours,

Looking for a new job

PS: get fucked3 -

First (working) attempts at writing a proxy that rewrites live requests from the devrant app, right now it only rewrites all notifications to be unread

Though the first attempt that finally works is built with mitmproxy and its add-on scripting, the plan is to get that stuff working with e.g. goproxy instead

37 -

My Android phone is 5 years old. Everybody tells me I should buy a new one but I'm a stingy environmentalist and I refuse to buy new stuff if it is not strictly necessary.

So, for 9€ I replaced the phone battery and then I installed a custom ROM, so it looks a bit newer.

Unfortunately, it seems that something in the network configuration has been fucked up.

The phone is able to browse the Internet, but:

- WiFi hotspot is not working

- USB tethering is not working

- Bluetooth tethering is not working

- PPP over USB is not working

But, hey, I never give up, so this is my current setup:

- I installed a proxy server on the phone

- I'm using "adb forward" to forward the proxy port from the phone to my laptop

- I configured Firefox to use that proxy

And, yes, I'm using that connection to write this post. :D10 -

1. Needed to access an old SVN backup.

2. Didn't have an SVN client installed.

3. Realised GIT comes with an SVN proxy included.

Cool, I'll just quickly download the repository via SVN.

> git svn init

... git sequentially downloads each of the 1800+ revisions and applies them individually.

It's cool, I didn't want to do anything productive today anyway.3 -

Decided to throw pi-hole in a bin and found enough resources to throw together my own dns filter in node, which if not on the blacklist - proxies the request to an actual dns, which allows to filter given just a word too (because it's regex matching), "came up" with the idea after @Linuxxx wanted to make (or made?) some big hosts file via php matching and blocking to block anything that e.g. contains "google".

By resources I totally mean I would have eaten shit if it wasn't for: https://peteris.rocks/blog/... as most docs are absolute garbage regarding node-dns

54 -

Question for networking persons or persons who might know more about this than me in general.

I'm looking at setting up a server as vpn server (that part I know) which tunnels everything through multiple other vpn connections.

So let's say I've got a vps which I connect to through vpn. I then want that vps to have one or multiple connections to other vpn servers.

That way i can connect my devices to this server which routes everything to/through other services like mullvad :).

Tried it before but ended up losing ssh access until reboot 😬

Any ideas?28 -

I have a Windows machine sitting behind the TV, hooked to two controllers, set up as basically a console for the big TV. It doesn't get a lot of use, and mostly just churns out folding@home work units lately. It's connected via wired Ethernet, and it has a local static IP for the sake of simplicity.

In January, Windows Update started throwing a nonspecific error and failing. After a couple weeks I decided to look up the error, and all the recommendations I found online said to make sure several critical services were running. I did, but it appeared to make no difference.

Yesterday, I finally engaged MS support. Priyank remoted into my machine and attempted all the steps I had already tried. I just let him go, so he could get through his checklist and get to the resolution steps. Well, his checklist began and ended with those steps, and he started rather insistently telling me that I had to reinstall, and that he had to do it for me. I told him no thank you, "I know how to reinstall windows, and I'll do it when I'm ready."

In his investigation though, I did notice that he opened MS Edge and tried to load Bing to search for something. But Edge had no connection. No pages would load. I didn't take any special notice of it at the time though, because of the argument I was having with him about reinstalling. And it was no great loss to me that Edge wasn't working, because that was literally the first time it'd ever been launched on that computer.

We got off the phone and I gave him top marks in the CS survey that was sent, as it appeared there was nothing he could do. It wasn't until a couple hours later that I remembered the connectivity problem. I went back and checked again. Edge couldn't load anything. Firefox, the ping command, Steam, Vivaldi, parsec and RDP all worked fine. The Windows Store couldn't connect either. That was when it occurred to me that it was likely that Windows Update was just unable to reach the internet.

As I have no problem whatsoever with MS services being unable to call home, I began trying to set up an on-demand proxy for use when I want to update, and I noticed that when I fill out the proxy details in Internet Options, or in Windows 10's more windows10-ish UI for a system proxy, the "save" button didn't respond to clicks. So I looked that problem up, and saw that it depends on a service called WinHttpAutoProxySvc, which I found itself depends on something called IP Helper, which led me to the root cause of all my issues: IP Helper now depends on the DHCP Client service, which I have explicitly disabled on non-wifi Windows installs since the '90s.

Just to see, I re-enabled DHCP Client, and boom! Everything came back on. Edge, the MS Store, and Windows Update all worked. So I updated, went through a couple reboots-- because that's the name of the game with windows update --and had a fully updated machine.

It occurred to me then that this is probably how MS sends all its spy data too, and since the things I actually use work just fine, I disabled DHCP Client again. I figure that's easier than navigating an intentionally annoying menu tree of privacy options that changes and resets with every major update.

But holy shit, microsoft! How can you hinge the entire system's OS connectivity on something that not everybody uses?

6 -

This is fucking bad. I just stumbled across a database online, unencrypted plain text containing ALL details of thousands of students at my university. Full names, ID number (SSN), student numbers, address, family info, medical aid info, physical fitness reports

What do I do? I was not on any VPN or proxy when I accessed it19 -

Fucking IT and their self signed corporate proxy SSL bullshit getting in the way of anything that needs to verify SSL requests,

Fuck you for making my day a slow and miserable day and having to resort to forcing rest apis and SDKs to work over HTTP instead, all in the name of “Security”.2 -

Might be nothing for others, but I finally published my Vue website with the following setup:

1. Vue inside docker

2. Nodejs API inside docker

3. MongoDB inside docker

4. Nginx as reverse proxy

5. Let's Encrypt

6. NO I WILL NOT SHARE THE LINK, don't want to be hacked lol and it is for personal use only.

But I'd love to thank devRant members who have helped me reach this point, two months ago I was a complete noob in Vue and a beginner in NodeJs services, now I have my own todo website customized for my needs.

Thank you :) 26

26 -

So I manage multiple VPS's (including multiple on a dedicated server) and I set up a few proxy servers last week. Ordered another one yesterday to run as VPN server and I thought like 'hey, let's disable password based login for security!'. So I disabled that but the key login didn't seem to work completely yet. I did see a 'console' icon/title in the control panel at the host's site and I've seen/used those before so I thought that as the other ones I've used before all provided a web based console, I'd be fine! So le me disabled password based login and indeed, the key based login did not work yet. No panic, let's go to the web interface and click the console button!

*clicks console button*

*New window launches.....*

I thought I would get a console window.

Nope.

The window contained temporary login details for my VPS... guess what... YES, FUCKING PASSWORD BASED. AND WHO JUST DISABLED THE FUCKING PASSWORD BASED LOGIN!?!

WHO THOUGHT IT WOULD BE A GOOD IDEA TO IMPLEMENT THIS MOTHERFUCKING GOD?!?

FUUUUUUUUUUUUUUUUUUUUUUU.3 -

TL;DR : do we need a read-only git proxy

Guys, I just thought about something and this potential gitpocalypse.

There is no doubt anymore that regardless of Microsoft's decisions about Github, some projects will or already have migrated to the competition.

I'm thinking : some projects use the git link to fetch the code. If a dependency gets migrated, it won't be updated anymore, or worse, if the previous repo gets deleted, it can break the project.

Hence my idea : create some repository facade to any public git repository (regardless of their actual location).

Instead of using github.com/any/thing.git, we could use opensourcegit.com/any/thing.git. (fake url for the sake of the example).

It would redirect to the right repository (for public read only), and the owner could change the location of the actual repository in case of a migration.

What do you think ? If I get enough ++'s, I'll create a git repo about this.6 -

I plan on making a proxy for my home network. Whenever you make a Google search, it will search it on duckduckgo and return the same results, but look as if it were google. Will people notice the difference?30

-

Hi lil puppies what's your problem?

*proxy vomits*

Have you eaten something wrong....

*proxy happily eats requests and answers correctly*

Hm... Seems like you are...

*proxy vomits dozen of requests at once*

... Not okay.

Ok.... What did you get fed you lil hellspawn.

TLS handshake error.

Thousands. Of. TLS. Handshake. Errors.

*checking autonomous system information*

Yeah... Requests come from same IP or AS. Someone is actively bombing TLS requests on the TLS terminator.

Wrong / outdated TLS requests.

Let's block the IP addresses....

*Pats HAProxy on the head*

*Gets more vomit as a thank you no sir*

I've now added a list of roughly 320 IP adresses in 4 h to an actively running HAProxy in INet as some Chinese fuckers seemingly find it funny to DDOS with TLS 1.0... or Invalid HTTP Requests... Or Upgrade Headers...

Seriously. I want a fucking weekend you bastards. Shove your communism up your arse if you wanna have some illegal fun. ;)11 -

*Writes Voting platform*

*Uses ips to stop duplicate voting*

*Notices how lots of the IPS are similar*

*investigates*

*Traces IP*

London? Cloudflare?

Oh shit. Cloudflare HTTP proxy...

fail.5 -
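The usual way around this is to read the visitor's address from the header the proxy adds instead of the TCP peer address. Cloudflare sends it as `CF-Connecting-IP`. A minimal sketch, assuming the headers arrive as a plain dict keyed exactly like that; the function name and the trusted range below are placeholders (a documentation-only network), so substitute the ranges your proxy actually publishes.

```python
import ipaddress

# Placeholder range (TEST-NET-3); replace with the proxy's published ranges.
TRUSTED_PROXIES = [ipaddress.ip_network("203.0.113.0/24")]


def client_ip(peer_ip, headers):
    """Return the voter's IP, trusting CF-Connecting-IP only from known proxies."""
    peer = ipaddress.ip_address(peer_ip)
    if any(peer in net for net in TRUSTED_PROXIES):
        forwarded = headers.get("CF-Connecting-IP")
        if forwarded:
            try:
                return str(ipaddress.ip_address(forwarded.strip()))
            except ValueError:
                pass  # malformed header: fall back to the peer address
    return str(peer)
```

Only honouring the header when the connection really comes from the proxy matters here: otherwise anyone can send their own `CF-Connecting-IP` value and vote as often as they like.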

My school has a proxy to block games and some other sites. I was thinking of ways to get around, when I remembered, "I don't have to code anything, I can use google translate!"7

-

Yesterday evening I began working on an SSL proxying system for dynamic domain names using Let's Encrypt. I finished just a few hours ago and it's working flawlessly!3

-

Weather is beautiful, sun is shining, I am feeling mischievous. Shall I block stackoverflow on our proxy on Monday?2

-

A conversation with my dear sister...

She: Hey Davide, why does this message appear?

Message of youtube: "This video is not available in your country"

Me: It means that whoever uploaded the video wants to reproduce it only in the country chosen by him during the upload.

She: Ah, but how can I do to see it?

Me: You have to go through a proxy. Wait a minute... I arrive...

She: But using the incognito mode could not work?

Me: No 😑😑

Me (thinking): No please... no... please... what was the question? No...

I like you anyway ❤3 -

Network security at its best at my school.

So firstly our school has only one WiFi AP in the whole building and you can only access the Internet from there or from their PCs, which, just like the AP, have restricted internet through McAfee Web Gateway, even though they didn't even restrict shutting down computers remotely with shutdown -i.

The next stupid thing is that cmd is disabled but PowerShell isn't, and you can execute cmd commands with batch files.

But back to internet access: the McAfee proxy is permanently configured on these PCs and you don't have admin rights to change it.

Although this can be bypassed by basically everyone because everyone knows one or two teacher accounts, it's still restricted, right.

So I thought I could try to get around it. My first few tries failed until I found out that they apparently have a MAC address whitelist for their LAN.

Then I just copied the MAC address of one of their ARM terminal PCs and set up a Raspberry Pi with a MAC change at startup.

Finally I got an IP via normal DHCP and internet, but port 80 was blocked, in contrast to others like 443. So I set up a TCP OpenVPN server on port 443 on a server elsewhere to mimic SSL traffic.

Then I set up my Raspberry Pi to change its MAC, connect to this VPN at startup and provide a WiFi AP with its own IP address range and internet over the VPN.

As a little extra feature I also added a script for it to act as Spotify connect speaker.

So basically I now have a raspberry pi which I can plugin into power and Ethernet and an aux cable of the always-on-speakers in every room.

My own portable 10mbit/s unrestricted AP with spotify connect speaker.

Last but not least, I learnt a great many things about networks, VPNs and so on while exploiting my school's security as a 16 year old.8 -

So as all of you web developers know. If you are stepping into the world of web development you stepping into a world of unlimited possibilities, opportunities and adventure.

The flip side is that you step into a world of unlimited choices, tools, best practices, tutorials etc.

Since even for a veteran programmer, this is a little overwhelming, I'd like to take the opportunity to ask you guys for advice.

I know that 'there is no best' and that everything 'depends on what you want to achieve'. So how about just say the pro's and cons or when to use and when not to use. Or why you prefer one over another. Everything is allowed! :D

Maybe it will help others too. Start a nice, professional discussion:)

These are the parts I'd like advice about:

- frontend: what frameworks, libraries

- backend: language, framework, good practice

- server: OS, proxy (nginx, Apache, passenger), extra tips (like don't use root user)

- extras: git, GitHub, docker, anything

Thanks in advance everyone willing to help!:)

Also, if you only know frontend or backend. No worries, just tell me about your specialism!6 -

So, there was an art student yesterday at my dorm complaining about free speech etc. She told me that they were trying to bring the school's proxy down.

I was pretty impressed because it's an art student!

She then proceeded to tell me she had downloaded kali linux and was learning html...3 -

Using mongodb for one product

A colleague was experimenting with elastic search (I think it was).

It installed a proxy around the collection to get all events for the external search storage.

Worked well, but it was just a test so once done we removed it

But thats where it got scary.

When we removed the proxy through the search dashboard it dropped the underlying collection of live data!!!

A collection it did not create.

Hows that for bad UI.

Always experiment on a separate db server. -

I just got my Python project working on my new work PC!!! It took all morning 😂😂😂😂😂

I had to basically hack my company so I could do my job.

More specifically, I had to install a proxy server so Python, and other CLI tools, could access the internet via our company's NTLM/web proxy server.... After some IT morons reconfigured it... without testing or providing us a way to continue using it...1 -

Today the corporate proxy decided to flake out on me. Every single external site was blocked.

I was shown a very helpful page informing me the site I wanted to visit was blocked. If I had legit need to access the site or believe the site was blocked in error I could contact IT via a helpful link.

And yeah, the IT support site was blocked by the proxy too.1 -

>Asks client if the proxy can use self-signed cert

>Client agrees, no problem

>Deploys

>Client complains about "an error they're getting"

>The error: "Error in connection establishment: net::ERR_CERT_AUTHORITY_INVALID"

:|

Am I a joke to you? Or am I just talking to a brick wall over there?7 -

#include <rant>

Using angry standard;

Int main()

{

cout << "So my mom recently started "exploring the web". I'm sure you already know where this is going; she ended up signing up for a free trial of some diet pills with her credit card on some sketchy website. The website never sent any product but attempted to charge her card over $300 multiple times. My mom's bank noticed and froze the account. She has now opened an investigation with the bank's fraud department and is awaiting response. I took the liberty of running a whois lookup and found the company's website is held by GoDaddy and is hiding behind Domains by Proxy (GoDaddy's sysadmin hider). I'm angry that she's in this situation but I have no idea of how to uncover the real company behind the diet pills site." << endl;

Return 0;

}2 -

FYI. Copied from my FB stalked list.

Web developer roadmap 2018

Common: Git, HTTP, SSH, Data structures & Algorithms, Encoding

------

Front-end: HTML, CSS, JavaScript > ES6, NPM, React, Webpack, Responsive Web, Bootstrap

------

Back-end: PHP, Composer, Laravel > Nginx, REST, JWT, OAuth2, Docker > MariaDB, MemCached, Redis > Design Patterns, PSRs

------

DevOps: Linux, AWS, Travis-CI, Puppet/Chef, New Relic > Docker, Kubernetes > Apache, Nginx > CLI, Vim > Proxy, Firewall, LoadBalancer

------

https://github.com/kamranahmedse/...2 -

This is how I figured out how to fix my proxy settings.

Can not Netflix and chill (work) anymore.

-

A lot of things devs say are true, but this one I don't believe as much:

Many devs say that it's important for everybody to learn a bit of a basic programming language, to learn about computers and how programs are made. I disagree, I think that instead people should learn *how* things work. Ex, in my school people always use a VPN to get around the proxy. I don't care if they know basic statements, I think it's more important to learn how a VPN works. Most of them don't even know what VPN stands for. Am I the only one?3 -

I get an email about an hour before I get into work: Our website is 502'ing and our company email addresses are all spammed! I login to the server, test if static files (served separately from site) works (they do). This means that my upstream proxy'd PHP-FPM process was fucked. I killed the daemon, checked the web root for sanity, and ran it again. Then, I set up rate limiting. Who knew such a site would get hit?

Some fucking script kiddie set up a proxy, ran Scrapy behind it, and crawled our site for DDoS-able URLs - even out of forms. I say script kiddie because no real hacker would hit this site (it's minor tourism in New Jersey), and the crawler was too advanced for joe shmoe to write. You're no match for well-tuned rate-limiting, asshole!1 -

Has been a long time since I'm appreciating working with GRPC.

Amazingly fast and full-featured protocol! No complaints at all.

Although I felt something was missing...

Back in the days of HTTP, we were all given very simple tools for making requests to verify behaviours and data of any of our HTTP endpoints, tools like curl, postman, wget and so on...

This toolset gives us definitely a nice and quick way to explore our HTTP services, debug them when necessary and be efficient.

This is probably what I miss the most from HTTP.

When you want to debug a remote endpoint with GRPC, you need to actually write a client by hand (in any of the supported language) then run it.

There are alternatives in the open source world, but those want you to either configure the server to support Reflection or add a proxy in front of your services to be able to query them in a simpler way.

This is not how things work in 2018 almost 2019.

We want simple, quick and efficient tools that make our life easier and having problems more under control.

I'm a developer myself and I feel this on my skin every day. I don't want to change my server or add an infrastructure component for the simple reason of being able to query it in a simpler way!

However, this exact problem has been solved many times for HTTP and other protocols, so we should do something about our beloved GRPC.

Fine! I told myself. Let's fix this.

A few weeks later...

I'm glad to announce the first release of BloomRPC - the first GRPC client GUI that is nice and simple.

It lets you query and explore your GRPC services with just a couple of clicks without any additional modification to what you have running right now! Just install the client and start making requests.

It has been built with Electron, so it's a desktop app and it supports the 3 major platforms: Mac, Linux and Windows.

Check out the repository on GitHub: https://github.com/uw-labs/bloomrpc

This is the first step towards the goal of having a simple and efficient way of querying GRPC services!

Keep in mind that It is in its first release, so improvements will follow along with future releases.

Your feedback and contributions are very welcome.

If you have the same frustration with GRPC I hope BloomRPC will make you a bit happier!

3 -

I'll just start off with how I really feel. Fuck big corporations with their career robots and retarded practices!

Now for a story. So I work remotely for most of the time nowadays, since my company has as clients big corporations. Used to be embedded with said clients, but it became kind of painful to work with them all so I asked to be reassigned to a remote position.

Now for the retarded part: The fucking Klingons I'm working with have two tiers to their VPN, but won't let me have the full version because it would be too fucking expensive. I checked and it's fucking 50 bucks per year difference.

So for that the Klingons are making me code through a remote connection that has a "best effort" priority.

Fuck.

Anyway after 3 weeks of writing code at a 400-600ms latency I finally snap.

I try to use a proxy and it. I write one myself, gets blacklisted in 2 days.

After about another week of writing code through a fuck straw I start working on a node socket setup with 2 clients and a server that encrypts the sent data and syncs 2 folders between my workstation and the remote one.

It's been a month now and it is still working. It's not perfect, but I can at least write code without lag.

Question for you peeps: What shenanigans have you pulled to bypass shit like this?3 -

I am at a hotel and these fuckers are blocking outbound connections to port 22. They are also blocking access to any websites mentioning proxy or vpn, seriously fuck them. I managed to get a VNC connection open to one of my servers and I am now trying to set up a VPN tunnel to my servers so I can fucking do my work. >:-(6

-

Being a sysadmin can be the most frustrating thing ever, but it's worth it for those moments when you feel like an absolute ninja.

Switched from single threaded gevent server to an nginx configuration, added ssl, and setup a reverse proxy to flask socketio, all with less than 10 minutes aggregate downtime. On the prod server. \o/3 -

Just a rant... It really sucks to work with Maven at a security-paranoid financial institution enforcing NTLM proxy auth...

Also usb ports disabled... :(5 -

It took my company like 3 years to get this huge-ass client to ask us to remake their website (the client is already our client for other purposes).

The old website was hosted on their local machine, behind a proxy that was there for other 30 website servers.

The old website took like 30-40 seconds to load on a browser and had a google score of 3-6/100.

We made the new website in wordpress, since it was basically a blog and managed all of the older links to redirect to the new pages so that SEO wouldn't get affected.

We then asked the previous developers to let their domain redirect to the new one (it was like example.com => ex.example.com and now it's just example.com, so we needed them to make ex.example.com redirect to example.com).

What they did was making a redirection to the 404 page of the new website, making everything go to fuck itself.

Damn this might be the first time I despise other developers, but this move was fucking awful.

I mean, I get it, we stole your big client, but it's not our fault if we made the google score go up to 90/100 in a week just by changing server and CMS.11 -

This Russian site (https://kzclip.com) literally cloned the whole of youtube including its channels and also its recommendation logic... i wonder how those crazy bastards did it.7

-

I hate:

- Enterprise patterns

- Enterprise type programming

- Dependency hell

- Logging hell

- Proxy hell

- Debugging hell

That will be all.7 -

!rant

I've seen some rants about people complaining about websites using the 'www' subdomain, so I'd like to take this opportunity to try to explain my opinion about why sites might use it.

I used to feel the same way about not having the www subdomain. It felt like an outdated standard that serves no purpose. But I have changed my opinion...

Sometimes certain servers have other services running other than just the website, such as ssh, ftp, sql, etc., running on different ports. What if you want to use a web proxy and caching service similar to cloudflare or a cdn? Well, you can't, because they won't allow traffic to flow through to your other ports.

That's where the www subdomain comes in. Enable your caching and cdn on your www subdomain, and slap a 301 redirect from your primary domain on port 80 or 443 to the www subdomain. This still allows you to access your other services via the domain name while still gaining the benefits of using a cdn.

Now I know you could use an 'ftp' subdomain or the like, but to each their own in that regard.7 -
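To make the redirect idea above concrete, here is a minimal sketch of the apex-to-www 301 in Python. It is only an illustration: the function name `www_location`, the handler class `ApexRedirect`, and the default `https` scheme are assumptions, and in practice this would usually be a one-line rule in the web server config rather than application code.

```python
from http.server import BaseHTTPRequestHandler


def www_location(host, path, scheme="https"):
    """Build the Location header that sends a bare-domain request to www.

    `host` is the incoming Host header (a port suffix is dropped),
    `path` is the request path including any query string.
    """
    hostname = host.split(":")[0]
    if not hostname.startswith("www."):
        hostname = "www." + hostname
    return "{}://{}{}".format(scheme, hostname, path)


class ApexRedirect(BaseHTTPRequestHandler):
    """Hypothetical handler for the bare domain: answers every GET with a 301."""

    def do_GET(self):
        host = self.headers.get("Host", "example.com")
        self.send_response(301)
        self.send_header("Location", www_location(host, self.path))
        self.end_headers()
```

The handler can be served with `http.server.HTTPServer(("127.0.0.1", 8080), ApexRedirect).serve_forever()`. Only ports 80/443 on the bare domain are redirected, so SSH, FTP and friends stay reachable on the domain name itself.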

oh, I have a few mini-projects I'm proud of. Most of them are just handy utilities easing my BAU Dev/PerfEng/Ops life.

- bthread - multithreading for bash scripts: https://gitlab.com/netikras/bthread

- /dev/rant - a devRant client/device for Linux: https://gitlab.com/netikras/...

- JDBCUtil - a command-line utility to connect to any DB and run arbitrary queries using a JDBC driver: https://gitlab.com/netikras/...

- KubiCon - KuberneterInContainer - does what it says: runs kubernetes inside a container. Makes it super simple to define and extend k8s clusters in simple Dockerfiles: https://gitlab.com/netikras/KubICon

- ws2http - a stateful proxy server simplifying testing websockets - allows you to communicate with websockets using simple HTTP (think: curl, postman or even netcat (nc)): https://gitlab.com/netikras/ws2http -

I work as a .NET consultant. Currently I am at a company that blocks all social media sites and sites that look like 'em. I don't mind the social media, but YouTube is also blocked and I need my daily dose of epic music while developing. So, I set up a proxy on my server to easily bypass these blockades. Note: company policy says nothing about certain websites not being allowed, I always check this before using this trick.

Last week, a new guy joined the company and got a desk right next to me. After a lot of looking at my screens and trying stuff, he asks me, loud enough for the entire office to hear: "Hey, how are you getting on YouTube? It doesn't seem to work for me." 😫

The rest of the day, I had to explain to co-workers what a proxy is (they don't care about any tech they don't need...). And I had to explain to the pm that I was not hacking their network...

I'm not sure if I will be getting along with this new guy.... 😧1 -

How deep does the rabbit hole go?

Problem: Convert numpy array containing an audio time series to a .wav file and save on disk

Error 1:

Me: pip install "stupid package"

Console: Can't pip, behind a proxy

Me: Finds workaround after several minutes

Error 2:

Conversion works, but audio file on disk doesn't work

Encoding error: only works with an array of ints, not floats

BUT I NEED IT TO BE FLOATS

Looks for another library

scikits.audiolab <- should work

Me: pip --proxy=myproxy:port install "this shit"

Command Line *spits back huge error*

Googles error <- You need to install this package with a .whl file

Me: Downloads .whl file <- pip install "filename".whl

Command Line: ERROR: scikits.audiolab-0.11.0-cp27-cp27m-win32.whl is not a supported wheel on this platform.

Googles Error <- Need to see supported file formats

Me: python -c "import pip; print(pip.pep425tags.get_supported())"

Console: AttributeError: module 'pip' has no attribute 'pep425tags'

Googles Error <- Use another command for pip v10

Me: python -c "import pip._internal; print(pip._internal.pep425tags.get_supported())"

Console: complies

Me: pip install "filename".whl

Console: complies

Me: *spends 30 minutes to find directory where I should paste .dll file*

Finds Directory (was hidden btw), pastes file

Me: Runs .py file

Console: from version import version as _version ModuleNotFoundError: No module named 'version'

Googles Error <- Fix is: "just comment out the import statement"

Me: HAHAHAHAHAHA

Console: HAHAHAHAHA

Unfortunately this shit still didn't work after two hours of debugging, lmao fuck this7 -
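For the original problem in the rant above (float samples to a .wav on disk), one route that needs no pip install at all is to write the RIFF header by hand. The following is a minimal sketch, not what the author ended up using: it assumes mono audio with samples in [-1.0, 1.0], the function name `write_float32_wav` is made up, and a numpy array would be passed in as `array.tolist()`. It writes WAV format tag 3 (IEEE float), which some older players may refuse to open.

```python
import struct


def write_float32_wav(path, samples, sample_rate=44100):
    """Write mono float samples as a 32-bit IEEE-float WAV file."""
    samples = list(samples)
    data = struct.pack("<%df" % len(samples), *samples)

    channels, bits = 1, 32
    block_align = channels * bits // 8
    byte_rate = sample_rate * block_align

    # fmt chunk: format tag 3 = IEEE float; the trailing 0 is cbSize,
    # which non-PCM formats carry (18-byte fmt chunk).
    fmt = struct.pack("<HHIIHHH", 3, channels, sample_rate,
                      byte_rate, block_align, bits, 0)
    # fact chunk: number of sample frames, expected for non-PCM data.
    fact = struct.pack("<I", len(samples))

    body = (b"WAVE"
            + b"fmt " + struct.pack("<I", len(fmt)) + fmt
            + b"fact" + struct.pack("<I", len(fact)) + fact
            + b"data" + struct.pack("<I", len(data)) + data)

    with open(path, "wb") as fh:
        fh.write(b"RIFF" + struct.pack("<I", len(body)) + body)
```

Usage would look like `write_float32_wav("out.wav", audio.tolist(), sample_rate=22050)`, where `audio` is the hypothetical numpy array holding the time series.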

I've been working on a web accelerator proxy for two days now. I got the backend done and the extension is in the works.

The extension basically intercepts all static content and sends it to the proxy, which will happily rewrite these requests to their proxied counterparts. In my tests it is on average 1-2s faster on an image request and 10s faster on large JavaScript bundles.

However, I kinda need help with the extension (I'm not exactly proficient with extension making), so if you wanna help, the link is https://github.com/sr229/filo

The main inspiration for this is basically my shitty 3G connection and my country's likewise shitty internet situation. It's like Data saver but it works on https as well2 -

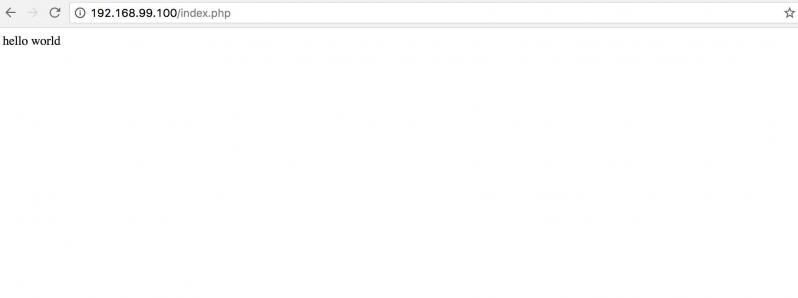

I am building a website inspired by devRant but have never built a server network before, and as I'm still a student I have no industry experience to base a design on, so I was hoping for any advice on what is important and what I have fucked up in my plan.

The attached image is my currently planned design. Blue is for the main site, and is a cluster of app servers to handle any incoming requests.

Green is a subdomain to handle images, as I figured it would help with performance to have image uploads/downloads separated from the main webpage content. It also means I can keep cache servers and app servers separated.

Pink is internal stuff for logging and backups and probably some monitoring stuff too.

Purple is databases. One is dedicated for images, that way I can easily back them up or load them to a cache server, and the other is for normal user data and posts etc.

The brown proxy in the middle is sorta an internal proxy which the servers need to authenticate with to connect to, that way I can just open the database to the internal proxy, and deny all other requests, and then I can have as many app servers as I want and as long as they authenticate with the proxy, they can access the database without me changing any firewall rules. The other 2 proxies just distribute requests between the available servers in the pool.

Any advice would be greatly appreciated! Thanks in advance :D

13 -

It's frustrating when the network guy claims Tomcat is not running, and hence that something is wrong with the application, without fucking checking the proxy settings. Wasted 2 fucking hours of my time, and in the end he looks like an idiot. Good morning.

-

The primary concept of reactive programming is great. The idea that things just naturally re-run when anything they rely on is changed is amazing. Really, I think it's the next step in programming language development and within a decade or two at least one of the top 5 programming languages will be built entirely on this principle.

BUT

Expecting every dependency to be used unconditionally is stupid. Code that checks everything it might need all the time even if a decision can be made from much less information is simply bad, inefficient code. If you want to build a list of dependencies automatically, you have to parse the source.

And I really hate that there are TONS of languages that either make the AST readable at runtime or ship with a very powerful preprocessor that could be used to analyse expressions and build dependency lists, but by its sheer popularity the language we're trying to knead into something it was never and still isn't meant to be is JavaScript.3 -
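As an illustration of the "parse the source to build the dependency list" idea in the rant above, here is a small sketch in Python, one of the languages that exposes its AST at runtime. The function name `dependencies` is made up for this example, and note the limitation: static analysis lists every name the expression could read, including branches that a given run never takes.

```python
import ast


def dependencies(expr):
    """Return the set of variable names an expression may read.

    Every ast.Name node in Load context counts, so called functions
    such as `len` show up too; a real system would filter builtins.
    """
    tree = ast.parse(expr, mode="eval")
    return {
        node.id
        for node in ast.walk(tree)
        if isinstance(node, ast.Name) and isinstance(node.ctx, ast.Load)
    }
```

For an expression like `price * qty if in_stock else 0`, this reports `price`, `qty` and `in_stock`, even though only `in_stock` is needed to decide the result when it is false.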

I spent hours trying to enable CORS on AWS Lambda through API gateway (it was supposed to be simple and Amazon had a nice tutorial) but it turns out that there's a known bug that makes Lambda Proxy Integrations not adhere to any setting in the API Gateway, you have to respond with the headers through the Lambda yourself.

Amazon now mentions this in the tutorial, but if you click "Enable CORS" in API Gateway, it'll show you green check marks and tell you that everything went fine, but you'll find that the Lambda does not respond with the CORS headers. They shouldn't even have "Enable CORS" as an option when you use their Lambda Proxy Integration.1 -
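A minimal sketch of "respond with the headers through the Lambda yourself", assuming a Python runtime behind a Lambda Proxy Integration. The function name `handler`, the wildcard origin, and the JSON body are placeholders; a real setup would likely restrict the origin and handle the `OPTIONS` preflight route as well.

```python
import json

# Placeholder CORS policy; "*" is an assumption for the sketch.
CORS_HEADERS = {
    "Access-Control-Allow-Origin": "*",
    "Access-Control-Allow-Headers": "Content-Type,Authorization",
    "Access-Control-Allow-Methods": "GET,POST,OPTIONS",
}


def handler(event, context):
    """With proxy integration the returned dict becomes the HTTP response,
    so the CORS headers have to be included here."""
    return {
        "statusCode": 200,
        "headers": dict(CORS_HEADERS),
        "body": json.dumps({"ok": True}),
    }
```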

!rant

Managed to find an advantage of IE, and it's not for downloading Firefox or Chrome.

Nah, I just discovered that you can actually add a shortcut to your taskbar on Win7 with the favicon of the website (I guess it's the favicon), and IE will open directly to it with slight color changes.

So now when I need to check if any commits were made on the repository, I have a shortcut to the website so I can check quickly o/

(Why do I use IE for that? Because Firefox and the proxy have some issues, and I had bad experiences with Chrome. ¯\_(ツ)_/¯ But IE does the small job I give it, so I don't complain)1 -

Do you know what a meat proxy is? It's when you work as a consultant for a company, and the company doesn't give you credentials to deploy, debug, or interact in any way with your code. You then have to work through the sysadmin, while telling him how to go through every single step, every git pull, every line of code to edit. Kill me8

-

So for those of you keeping track, I've become a bit of a data munger of late, something that is both interesting and somewhat frustrating.

I work with a variety of enterprise data sources. Those of you who have done enterprise work will know what I mean. Forget lovely Web APIs with proper authentication and JSON fed by well-known open source libraries. No, I've got the output from an AS/400 to deal with (For the youngsters amongst you, AS/400 is a 1980s IBM mainframe-ish operating system that originally ran on 48-bit computers). I've got EDIFACT to deal with (for the youngsters amongst you: EDIFACT is the 1980s precursor to XML. It's all cryptic codes, + delimited fields and ' delimited lines) and I've got legacy databases to massage into newer formats, all for what is laughably called my "data warehouse".

But of course, the one system that actually gives me serious problems is the most modern one. It's web-based, on internal servers. It's got all the late-noughties buzzwords in web development, such as AJAX and jQuery. And it now has a "Web Service" interface at the request of the bosses, that I have to use.

The programmers of this system have based it on that very well-known database: Intersystems Caché. This is an Object Database, and doesn't have an SQL driver by default, so I'm basically required to use this "Web Service".

Let's put aside the poor security. I basically pass a hard-coded human readable string as password in a password field in the GET parameters. This is a step up from no security, to be fair, though not much.

It's the fact that the thing lies. All the files it spits out start with that fateful string: '<?xml version="1.0" encoding="ISO-8859-1"?>' and it lies.

It's all UTF-8, which has made some of my parsers choke, when they're expecting latin-1.

But no, the real lie is the fact that IT IS NOT WELL-FORMED XML. Let alone Valid.

THERE IS NO ROOT ELEMENT!

So now, I have to waste my time writing a proxy for this "web service" that rewrites the XML encoding string on these files, and adds a root element, just so I can spit it at an XML parser. This means added infrastructure for my data munging, and more potential bugs introduced or points of failure.

Let's just say that the developers of this system don't really cope with people wanting to integrate with them. It's amazing that they manage to integrate with third parties at all...2 -
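The rewriting step of the proxy described above can be sketched in a few lines of Python. This is only a guess at the shape of such a fix, not the author's code: the function name `repair` and the wrapper tag `root` are made up, and it assumes the payload really is UTF-8 with at most one leading XML declaration.

```python
import re
import xml.etree.ElementTree as ET

# Matches the (lying) XML declaration at the start of the payload.
_DECLARATION = re.compile(rb"^\s*<\?xml[^>]*\?>")


def repair(raw, root_tag="root"):
    """Replace the declaration with a truthful UTF-8 one and wrap the
    top-level elements in a single root so the result is well-formed."""
    body = _DECLARATION.sub(b"", raw, count=1)
    tag = root_tag.encode("ascii")
    return (b'<?xml version="1.0" encoding="UTF-8"?>\n'
            + b"<" + tag + b">" + body + b"</" + tag + b">")


# Example: two top-level elements and UTF-8 bytes behind a latin-1 label.
broken = (b'<?xml version="1.0" encoding="ISO-8859-1"?>'
          b"<item>caf\xc3\xa9</item><item>tea</item>")
fixed_root = ET.fromstring(repair(broken))
```

After the repair, `fixed_root` parses with a standard XML parser and holds the original elements as children of the added root.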

Chrome 63 forces .dev domains to HTTPS via preloaded HSTS.

Well, FUCK YOU Google. Why do you even give a shit about my local proxy.13 -

"We want you to run the site"

"Ok but you don't need me - the rewritten codebase is javascript and the Python proxy is in the cloud. You can run it on any cheap web hotel. Or just unzip the app on your own desktop"

"We want you to run the site."

Loop this a few times. Can't say I didn't try to save them the money...2 -

F**king hate Windows for its insanely confusing proxy setup required for software development...

> Setup proxy in Windows network settings

> Then, setup HTTP_PROXY & HTTPS_PROXY environment variable at the system/user level.

> Followed by separate proxy settings for java, maven, docker, git, npm, bower, jspm, eclipse, VS Code, every damn IDE/Editor which downloads plugins...

> On top of everything, find out which domains do not need to go through the proxy and add them to NO_PROXY.. at each level..