
Search - "compiling"


Got semi drunk and thought "Now would be a good time to implement this feature"

*Codes for 2 hours straight without compiling once*

"Done. Good night"

The next morning:

Gets up and tries out feature

*Fixes 2 syntax errors/typos*

*Tries again*

*Feature works*

Never look at your computer when it's compiling: it can sense you, and that makes it more prone to errors.

#CompilerConspiracy

So I've got a friend learning Java, using Eclipse.

I walk in one day and see him restarting his computer. I make nothing of it. A few minutes later, he's restarting again.

I jokingly say "Windows update?"

He responds with the straightest face ever: "No, compiling code."

Apparently he thought you needed to restart the computer before compiling.

Not sure if I should be mortified or laughing my ass off.

If only it were this simple. Hi all, I'm new. I found this app while window shopping the Play Store, and after reading some of the daily posts, I think I'm gonna stay :) I wish your code the best. *returns to staring at a compiling error for the next 3 hours*

"I hate C# and Java because compiling takes forever. That's why I use JavaScript."

npm install && gulp

...9 years later...

I once wrote about 100 lines of C code in Notepad++ without compiling or testing in between.

And it had no errors and worked. 😎

@No-one asked me to make a devRant-related waifu, but I went, "Why not, if devRant WAS the waifu?"

and this was born while waiting for code to compile (again)

Coming from a C# background, where Visual Studio would warn me about errors even before compiling the program, JavaScript was quite a shock.

Code is compiling... so bored

*gets on devRant*

There are 2 minutes before my next meeting

*gets on devRant*

I don't have many friends, but the ones I have know me inside out.

I turned 18 yesterday, and what did I get for a gift? A literal 5-page C++ program that my pals lovingly wrote for me. Compiling it right now. Let's see what it's got for me.

Bored waiting for code to compile, so here is a joke someone sent me last week...

A man walks into a bar with his pet monkey. He sits down and orders a drink; meanwhile, the monkey is running around all over the place and jumps up on a pool table. He grabs the 8 ball, shoves it into his mouth and swallows it whole.

"Holy crap!" says the bartender, completely livid. He says to the man, "Did you see what your stupid monkey just did?"

"Nope. What did he do this time?" says the man.

"He just swallowed one of the balls off the pool table, whole!" says the bartender.

"Yeah, well I hope it kills him 'cause he's been driving me nuts" says the man.

After finishing his drink, the man leaves.

A few weeks later the man returns to the bar with his monkey. After he orders a drink, the monkey starts running wild around the bar again. Up on the bar, the monkey finds some peanuts. He grabs one out of the bowl, sticks it up his butt, then pulls it out and eats it. The bartender is disgusted.

"Did you see what your stupid monkey did this time?" he asks.

"What now?" responds the man.

"He stuck a peanut up his butt, then pulled it out and ate it!" says the bartender.

"Well, what do you expect?" replied the man. "Ever since he ate that pool ball he measures everything first!"

I'M AN IDIOT.

I accidentally typed the wrong command when I was compiling my C++ code with GCC, and guess what?

My .cpp file is gone. The .exe is still there, but that's useless to me right now.

It wasn't important code, just something I recently started on, but I can't believe I did something so ignorant.

Fucking pieces of shit. If I had a list of developers who add "Whoopsie daisy, tinky winky compiling stuffie" as error messages, installer steps or a checkbox in their GitHub issues, I'd break their fucking back and keep them alive, just to ram fucking burning nails into their eyelids and then blast just enough power through them to make them burn and evaporate alive.

There is no planet in this multiverse where Chrome should be using more RAM than an ENTIRE VIRTUAL MACHINE. WHILE IT'S COMPILING OPENCV!!! Seriously!?!?

The most important part of compiling is whispering "Come on, you can do it, you can do it" to the server.

Just now I was compiling a new kernel for my laptop because the last ones were from before my rootfs became LUKS-encrypted. Then I found that option about SELinux again... NSA SELinux, a MAC system that linuxxx praised earlier. Should I tell him? 😜

You know you're the only coder in your group of friends when one of them mistypes "cmakin coffee then I'll be on" and

A.) You're the only one to laugh

B.) You're wondering how the hell he's compiling coffee

C.) Lastly, why won't my coffee compile?

When working in embedded, you basically write your program, compile it and flash it on some hardware.

Compiling and flashing usually require some black magic commands with lots of parameters, so I set up two shortcuts in my terminal:

yolo to compile

swag to flash

Understandably, I keep it to myself.

"Install through npm"

"Install through gulp"

"Install through compiling"

"Install through x"

"Install through y"

WHY CAN'T I JUST SIMPLY INCLUDE THE MOTHERFUCKING THING IN THE HTML LIKE A FUCKING NORMAL PERSON?!

ALL I WANT IS TO INCLUDE A GODDAMN UI FRAMEWORK.

When I just started web development, this stuff was so fucking easy! Why did it become so motherfucking complicated to include simple shit like this?!

All I want is to start programming this motherfucker, not spend 3 hours on compiling CSS and whatnot (because I'd have to learn this bullshit first).

Mother of god, why did this become so fucking obnoxious?

I. JUST. WANT. TO. INCLUDE. TWO. FUCKING. FILES.

It's freaking 35C outside.

Guys, please close all the windows while compiling. Global warming is not a joke.

When I learnt programming, sugar was still made out of salt and hence not used in coffee.

Also, we didn't have source-level debuggers, only the "print" method. However, compiling was also slow. It was faster and more convenient to go through the program and execute the statements in one's head. This helped with understanding what code is doing just by reading it. It also kept people from trial-and-error programming, something some people fall into when they resort to single-step debugging in order to understand what their own code is even doing.

Compiling was slow because computers in general were slow, like single-digit MHz. That forced us to write efficient code. It's also why we already learnt about Big O notation at school. Starting with manual resource management helped to get a feeling for what's going on under the hood.

Compiling 2 big projects in two Visual Studio 2017 instances requires less RAM (combined) than browsing the internet. Seems legit.

Soldering on a PCB is just as stressful as programming. You solder everything, test it out, and then it doesn't work like it's designed to. It's just like compiling and getting a lot of errors. The worst part: you can't detect the errors as soon as you try it out. You have to find where exactly in this kind of mess the error is:

While compiling LLVM, you have enough time to go get a beer.

Out of the fridge.

From the supermarket.

Two towns over.

My most painful coding error?

```
#!/bin/bash
APP_PREFIX=${1}

#Clean built bin dir before re-compiling
rm -rf ${APP_PREFIX}/bin
make compile
```
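The painful part is that an empty or missing first argument turns the `rm` line into `rm -rf /bin`. Here is a minimal sketch of a safer cleanup step. It assumes the same convention of passing the prefix as the first argument. The function name `clean_bin` is hypothetical and not part of the original script.

```bash
#!/usr/bin/env bash
# Safer variant of the cleanup step (sketch; clean_bin is a hypothetical name).
set -euo pipefail

clean_bin() {
  # "${1-}" expands to an empty string (not an unbound-variable error under
  # set -u) when no argument was given.
  local app_prefix="${1-}"

  # Refuse an empty prefix: otherwise the path below would collapse to "/bin".
  if [[ -z "$app_prefix" ]]; then
    echo "clean_bin: APP_PREFIX must be a non-empty path" >&2
    return 1
  fi

  # Quoting keeps a prefix with spaces as one argument, and "--" stops rm
  # from treating a prefix that starts with "-" as an option.
  rm -rf -- "${app_prefix}/bin"
}
```

In the script itself you would then call `clean_bin "${1-}"` followed by `make compile`. With `set -e`, a rejected prefix stops the script before anything is built. The common one-line alternative is `rm -rf -- "${APP_PREFIX:?}/bin"`, which aborts when the variable is empty or unset. Neither form rejects a prefix of `/`, so a stricter script would validate the path further.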

Scariest thing I've ever experienced...

Was compiling a mod and left my laptop alone to do its thing while I went to the bathroom. A couple of minutes later I started to smell something burning.

Rushed back to my laptop and everything was ok.

Turns out, with all the coding, I forgot about my toast...

To incentivise myself to get fit, I decided to do push-ups/sit-ups whilst my code compiles

All that happened, though, is that I now spend a lot more time making sure my code compiles quickly 😅

On the twelfth day of Christmas

programming gave to me:

Twelve bugs in public branch

Eleven errors to fix

Ten freaking warnings

Nine Windows Updates

Eight blue screens of death

Seven minutes of compiling

Six servers down

Five Android Studio crashes

Four angry stackoverflow devs

Three kernel panics

Two burned graphics cards

and one broken-dick piece of shit JavaScript framework

I got ranted at by our teacher in the algorithms lab because I was compiling the code in the terminal and not using Turbo C (there wasn't any IDE installed on the system) 😃

"I couldn't fix the test so I commented it out."

"I removed build timeouts because our jobs started taking that long."

Next I'm waiting for "Compiling the code is good enough, we don't need tests" before I lose it...

Here it is: get MythTV up and running.

In one corner, building from source, the granddaddy Debian!

In the other, prebuilt and ready to download, the meek but feisty Xubuntu!

Debian gets an early start, knowing that compiling on a single core VM won't break any records, and sends the compiler to work with a deft make command!

Xubuntu, relying on its user friendly nature, gets up and running quickly and starts the download. This is where the high-bandwidth internet really works in her favor!

Debian is still compiling as Xubuntu zooms past, and is ready to run!

MythTV backend setup leads her down a few dark alleys, such as asking where to put directories and then not making them, but she comes out fine!

Oh no! After choosing a country and language, the frontend commits suicide with no error message! A huge blow to Xubuntu, as this will take hours to diagnose!

Meanwhile, Debian sits in his corner, quietly chugging away on millions of lines of C++...

Xubuntu looks lost... And Debian is finished compiling! He's ready to install!

Who will win? Stay tuned to find out!

On the first day of Christmas my PM sent to me

There's a bug in your B-tree

On the second day of Christmas my PM sent to me

two threads deadlocked

and a bug in your B-tree

On the third day of Christmas my PM sent to me

Three servers crashing

two threads deadlocked

and a bug in my B-tree

On the fourth day of Christmas my PM sent to me

Four clients angry

Three servers crashing

two threads deadlocked

and a bug in my B-tree

On the fifth day of Christmas my PM sent to me

Five SCRUM meetings

Four clients angry

Three servers crashing

two threads deadlocked

and a bug in my B-tree

On the sixth day of Christmas my PM sent to me

Six deadlines waiting

Five SCRUM meetings

Four clients angry

Three servers crashing

two threads deadlocked

and a bug in my B-tree

On the seventh day of Christmas my PM sent to me

Seven machines learning

Six deadlines waiting

Five SCRUM meetings

Four clients angry

Three servers crashing

two threads deadlocked

and a bug in my B-tree

On the eighth day of Christmas my PM sent to me

Eight repos compiling

Seven machines learning

Six deadlines waiting

Five SCRUM meetings

Four clients angry

Three servers crashing

two threads deadlocked

and a bug in my B-tree

On the ninth day of Christmas my PM sent to me

Nine interns asking

Eight repos compiling

Seven machines learning

Six deadlines waiting

Five SCRUM meetings

Four clients angry

Three servers crashing

two threads deadlocked

and a bug in my B-tree

On the tenth day of Christmas my PM sent to me

Ten Features requested

Nine interns asking

Eight repos compiling

Seven machines learning

Six deadlines waiting

Five SCRUM meetings

Four clients angry

Three servers crashing

two threads deadlocked

and a bug in my B-tree

On the eleventh day of Christmas my PM sent to me

Eleven products deploying

Ten Features requested

Nine interns asking

Eight repos compiling

Seven machines learning

Six deadlines waiting

Five SCRUM meetings

Four clients angry

Three servers crashing

two threads deadlocked

and a bug in my B-tree

On the twelfth day of Christmas my PM sent to me

Twelve DBs updating

Eleven products deploying

Ten Features requested

Nine interns asking

Eight repos compiling

Seven machines learning

Six deadlines waiting

Five SCRUM meetings

Four clients angry

Three servers crashing

two threads deadlocked

and a bug in my B-tree

WTF MICROSOFT.

I was compiling an app on Windows 10, went away for a few minutes to grab a coffee, and then I saw a blue screen with

Updating Windows.

Nobody asked you to do it. You piece of shit.

And that's not all, it even restarted without my permission.

Seriously, fuck you, Microsoft.

What the fuck, Visual Studio? Yesterday my app was compiling successfully. I DID NOT CHANGE A SINGLE FUCKING THING BEFORE I LEFT THE OFFICE. Today it refused to compile. It didn't even show the source of the error, it just said a reference was missing.

- Clean solution, rebuild. Compile error

- Close VS re-open project. Compile error.

- Restart computer. Compile error.

- Close VS re-open project. Compiles successfully.

WHAT THE FUCK JUST HAPPENED? I wouldn't believe it if it hadn't happened to me. Is this shit compiling just by luck or what?

Current status: I should have gone to bed hours ago and there are other things I need to do, but right now I'm compiling FFmpeg in a Docker container

Ah you think debugging is your ally? You merely adopted the debug. I was born in it, molded by it. I didn't see the compiling until I was already a man, by then it was nothing to me but blinding!

I used to program in C++, but now I'm programming JavaScript, so does Babel transpiling still count? And Sass compiling?

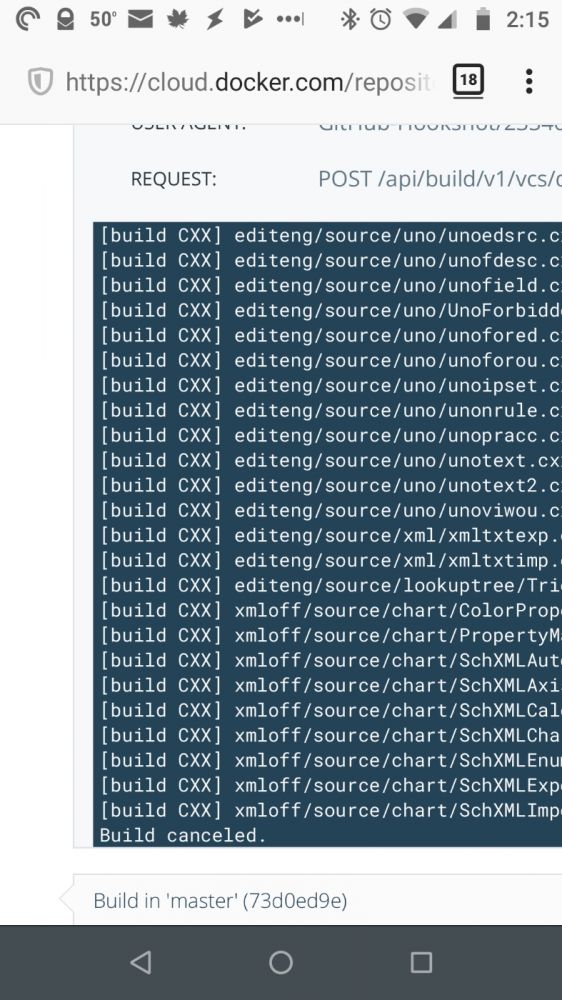

Compiling LibreOffice in DockerHub failed. Good thing it took my computer only 6 hours to do it locally.


I FUCKING HATE it when Windows 10 reboots without my permission. I left stuff compiling in VMware and now I find out that it rebooted. Fuck you, Microsoft

Happiness is removing all the compiler warnings from a project that has half a million lines of code

*peace*

Compiled a small style change in a LESS file. Whole page is broken. Realized the holiday replacement had made all their changes in the main CSS file, which is generated by compiling the LESS.

Just started as a remote dev and I found that it's IMPOSSIBLE to work from home.

Get annoyed from something not compiling/errors? Go play some video games two feet away. Nothing going your way? Go lie down on the bed behind you.

But for some reason I can work from home way better at night.

Any other tips for working remotely?

Android dev rant:

>Fixing some code

>Compile code

>Take a walk while waiting for Gradle to finish compiling

>Almost 10 mins in, notice a typo in the code while Gradle is still running

>Fixing some code

>Compile code

>Take a... Wait a minute

You know web development really took a wrong turn when a complex Java project compiles way faster than a simple JavaScript one.

Not to mention that JavaScript is an interpreted programming language.

I feel like Unreal Engine for Linux is obsessed with compiling stuff:

Want to get Unreal Engine? Here's the source code, go compile it yourself.

Installed that, let's launch it. Compiles more stuff.

Now you're on the project selection screen, good job! Imma compile these shaders though.

Want to make a project with C++? That means I'll have to compile some more stuff...

If only my CPU wasn't a potato it wouldn't be so bad.

🎶Lemon Tree🎶

I wonder why,

I wonder how,

This stupid piece of code actually works right now.

And all that I could see, it's actually compiling...

(Happens all the time)

!rant

The AH-MAZ-ING feeling you get when you write 200 lines of code without compiling and everything just works as planned!!!

YAY!

Compiling software on Linux:

Python interpreter? Easy peasy, just some dependencies here and there. Make does a good job.

Linux kernel? Piece of cake, 20 years of development freshly served on your machine after one hour of compiling (I have a pretty powerful computer).

TensorFlow? Fuck this shit, I'm outta here.

What is your story with self-built software? Which piece of code has the most terrible dependency hell?

❤️ Swift ❤️

Compiling fails due to many inter-dependent errors (think database deadlocks).

1 - Comment the code that produces the "locking" error.

=> Code compiles.

2 - Uncomment the code that produces the "locking" error.

=> Code compiles.

My peepee hard

1. IDE connected to your brain that does the coding according to your thoughts.

2. Compiling and deployment for your applications take little to no time.

3. Not having to wipe your ass 50 times to make sure it's all clean.

One of my professors made us submit handwritten code. I mean writing, compiling and executing it on the machine, and then writing it out by hand on paper to submit.

It was so irritating.

Why is java called java?

Because by the end of the day you'll need a strong cup of coffee to retain your will to live.

I'm going to be that guy... A lot of these rants are about code compiling the first time... Throwing away code you wrote because you didn't need it... Getting in the zone and writing a billion lines before you compile... Am I the only freaking person here who does TDD? My rant is: wake up, people! People evangelize about it because it fucking works!

I spent an hour and a half googling and compiling my code because I typed `#ifdef` instead of `#ifndef` in one of my header files.

Can you C the difference?

Freecad isn't open source software!

If it is impossible to get something compiled, it can't be open source.

When you can't compile it, all that is left is to use a binary.

If there is only a binary, it isn't open source.

Seriously: if you are participating in an open source project, please make sure that compiling from source is a viable option for the generic Gentoo user. Thank you.

I just fucking hate compiling this fucking C# (ASP.NET) code and then transferring it to the staging server. Fuck you..... no no no, listen to me, fuck you and fuck this shit.

C++ template errors.

Probably enough said... But a couple of years ago I was interviewing, and each interview included "Do you know about templates?" I always answered, "Enough to determine what is wrong when there is a compiler error." I always got some form of a chuckle or "wow" and NEVER a follow-up question about templates. I received job offers from each interview; they all apparently needed someone who could help get their template code compiling?

Who was working for 20 minutes without the TypeScript compiler running and wondering why nothing was changing? This guy 🙋🏻♂️

>compiling a toolchain for my phone

>compiling gcc

>segfault

wtf, I have like 8GB RAM and 32GB swap on an SSD

>rerun make w/o clean

>continues, no segfault

ok?

>segfault a few minutes later

FUCK

rinse and repeat like 30 times

why

It’s my “duck” in a box!

https://g.co/kgs/dbFrcE

Would have paid extra for the DevRant devs to sign my duck 😂 @dfox and @trogus.

Thanks for keeping new and exciting things compiling in the community. I think I speak for everyone when I say that we’re lucky and proud to be a part of it!

What are you doing crashing on me Windows?

All I was doing was running a flight simulation, compiling a build, and doing a regex search over 6 directories. Try to open one little document and it all goes to hell.

Surely you can handle that Windows?

Apparently not.

Worst tech ever? I'll say it, but don't throw rocks before I explain. Xcode. Why? Try using it on a 2008 MacBook, and after that, compare it to Android Studio on the same machine. But at least I can just say "Compiling" to the PM and play games on my phone. And even "I don't give a fuck about how urgent it is. The IPA build takes 15 minutes."

Project due next Wednesday, spends all day Friday drinking beer and playing Mario Kart Double Dash....

My code's compiling.

If only compiling a medium-to-big C/C++ library weren't still a fucking nightmare in 2016, with all those slightly incompatible build tools that fail miserably on your machine...

I was finishing compiling the Linux kernel in tty mode for a school project on the subway, because the class was over and it hadn't finished. A guy came up to me and said, "I used to do that, but on a Mac." Then he left. I was like: wtf do you think I'm doing? You don't compile the Linux kernel on a fucking Mac. And why would you mention it was a Mac anyway? I guess he just did ls -al on his Mac in tty.

So I decided today was a good day to manually compile Python 3.6 for my Raspberry Pi, as it hasn't yet been added to any supported repos. Site says: this will take approximately 30 minutes.

3 hours later: "starting unit testing"

Awful idea of the day: have a programming language with no comments, but with regex preprocessor macros. Use macros to define your own comment syntax and strip comments before compiling.

`#define /\/\/.*//`

Learnt a very important lesson today..

To add some context; I'm currently in my second semester of uni studying a Bachelor of Computer Science (Advanced), and started the year with no experience with any language.

Up until recently all my practical work has been guided by context sheets, now I have some freedom in what my program does.

Because of the very small projects earlier in the year I have built a habit of writing the whole program before compiling anything. This worked fine since the programs were small and at most only a few errors would be present.

Cut back to today, and I had been writing a program for a bigger assignment. After an hour or so of writing I began thinking I should probably test everything up to this point. I ignored it...

Fast forward 4 hours to having "completed" writing the full program. I knew by this point I was taking a massive risk by not testing earlier.

Lo and behold, I try compiling everything for the first time and countless errors prevent the program from compiling. I tried for quite some time to fix the errors, but more kept appearing as each one was fixed.

I'm now left with no time to fix the program before the deadline with no one but myself to blame.

Lesson learnt :/

Just like compiling code: it took so long, but it arrived without errors. They sent these awesome stickers even though I live in Indonesia. Thank you, @devRantApp

Writing a simple terminal input/output program (Hello, what's your name?, hi) in D.

Compiling in D using standard lib: 6.1M

Compiling in D with inline assembly, no standard lib or libc, using syscalls: 1048 bytes!

I'm still freaking out a little.

How I always feel about Xcode when compiling Swift code, opening storyboard files, or just in general.

We need to make some of these stickers. ++


I've just wasted 2 hours fixing an issue with a GitLab CI YAML definition, all because of a single colon:

`echo "Detected changes: compiling new locks"`

I swear to god, whoever thought it was a good idea to use YAML for CI scripts should rot in hell.

When syncing node_modules via NFS to Vagrant, it's an even better excuse than "it's compiling": it literally takes 2-3 hours, and the SSD request has been pending for over a month now. Looks like nobody sees it as an issue...

You know something is wrong when chocolate-doom, a full game (actually 4) with a custom software rendering engine, compiles in 12 s, while your stupid web app with a few input fields and backend calls takes over 1 min to build 😒😒

So I made my first Rust program with just a quick look at the docs on for loops. 20 minutes from nothing to exe. Just FizzBuzz, but still. Took me 4 hours to get C++ compiling.

In the lab:

"Look! I have compiled and that gave me no errors! On the first try!"

So I look closer and

"Dude, ehm.., you are compiling the wrong file..."

Then he tries to compile the right class and the compiler returns errors on errors

"You know what? I hate you."

Never laughed that loud

I wrote a little webserver console app that would allow me to test another project without bypassing the DRM I wrote for it. Unfortunately, after compiling, the console app immediately closes on startup, not being at all thread safe. You might say, the worst tool I've ever used is one of my own creation...


You will get far more rejections than acceptance. A lot of the time it has more to do with the interviewer and not the candidate (assuming the candidate is a genuine hard worker). The job search process is similar in this regard to finding a mate or compiling your code.

Keep moving forward!

My mobile dev teacher was teaching us React Native. I got an error while compiling (I'd missed a try/catch in a function). My teacher just looked at my code, I heard her say to herself "no semicolon missing", and then she said to me, "I don't know what's wrong..."

Like... are you seriously a teacher?

Compiling on Windows feels like an Internet Browsing Simulator.

Really shows how incredible the central repository and system package management systems of Unix are.

Now... back to downloading the remainder of the required libraries.

Suppose you're at work, you have this monolithic project, and you hit 'compile'... which takes half an hour each time. What do you do in the meantime?

I've been compiling the project for about 5 hours now, still no successful build due to bad tests generating intermittent test failures...

All I wanted to do was to release the web project to the customer not fucking wrestle Cthulhu!

The worst part is that the release is set up so that you need to release the entire project internally before you can release one part.


I was really bad at physics, and we were covering energy, force and all that stuff when I got to C, my very first programming language. I learned the formulas by writing a program that calculated all the stuff we learned about in school. :D

Back then I didn't have a computer, so I wrote the code on paper before actually compiling it on my mom's computer.

To all guys who write shitty code:

`if (false)`

I just found out that when compiling in Release mode in Visual Studio, the JIT compiler eliminates this:

Dead code elimination: a statement like `if (false) { /.../ }` gets completely eliminated.

And a lot of other similar stuff

What are you sitting around on devRant for? Don't you have work to do?

Compiling..

Oh ok, carry on then :)

Windows piece of shit mother fucker useless trash.

Why can't I just compile without the dumb ass "Antimalware Service Executable" having to check every single fucking file and eating fucking 4GB ram. God damn it. fiadsfleaf oaiehjf afpo jafj

I start compiling binutils and then the whole thing fucking crashes ad;adsfjhc odshfaj;sdl hfja;odsfh;osa dhif;aosdhfi a;osdihf;skdjnvba; dsjch;soduf;dsao fu;nodjf ;anaod

Someone accepted a very old answer I gave them 7 months ago to a question about "compiling Python to improve performance". And now I don't even understand my answer or the asker's question.

Edit: The question was asked on Replit.

Me compiling c++ code at 3 am be like:

`gcc filename.cpp -o filename`

Some error pops up

Cries for a while, looking up the solution

After rewriting the whole code, notices I was using gcc instead of g++...

One thing that I hate more than anything else in what I do: Waiting

Whether it is compiling, training, loading, IDE indexing or building, I just despise it. It gets me out of my flow and forces me to be bored and often unable to do anything else because my PC is busy

Named two strings fuck and cunt (because I'm tired of debugging this stupid bug for the last 5 hours)

compiling...

aaand laptop freezes

fuck. my. life.

This guy was editing a code file but compiling and running a different version with the same name in a different location, and he kept asking around, not knowing why nothing was changing

Did an interview and got some feedback on my coding challenge (I didn't make the cut). I was surprised at one particular comment on why I didn't make the cut: the code didn't compile at all. So I went to check the repo and found some code, which I swear I had removed, lodged in the code base, which prevented the reviewer from compiling it. How tf it wasn't flagged when I was compiling before pushing to the repo is beyond me. Now I feel hella stupid and disappointed in myself 🤦🏾♀️ (to be fair, it wasn't the only reason I didn't make the cut. The code could have been better)

As a web developer I've never been able to use this as an excuse, but now I can use the fact that I'm compiling code as a reason for doing nothing. :P

If you are using Arch and are building packages from the AUR, all I can say is use `makepkg -s`, because then it will install all the dependencies for you.

Yay

Get on devRant while compiling / waiting for results. Much later I'm wondering how long that error message has been on my screen staring at me... and then I need to get my focus back into the code :(

TL;DR: My laptop's CPU temperature goes above 90 °C when I compile stuff.

I have two different laptops with the exact same configs and OS. I use one as a desktop, and the other one is just for school stuff and homework. As you might guess, the one I use as a desktop looks like a mini desktop. I was into DIY stuff a while ago, so I made a custom case and separated the LCD as well. Don't ask me why, though.

Today I was working on a personal project that has a relatively complex build config. Since the compilation always takes 3 to 5 minutes, I went to the kitchen for some coffee. Boom. My laptop's fans were going so hard you'd think you were at an airport. Seriously. All 8 cores were above 90 °C when I checked them.

The next thing I did was compile the same project on the other laptop, the one I use for school projects. It took about 20 minutes to compile, but the max temperature was around 50 °C.

So, in the end, I'm still trying to find the reason for that behaviour.

When you fix all the server bugs successfully on a Friday, and are compiling the code just in time for happy hour

Not much tops the orgasm from powering through 500+ lines of code in the zone... in vim... no debugger... and without compiling, just visually seeing in your mind the assembly being generated... and the code being stepped through... and then you compile and test and everything works as expected... not sure anything tops that feeling... you definitely have to be in the zone... one distraction and boom, gotta compile to make sure nothing broke

Yo, I heard you like compiling C/C++, so we make you compile and link each individual file, so you need a makefile for compiling everything. But that shit still gets too annoying to maintain, so you generate the makefiles with CMake. Just so you can compile a library at all.

And don't get me started on autoconf and the random configure scripts you have to run before you actually configure shit.

Can we make compiling a regular program any more difficult, so that we need a whole-ass A4 page of documentation just to end up with a binary of something?

Waiting for my demo video to finish compiling and trying not to think of the awful reviews I'm gonna get on my paper next year. 😐 #AnxietyIsConsistentlyFun

I love waiting 30 minutes for PhysX to finally fucking be done compiling. My god, can you just speed uuuuuup

I've been cross-compiling the software I create for many years. Ignoring languages that target some kind of VM, some additional effort was always needed.

Go (as far as I can see, since 1.5) does this right and quite straightforwardly: select the target OS and architecture, issue the build command, and you get a native executable. I'm happy... B)
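As a rough illustration of "select target and architecture, issue build command": Go reads the target platform from the `GOOS` and `GOARCH` environment variables, so one loop over targets is enough. This is a Bash sketch. The target list, the function name `cross_build`, the `app-<os>-<arch>` output naming and the `CGO_ENABLED=0` setting are all illustrative assumptions, not something from the post.

```bash
#!/usr/bin/env bash
# Cross-compile one Go main package for several OS/architecture pairs.
# Sketch only: the target list and output naming are illustrative choices.
set -euo pipefail

cross_build() {
  local pkg="${1:-.}"        # package to build, defaults to the current directory
  local outdir="${2:-dist}"  # directory that receives the binaries
  local target goos goarch out

  mkdir -p "$outdir"
  for target in linux/amd64 linux/arm64 windows/amd64 darwin/arm64; do
    goos="${target%/*}"      # text before the slash, e.g. "linux"
    goarch="${target#*/}"    # text after the slash, e.g. "arm64"
    out="${outdir}/app-${goos}-${goarch}"
    if [[ "$goos" == windows ]]; then
      out+=".exe"            # -o uses the name as given, so add the suffix Windows expects
    fi
    # The variable assignments apply only to this one go build invocation.
    # CGO_ENABLED=0 keeps the build pure Go, so no C cross-compiler is needed.
    CGO_ENABLED=0 GOOS="$goos" GOARCH="$goarch" go build -o "$out" "$pkg"
  done
}
```

For example, running `cross_build ./cmd/app dist` from a module root should leave four binaries in `dist/`, assuming `./cmd/app` is a `main` package. Disabling cgo is the usual way to keep cross-builds free of a C cross-toolchain. Packages that actually need cgo require more setup.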

Non-IT

Can't afford a laptop

Want to make an app from scratch, coding and compiling on my Android phone.

Is it possible??

What would you suggest?

Which language would be better to start with?

A step-by-step procedure would be helpful.

Dos and don'ts!!!

Or does this attempt look silly/lame?! -

When compiling my first C++ program after some time working in Python, I got 17 compile-time errors. All of them were either a missing ';' or an extra ':'. Damn you, syntax!

-

Sing: "110 little bugs in my code,

110 little bugs,

Fixing one bug,

Compiling again

Now there are 121 little bugs in the code" -

I’m on a Laravel project which is going great; the pain-in-the-ass part of it is compiling this fucking Sass with Laravel fucking Mix... fuck it, I’m setting up Gulp.

-

So I was compiling Resurrection Remix, Android 10, and making some commits here and there, when my friend looks over and asks me, "So how does this work?" I briefly explain the device tree, vendor, and kernel. So he says, "Oh okay, so basically it's like copy and paste?"

I've never been so offended in my life 😐 -

Fuck.

I really want to get into source-based distros, but my laptop is pretty much a half-fried potato, so compiling takes ages. -

!rant Scary Stuff...

Not sure what the rules are on sharing external content, but this story freaked me out and I wanted to share it with you.

Pretty scary stuff, maybe something like this is already in the wild? Especially with the NSA and other power groups trying to exploit vulnerabilities and infiltrate everything...

Found it originally on the rational subreddit. Here is the link:

https://teamten.com/lawrence/...

Spoiler alert:

It's about the Ken Thompson Hack:

"Ken describes how he injected a virus into a compiler. Not only did his compiler know it was compiling the login function and inject a backdoor, but it also knew when it was compiling itself and injected the backdoor generator into the compiler it was creating. The source code for the compiler thereafter contains no evidence of either virus."

How do you detect/deal with something like this? Better not to think too much about it. -

Is the Android build process getting slower with every update, or is it just me?

In the newest Android Studio Beta it went from 3m to 7m 🤦‍♂️

And my whole computer is frozen and unusable while compiling. -

Finished compiling my biggest project to date. It compiles fine, but does not output anything.

Time to spend the night debugging and overeating. -

> Build project

> 13 Errors: Could not find reference

> Uninstall and reinstall NuGet package

> Build project

> 158 Errors: Could not find reference -

Using AI is the new water-cooler break: the new walking-around-saying-hi-to-your-co-workers time-waste, the new compiling procrastination.

-

Wrote a new feature for our flagship product in C. Worked perfectly, no issues. I was told to wait before submitting to SVN.

Because my company is a little cheap when it comes to engineering, they took my Green Hills license for another dev to use. I wasn't using it at the time, so from then on I couldn't compile.

Then, a month later, I was asked to submit my feature to the repo because they needed it in some version, so I did. Still not able to recompile to see if other changes broke anything...

As you probably guessed, no one's code compiled after pulling from the repo! Big embarrassment. Weeks later I was told that it wasn't my fault in the end... I don't remember how my code impacted it, but man, it was a bad day for this dev.

Never again! -

TFW idiots use FFmpeg without looking at the license, and then spend two months compiling it themselves.

-

Got into an argument the other day over the definition of scripting languages.

He said Python isn’t one because it can be compiled, while I said it can be both, since you can also use it without compiling. The same could be said for Java when using it with Selenium for automation.
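For what it's worth, the line is blurry for Python specifically: CPython (the reference interpreter) compiles source text to bytecode before its virtual machine runs it, even when you "just run a script". A minimal sketch using only the built-in `compile()` and the standard `dis` module; the snippet string is made up for illustration:

```python
import dis

# A tiny, made-up snippet of Python source code.
source = "total = sum(range(5))"

# Compile the source text into a code object (CPython bytecode).
code = compile(source, "<snippet>", "exec")

# The raw bytecode is stored as bytes on the code object.
print(type(code.co_code))

# Disassemble it to see the instructions the interpreter will execute.
dis.dis(code)

# Execute the already-compiled code object in a fresh namespace.
namespace = {}
exec(code, namespace)
print(namespace["total"])  # prints 10
```

CPython also caches the same kind of bytecode for imported modules as `.pyc` files under `__pycache__`, which is roughly why "it can be compiled" and "you can use it without a separate compile step" are both true.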

Thoughts? -

Compiled Gentoo after ~5 days.

It's not over yet, though.

My kernel is now 7.3M, and it contains almost everything I need. Even my (Intel) network driver firmware is built in.

It boots straight off UEFI (default BOOT/bootx64.efi), and I managed to install X and Wayland (sway!).

Got Programmer Dvorak set as the default keyboard layout.

df -h:

root 4.7G/14G (exact) used

boot 21M/127M (exact) used

var 701M/~5.5G used

AAAAAAAAAAAAAAAARRRRRRRRRRRRRGHHHHHHHHH

Was doing the installation from a Live CD (UEFI) during school hours, with my touchpad not working and no mouse with me. I feel bad for TAB.

I am, at this moment, still compiling... -

pls rember happy dey

wen u fel sad and lonely

pls rember happy day

Recite this when your code doesn't compile -

Is it worth buying a gaming laptop for programming and other purposes like rendering and compiling?

Like having an i7-8750H instead of a U-series one

and a 1050 Ti instead of Intel 620 graphics

Of course, both have an SSD.

The prices are close enough, which is why I'm thinking of getting a gaming one for its capability. -

One of the weirdest aspects of Docker for me is cross-compiling program installations. One would think that something as complex as a container with several programs, each making unknown decisions based on the environment during installation, couldn't be cross-compiled.

-

*me writing my sweet code like nothing bad could happen*

Xcode: Boom! Compile error

Me: what the...

*compiles again*

Xcode: yeah right. Bam! Error

*cleans, etc., compiles again*

Xcode: yeah, try your luck, loser

Me: ok, let's google it. First Stack Overflow answer: just change the simulator and it should work correctly.

And of course it worked. And that's how it works all day.

Fuck you Xcode! Fuck you Apple!

-

I tried to convince the actual bug that landed in the middle of the code on my screen a few minutes ago to pose with my (AWESOME!!) stickers. He was lonely among my compiling code and took off so you get me instead :)

-

Visual Studio is the worst. Ever. I was about half an hour into debugging my code, about 8 layers deep into the API, with breakpoints anchoring me to each level. With no warning, Visual Studio crashed, I lost all my breakpoints, and I didn't know which file I'd left off in. I had to completely restart the debugging process. Visual Studio deserves to burn...

-

How are we feeling about the new Ryzen lineup for workstations that can do a bit of gaming on the side?

I'm really looking to go back to Team Red, but I don't know how beneficial the number of cores Ryzen provides is for compiling, static analysis, and other dev tasks.

Anyone have experience with Ryzen for dev'ing? -

When you can't understand a compiler warning, try to reduce the problem to a minimal example, and the warning goes away...

... and you realize two hours later that you weren't compiling the minimal example on the same machine as the original. Different versions of g++, one with a bug fixed 😩

(assigning {} to a struct member) -

Had to switch from Solus to Linux Mint because I needed to use Coq, and I didn't feel like compiling it from scratch when it's easy to get on Mint. Has anyone used Coq before? My teacher loves it for discrete math, and I like functional languages, so I'm a bit intrigued.

-

When compiling and starting up your program takes so long that you forget what you wanted to test...

#dailybusiness -

When you go from compiling/testing code on every line change to not checking for hours and it just works when you run it.

😎

Not always, but sometimes. -

After a bunch of compile errors, fixing errors, and learning Haskell on the go, I finally created a basic (and lacking) devRant API in Haskell.

Link: https://github.com/Supernerd11/... -

Feels pretty good when you chroot into your first LFS environment and nothing breaks (yet...). Also, my toolchain seems to be compiling packages correctly, so that's a plus :)

-

Use Rust, they said. It'll be much less hassle, they said.

And now rustc just stops working in the middle of compiling. No error or anything; it just doesn't want to continue compiling, so I'm stuck forever on "Building ...". I thought I would never have to experience this again after deciding to pretend C++ doesn't exist, but alas, systems programming appears to forever be a right pain in the ass. -

Why in God's name does compiling for Windows change the name of a function?? This is a function in *my* library, and the text `erom_text` doesn't appear anywhere in the library???

-

Spent the whole day testing the tests.

CI is pretty fun: I get to slack off the whole day, because one test run takes around 10 minutes (without compiling). -

When you write code for hours without testing or even compiling it, and in the end you get no errors, and you know that's not possible, so you start searching for the error where there is none...

-

So my compiler has been compiling the newest version of my compiler for about five hours now, and it progressed 10% in the last hour... Looks like my poor laptop is gonna have to pull an all-nighter.

-

Need music for... actually, whatever you want? https://youtube.com/playlist/...

Coding, disc ripping, compiling, gaming, driving... Fits most everything. -

So, yesterday I finally understood the "it's compiling" madness...

I work as a web developer, and I needed a C executable to run from the back end to convert files.

Unfortunately, you have to compile this library on your own; there's no way to just download the executable...

It took 45 minutes, and that's without counting downloading Visual Studio, cloning multiple Git repos, etc...

I'm so happy to just be able to press F5; sometimes not even that is necessary....

So yeah, I guess I'll just watch another episode of Hell's Kitchen every time I need to compile this piece of crap.... -

Recommendations for customizing vim & i3 for a programming workflow?

Do you have any settings/plugins you prefer?

Also, any nice ideas for compiling LaTeX docs while writing them in vim? Like a side-by-side thing maybe; not really looking for an IDE. -

Compiling a project (Not BIG but still pretty big)

C# compiler: YOLO, you have 12 cores? I'll use 12 cores. BAM: 3 seconds, compiled!

SASS compiler:

Rofl, I'm gonna go file by file and recompile all dependent files to produce a single file. 2 seconds per file × 150 files.... 6 minutes in, SASS is still running.... -

JUST FUCKING NOTHING WORKS!

ERROR HERE ERROR THERE.

Then I tried to copy the exact sample code and it ALSO DIDN'T WORK.

AND THEN FUCKING VSCODE shows errors THAT AREN'T EVEN THERE. THEY MAYBE WERE THERE 10 MINUTES AGO. IS THIS SOME FUCKING INTERNET EXPLORER SHIT? ALSO, COMPILING THROUGH IT DOESN'T WORK, ONLY THROUGH THE COMMAND LINE. AAAAAAAAAAAAAAAAAAAAAAAAAAAAH FUCK IT -

Yesterday I decided to use Vim 8.0. Took me an hour to properly figure out all the configure options before compiling, but it was worth it; all my colleagues are jealous now.

-

That feeling when you go back to a project you haven’t touched in months, upgrade one of the dependencies to a new major version (come on, how many breaking changes will actually affect this tiny project?), and find nothing is compiling because the library maintainers renamed everything and introduced a lot of breaking changes that are actually relevant.

-

Extensive knowledge of the non-compiling, heavily-interpreted language known as profanity. Helps me express my problems very clearly to others in my team.

-

Which is your favourite compile-to-CSS language for development?

• none (CSS)

• SCSS

• SASS

• LESS

• ???

Mine is SCSS. -

I never thought I'd be able to genuinely use the excuse "my code's compiling" to explain why I'm goofing off... I love it :D

-

Running brew install PHP

I soon realize that brew is installing Python and compiling it from source.

WHY? -

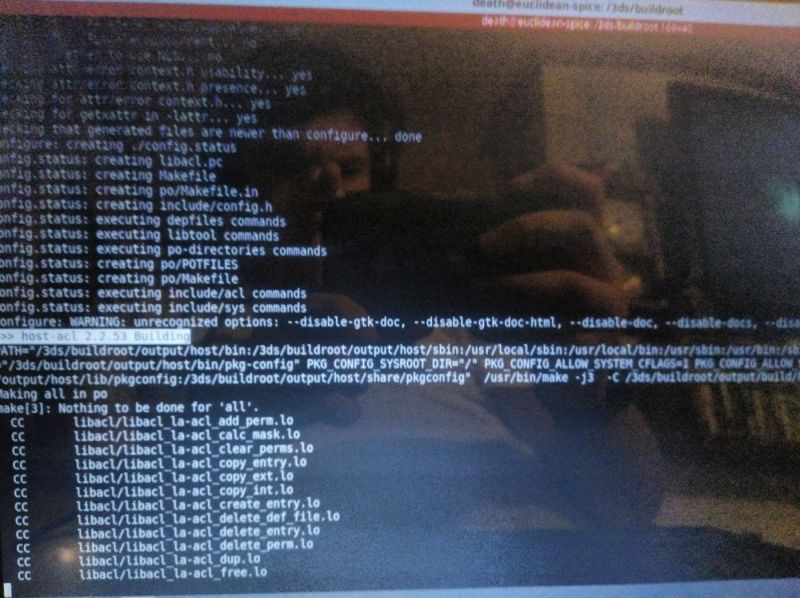

>compiling Linux 3DS' zImage overnight

>start compiler at 4PM

>11:54PM -- compiling clang: <file>: compiler: *internal compiler error*: segmentation fault

okay, well, maybe if we continue make from there it'll fix itself? Might've run out of RAM...

>make

>5 seconds later, segfault again

FUCK -

My morning productivity so far: compiling some code while a full-length show I edited renders. (Not pictured: the machine learning model I'm also training.)

I pity my processor. -

How can a LaTeX document simply stop compiling after a few months? Is this shit not backward compatible with newer versions? This stupid language and all those fucking compilers: this is the worst user experience ever. Gonna retype the thing in Word now.

-

You know, it's all well and good, but compiling and building open-source software is like falling down a stairway and getting fucked by dependencies and quirky configurations on every step.

Forever... down one step, and down again. -

When you don't compile small snippets of code and keep on writing line after line, and then, after writing a huge chunk of code, comes that one moment when you compile it for the first time

Brace yourself for errors 😕 -

Seriously, fuck Bazel. It's the most unintuitive build tool I have ever had the displeasure of coming across.

It works, but try compiling an Android app that uses deps from gmaven, Maven, and JCenter and has Java as well as native dependencies.

It's fucking impossible. -

So today I tried to write C++ with the class code split into a header and a .cpp file, which I had never done before. The compiler kept throwing an error: "undefined reference to std::cout". Took me nearly an hour to figure out I was using gcc instead of g++.

-

Can anybody give me advice on why TypeScript may be a better solution on the Node.js side? I've been trying it out, but other than the compiling, it seems like ES6 is still better.

-

Ever spend 4 years compiling a library of awesome code while all the time forgetting you set up version control for it, and then one day you accidentally hit the magical undo button that annihilates all of your code in one fell swoop?

Yeah... Me neither. That's totally not the reason 70% of my classes now look like they were decompiled from yesterday's build -_- -

A compiler and assembler set for bootstrapping the whole GCC code tree and cross-compiling each level

-

One reason I hate working on hardware and prefer software. Spent all weekend trying to get this Canon photo printer to work with a Raspberry Pi for a photo booth project. Tried multiple cables, compiling the latest CUPS and Gutenprint, etc. Could only get it to print via Wi-Fi. Finally, I go to the store and buy a new micro USB cable and it works right away. 😠

-

• Compiling cool stuff in Docker: ✔️

• Forgetting to mount a volume to get the result of my compilation: ✔️

• Trying to copy the whole thing elsewhere and crashing the Docker daemon: ✔️

😫 -

Worst dev experience of 2016: compiling and including SDL and SDL2 in C projects. Never got it to run all the functions, sometimes it wouldn't compile, etc... Never got it to work properly in the end...

-

Pushed the code without compiling. We weren't generating builds before merging the code back then. It was so embarrassing

-

beware: yt short link.

tl;dr: compiling and packaging shit for Windows & Linux is usually straightforward. For Mac it's a pain in the ass, and it contributed less than 1% to sales.

And the thing is, I absolutely resonate with that, because I had to go through that hell at some point in my life.

Also, on another note: a friend recently recommended this guy to me, and I feel like they talk about interesting topics!

https://youtube.com/shorts/... -

I just wanted to try something with the Boost libraries as soon as possible.

Opened a command prompt and ran

.\b2

See ya tomorrow!! -

Me VS Banana Pi: day one

Spent 5 hours compiling an OpenWRT image and then realized it's not what I want. Tomorrow it'll be Armbian's turn. -

Been struggling with compiling a PyQt program the whole weekend. It worked with PyInstaller on Friday, except that the .ui file was not included; instead, it was referenced by its path on my computer. I then tried fbs instead, which caused this error that now also occurs when I try to start the program created with PyInstaller.
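A common cause of the "the .ui file is referenced by its path on my computer" symptom is loading it with a hard-coded absolute path. A minimal sketch of the usual workaround, assuming a PyInstaller build: when the app runs frozen, PyInstaller's bootloader sets `sys._MEIPASS` to the folder that holds the bundled data, so the `.ui` file can be resolved relative to that (or relative to the script's own folder when running unfrozen). The helper name `resource_path` and the file name `main.ui` are hypothetical, and the file still has to be bundled, e.g. with PyInstaller's `--add-data` option. This sketch does not address the separate fbs error.

```python
import os
import sys


def resource_path(relative_name: str) -> str:
    """Return an absolute path for a data file shipped alongside the app.

    When the app runs from a PyInstaller bundle, sys._MEIPASS holds the
    bundle's data folder; otherwise use the folder of this source file.
    """
    base_dir = getattr(sys, "_MEIPASS", None)
    if base_dir is None:
        # Running unfrozen, e.g. `python main.py` during development.
        base_dir = os.path.dirname(os.path.abspath(__file__))
    return os.path.join(base_dir, relative_name)
```

With PyQt5 you would then call something like `uic.loadUi(resource_path("main.ui"), self)` instead of passing an absolute path from your own machine, so the same code works both in development and inside the bundle.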

-

Going from IT Operations to System Developer... a good progression, since you know a lot about the hardware you're compiling on.

-

Listening to a random rock playlist on YouTube... meanwhile, my project is compiling, and suddenly a romantic 80s song starts playing!!

Fuck, I was so inspired!! -

!rant

This morning a coworker comes to me like, "I've been compiling this list of all the files on our network that have x in the title for a week now."

I'm like, "Do you want me to use a recursive PowerShell script to get you that list in like 10 minutes?"
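The post mentions PowerShell; to keep this page's examples in one language, here is the same idea as a minimal Python sketch instead (a swap-in, not the poster's script). It walks a directory tree recursively with `pathlib.Path.rglob` and collects every file whose name contains a given substring. The function name `find_files_with` is hypothetical, and a real network share may need extra handling for permission errors.

```python
from pathlib import Path


def find_files_with(root: str, needle: str) -> list[Path]:
    """Recursively collect files under `root` whose name contains `needle`.

    The match is case-insensitive and only looks at the file name,
    not at the folder names along the path.
    """
    needle = needle.lower()
    return sorted(
        path
        for path in Path(root).rglob("*")  # "*" matches entries at every depth
        if path.is_file() and needle in path.name.lower()
    )
```

For example, on Windows `find_files_with(r"\\fileserver\shared", "budget")` (a hypothetical share and search term) would return the matching paths, which you could then dump to a text or CSV file.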

The little things that make you invaluable at work because you are the sole tech guy. -

I love the flexibility and power you get with WordPress, WooCommerce and its entire plugin ecosystem...

BUT FUCK ME! PHP IS SHIT!!

It's like writing code by hand with pen and paper, putting it through an OCR and then compiling it. Sure, it might work if you're lucky and maybe even look cool, but good luck trying to develop a solution with any sort of speed! -

I'm compiling an entire Android build from source. Even with 16 dedicated compilation threads, it's like watching paint dry.

It's nowhere near as bad as my early days, when it took over 24 hours to compile a Linux kernel... but it's still painful. -

I suppose the modern equivalent of waiting around for code to compile is building the bloody Docker images, vastly exacerbated by needing an x86 image on ARM hardware.

FML 🙃 -

When you find a project you'd like to clone and learn about, but you can't even get their examples to build locally...

Yes, I'm looking at YOU, every C-based project on GitHub. -

That precise moment when you've finished your brand-new app, you're compiling it to upload to the devcenter, and you NOTICE you haven't changed the test ID in your ad banner unit.

Can you feel me bro, right?

-

Any recommendations for a decent 13-inch laptop under US$1,400?

- At least 8 GB of RAM, with the option to upgrade

- resolution >= full HD

- storage >= 256 GB SSD

- battery >= 6 hrs of heavy Chrome usage

- Linux compatibility

- CPU: nothing heavy (browser and Docker containers, no building or compiling) -

Okay, say I'm compiling a Windows binary, but I need to call a function from a Linux library when running on Cygwin. How would one go about doing this? Does it even make sense?

My idea is to compile a DLL with one function that links against the Linux lib and calls the function, then just load the DLL when needed.

Kinda new to linking stuff, so any input is appreciated. -

TFW your laptop battery dies twice as fast as yesterday because you're compiling all the things ... but you don't want to go back inside yet.

I'll stay out here just 2 more percent, then I'll grab the charger. -

when you just want to set up a tiny automation and end up compiling a custom snap package for 3 hours...

-

...patience...

...patience...

...patience...

...patience...

...patience...

I had been waiting an hour for it to finish compiling, and it showed me the output above. -

Compiling native code under Windows without using Visual Studio/MSBuild is an unspeakable pain in the rear...

-

AS logcat

sqlite.SQLiteException: no such column: Timestamp (code 1): , while compiling: SELECT * FROM NOTEs ORDER BY Timestamp

I'm trying to get the date and time on each note entry... -
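An exception like the one in that logcat usually means the table the app actually opened has no `Timestamp` column. A frequent reason is that the column was added to the `CREATE TABLE` statement after the database file already existed on the device, so the old schema is still in use (on Android, that's the situation `SQLiteOpenHelper.onUpgrade` plus a bumped database version is meant to handle). A minimal sketch of the failure and one possible migration, using Python's built-in `sqlite3` module and a hypothetical `notes` table rather than the poster's actual Android code:

```python
import sqlite3


def run_demo():
    conn = sqlite3.connect(":memory:")

    # Old schema (hypothetical): created before a timestamp column existed.
    conn.execute("CREATE TABLE notes (id INTEGER PRIMARY KEY, body TEXT)")
    conn.execute("INSERT INTO notes (body) VALUES ('first note')")

    error = None
    try:
        # Same shape of query as in the logcat output.
        conn.execute("SELECT * FROM NOTEs ORDER BY Timestamp").fetchall()
    except sqlite3.OperationalError as exc:
        error = str(exc)  # e.g. "no such column: Timestamp"

    # Migration: add the missing column, then backfill existing rows.
    # ALTER TABLE ... ADD COLUMN may not use CURRENT_TIMESTAMP as a default,
    # so existing rows get their value from an UPDATE instead.
    conn.execute("ALTER TABLE notes ADD COLUMN timestamp TEXT")
    conn.execute("UPDATE notes SET timestamp = datetime('now') WHERE timestamp IS NULL")

    # New rows store the date and time explicitly (UTC text from SQLite).
    conn.execute(
        "INSERT INTO notes (body, timestamp) VALUES (?, datetime('now'))",
        ("second note",),
    )

    # SQLite identifiers are case-insensitive, so NOTEs/Timestamp now resolve.
    rows = conn.execute("SELECT body, timestamp FROM NOTEs ORDER BY Timestamp").fetchall()
    conn.close()
    return error, rows


error, rows = run_demo()
print(error)
print(rows)
```

During development, simply uninstalling the app (or clearing its data) also recreates the database with the new schema; a migration like the one above is what keeps existing users' notes.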

Every day I'm more convinced that we live in a virtual reality: the weather changed, but an error happened while compiling the software and the bad weather came back.

-

In C++ we give "my code is still compiling" as an excuse to slack off.

Now that I'm using machine learning, I can use "my model is still training" as an excuse. -

Send me your best git-fuckit scripts! I’m compiling the best ones. The winner needs to be versatile enough to handle both simple and upstream/forked repos.

-

I love KDevelop. I've been using it for a decade. But for the last few years its background parser has been eating too much RAM. Moreover, heavily templated code causes spikes in memory consumption while compiling. Sometimes it feels frustrating, but I'm not willing to switch IDEs. I'm too used to KDevelop.

-

September: started programming in Xamarin.Android. Slow, buggy, and undocumented as hell.

October: getting used to Android. Might be nice after all.

November: started programming for iOS.

December 2nd: still can't comment on it. The program hasn't finished compiling yet.