Search - "resolution"

-

[dev vs client]

- What's your screen resolution?

- 100%

- What's your browser?

- I use internet and sometimes Google.6 -

Got my new workstation.

Isn't it a beauty?

Rocking a Pentium II 366 MHz processor.

6 GB HDD.

64 MB SDRAM.

1 minute of battery life.

Resolution up to SXGA (1280x1024)

Removable CD-Rom drive.

1 USB port (we like to use dongles, right?)

Also it has state of the art security:

- No webcam

- No Mic

- Removable WiFi

- I forgot the password

And best of all:

It has a nipple to play with!!

31 -

When your client complains their site is slow and you find that they have added 80 full-resolution images at 6000x4000 pixels to the home page!
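For a rough sense of why that hurts, here is a back-of-the-envelope sketch in Python. It assumes the browser decodes every image to 4 bytes per pixel (RGBA), which is an assumption and not something the rant states, and `decoded_bytes` is a made-up helper name.

```python
def decoded_bytes(width_px: int, height_px: int, bytes_per_pixel: int = 4) -> int:
    """Approximate memory for one fully decoded bitmap (assumes 4 bytes per pixel)."""
    return width_px * height_px * bytes_per_pixel


per_image = decoded_bytes(6000, 4000)  # one 24-megapixel image
total = 80 * per_image                 # the 80 images from the rant

# prints "96 MB per image, 7.68 GB in total"
print(f"{per_image / 1e6:.0f} MB per image, {total / 1e9:.2f} GB in total")
```

That is decoded size only; the download size depends on compression, which the rant does not mention.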

11 -

Customizing my arch linux desktop 🙂

High resolution picture:

https://imgur.com/a/aEeZR

What do you guys think?

48 -

Me, doing QA

PM: "stop submitting bug reports about screen size, we're only supporting one resolution for now"

Me: *What do you mean you're only supporting one resolution it's a website and it breaks on screens <1400 px tall*

*Sigh*

"okay, what resolution?"

PM: "No one knows"

Me: *dies*2 -

Everyone's posting their PC's for wk119, but I thought I could do better.. think different, so to say :P

My electronics workbench.. cleaned up, I promise! Just that there's so much stuff that I have no idea where else to place it :')

And I wish I could post this BEFORE WanBLowS decided to shit itself again with one of those goddamn fucking Blue Screens of Dicksucking Shaftsystem! At least devRant UWP from @JS96 has the dignity to save the post just in case its host craps itself all over.. FUCK!!!

.. Anyway, high resolution counterpart of the image here: https://i.imgur.com/ZrJmMe0.jpg

25 -

Fuck yeah!

6 monitors are success-fucking-fully running on my setup.

Fun detail is that WITH the official AMD driver, one screen wasn't turning on and one had a weird resolution but WITHOUT it, it works completely fine!

Yes, it runs completely fine on Linux (Kubuntu).

Finally have my dream setup.42 -

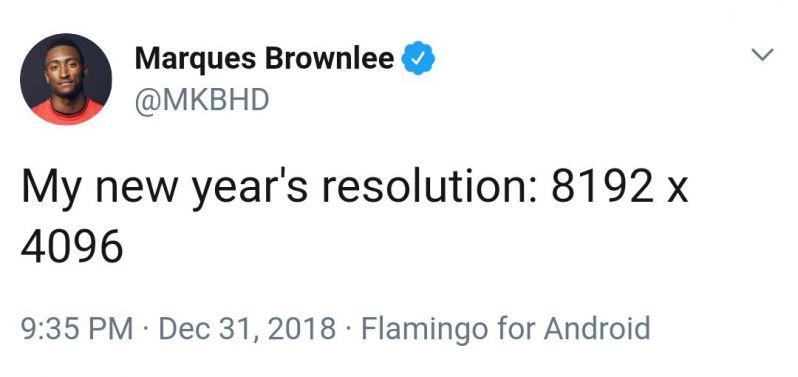

I have finally decided on my new year's resolution. It is -

.

.

.

.

.

.

2560×1440

Yup, I'm gonna change my wallpaper resolution to 2560×1440 starting this year.

Problem anyone?

;)4 -

My new year's resolution:

- Learn more Java

- Build a web server with Java

- Make a 3D game with no frameworks in Java

- Make a simple Android app with Java

- Try JavaOS

- Tell people I know Java

14 -

My resolution for this year is to stop hating javascript.

Error: cannot read property "year" from undefined.2 -

IF LIVES DEPEND ON A SYSTEM

1. Code review, collaboration, and knowledge sharing (each hour of code review saves 33 hours of maintenance)

2. TDD (40% to 80% reduction in production bug density)

3. Daily continuous integration (large code merges are a major source of bugs)

4. Minimize developer interruptions (an interrupted task takes twice as long and contains twice as many defects)

5. Linting (catches many typo and undefined variable bugs that static types could catch, as well as a host of stylistic issues that correlate with bug creation, such as accidentally assigning when you meant to compare)

6. Reduce complexity & improve modularity -- complex code is harder to understand, test, and maintain

-Eric Elliott

12 -

2019 resolutions/goals recap: (non-personal ones)

1) Improve diet (did; e.g. ramen and fast food to clean keto)

2) Lose weight (did; lost 24 pounds!)

3) Find a good job (did, twice)

4) Buy a harp (did not; large and expensive, no place to put it, and I have small children who would absolutely break it)

5) Keep house clean, even if it's by myself (did, somewhat; I cleaned some, managed to get one other person to clean semi-regularly, and another sporadically)

6) Work on social awkwardness (did; read and applied Dale Carnegie's The Art of Public Speaking, which netted me my last job offer. Still pretty awkward though)

7) Move out of the desert (did not; not enough money, and job didn't allow remote work)

8) Stop bloody waiting on people (did not; still very guilty of this...)

I don't remember the rest 🙁 didn't write them down last year. But I still accomplished 5 out of the 8 I remembered, with one being a pass, so 5/7!

-----

2020 resolutions/goals:

1) Finally move out of the desert

2) Invest 20% of my income every month

3) Reduce bills by 20%

4) Solve/address some health issues

5) Make a schedule so things regularly get done around the house, e.g. cleaning

6) Find some friends and make time for them

7) Replace Debian with something else

8) Revamp my backup system

9) Be proactive and stop waiting on people

10) Build a (stationary) coil gun for fun18 -

When you get a client from real MOTHERFUCKING hell.

You just really FUCKING want to say this:

Scorched earth MOTHERFUCKER. I will massacre you. Now SHUT THE FUCK UP AND LET ME DO MY JOB.

First, take a big step back and literally, FUCK YOUR OWN FACE.

I will rain down an ungodly FUCKING firestorm upon you.

You're gonna have to call the FUCKING United Nations and get a FUCKING BINDING RESOLUTION to keep me from FUCKING destroying you.

I am talking SCORCHED EARTH MOTHERFUCKER.

I will MASSACRE you.

I WILL FUCK YOU UP!

But for your own sake you keep it at this:

Yes sir/ma'am :).7 -

*Opens devRant*

*sees everybody saying how great Linux is*

*Tries deepin OS*

*Keyboard backlight not working *

*Searches YouTube for a fix*

*Fixes the Backlight*

*Screen resolution set to 800*600 by default (monitor 1920*1080)*

*Grub decides there is no need for a windows entry*

*plugs in Windows USB*

*Opens cmd*

*diskpart*

*list disk*

*sel disk 0*

*list vol*

*sel vol 3*

*clean*

*boots into windows*

*Follows a guide to remove grub*

*Learns the lesson*

*Ooh OS X seems nice*

FML23 -

My new year resolution: 3840 X 2160

🐒

PS: don't give me a hard time in the comments. I know it's an old joke. 😫4 -

* How other sites charge for a domain name

- The domain (abc.com) is available

---- Price => $14

* How AWS charges

- Your domain (abc.com) is available

--- Domain name => $18.99

--- DNS resolution => $17.88

--- Hosted zone (1) => $10.97

--- Route53 Interface => $45.67

--- Network ACL => $63.90

--- Security Group => $199.78

--- NAT Gateway (1) => $78.99

--- IP linking => $120.89

--- Peer Connection => $67.00

--- Reverse Endpoint => $120.44

--- DNS Propagation => $87.00

--- Egress Gateway => $98.34

--- DNS Queries (1m) => $0.40

--------------------------------

---- TOTAL => $2903.99

(Pay for what you use... learn more)

--------------------------------13 -

We want to improve the portal by making apps for it. What can you do or recommend?

Well holy shit this is new you're actually asking the dev team for advice on a future project.

Normally you immediately go to a third party waste a shit ton of money and then tell us we have a week to add whatever it is into our system.

Then when we can't do it or have to delay other projects you're dragging our manager into a meeting with the CEO complaining that IT are refusing to cooperate or are holding up the project etc.

The change of heart is much appreciated but where the fuck did this come from? New year resolution?5 -

Installing Ubuntu in VMWare. After the installation, proceeded to install VMWare tools to get the full resolution.

Shitloads of errors. Kernel build failing, gcc exiting with error code other than 0, all the copying failed. At the end of the process the executable says:

Enjoy,

---The VMWare Team

What the fuck am I supposed to enjoy? My broken fucking Ubuntu in a VM?5 -

Dual 27" Dell 4K HDR monitors, plus a Samsung 1080p and the retina display. My poor 2015 MacBook Pro with a Radeon R9 M370X 2GB can barely handle it. But there's just so much resolution that it's totally worth it!

9 -

If you wanna replace a few of the carousel banners on your website, at least fucking send me the images with the same aspect ratio or resolution as the old images.

WHY THE FUCK YOU WANNA BLAME THE DEV TEAM WHEN YOUR GRAPHIC TEAM AND YOUR MARKETING TEAM IS SHIT?5 -

I took a course in app programming and one of the assignments was to create an "instagram-like" photo app with some simple filters. One of them was pixelate.

Instead of changing the RGB value of each individual pixel, I just saved the image in a lower resolution.

The result wasn't perfect, but good enough to pass.

And the teacher never even looked at the code..5 -
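A minimal sketch of that shortcut in Python, working on a plain 2D grid of pixel values and not a real image file. The function name `pixelate` and the nearest-neighbour sampling are assumptions for illustration; the rant does not say how the image was actually downscaled.

```python
def pixelate(pixels, block):
    """Pixelate a 2D grid by downscaling it, then stretching it back out."""
    # Downscale: keep one sample (the top-left value) per block x block tile.
    small = [row[::block] for row in pixels[::block]]
    # Upscale: repeat each kept sample to refill the original dimensions.
    height, width = len(pixels), len(pixels[0])
    return [
        [small[y // block][x // block] for x in range(width)]
        for y in range(height)
    ]


grid = [
    [1, 2, 3, 4],
    [5, 6, 7, 8],
    [9, 10, 11, 12],
    [13, 14, 15, 16],
]
result = pixelate(grid, 2)
# result == [[1, 1, 3, 3], [1, 1, 3, 3], [9, 9, 11, 11], [9, 9, 11, 11]]
```

Averaging each tile would look smoother than picking one sample per tile, which may be why the result "wasn't perfect".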

People: I will never put one of those smart speakers in my home! You're basically just putting in a microphone that will spy on you!

Also People: Hang on I have to grab my phone/high-fidelity microphone/high resolution camera/GPS tracker/Data Aggregator and make sure I have it with me literally everywhere I go, because who knows when I will want to comment on a cute picture of a cat!?13 -

Me: well guys, after the 4th attempt and a week of waiting, I’ve gotten a response from the remote backend team about the errors affecting this release. Which are the same issues affecting the last release 2 months ago. The findings are: “there is in fact some issue with the API”.

I’d like to thank everyone who put in so much effort to get us to this momentous step forward. We can expect a fix any year now.

*equally sarcastic colleague on another team listening in*:

oh wow, this months long thing has just been “some issue” all this time? Well that’s fantastic. You should mark the ticket as “done” and reply “thank you” for all their hard work.

..... I laughed so hard at how ridiculous all this is and the joke, that I nearly did, hoping someone from product/business would have to review it1 -

My 1366x768 laptop resolution is not enough to even have intellij open along with firefox in split screen mode... :(

5 -

So I am in the process of working on improving my personal brand and have created myself a new logo.

I thought it would be interesting to see what you all think?

"As a developer/designer hybrid i wanted to create a identity that was able to form a symbolic reference.

My initials (nb) are formed into one continuous line making a connection to two seemingly different fields that represent both design and development."

Full Resolution: https://dribbble.com/shots/...

34 -

You don't need to crop that full resolution Shutterstock image, just use max width 100%

Image size: 7+ MB

1 -

Boss: Client wants those stockphotos for the frontpage.

Me: ok. Please license them and let me know. I will upload them to the page.

Boss: How does that work then?

Me: you have to buy the five credit package. Here is the link...

Boss: (no response)

...few days later...

Boss: please remember to upload those images...

Me: well ok. Did you buy them?

Boss: isn't that your thing?

Me: I don't understand. You had all the info. You knew where to buy them. You knew what images to buy since the client sent the preview versions. What do you need? ...and why didn't you tell me that you were waiting for my input? I was the last one to reply to this conversation.

Boss: i don't want to buy the wrong images.

Me: just buy the ones the client chose.

Boss: I don't want to look up the email he sent them in.

Me: I don't understand. I directly replied to that mail. It is in the same conversation.

Boss: ok.

...day later...

Boss sends me mail with images attached.

Boss: are those the right images?

Me: well yes. Those are the ones the client sent. I don't have more information than you.

(Me looking at the attachments and finding them in the smallest resolution available.)

Me: why did you download the images in the smallest resolution? It does not make any difference in price.

Boss: well I thought they were not needed in a bigger size.

Me: why do you make my options intentionally smaller? I am the guy doing frontend.

..please give me the login info for the stock account so I can download the images in a better resolution.8 -

Fuck web dev.

I dabbled in many areas but I do web dev most often. And seriously: fuck web dev. Your site has to work on multiple browsers. Multiple screen resolutions. The code has to be tiny for load time. The images have to work for every resolution and still be small. The styling can look different in different browsers. So many useful JavaScript features are only supported by modern browsers. And on top of that: IE.

I’ve gotten quite good at all of this, but still: it’s such a fucking pain.10 -

My new years dev resolutions are:

-Learn more c#

-Change jobs

-Read less reddit while on the clock

-Remember that the index on enums starts at 0

3 -

How some of our country's government websites handle responsive web design:

"Best viewed in Internet Explorer 5.x or higher in 1024 x 768 resolution"5 -

Anytime I have to deploy to iOS store. Part of the stack includes human review... Need I say more?

If I had a dollar for every fucking time they ignored our flagging to not put it on iPad, and returned with their review 2-3 days later saying:

"App UI did not run on iPad resolution cleanly."

Then having to submit an appeal and wait 2-3 more days...10 -

My next year's resolution is not to make more geeky jokes however my current resolution will remain 1920 x 1080 for a while.

#HappyNewYear1 -

My resolution for this year:

- learn PHP

- advance at JavaScript and figure out what's so cool about react.js

- be a pro with SQL and learn PostgreSQL

- build couple cool hardware projects with Raspberry Pi and Arduino based on C.5 -

Just had my first demo here. The resolution of the screen was set at 150%, screwing up more than I had hoped for.

I didn't dare to show the iPad version. I had seen enough bugs in those 10 minutes.

Don't put this developer in the center of attention. Please.2 -

"xkcd.com is best viewed with Netscape Navigator 4.0 or below on a Pentium 3±1 emulated in Javascript on an Apple IIGS at a screen resolution of 1024x1. Please enable your ad blockers, disable high-heat drying, and remove your device from Airplane Mode and set it to Boat Mode. For security reasons, please leave caps lock on while browsing."

Best part of the site honestly1 -

Something I refer to as the "Lost Cause Syndrome".

Basically you start working on a project enthusiastically with the resolution to write the best possible code. But either one (or some or all) of management, client and colleagues succeed in transforming the project into a comedy (or tragedy, depending on your outlook) of errors.

Then finally, one day you decide that the project is a lost cause and stop caring about it. You end up in a "Let's get this over with and get out of here" type of mindset without making any efforts to improve the situation.3 -

I pranked my friend's ex, nothing bad, just fun. First I screenshotted his desktop, flipped it and made it his new desktop. Then I flipped the resolution, so my upside-down bg was cool. Lastly I changed his mouse behavior; I set it to reversed.

Fun, right? A typical person might get a lil pissy and have to fix it. Some might even fix it themselves. Regardless, have a lil chuckle.

He smashed the monitor and keyboard and left them both in a pile.3 -

so I was working on a new frontend design for our desktop app when I told my boss

me: this will not look good in a lower resolution. I think we should reconsider

boss: thats ok. its the customer's fault for using that kind of resolution

after a week

boss: we should reconsider the design. the customers are complaining about resolution issues

2 -

!rant

So nearly done with the work app and I need these images scaled accurately (it's for comparing pupils) my problem is I'd normally ask my brother to make the images but he is busy with uni for the next few weeks.

I'm just wondering is there a tool I can use that can rescale images (I have images from the iPhone app, and I gotta say I had to add in % changes despite the proper dpi sizing), I'm looking at the Android documentation but what's funny is in the listed resolutions OnePlus 3 wasn't included (along with some other newer resolutions) lol, and also Wikipedia for OnePlus 3 has the width and height switched (for some reason the author imagined the phone is also in landscape lol).

So do you guys know of something I can use? Programming is one thing but designer is another :/4 -

Just to clarify things, FaceID isn't the same tech as what we've had on Android.

In Android, it's based on image recognition. That's the reason it was so easy to bypass with a high resolution photograph.

In FaceID, it projects thousands of dots on your face and creates a depth inclusive map which is used for verification. That's the reason why it's supposed to work even if you have glasses on, etc

So please let's stop with the comparison11 -

I wanted an Android phone with the latest Oreo version, at least 4GB RAM, 32GB or more of storage and a good processor, supporting dual-SIM 4G, with a resolution of 1440 by 2560 pixels, for US$100.

Well, I got all of it for US$125.

I went to the LineageOS site, exported the list of supported devices and filtered it by the device attributes I cared about.

Got a LeEco LeMax 2 for US$125 on eBay. Installed the latest LineageOS 15.1 with Android 8.1.0. Got 25GB of free space.

Using the phone for the last 10 days, flawlessly.

21 -

There's this WebDesigner who wants every single website made pixel-perfect to his design.

We usually spend like 1 extra month on each website just because we can't do it pixel-perfect for each resolution

Now I'm working on yet another of his websites and his brother is beside me as an intern

Am I allowed to insult the designer and swear against him every 5 minutes or so?5 -

WHAT THE FUCK IS WRONG WITH YOU ???

Galaxy S8 5.8" Quad HD+ Super AMOLED (2960x1440)

570 ppi

Galaxy S8+ 6.2" Quad HD+ Super AMOLED (2960x1440)

529 ppi

oh my fucking god, what kind of retard decided this ?

This resolution is waaaaay too much. It impacts performance and battery life a fuck ton and gives you absolutely nothing in return. I would be cordially surprised if there was someone in the world who could see more than 400 ppi. 300 is more than enough for most of the people.

God these fucks are annoying with their retarded marketing. And even more so, the people who buy these phones, because phone manufacturers can and will continue doing so.

Flagship my ass.14 -

My first new year resolution for this 2017 is to learn to write the f****ng unit test before implementing the code

3 -

Setting Newyear's resolution to 4k..

failed: could not find X window system.

Please check the configuration logs.1 -

A programmer walks into a bar. He has a concussion because you were using namespace std and didn't scope to building.13

-

Intel core i3 4th generation laptop

3 years old.

Plays csgo at 70fps lowest resolution 800x600

Thinks of upgrading laptop

Sad, only one memory slot

More sad, maximum 8gb ram support

But happy,

This fucking piece of shit lasted way too long than time itself xD11 -

@dfox you could try using progressive JPEGs, so that instead of images only showing once they're fully loaded, a lower-resolution version is shown first and detail is added as more data arrives. Really good for people with slow Internet.6

-

So client wants like a certain type of stone as the background of his website. Okay fine, I ask him to send me "good high resolution pictures" of the walls.

Some time later, I get the pictures. They're 500x300 px give or take , I mean come on xD3 -

Gaming on Windows vs Linux:

Windows:

After installing windows, and downloading Minecraft, I was able to run it at a solid 60fps while stretched across 3 monitors

Linux:

After installing, and spending many, many hours trying to set it up to detect more than 1 monitor, I admitted defeat and decided to play on just one. This monitor was also set at a weird resolution, which I briefly fixed, but then broke again immediately. I downloaded Minecraft and after running it, I was able to get a somewhat solid 7fps (10 if I was lucky).

On the bright side, it was able to run a terminal based game just fine on linux.32 -

1) HTML turing complete

2) Kardashian programming language

3) EU resolution that forbids accepting and merging pull requests without court order.

Because why not.2 -

"I blame television and movies, especially cop shows. 'Can you improve the resolution on that face?' 'Sure, let me just pull some information that was never captured out of my ass.'" - Rod Knowlton2

-

1. Finish 8bit computer

2. Program an assembler

3. Find a girl friend

Can you guess which one will be in my new year's resolution next year too?4 -

Dear game developers, if you are building your game on Xbox and are adding support for Xbox One X, please please please stop just adding 4K

Please just add a 'favour resolution', 'favour details' and 'favour performance' option... Yes 4K is nice but most of us don't care and would much rather richer visuals or an uncapped frame rate.

(Yes I know PC gaming exists but I hate PC gaming and prefer the ease of console)2 -

Ladies and gentlemens, my everyday workstation.. at work.

i5-5300 2,30Ghz, 8gb ram and 128ssd. Bloated external screen with low resolution and even lower colour saturation.

Running VM, handling big as images, editing and a tiny bit coding.. and they wonder why I'm asking for a new setup..

(._.) ( l: ) ( .-. ) ( :l ) (._.)

Cheers ☕

3 -

Turning my 27" monitor vertically was the smartest thing I did all year.

There's so much space now that just using it makes me happy!

The only drawback is the 1080p horizontal resolution which is not enough for many sites to fit without zooming out.

I feel like I will soon persuade myself to buy a 4K monitor...3 -

They don't tell you this is in uni but your skill in merge conflict and circular dependency resolution is more essential in the real world than your knowledge of data structures.1

-

Dammit! My daughter seems to have a resolution problem with her drawings. Anyone know if there's a device driver that can be upgraded or something?

6 -

Got an Acer 18.5" monitor.

Took me more than an hour to get correct resolution on my lubuntu.

And still looking for answer and fixing.

I know I will eventually fix it soon (hopefully).

But this kind of thing shouldn't exist in this day and age :/17 -

!rant

I'm developing an OS. I tried running it on the laptop that's on the ground. Everything works fine except text mode. There is no output when running it in text mode(not the high resolution one shown on image). Since the OS sends all data that is printed to the first serial port I might as well read the output from the serial ports. Since the laptop I use for development doesn't have any serial ports I had to use the older Windows 98 PC. For unknown reasons I could not get any output from the serial ports so I gave up.

tl;dr I wasted some time trying to debug my OS.

Image of my debugging setup (taken with the latest potato).

13 -

I got my new Oryx Pro today, from System76. It came with Ubuntu 18.04 LTS. I opted not to get Pop!_OS or Ubuntu 18.10, as I would prefer to leave the OS on it for the longterm.

Even at 15.6", it's a BIG laptop. It measures 18" from corner to corner, when it's closed. It comfortably fits in my backpack, which is a bit on the small side, but it's probably about 30-50% heavier than a MacBook Pro.

But that size and weight are vindicated by the most thuggish hardware I have ever seen in a laptop. As configured, this machine has a 4.1GHz 8th gen i7, 32GB of DDR4 at 2666MHz, an 8GB GTX 1070, a 250GB nvme system disk, and a 1TB SSD for data.

The display is set by default to 4K resolution, but I cranked that down to FHD for the sake of my eyes and the battery. I will try some games at higher resolution at some point, but for desktop navigation, I get more use out of multiple virtual desktops than in massive resolution.

I will comment tomorrow or the day after with the steps I've taken to bend this beast to my will, and it's also important to say that I have not finished yet. This is just a summary, but I should have been in bed an hour ago, so I'm gonna go do that.9 -

Issue reporter:

Feed from external service provider is overwriting the wrong things, pls fix

Resolution:

Service provider is sending duplicate unique IDs, you need to get with them to discuss

Service provider response:

This is a *RE-USABLE UNIQUE ID* . . . The bottom line is you should not use this UNIQUE ID as a primary key.

21 -

Gotham...

why, oh God, why do you have a scene in SE01 E17 at 9:20 min into the episode, where

J.Gordon uses reading glasses to a screen of an old B/W TV and magically is able to read a logo brand of a jacket.

How did the glasses add hundreds of more pixels to the resolution behind them.

This has ruined it for me, not watching now. Even Mission Impossible where they say "use DDOS to take over their systems" is better than this.7 -

!rant !!question !!rantNow

Stackoverflow and the internets has failed me, so I thought maybe someone here could throw a pointer 🤞

Has anyone managed to get a hackintosh working in VirtualBox 5 with a decent screen resolution beyond 1024x768?

I’ve tried the usual vboxmanager ways of setting the gopMode and customRes but the damn thing won’t budge.

I’m starting to think VMWare may be the way to go for this thing😪2 -

Start with your new year's resolution now!

So you'll have a headstart over other parallel universe versions of you, that are way better than you anyway.6 -

A 27" monitor with a 1080p resolution is a match made in hell. Oh the eye bleach. (At home I got 30" with 2k or 1440p resolution. Much better viewing experience.)

I'm having my first day in the office in the new job after two weeks of remote work. I think I will definitely prefer working from my home office (even though the office coffee machine is nice).

It doesn't help that the internet connection in the office is 100 Mbit and at home I have 1 Gbit.9 -

I made a New Year's Resolution to take more of an interest in my Internet privacy. Feel like it's something I should have done a long time ago. I've stopped using Google search (DuckDuckGo instead), moved away from my Gmail account (Tutanota instead) and stopped using Chrome (Firefox/Firefox Focus instead). I've had my Gmail account since they first announced it and you could only sign up if someone invited you. It felt good to delete 7000 emails and what I estimate must have been 13-14 years of Google/YouTube searches. Currently experimenting with VPNs, considering paying for ProtonVPN soon.9

-

As promised, here is an update photo! Just bought some nicer BenQ displays and a quad VESA mount to hold it all. My RX 460 can barely keep up, so that's the next thing on the upgrade list.

Full resolution: https://ibb.co/jP2Pue

5 -

That's it. A year just passed, and here i am sitting in my dorm, watching from my window all those fireworks blazing, exploding, dazzling the sky.

I got no one to party. No one accompanying me.

That's all. Happy new year all!5 -

Merry 2018 in advance.

If it aint a secret, comment w/ your new years resolution so other lazy fucks can get motivated.

Mine: a 3d game (godot), releasing my data science java libraries, building some kind of robot8 -

So what are your new year's resolutions?

Mine are:

Learn C,C++ and Node

Organize my time to do more with it

And code5 -

While I was working on a university project with my team, a teammate asked me why the window of the program in my screen was bigger than in his. I simply answered him that his screen was a FullHD one that had a 1920x1080 resolution, while mine had a lower resolution, and he was like "Noo! This isn't a fullhd screen, it's not so sharp".

So I showed him the "1920x1080" sticker right below his screen, and him again "Yeah, it could have this resolution but definitely it's not a FullHD screen".

- Ok, as you say...

The same guy two days ago was talking about creating a GUI in C.

I told him that C was the wrong language to build programs with a GUI, although there's some very old libs that allow you to do that in 16bit.

And him again: "Ok but Linux (distros) do that and the UIs are great!"

- Do you think that all the fucking Ubuntu/Mint/any distro code is written in C??

The funny thing is the arrogance with which he says all these bullshits.

P. S. We are attending the 3rd year of Computer Engineering.6 -

I'm about to ditch full-time Linux.

It's the little things honestly. Display resolution goes nuts when connecting or disconnecting from external displays, Bluetooth headphones suddenly aren't found anymore. I spend hours trying to fix things but often get nowhere. I love the environment, but there's just not enough convenience that I used to get with Mac or windows. This morning, pop os that I've been using for months updated and then wifi && ethernet didn't work. So I decided maybe I would switch to Mint since it's got more support. Internet works but same Bluetooth and display problems. Idk.

Someone talk me off of this ledge.11 -

i submitted my first app to the app store yesterday. i built it with flutter, and it's already on the play store.

for some reason - i probably created the app wrong - the app store connect/portal needed screenshots of my app running on an ipad pro. now i don't have an ipad pro, and i can't use an emulator because i don't have a mac for that matter (i have been using codemagic.io for builds). i called friends - they don't have any ipads.

what did i do?

i turned my phone sideways and scaled it to the ipad pro resolution.

it is currently "in review"

🙏let's hope this works8 -

Smartphone manufacturers these days, imagine how meetings to come up with ideas for new products go about.

Product manager :ok people,what can we do to make our next smartphone 'different'.

Employee 1: let's add more cameras

Employee 2:Let's kill the notch

Employee 3:Let's include the buzzword AI in all of our marketing

Employee 4:Let's put 8Gb of Ram in our phone

Employee 5:Let's just do all of those things and also give it a screen with a ridiculous aspect ratio and unnecessarily high resolution.3 -

Most useless premium laptop feature: touch screen.

For my new Lenovo I saved hundreds of dollars because I opted for the second best screen option. Lower resolution WQXGA (2560 x 1600) 165Hz, because the 4K touch enabled fancy schmancy screen of my current Dell XPS 15 has barely been used. I keep Outlook open on it FFS, and I can probably count on one hand the number of times I have used the touch feature 😖

Is it just me?8 -

Really wish we would see some OLED equipped laptops, we have massive 4K OLED TVs and tiny high resolution smartphone displays, why can't we just get a nice 1080p OLED panel, makes so much sense in a laptop IMO3

-

"We need this project done by friday"

When:

Requirements changing on a daily basis.

No standards whatsoever, anywhere.

5 different people committing changes with no code review.

Original team leader quit a month ago.

Current team leader doesn't know our own deadlines.

QA looking at layout through a microscope at every single possible resolution. (please move this 2 pixels to the left between 934px and 936px range)

QA being too vague some times (this looks weird some times)

Same thing being changed back and forth because no-one could agree on how exactly should it look.

PM implying at every chance that I did nothing and what little I did broke everything all the time.5 -

I work as an intern in a big company. There is a person who joined the company recently with about 6 years of experience in other big companies. He can't do simple things like adjusting his computers resolution and raised a request for a new monitor. He used to wonder why his request was denied 😐 later when I got to know this had happened, I went and fixed the resolution, he was so fascinated.. Hmm...1

-

This is why you should make a knowledge database and never trust the internet to keep things

Two quality rants with a lot of useful information I favorited are missing, surely because their authors removed their account

Lesson learned. One more resolution to apply for 202112 -

My plan/resolutions for 2018?

Have at least 1 commit per day w/ maybe exceptions like special holidays and such 😁

(No commits just to have a commit like adding a line of commentary or stuff like that)

#iwannabetheguyshetellsyounottoworryabout -

How to LAN -Party:

1: PC is starting normally, resolution fucked up, restart

2: booting for 10 minutes, restart, 2 times

3: repair tools open, 30 minutes system recovery

4: finally PC starts normally, everything works except the game I want to play-.-1 -

One more time.

ppi != resolution != size != aspect ratio

ppi is a measurement of sharpness.

Resolution is the number of pixels (width x height).

Size is width and height measured in units.

Aspect ratio is the... ratio of width to height.2 -
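To put numbers on the ppi vs. resolution vs. size distinction in the post above, here is a minimal sketch. It assumes the usual definition of ppi as the diagonal length in pixels divided by the diagonal length in inches; the function name and the two example screens are made up for illustration.

```python
import math


def pixels_per_inch(width_px, height_px, diagonal_in):
    """Pixel density: diagonal in pixels divided by diagonal in inches."""
    return math.hypot(width_px, height_px) / diagonal_in


# Same resolution and aspect ratio, different physical size -> different ppi.
laptop = pixels_per_inch(1920, 1080, 15.6)   # hypothetical 15.6-inch laptop panel
monitor = pixels_per_inch(1920, 1080, 24.0)  # hypothetical 24-inch desktop monitor

print(f"15.6-inch 1920x1080: {laptop:.0f} ppi")  # about 141 ppi
print(f"24-inch 1920x1080: {monitor:.0f} ppi")   # about 92 ppi
```

Both screens report the same resolution (1920x1080) and the same aspect ratio (16:9), yet the smaller one is noticeably sharper, which is the point of keeping the four terms apart.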

I am pulling my hair out on ducking low level stuff. This is why people (more importantly me!) should have the chance to learn, rather than assume how things work.

Does anyone have detailed resources on how linking objects into shared libraries really works? Especially name resolution. All those ducking tutorials and bloody blog posts just have simple examples and don't explain shit in detail!

Even the ducking man pages on gcc/ld don’t help me out! Maybe I’m too dumb to type the right words into my search engine. I’d even love to read a bloody paper book.16 -

Minimum resolution supported on the project is 1280px

Learn that Product Manager is selling it as responsive

*sigh 2

-

God damn it. I have not ranted enough this year. I need to ramp up my rant game. This could be my new years resolution.

Rant more!!!6 -

TFW you google an error and all you get are GitHub issues with rambling conversation and no resolution. Marginally better than having no relevant responses IMHO.1

-

My 2018 goals:

1. Graduate from the Deep Learning Nanodegree.

2. Get better at Python.

3. Learn C++.

4. Learn more about Machine Learning and AI.6 -

When a fellow dev doesn't use their Thunderbolt Display because the resolution isn't as good as their MacBook Retina display. Kids these days....1

-

Well, this is bad. How can Mozilla say this is for better security? Shit 💩💩💩🖕🖕🖕

https://blog.ungleich.ch/en-us/cms/...4 -

2020 seems to be the year of the "dev who has never seen scale."

TypeA -> "Here's a reasoned explanation for a change I think we should make. Here is the current deficiency analysis, here is the desired resolution, here is the course of action and all calculations leading to the resolution + data. This will have x,y,z beneficial result according to our operational metrics."

TypeD -> "Those were words. Why do you need that? Change is bad, learning is worse. This will just slow me down, development speed is all that matters; there is no chance that a poorly considered/factored/checked design could ever require a ground up rewrite or fuck us utterly in the long term. Why do you make my life harder? We could x -> y -> z BUT I haven't done the math and I really don't see the benefit in x, so z is pointless. What even is scale?"

The consequences of the war caused by the ever-widening gap between engineers and developers are low-key terrifying.12 -

Multi-Screen problem: So I need to run a VR headset with a laptop, and the laptop has only one hdmi connection. I don't have any extra hdmi adapters, so I cannot connect my second screen while working with the headset, which sucks...

but...

then it hit me...

there is an app called spacedesk which allows you to use your phone as an additional screen. I have a docking station for my phone so I can connect hdmi to it. On the first try the resolution was shit since it uses the default phone resolution. But the phone has Samsung Dex, which allows you to run everything full screen on your connected screen, so I can run the app within Samsung Dex and therefore will get full resolution.

And this works. It's kinda stupid and maybe a bit complicated, but it works. God, I love technology :D:D:D

This is the adapter to adapter to adapter to adapter meme in action, just wireless. Lol. I'm proud of this xD5 -

For my employers: Just go fuck yourself with your greed. I'm gonna start my own business and fail till it succeeds.

Signed,

A pissed overworked developer who doesn't give a flying fuck about your shitty product6 -

Me: (Talking to new recruits) "Remember, you should only ever work on one project at a time. The different requirements, complications, and resolution times will fuck you over. That's the last thing you need, being new to the team and all that. If the client needs more man power, then-" (you get the idea)

Also me: 3 monitors and working on 4 projects. *Sips coke*1 -

!rant

What's your new year resolution(s) for 2018?

What do you like about devRant?

Which area do you think devRant can be improved?

// I got asked those questions last night at one of the project dinners.

// So now I am asking you guys here.

// I have replaced the project name with devRant for us.

// My answers in comment.3 -

I am so fucking tired of sitting here all day every day adjusting paddings and margins. Oh fucking hurr durr you got one of the millions of fucking elements to not overflow on your page, well does it work on *this* resolution and *this* orientation? No, well fix that and then go back and fix what it breaks.

I swear to God I never want to touch fucking CSS again it's all I've done for a year and it is driving me up the god damn wall. This is my career, I shouldn't fucking dread coming in to work because I know how much bullshit I'll have to deal with. It's awful.

I don't get how anyone has good looking complicated pages that just look good on every possible resolution, it's fucking mind boggling that anyone can sit there and adjust heights and widths and paddings and margins and floats for hours on end nonstop just watching shit get broken and fixed and broken and fixed and AHHHHH

I need a fucking smoke and a pint just so I don't have to think about this anymore13 -

Resolution for April : I should get started with the Udemy courses I have already paid for.

*** goes to Udemy site, sees new courses for $10 **

Ok, I guess I'll buy these two courses - they seem to be highly rated, i can always catch up on studies later. 🎓1 -

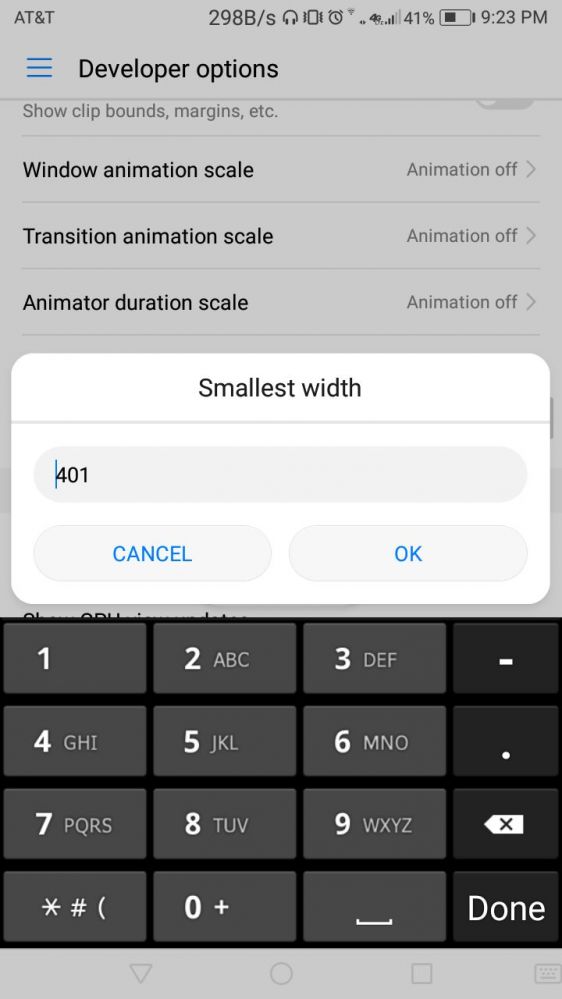

Just found out my phone (stock, no root) has user-settable minimum DPI? The battery saver thing drops the DPI some and auto-adjusts the DPI and resolution to save power, but I didn't know I could set it... (Minimum 320 DPI, max 960 DPI)

6

-

So I just installed Windows 10 and decided to download GeForce Experience and got this through Microsoft Edge.

I tried Opera, same result. My friend told me to zoom out with Opera and then suddenly it worked. WHY?!?!? Since when is a newly installed Windows with bad resolution a mobile device or something? Can you please explain? 11

-

Isn't it beautiful? It's a DS game. I LOVE it when they use the lowest resolution textures because of hardware limitations, yet use real 3D. I want to live in this picture. It feels like home.

2

-

New Year's resolution: no more tech support, period. No one appreciates it, and if anything breaks in the future you're expected to fix it. Lol, no thanks2

-

Had a teacher that taught the intro to programming course by youtube videos. He wanted us to build the same app he was making in the videos as the assignment. He kept his code snippets private and expected us all to type it all out. He was on a 1080p resolution desktop... but his videos were 360p resolution with 8pt font.....

-

I got a few resolutions for this year.

-Releasing the app I am developing

-Learn more about devOps

-Learn machine learning

-primarily, pass out from this college without hurdle -

Finished my first PC build! Feels good to actually finish a new years resolution lol now I just need to finish my sass framework and get through that udemy python course

2

-

UPDATE: I redesigned my entire app and resubmitted it: it got rejected again for not having good resolution on iPad. My app literally doesn't make sense to use on an iPad... What should I do? I tried incorporating autolayout but that is honestly the hardest and most annoying tool Apple has created.8

-

- finish mEMeOs

- learn about cryptography and finish dogecrypt

- finish translation iteration and get it to 2500 unique visitors on awstats

- make a good, solid android app

- tell people i know python (i dont😉)

I guess that’s it ¯\_(ツ)_/¯

I kinda wish there were more…2 -

I apparently hate myself and have volunteered to help an author I enjoy design his website to be more mobile friendly. Convertri sucks ass, if anyone is wondering. Their mobile "converter" is shit, and does NOT make things pretty, at all. No matter what size or resolution we use (because he's trying to learn), it loads like we're back on AOL.

Other than switching sites, any suggestions? Our issue is legitimately only with getting the background image to work on desktop and mobile.3 -

Having to join an emergency meeting to discuss progress of an urgent resolution the very attendance of which just delays the fix. Then repeating the meeting every 30 minutes.1

-

Why didn't I use 2880x1620 resolution on my zenbook before?

Jesus, it feels like I got so much more space and it's pretty awesome

tmux + i3wm feels even more awesome4 -

As much as I like Windows (now) the scaling on high res display is sooo awful, so now I am using a resolution of 1440 x 900 on my 2560x1600 retina display o_O1

-

My laptop has a 14 inch 1080p resolution display. None of the desktop environments scales correctly. Most of the time the font will be too small or webpages in Chrome look weird. I want the display to look like it does on Windows 10. I really want to switch to Linux (GNOME) but this is bugging me.13

-

So I shouldn't have written that Linux issue rant. While I'm spending whole fucking day to figure out how to fucking set my fucking secondary monitor to full resolution, the fucking WINDOWS of my designer's PC got BSOD and failed repair.

fuckingShit fucktard full of fuck operation systems with their full of fuck bugsssssss 😠😠😠😠😠

I'm so fucking mad 5

-

I just want to play my bullet hell games and watch panel shows at the same time, but nooooooo. Windows needs to push all windows all the way to the right to a seemingly non-existing monitor. And I've tried all the "Adjust Desktop Size and Position" options there are with absolutely no luck.

What makes it even worse is when I google the issue I get tonnes of solutions like this: https://superuser.com/a/420927

"Just set the games resolution to your monitors resolution", which I can't!

Oh but I can use ResizeEnable to make it possible to, well, resize the game in windowed mode, which used to work great but has since begun to make the game stutter :< 5

-

Finally got a few cables and now I can connect my laptop and even my cubietruck (device like a Raspberry Pi) to the CRT TV which was lying unused. Thinking of setting up a cast service to watch movies on it. I know the resolution will be poor, but it's still worth a try ☺️

2

-

The thing I hate most is when I want to pay for something but can't because of system or technical problems. Can you imagine how much money you are losing? It's in your interest; you should do everything possible to earn money. I can't flip out only to make you happy. Come on bro. I wanted to buy a thing; the website has a mobile version, but on the mobile website I can't use a credit card. And if I switch to the desktop version, the website detects the screen resolution and redirects me to the mobile version anyway. Are you kidding me?1

-

Everyone out here talking about Arch and the setup process. My first experience with Linux was with Slackware Linux. Zero dependency resolution.. Holy shit what a ride that was for my first foray.1

-

Website for a gallery that wanted a 10k website for just 1k ... Must haz super CMS so that they could send me word documents each week so I could manually copy paste that shit into the CMS, and somehow extract and restore the lowres embedded images in full resolution ...

I guess it was safe to say this was my first client from hell 😅😂😂😂

The second was a website for some ballroom dude that got my referral from gallery X ... Yup, same shitty type of client3 -

Q for Linux dudes ... I have a 15.6" laptop with full HD resolution ... Is there a way not to go blind?8

-

Wishing the devRant family a very happy new year. My New Year's resolution is to finally start working on the side project I have been putting off for the last 2 years. This is the year I finally start it and finish it off.

-

If a bug is logged don't mark it as resolved unless you commit that resolution.

Also don't mark it resolved without at least a one-liner of the fix/expected behavior.1 -

!rant

Hey guys...

It's finally working.

Just needed to upgrade the drivers.

Using RGBL + 3 28BYJ steppers.

Just have one tiny problem and need help...

The resolution isn't right... Instead of moving mms, if I send a move of 64mm (one stepper revolution) it just turns one time instead of moving 64mm... Where are the settings to change this?

Btw this is the best birthday present ever, from me to me :-D also... My parents bought me a 3D printer, waiting for it to arrive... Me happy today 31

-

I have seen a developer implement lots and lots of breakpoints. For basically every pixel resolution a new one. Yes, it’s literally pixel perfect. No it isn’t maintainable in any way

-

Because my new computer has had no internet connection yet, windows couldn't install drivers to display any resolution above 1024x768.

That looks silly on my 1920x1200 screen.5 -

So, 1920x1080 hey !

The perfect fucking resolution !

you want 12 columns with equal gutters? FUCK YOU!

you want 12 rows with equal gutters? FUCK YOU MORE!4 -

My key ring :)

An old friend (remember the guy who had a miniature Red hat?), gave me an old RAM from a work machine (he worked in data center team).

We had many spare ones so, I picked one and been using it since then.

Photo in comments because dR is fucking up the resolution.5 -

Most actual GraphQL explanation:

1. Still uses your xhr/fetch/axios on FE

2. Just sends all the requests to single endpoint

3. On BE uses its own resolution schema to call proper controller to handle the request, rather than relying on router for that

That's all!
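The three points above can be sketched as a toy dispatcher. This is not the GraphQL query language or any real GraphQL library: the request is a plain JSON object, and `RESOLVERS`, `resolve_user`, `resolve_rants` and `handle_request` are hypothetical names invented for the illustration. It only shows the "single endpoint plus a field-to-resolver map instead of a router" idea.

```python
import json


# Hypothetical resolvers, standing in for the "controllers" mentioned above.
def resolve_user(args):
    return {"id": args["id"], "name": "example"}


def resolve_rants(args):
    rants = [{"id": 1, "text": "first rant"}, {"id": 2, "text": "second rant"}]
    return rants[: args.get("limit", 10)]


# The lookup table: field name -> resolver, rather than URL -> controller.
RESOLVERS = {"user": resolve_user, "rants": resolve_rants}


def handle_request(body):
    """Single endpoint: every request lands here and is dispatched by field name."""
    request = json.loads(body)
    result = {}
    for field, args in request["fields"].items():
        resolver = RESOLVERS.get(field)
        if resolver is None:
            return {"errors": [f"unknown field: {field}"]}
        result[field] = resolver(args)
    return {"data": result}


# One request asks for two things at once; a REST client would make two calls.
response = handle_request('{"fields": {"user": {"id": 7}, "rants": {"limit": 1}}}')
print(response)
```

A real server also parses a typed query, validates it against a schema and lets the client pick nested fields, so treat this as the bare dispatch skeleton only.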

Just another useless layer of abstraction with its learning curve, tricks and bugs as ORMs are6 -

Fuck amazon

Bought a 1080p portable monitor

They sent a 768p screen...

What even is that resolution

Just Wtf

And if you put it up on its side like advertised you can't see shit if you look at an angle

Also the fucking cable doesn't fit right and disconnects if you blow at it

Piece of shit - last time I bought on Amazon.3 -

hello brothers (and sisters) in code..

i'm not a front end dev, but i had to create a front end application sometime ago. since i don't really know how to create things, i used the golden ratio in almost everything, to try to get a "pretty" app ..has anyone tried this before, or is it not a good thing? since buttons have to scale based on windows resolution, and space left, was this a good idea?5 -

When you go for a demo to your execs and your low-resolution projector screws up each and every part of your website. #screwed1

-

New year resolution: resume a personal project I put aside for the last 5 years, and keep at it for a while.

5/6 years ago I was pissed that no mobile game uses touch interface to detect "spells" being drawn on the screen and decided to have a go at it. I remember a game of the 90s doing that with the mouse, which was super cool at the time.

I had a working (but ugly) game, but the last 20% to publish the game was more than my motivation allowed at the time and I let it rot.

Let's see if I can get it done this time.3 -

is there a way to render a website at a high resolution (larger than screen) like 8k? Only header-screenshot or so3

-

Get given two asset packs for a project I confirm with project lead, project manager and CTO which one they want to use. They then confirm with client and they all decided asset pack 2. Ok great, 3 months later week before deadline "we need asset pack 1 used instead"... Different resolution, different aspect ratio and now get nagged every few minutes how done is it, and that it's vital we meet the deadline. So close to just walking out that door.

-

Why the industry jumped on Photoshop as a web design and layout tool is beyond me. It's like trying to stir coffee with your thumb. I'm a decent Photoshop user but have always used InDesign in web mode. Far quicker for chucking around layouts and options (as page). It also exports as RGB PNGs, either full pages or selections, with or without transparency (at any resolution), which are perfect for then optimising in Photoshop (Pixelmator these days) or any other less costly image editor. I then hand code my sites in Coda, love it.3

-

Has anyone else noticed these slight imprecisions where the background peeks out at the bottom sometimes? It is pretty hard to spot, only happens sometimes after scrolling. Or is my display resolution just too high?

5

-

As the year comes to a close and I begin my challenge, what are some tips that you guys recommend I do?

3

-

Muscle soreness!

As per my 2019 resolution, I want to hit the gym at least 3 days per week. But I only manage to go once a month. And the day after the gym, my muscles hurt so much that I'm not even able to type when coding.

Anyone like me, who struggled and managed to hit the gym as desired? Any tips?11 -

Soo, our Microsoft is the best on the market.... again... Trying to compile C++ code in Visual Studio 2017. I tried restarting VS but it didn't help..... If I compile this code on Linux it works well... Burn...

Better resolution: https://imgur.com/a/Crv3G 3

-

Why does every monitor with a high resolution have such a wide format, like 16:9 or worse? As a developer I need more vertical than horizontal space. 9:16 is ok for coding but too small for anything else. I want a monitor which is 2:3, 3:4 (rotated 3:2 or 4:3) or 1:1 and has at least 1920x2560. The better the resolution, the more lines I can see at once. I can't make the text smaller than 10 pixels per line.

Do you guys have the same problem?12 -

So after working on a website for like a month to make it kinda pixel perfect in every resolution on every device the web designer just tells me "ok, you should move this whole thing up 30px"

Ok, no problem, I change the CSS for that div and make it all go up 30px

The very next day he tells me the whole thing is fucked up and not aligned any more

I mean, it was all the same as before, nothing changed! -

The very last weekend of the year has arrived.

How has the year gone so far? Any significant memories that you want to share?

Maybe share a new year resolution as well.

Last Weekend: https://devrant.com/rants/1003927413 -

So it turns out that Rust's import resolution is Really Fucking Complicated

https://devrant.molodetz.nl/Screens...

It supports glob re-exports and circular glob imports, conflicts are valid if you don't use them or if they ultimately point at the same name, paths may pass the same module multiple times. It's very convenient to use, I never needed to fight with it, but it's borderline impossible to correctly implement.4 -

Guys, how many monitors do you use at the office? I work with 3 and I would like a 4th, but my desk isn't big enough for it :( Also, I prefer more full HD monitors over big-resolution ultrawides11

-

Today I discovered the secrets of taking HD pictures with a phone:

1. Set to highest resolution

2. Turn on RAWs

3. Take the picture (basic photo taking tips like focus, don't point at the sun, make sure finger is not on lens)

4. Use Photoshop/Lightroom to make corrections and make it look like how you "remembered" it (aka lie)

-

AAAARGHH!!! It happens every now and then that when I open a window in a Windows application, the window opens outside the display area, on a non-existing display to the right of my primary screen. There is no other way to access the newly opened window than to go to "Screen resolution" and swap screens 1 and 2 such that the secondary screen thinks it is to the right of the primary screen (although physically it stays to the left of the primary screen). And then, once I've got hold of the window, I swap the screens back. It's just so incredibly annoying and a complete waste of time. This is starting to really get on my nerves! >:(8

-

*Reading a bug report's summary*

'Object x is displayed incorrectly when playing on PC in resolution 1024x768 or Android tablets w/ 4:3 Aspect Ratio'

*facepalms*

You, sir, are failing at basic math && basic logic, among other things.

1024x768 _has_ an Aspect Ratio of 4:3.
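The check the reporter skipped is a one-liner. As a minimal sketch (the function name and the sample resolutions are my own, not from the bug report), reducing width and height by their greatest common divisor gives the aspect ratio:

```python
from math import gcd


def aspect_ratio(width_px, height_px):
    """Reduce a resolution to its simplest width:height ratio."""
    divisor = gcd(width_px, height_px)
    return width_px // divisor, height_px // divisor


# 1024x768 and a typical 4:3 tablet resolution reduce to the same ratio.
for width, height in [(1024, 768), (2048, 1536), (1920, 1080)]:
    ratio_w, ratio_h = aspect_ratio(width, height)
    print(f"{width}x{height} -> {ratio_w}:{ratio_h}")
```

Grouping reported resolutions by the reduced ratio makes it obvious when a bug follows the ratio rather than one specific resolution.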

If only you had bothered checking, you would've known that the issue is purely related to the Aspect Ratio && !just that one resolution.5 -

Things to do in 2020:

- Unsubscribe from Python reddit

- Unsee what you have witnessed over the aforementioned sub

- Figure out why VSCode is ‘...so the best...’ editor whatever that means

- Unsubscribe from any dev related sub5 -

One year ago I made a resolution to do one of two things: get serious about learning neural networks, or finish one of the side projects (markdown based wiki with some nifty features). Didn't do the first one, and got the second one to about 50%.

Not really happy as I did not complete any goal. Still some decent work was done and built an open source parser. So, I guess I am 50% happy.

What were your achievements this year? Did you achieve 100%?3 -

Last week I was working on a web development assignment (php and bootstrap).

I had a few problems but the most annoying was that bootstrap didn't want to apply to one specific element.

I sat there, looking for the error for about 2 hours. When I finally found it I was raging inside. I mistyped a - instead of an = after the class attribute (i.e. class-"Foo").

The mistake was hard to spot because of a combination of the font and the low screen resolution - 2 hours wasted because of that shit -

When the screen resolution of your laptop and tower pc are completely different and you can't get used to fast workflows because the UI changes every time you switch devices.

-

I fucking hate those 2K 4K resolution designations. What kind of marketing shit is that? I understand putting that on entry level (or 'gaming') stuff, but the cancer seems to be spreading...

-

so apparently devrant has an image dimension limitation~

compressing it from a 9mb one to 2.7mb still gives an error on jpg with the resolution 4156x2258!

@dfox is it just me or a random bug?13 -

I have dnsmasq running on my laptop to speed up dns resolution, never been so glad I didn't turn my laptop off. cause I'm one of the few actually watching this hack and wondering what kind of system is going to be thought of to replace dns

-

So I finally decided to take the plunge to dualboot my Windows 10, since I'm using Linux applications more and more than Windows applications.

I just had to choose Fedora out of all distros. It sort of worked. When I tried to install, it wouldn't get past the login screen (kept getting blanks). I rebooted several times and went with "Troubleshooting" and it got me past the login screen and proceeded to install at the lowest graphical settings, i.e. 800x600

So far so good, I was able to operate stuff that I wanted but I just can't stand working in a really low resolution. My guess is probably incompatibility with nVidia driver. Tried everything, rpmfusion, the negativo17 repo, the current official fedora repo, the If-Not-True-Then-False guide, and bumblebee. None works.

Makes no sense at all. Luckily my Win10 still works. Now I'm stuck on whether to continue trying to get Fedora distro up or try a different distro and start back from square one...3 -

web devs... what are the common screen sizes you use for responsive design? I used the bootstrap grid, but there are some tablets whose resolution is higher than a normal computer's, so the tablet is treated as a computer. Is there any CSS-only way to tell desktop, tablet and cellphone apart?4

-

*shows a demo with bootstrap to PM*

PM: This is shit

So we stuck with the current version by a designer. That version crashed when....you change the screen resolution on a PC.

Thankfully that was long ago. -

People that aren't into IT and act as if they are the best technicians in the world... People that try to set the same Windows resolution on two different monitors...

-

My next product will be eternal, like 1366×768 screen resolution. Heck, eternal like Python 2! Or like JavaScript itself. Either way, you may hate it all you want, but when it's out, y'all gonna shit your pants. NOBODY ever did what I'm about to do. I'm about to make me a living _and_ prove a point.2

-

new year's resolution: create a new healthy diet plan and then break it. Hey, if I cant fit into the diet then I'll make the diet fit me.2

-

Man, at the rate I'm going, I'll have to put a bug I found back in April on my new years resolution list...

Sad thing is, as we all know, new years resolutions never work out... So maybe this bug will never be solved :S -

!rant

So I went through this exercise maybe a year and a half ago and didn't get anywhere. But I'm sure with the help of fellow ranters I might get somewhere :D

What Macbook alternative laptops would you recommend? Criteria would include: Screen with similar resolution (2880x1800) and quality, built-in SSD, 15", 8GB Ram, i7 and hopefully not a multi colored body or keyboard... If it came with Linux you win.11 -

fellow ranters, i need your advice!

i'm searching for an ultra portable laptop:

- 11" screen

- full HD resolution

it will run Linux (Kali or other debian based distro) and i need that just to do some work while i'm on the go. I don't need huge performance, so the budget is quite limited (~300 EUR / 350 USD).

is the Thinkpad x220/x230 still the best choice (even if the screen resolution is not full HD)? any other suggestion?

thanks!8 -

My graphics card is only able to play video on YouTube with max resolution 720p. If I choose 1080p then I get less than 1 frame per second playback.

This is disappointing; seeing that Radeon 5600 XT graphics card can only play videos in 720p. I am pretty sure that poor performance is caused by Radeon graphics driver; there are no other problems of any kind on my system.

Radeon software team = 🤡🤡🤡

The bugs without end need to be stopped. AMD software deserve their bad reputation 100%11 -

Anyone here uses scaleway VPS?

The tickets I raised got deleted without any proper resolution. And that is shady AF. The tickets were handled by some customer support guy who told me he would call to verify. But that never happened.

And now all the tickets I raised have disappeared.

I can't activate my account because phone verification is not possible, since the code they send never arrives3

after installing and configuring NGINX + uwsgi + emperor for 10+ hours, the final resolution is... reinstall OS... wut?

-

Have you ever used Ubuntu (or any Linux that uses Pango to render text) for a significant amount of time, so that you notice that text in Mac OS is just not that crisp? To the point that it looks blurry, even more so on a non-retina display.

I want to justify buying new Macbook for that sweet M1 silicon, but I don't think I can live with the blurry text. I rarely use built-in display on a laptop, but it is hard to find external monitor with Retina resolution (200+ dpi) with decent size, or ultrawide for that matter.2 -

So I finished a side project at a cost of my company time. Now I'm panicking to get shit done before I get fired but also glad that I finally made something in 2018. THIS FEELING IS SO CONFLICTING!!!

-

mid-year resolution:

Invest a lot of my time on stack overflow in order to raise my reputation in the coming 6 months. -

FEAR OF DATA LOSS

Resolution => backupS

1: external drive (or NAS)

2: cloud storage (maybe multiple services)

3: git repositories

After all these backups you are kind of demigod of data recovery...8 -

New Year Resolution:

Keep my files organised. Thinkpad laughed at me saying how about finishing last year's resolution of keeping your Desktop organised.

Me: F**K it -

Goddammit,

today, my laptop crashed while shutting down.

I just switched it in again, and boom, Hostname-Resolution isn't working anymore.

I also already checked UFW, iptables and the hosts-file.

Guess I'll reinstall it tomorrow.2 -

Ha, Microsoft closes my easily reproducible issue with their Monaco editor as 'resolved':

> posted "resolution" is totally wrong, it's not even in the TypeScript typings of their own library or anywhere in the documentation

jesus christ today is not my day

seems like everyone already started chugging the Christmas eggnog, maybe I should just give up and start doing the same1 -

I'm not managing Orchid in terms of performance milestones so these posts and their order are probably incredibly bizarre.

Anyway; demo for lexer plugins, foreign code provided constant resolution, foreign function dispatch, and clean shutdown. 7

-

New Year Resolution..

Discussion on random topic

Read at least one new topic a day

Innovative ideas

Build something interesting -

Virtualbox 6 sucks!

So i'm on a mac and i need some linux goodness on the quick. But the screen resolution stays at 800x600px! On my high dpi screen it's so tiny i need a magnifying glass to do anything on the guest os. And i can't change the resolution nor scale it up for the life of it. Never had that before. Really frustrating!!! 😤5 -

laptop suggestions please:

- 13-14 inch screen

- min 8 gb ram (preferably 16)

- 128 gb storage

- i5 or i7

- 1200 x 800 resolution

- can run linux (no driver issues)

- can run flutter, android studio, and emulator

- $500 or less5 -

Am I the only one who believes Mac should just stick to 1080 resolution? It makes everything much better3

-

So let me get this straight.

- You propose a tutorial about how to create an e-commerce website using Laravel, with a shitty powerpoint-like display

- We have to click "next" to learn the basics of PHP, MVC, Laravel, Mix and such, while all I need to know is about the payment system and global logic

- On the last PowerPoint, you suddenly decide that you should finally think about displaying some actual code while everything before was theory class

- So the last powerpoint page is 45 times longer than any other previous pages.

- Of course, there was nothing about what I was looking for.

You should consider not wasting everyone's time as a 2018 New Year's resolution.1 -

Thought the confinement wouldn't bother much

Until I noticed I'm missing a freaking decent VGA cable for my PC screen — the only one available at home allows a maximum resolution of 480x800

And of course can't buy or borrow one

Gosh, I hate being unlucky2 -

I'm developing an add-on for real estate management, and I spent almost 2 months trying to work out how to reconcile accounting accounts depending on the owner, whose tax resolution can vary. After many nights and tears, today I solved it in less than 2 hours. 😒🤯1

-

I have 6 different caching folders now

because rust path resolution, depending where you launch from.. if it's debug or test or vscode or command line... or what directory were you in in the command line?!

well it decides to resolve the location differently

and how is it nobody thought this was ridiculous2 -

So as part of my New Year's resolution, I thought I'd build an app, and I thought I would build it in Vue.js and NativeScript with a shared codebase. I've started with a fresh project and already I have an error, one that I didn't cause. It's in router.js; see if you can spot it.

module.exports = (api, options) => {

require('@vue/cli-plugin-router/generator')(api, {

historyMode: options.routerHistoryMode

};

export default new Router(options);

}

Once I've fixed the syntax error, I then get this error:

/src/router.js: 'import' and 'export' may only appear at the top level (5:0)

This is when I run "npm run serve:ios"

If anyone has encountered this, please let me know how you fixed it.2 -

1. Be lost in thought less.

2. Listen to people more attentively.

3. Read 3 computer science books.

Note: Last year my resolution was to read 3 computer science books and I'm proud to say that I read 2.5 computer science books. Didn't fully reach it but 2 and a half books in a year is damn better than 0. They were: "Clean Code", "The Mythical Man-Month", and half of "Algorithms 4th Edition". -

Why must pixel-perfect scaling be so fucking hard... It all works perfectly but I'm limited to a max of 540p for the game's camera view, but as soon as you drop your resolution below 1080p you get stuck with 300p or lower... Ughhhhhhhh

Why did I choose to do pixel art... Wonder if converting everything to vectors would help, wonder if smashing my head into a fucking wall would help... -
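A rough sketch of the usual integer-scaling arithmetic behind the rant above, written in Python only to keep it engine-neutral. The function names and the 960x540 and 320x180 base sizes are made up for illustration; the post doesn't say which engine or base resolution is in use.

```python
def integer_scale(base_w, base_h, screen_w, screen_h):
    """Largest whole-number zoom at which the base frame still fits the screen."""
    scale = min(screen_w // base_w, screen_h // base_h)
    # Clamp to 1x so a screen smaller than the base frame still gets a valid scale.
    return max(scale, 1)


def letterbox(base_w, base_h, screen_w, screen_h):
    """Return (scale, offset_x, offset_y) for a centred, integer-scaled viewport.

    Offsets come out negative when the screen is smaller than the base frame,
    meaning the frame would be cropped rather than bordered.
    """
    scale = integer_scale(base_w, base_h, screen_w, screen_h)
    out_w, out_h = base_w * scale, base_h * scale
    return scale, (screen_w - out_w) // 2, (screen_h - out_h) // 2
```

With a 960x540 base, a 1600x900 screen only manages 1x with black borders, whereas a 320x180 base would reach a clean 5x on the same screen. That gap is probably why a smaller base resolution tends to be less painful for pixel art.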

Any word for feeling happy, stressed and accomplished all together at a time?

Story - Solved a major bug after digging into decompiled code, stepping through each line for almost a day and a half, and later figuring out that it requires just one line of change? -

I tried to make a post with a picture, but it did not work. I even scaled it down to 25kb and decreased resolution heavily. Tried png and jpg. The hell is it not working from desktop and from a phone?2

-

Anyone else planning to raise buried projects from their grave on New Year's Eve....? :P

P.S.- might come up with a resolution or two while at it!1 -

Text appears a gazillion times better on the external monitor when I am running Windows 10 on Boot Camp compared with when I am running macOS.

Monitor settings (like resolution and size and connection) are exactly the same in both cases. 🤷🏻7 -

Just realized that my Fujitsu Lifebook S752 doesn't have a webcam.. if I google for pictures of it, it seems to have one.. anybody know why? Can't find a resolution

-

My New Years resolution is to learn at least one new language.

Post what you think I should learn and I will give you a language to learn this year. -

Any recommendations for decent 13 inch laptop under 1400$ USD

- At least 8 gigs of RAM and option to upgrade

- resolution >= full HD

- storage >= 256 GB SSD

- battery >= 6 hrs heavy chrome usage

- Linux compatibility

- Cpu- nothing heavy - browser and docker containers, no building or compiling15 -

Why did the computer programmer get in trouble with his girlfriend? Because he kept trying to "Ctrl+Z" their arguments, but she wanted a real resolution!4

-

I made a little automated Docker reverse proxy called Autocaddy to simplify developing unrelated little trinkets under subdomains of a domain name:

https://github.com/lbfalvy/...

It dispatches subdomains to the (container with the) matching network alias and terminates TLS.

it's a little rough around the edges but to my understanding it shouldn't be an inherent risk (unless you're running things that interfere with name resolution like VPN on the container host, but why would you do that if it's already a container host).4 -
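For anyone curious what "dispatch subdomains to the matching network alias" could look like, here is a minimal Python sketch of just the name-matching step. It is not Autocaddy's actual code: the function name, the single-label rule and the example domain are all assumptions made for illustration.

```python
def alias_for_host(host, base_domain):
    """Map a request's Host header to a network alias, or None if it doesn't match.

    Assumption: one subdomain label maps to one container alias,
    e.g. "blog.example.com" -> "blog".
    """
    # Drop an optional ":port" suffix and any trailing dot, and ignore case.
    host = host.split(":", 1)[0].lower().rstrip(".")
    suffix = "." + base_domain.lower()
    if not host.endswith(suffix):
        return None
    label = host[: -len(suffix)]
    # Reject the bare domain and nested names such as "a.b.example.com".
    if not label or "." in label:
        return None
    return label
```

A proxy built this way would then forward the request to whatever the container network resolves that alias to, which is why anything interfering with name resolution on the host matters.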

Anyone know how to get by the "Out of Memory" error on Android Studio when an image you have is like 102941092301mb too big and it crashes your app when you debug?

pls I need help, this image looks terrible when it isn't the resolution I would like...7 -
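The usual way around this on Android is to decode a downsampled copy instead of the full image, using `BitmapFactory.Options.inSampleSize` after reading the dimensions with `inJustDecodeBounds`. Below is a Python sketch of only the arithmetic for picking that power-of-two factor; the function names are hypothetical, and the 6000x4000 source and 1080x720 target are assumed numbers, since the post doesn't give any.

```python
def sample_size(src_w, src_h, req_w, req_h):
    """Largest power-of-two divisor that keeps both sides >= the requested size."""
    size = 1
    # Keep doubling while the next halving would still cover the target area.
    while (src_w // (size * 2)) >= req_w and (src_h // (size * 2)) >= req_h:
        size *= 2
    return size


def decoded_bytes(src_w, src_h, size, bytes_per_pixel=4):
    """Approximate in-memory size of the decoded bitmap (4 bytes/pixel for ARGB_8888)."""
    return (src_w // size) * (src_h // size) * bytes_per_pixel
```

For the assumed 6000x4000 image and a 1080x720 view, this picks a factor of 4, so the decoded bitmap drops from roughly 96 MB to roughly 6 MB while still being larger than the view it is shown in.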

My New Year's resolution as a dev:

1. Competing in Kaggle competitions

2. Motivate people in data science

3. Do some cool project

4. Waiting for devRant stickers.. -

New year resolution was to be a better person (or at least nicer) but here it goes.

Monday rant: State your fucking requirements when requesting something as "This is not what I was expecting" is not acceptable.

I code for a living; I don't read minds, nor do I have a crystal ball on my desk telling me "...what you meant..." -

Which is needed more, resolution(1080p/1440p) or refresh rate(60-144hz) for a 27-inch flat monitor? My purpose is web development (Python, JavaScript).10

-

Anyone here have suggestions to actually make DNS resolution slower for a website using only DNS settings/records? Like really slow if possible.4

-

As a retired real estate developer from Essen, Germany, I've dedicated my life to running a charity organization that supports underprivileged communities worldwide. Our mission is to provide essential resources, education, and healthcare to those in need. In 2017, I made a strategic investment in Bitcoin, hoping it would grow into a substantial financial resource to expand our charity's outreach.

Fast-forward to 2024, my Bitcoin holdings had skyrocketed to nearly $900,000 – a life-changing amount earmarked for future projects. Our charity was on the cusp of implementing groundbreaking initiatives, and this fund would be the catalyst. However, disaster struck during a trip to Europe when my phone, containing access to my Bitcoin wallet, was stolen.

Panic set in as I realized I couldn't access my funds without my phone. Despite having taken security measures, I hadn't properly backed up my recovery codes. The gravity of the situation hit me hard: all our charity's future plans, the livelihoods of our beneficiaries, and my personal savings were at risk.

Weeks turned into months as I desperately tried every method to recover my wallet. The stress was overwhelming, and the fear of losing everything was crippling. It wasn't just about my personal finances; it was about the countless lives our charity could impact.

That's when a fellow investor recommended Digital Resolution Services. Initially, I was skeptical – could anyone really help recover such a substantial amount of Bitcoin without access to my original device? But with so much at stake, I decided to trust them and reached out.

From the very first interaction, their team was professional, compassionate, and reassuring. They understood the gravity of the situation and walked me through every step of their recovery process. Their transparency and expertise instilled confidence, and I appreciated how they made sure I was comfortable with their approach.

The days passed, and I received regular updates on their progress. Their team worked tirelessly, employing cutting-edge technology and innovative methods to regain access to my wallet. And then, the miracle happened – within just a few weeks, Digital Resolution Services successfully recovered my Bitcoin wallet, restoring all $900,000.

Words cannot describe the relief and gratitude I felt. Tears of joy streamed down my face as I realized our charity's future was secured. Digital Resolution Services didn't just recover my Bitcoin; they restored hope for the countless lives we touch.

Their expertise, professionalism, and dedication are unmatched. They saved me from what could have been a financial disaster, and I wholeheartedly recommend them to anyone facing similar challenges. If you've lost access to your Bitcoin, don't give up – Digital Resolution Services is the lifeline you need.

Lessons Learned

This experience taught me invaluable lessons:

1. Back up your recovery codes and store them securely.

2. Diversify your investments and consider traditional assets.

3. Seek professional help when faced with cryptocurrency recovery.

Conclusion

Digital Resolution Services is more than just a recovery service – they're guardians of hope. Their miraculous work has ensured our charity's continuity, impacting countless lives. I'm forever grateful for their expertise and compassion.

If you're facing a similar crisis, don't hesitate. Reach out to Digital Resolution Services and let their experts work miracles for you.

Contact Digital Resolution Services:

EMAIL: digitalresolutionservices (@) myself.co m

WHATSAPP: +1 (361) 205-7313

Sincerely,

Mrs. Dolores Whorton

-

Saw this on Reddit just now, but I figured it would be a pretty good repost here.

Happy building!

Edit: image resolution was crappy, so here's a link

https://reddit.com/r/coolguides/... 2

2 -

Best Crypto / Bitcoin Recovery Expert - Reach out to OMEGA CRYPTO RECOVERY SPECIALIST HACKER

When faced with the daunting task of recovering stolen BTC, the benefits of enlisting the services of OMEGA are abundantly clear. OMEGA has an experienced team of professionals with a proven track record of successful recoveries. Their expertise in the field of cybersecurity and digital forensics equips them with the necessary skills to navigate complex cases of stolen BTC effectively.

Moreover, their quick and efficient process for recovering lost BTC sets them apart from other recovery services, providing clients with a swift resolution to their predicament.

Contact Below.......

Call or Text +1 (701, 660 (0475

Mail; omegaCryptos@consultant . c om2 -

One of my office mates said that she has been making the same resolution for the last 8 years... And the resolution is:

To not make any resolution for the year..😎 -

At Cranix Ethical Solutions Haven, we are a team of dedicated experts passionate about helping individuals and organizations affected by cryptocurrency fraud and asset theft. Our mission is to provide a safe haven for those seeking recovery and resolution in the complex and often daunting world of crypto fraud.

EMAIL: cranixethicalsolutionshaven (at) post (dot) com OR info (at) cranixethicalsolutionshaven (dot) info

TELEGRAM: (at) cranixethicalsolutionshaven

WHATSAPP: +.4.4.7.4.6.0.6.2.2.7.3.05 -