Join devRant

Do all the things like

++ or -- rants, post your own rants, comment on others' rants and build your customized dev avatar

Sign Up

Pipeless API

From the creators of devRant, Pipeless lets you power real-time personalized recommendations and activity feeds using a simple API

Learn More

Search - "parse"

-

Hi, I am a Javascript apprentice. Can you help me with my project?

- Sure! What do you need?

Oh, it’s very simple, I just want to make a static webpage that shows a clock with the real time.

- Wait, why static? Why not dynamic?

I don’t know, I guess it’ll be easier.

- Well, maybe, but that’s boring, and if that’s boring you are not going to put in time, and if you’re not going to put in time, it’s going to be harder; so it’s better to start with something harder in order to make it easier.

You know that doesn’t make sense right?

- When you learn Javascript you’ll get it.

Okay, so I want to parse this date first to make the clock be universal for all the regions.

- You’re not going to do that by yourself right? You know what they say, don’t repeat yourself!

But it’s just two lines.

- Don’t reinvent the wheel!

Literally, Javascript has a built in library for t...

- One component per file!

I’m lost.

- It happens, and you’ll get lost managing your files as well. You should use Webpack or Browserify for managing your modules.

Doesn’t Javascript include that already?

- Yes, but some people still have previous versions of ECMAScript, so it wouldn’t be compatible.

What’s ECMAScript?

- Javascript

Why is it called ECMAScript then?

- It’s called both ways. Anyways, after you install Webpack to manage your modules, you still need a module and dependency manager, such as bower, or node package manager or yarn.

What does that have to do with my page?

- So you can install AngularJS.

What’s AngularJS?

- A Javascript framework that allows you to do complex stuff easily, such as two way data binding!

Oh, that’s great, so if I modify one sentence on a part of the page, it will automatically refresh the other part of the page which is related to the first one and viceversa?

- Exactly! Except two way data binding is not recommended, since you don’t want child components to edit the parent components of your app.

Then why make two way data binding in the first place?

- It’s backed up by Google. You just don’t get it do you?

I have installed AngularJS now, but it seems I have to redefine something called a... directive?

- AngularJS is old now, you should start using Angular, aka Angular 2.

But it’s the same name... wtf! Only 3 minutes have passed since we started talking, how are they in Angular 2 already?

- You mean 3.

2.

- 3.

4?

- 5.

6?

- Exactly.

Okay, I now know Angular 6.0, and use a component based architecture using only a one way data binding, I have read and started using the Design Patterns already described to solve my problem without reinventing the wheel using libraries such as lodash and D3 for a world map visualization of my clock as well as moment to parse the dates correctly. I also used ECMAScript 6 with Babel to secure backwards compatibility.

- That’s good.

Really?

- Yes, except you didn’t concatenate your html into templates that can be under a super Javascript file which can, then, be concatenated along all your Javascript files and finally be minimized in order to reduce latency. And automate all that process using Gulp while testing every single unit of your code using Jasmine or protractor or just the Angular built in unit tester.

I did.

- But did you use TypeScript?36 -

Today, I learned the shortest command which will determine if a ping from your machine can reach the Internet:

ping 1.1

This parses as 1.0.0.1, which thanks to Cloudflare, is now the IP address of an Internet-facing machine which responds to ICMP pings.

Oh, you can also use this trick to parse 10.0.0.x from `10.x` or 127.0.0.1 from `127.1`. It's just like IPv6's :: notation, except less explicit.8 -

Conversation today...

Guy: "Hey I need a real quick script to pull some values out of an XML document...is that possible?"

Me: "Uh...yeah that's pretty simple if that's all it has to do."

Guy: "Ok excellent I'll send you some files and documentation."

Me: "Ok so is this like a one time use thing or do you need to parse multiple of these?"

Guy: "Actually it needs to run all the time, on this specific PC, watch directories for any files that are added, then generate a XLSX files of the values, and also log information to a database. Etc"

Me: "Oh that adds quite a bit of complexity from what you originally said. It's going to take more time."

Guy: "But you said it was easy."

Well fuck you...12 -

Probably the biggest one in my life.

TL:DR at the bottom

A client wanted to create an online retirement calculator, sounds easy enough , i said sure.

Few days later i get an email with an excel file saying the online version has to work exactly like this and they're on a tight deadline

Having a little experience with excel, i thought eh, what could possibly go wrong, if anything i can take off the calculations from the excel file

I WAS WRONG !!!

17 Sheets, Linking each other, Passing data to each sheet to make the calculation

( Sure they had lot of stuff to calculate, like age, gender, financial group etc etc )

First thing i said to my self was, WHAT THE FREAKING FUCK IS THIS ?, WHAT YEAR IS THIS ?

After messing with it for couple of hours just to get one calculation out of it, i gave up

Thought about making a mysql database with the cell data and making the calculations, but NOOOO.

Whoever made it decided to put each cell a excel calculation ( so even if i manage to get it into a database and recode all the calculations it would be wayyy pass the deadline )

Then i had an epiphany

"What if i could just parse the excel file and get the data ?"

Did a bit of research sure enough there's a php project

( But i think it was outdated and takes about 15-25 seconds to parse, and makes a copy of the original file )

But this seemed like the best option at the time.

So downloaded the library, finished the whole thing, wrote a cron job to delete temporary files, and added a loading spinner for that delay, so people know something is happening

( and had few days to spare )

Sent the demo link to client, they were very happy with it, cause it worked same as their cute little excel file and gave the same result,

It's been live on their website for almost a year now, lot of submissions, no complains

I was feeling bit guilty just after finishing it, cause i could've done better, but not anymore

Sorry for making it so long, to understand the whole thing, you need to know the full story

TL:DR - Replicated the functionality of a 17 sheet excel calculator in php hack-ishly.8 -

"You mean to tell me that you deleted the class that holds all our labels and spin boxes together?" I said exasperatedly.

~Record scratch.mp3

~Freeze frame.mp4

"You're probably wondering how we got to this stage? Let's wind back a little, shall we?"

~reverseRecordSound.wav

A light tapping was heard at the entrance of my office.

"Oh hey [Boss] how are you doing?" I said politely

"Do you want to talk here, or do you want to talk in my office? I don't have anyone in my office right now, so..."

"Ok, we can go to your office," I said.

We walked momentarily, my eyes following the newly placed carpeting.

Some words were shared, but nothing that seemed mildly important. Just necessary things to say. Platitudes, I supposed you could call them.

We get to his office, it was wider now because of some missing furniture. I quickly grab a seat.

"So tell me what you've been working on," I said politely.

"I just finished up on our [project] that required proper saving and restoring."

"Great! How did you pull it off?" I asked excitedly.

He starts to explain to me what he did, and even opens up the UI to display the changes working correctly.

"That's pretty cool," admiring his work.

"But what's going on here? It looks like you deleted my class." I said, looking at his code.

"Oh, yeah, that. It looked like spaghetti code so I deleted it. It seemed really bulky and unnecessary for what we were doing."

"Wait, hold on," I said wildly surprised that he thought that a class with some simple setters and getters was spaghetti code.

"You mean to tell me that you deleted the class that organizes all our labels and spin boxes together?" I said exasperatedly.

"Yeah! I put everything in a list of lists."

"What, that's not efficient at all!" I exclaimed

"Well, I mean look at what you were doing here," he said, as he displays to me my old code.

"What's confusing about that?" I asked politely, but a little unnerved that he did something like this.

"Well I mean look at this," he said, now showing his "improved" code.

"We don't have that huge block of code (referring to my class) anymore filling up the file." He said almost a little too joyously.

"Ok, hold on," I said to him, waving my hand. "Go back to my code and I can show you how it is working. Here we are getting all the labels and spin boxes into their own objects." I said pointing a little further down in the code. "Down here we are returning the spin boxes we want to work with. Here and here, are setters so we can set maximum and minimum values for the spin box."

"Oh... I guess that's not that complicated. but still, that doesn't seem like really good bookkeeping." He said.

"Well, there are some people that would argue with you on that," I said, thinking about devRant.

He quickly switches back to his code and shows me what he did. "Look, here." He said pointing to his list of lists. "We have our spin boxes and labels all called and accounted for. And further down we can use a for loop to parse through them."

He then drags both our version of the code and shows the differences. I pause him for a moment

"Hold on, you mean you think this" I'm now pointing at my setters "is more spaghetti than this" I'm now pointing at his list of lists.

"I mean yeah, it makes more sense to me to do it this way for the sake of bookkeeping because I don't understand your Object Oriented Programming stuff."

...

After some time of going back and forth on this, he finally said to me.

"It doesn't matter, this is my project."

Honestly, I was a little heart broken, because it may be his project but part of me is still in there. Part of my effort in making it the best it can be is in there.

I'm sorry, but it's just as much my project as it is yours.17 -

I’m surrounded by idiots.

I’m continually reminded of that fact, but today I found something that really drives that point home.

Gather ‘round, everybody, it’s story time!

While working on a slow query ticket, I perused the code, finding several causes, and decided to run git blame on the files to see what dummy authored the mental diarrhea currently befouling my screen. As it turns out, the entire feature was written by mister legendary Apple golden boy “Finder’s Keeper” dev himself.

To give you the full scope of this mess, let me start at the frontend and work my way backward.

He wrote a javascript method that tracks whatever row was/is under the mouse in a table and dynamically removes/adds a “.row_selected” class on it. At least the js uses events (jQuery…) instead of a `setTimeout()` so it could be worse. But still, has he never heard of :hover? The function literally does nothing else, and the `selectedRow` var he stores the element reference in isn’t used elsewhere.

This function allows the user to better see the rows in the API Calls table, for which there is a also search feature — the very thing I’m tasked with fixing.

It’s worth noting that above the search feature are two inputs for a date range, with some helpful links like “last week” and “last month” … and “All”. It’s also worth noting that this table is for displaying search results of all the API requests and their responses for a given merchant… this table is enormous.

This search field for this table queries the backend on every character the user types. There’s no debouncing, no submit event, etc., so it triggers on every keystroke. The actual request runs through a layer of abstraction to parse out and log the user-entered date range, figure out where the request came from, and to map out some column names or add additional ones. It also does some hard to follow (and amazingly not injectable) orm condition building. It’s a mess of functional ugly.

The important columns in the table this query ultimately searches are not indexed, despite it only looking for “create_order” records — the largest of twenty-some types in the table. It also uses partial text matching (again: on. every. single. keystroke.) across two varchar(255)s that only ever hold <16 chars — and of which users only ever care about one at a time. After all of this, it filters the results based on some uncommented regexes, and worst of all: instead of fetching only one page’s worth of results like you’d expect, it fetches all of them at once and then discards what isn’t included by the paginator. So not only is this a guaranteed full table scan with partial text matching for every query (over millions to hundreds of millions of records), it’s that same full table scan for every single keystroke while the user types, and all but 25 records (user-selectable) get discarded — and then requeried when the user looks at the next page of results.

What the bloody fucking hell? I’d swear this idiot is an intern, but his code does (amazingly) actually work.

No wonder this search field nearly crashed one of the servers when someone actually tried using it.

Asdfajsdfk.rant fucking moron even when taking down the server hey bob pass me all the paperclips mysql murder terrible code slow query idiot can do no wrong but he’s the golden boy idiots repeatedly murdered mysql in the face21 -

Spent most of the day debugging issues with a new release. Logging tool was saying we were getting HTTP 400’s and 500’s from the backend. Couldn’t figure it out.

Eventually found the backend sometimes sends down successful responses but with statusCode 500 for no reason what so ever. Got so annoyed ... but said the 400’s must be us so can’t blame them for everything.

Turns out backend also sometimes does the opposite. Sends down errors with HTTP 200’s. A junior app Dev was apparently so annoyed that backend wouldn’t fix it, that he wrote code to parse the response, if it contained an error, re-wrote the statusCode to 400 and then passed the response up to the next layer. He never documented it before he left.

Saving the best part for last. Backend says their code is fine, it must be one of the other layers (load balancers, proxies etc) managed by one of the other teams in the company ... we didn’t contact any of these teams, no no no, that would require effort. No we’ve just blamed them privately and that’s that.

#successfulRelease4 -

Backend internship interview

They: Can you reverse the given string without using pointers? (C++)

Me: Yeah, sure

*Then I start explaining how I am gonna approach the problem and such*

They: Ok, we understand that you can do it, now can you write a front-end that has a couple of routes. Also, these routes should have some sort of list views because we want you to print information **attention** that you are going to parse from Amazon inside those list views.

Me: *dumbfounded and trying to explain that am not a front-end developer*

They: But we still want you to do this.3 -

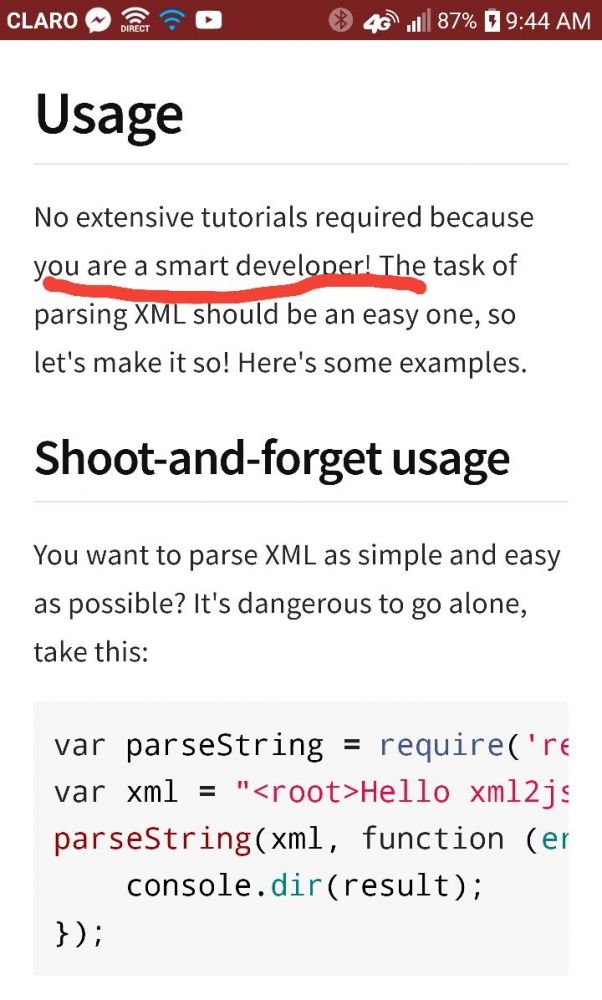

I send a PR to your GitHub repo.

You close it without a word.

I tell you that your lib crashes because you're trying to parse JavaScript with a (bad) regex, but you keep insisting that no, there exist no problem, and even if you barely know what "parsing" means, you keep denying in front of the evidence.

Well fuck you and your shitty project. I'll keep using my fucking fork.

And if you're reading this, well, fuck you twice. Moron.10 -

Ok, rant incoming.

Dates. Frigging dates. Apparently we as a species are so bloody incompetent we cannot even decide on a one format for how to write today. No, instead we have one for every language and framework, because every moron thinks they know better how to write the date. All of them equivalent and all of them different enough to make me start lactating out of frustration trying to parse this garbage... And when you finally manage to parse it on one platform it turns out that your ORM just decided to use the less common version of the date, and have fun converting one to the other. I hope that ever time someone comes up with a new date format will be hit in a face with a red hot frying pan untill they give up programming in favour of growing cactuses.11 -

When I found out my JSON didn't parse because I used single instead of double quotes after two hours

8

8 -

Had an interview in a MNC company.

He: Propose a solution for reading huge logs file like 1 GB and parse errors with today's date.

Me: Gave two solution, one with regex and second with buffering the logs (reason: reading the entire in same shot will cause cpu spike with huge memory consumption) and I fell in love with my second approach. By the way it was on paper.

He: (Without seeing the logic) Your syntax is wrong.

Me: Got frustrated who the hell checks syntax in interview. I asked how may years of experience you have?

He: 10 years.

Me: I don't wanna continue, and I left.5 -

I know you guys probably have seen the worst of the worst...

But have you seen a js used to generate xml and send it to backend as json then parse it to xml? No template literals btw so there’s a lot of multiline with lots of + here and there

Or using sql to request web service?9 -

Ah certbot you sexy pain in the ass.

# certbot renew

> "Error: unable to parse files ..."

> 2 certificates renewed.

🤔I don't know how you worked, but you keep working!!2 -

Interviewer: "Using this 2D array and calculate.."

Me: "This input isn't a 2D array though. Do you want me to parse or construct a 2D array then.."

"It is a 2D array."

"Uh.. ok..and if it's not what if we.."

"Look my notes say you must use this input, and treat it as a 2D Array"

"What if I wrote a function for a 2D array similar to this input, but actually a 2D array"

"You must use only the input provided"

Me: does rain dance code for 20 minutes.

Interviewer: "hmm, maybe it wasn't a 2D Array. I like your efforts but that's all the time we have today."

I promise I can code, sometimes. It does help to have correct questions to give correct answers.1 -

The tech stack at my current gig is the worst shit I’ve ever dealt with...

I can’t fucking stand programs, especially browser based programs, to open new windows. New tab, okay sure, ideally I just want the current tab I’m on to update when I click on a link.

Ticketing system: Autotask

Fucking opens up with a crappy piss poor sorting method and no proper filtering for ticket views. Nope you have to go create a fucking dashboard to parse/filter the shit you want to see. So I either have to go create a metric-arse tonne of custom ticket views and switch between them or just use the default turdburger view. Add to that that when I click on a ticket, it opens another fucking window with the ticket information. If I want to do time entry, it just feels some primal need to open another fucking window!!! Then even if I mark the ticket complete it just minimizes the goddamn second ticket window. So my jankbox-supreme PC that my company provided gets to strugglepuff along trying to keep 10 million chrome windows open. Yeah, sure 6GB of ram is great for IT work, especially when using hot steaming piles of trashjuice software!

I have to manually close these windows regularly throughout the day or the system just shits the bed and halts.

RMM tool: Continuum

This fucker takes the goddamn soggy waffle award for being utterly fucking useless. Same problem with the windows as autotask except this special snowflake likes to open a login prompt as a full-fuck-mothering-new window when we need to open a LMI rescue session!!! I need to enter a username and a password. That’s it! I don’t need a full screen window to enter credentials! FUCK!!! Btw the LMI tools only work like 70% of the time and drag ass compared to literally every other remote support tool I’ve ever used. I’ve found that it’s sometimes just faster to walk someone through enabling RDP on their system then remoting in from another system where LMI didn’t decide to be fully suicidal and just kill itself.

Our fucking chief asshat and sergeant fucknuts mcdoogal can’t fucking setup anything so the antivirus software is pushed to all client systems but everything is just set to the default site settings. Absolutely zero care or thought or effort was put forth and these gorilla spunk drinking, rimjob jockey motherfuckers sell this as a managed AntiVirus.

We use a shitty password manager than no one besides I use because there is a fully unencrypted oneNote notebook that everyone uses because fuck security right? “Sometimes it’s just faster to have the passwords at the ready without having to log into the password manager.” Chief Asshat in my first week on the job.

Not to mention that windows server is unlicensed in almost every client environment, the domain admin password is same across multiple client sites, is the same password to log into firewalls, and office 365 environments!!!

I’ve brought up tons of ways to fix these problems, but they have their heads so far up their own asses getting high on undeserved smugness since “they have been in business for almost ten years”. Like, Whoop Dee MotherFucking Doo! You have only been lucky to skate by with this dumpster fire you call a software stack, you could probably fill 10 olympic sized swimming pools to the brim with the logarrhea that flows from your gullets not only to us but also to your customers, and you won’t implement anything that is good for you, your company, or your poor clients because you take ten minutes to try and understand something new.

I’m fucking livid because I’m stuck in a position where I can’t just quit and work on my business full time. I’m married and have a 6m old baby. Between both my wife and I working we barely make ends meet and there’s absolutely zero reason that I couldn’t be providing better service to customers without having to lie through my teeth to them and I could easily support my family and be about 264826290461% happier!

But because we make so little, I can’t scrap together enough money to get Terranimbus (my startup) bootstrapped. We have zero expendable/savable income each month and it’s killing my soul. It’s so fucking frustrating knowing that a little time and some capital is all that stands between a better life for my family and I and being able to provide a better overall service out there over these kinds of shady as fuck knob gobblers.5 -

!dev && feelsbadman

I don't know what to think.

All I know is that I just went reaaaaal close to a disaster.

Friday morning, my "scariest" manager (as in, if you have to meet with him, it's usally for something serious) told me that he needed to see me on monday (so today) with the lead dev, the project manager and the dude who recruited me.

The meeting was like an arena of 4 vs 1, where they all 4 had problem with the work I do, as in I make a lot of small but stupid mistakes that wastes everyone's time. As an excuse, I suffer from sleep apnea so I wake up as tired I am when I go to sleep, and I snore loud as fuck. I've heard some records, it's not even human. (I'm 1m85-ish for 125 kg, it's BIG but with my morphology it's not like I'm a ball of fat)

Anyway. And since it's not the first time they're reproaching me this kind of stuff, they were all... really angry. Because I'm a nice guy, competent and all but not productive enough and easily distracted.

So, when the manager asked me to meet me, it was to fire me. However, during the lunch break, the lead dev found a solution: I get out of the current project I was in until this morning, and I write all the functional tests for all the projects, because they all lack quality and we sometimes deliver regresses.

They proposed me this in a way I could refuse, and I'd get fired because they had no other options. Obviously, I said yes, I'm not stupid enough to decline a possibilty to avoid a monstruous shitstorm that would have cut me my studies, the money for taxes, and a lot of fun to find a job as fast as possible.

But what surprised me the most is that they were genuinely glad I accepted, like, even though I made my shit ton of mistakes, they weren't pleased at all to get rid of me.

And in a way, I'm the one who won in this story, since I don't have to work with Drupal anymore, excepted to parse the website to write my tests, but my nightmare fuel is finally gone *.*

I don't know where to finish with this rant, but I needed to vent this whole thing, to write it somewhere so I can move forward.

I wish y'all a nice week.3 -

Senior colleagues insisting on ALWAYS returning HTTP status 200 and sticking any error codes in the contained JSON response instead of using 4×× or 5×× statuses.

Bad input? Failed connections? Missing authorization? Doesn't matter, you get an OK. Wanna know if the request actually succeeded? Fuck you, parse potential kilobytes of JSON to get to the error code!

Am I the asshole or is that defeating the purpose of a status code?!13 -

writing an assembler for my compiler, Manticore.

Currently working on writing a hand written parser and parse tree node system. 7

7 -

One thing I learnt after over two years of working as a programmer is that sometimes making your code DRY is less important than making your code readable, ESPECIALLY if you're working on a shared codebase. All those abstractions and metaprogramming may look good in your eyes, but might cause your teammates their coding time because they need to parse your mini-framework. So code wisely and choose the best approach that works FOR YOUR TEAM.7

-

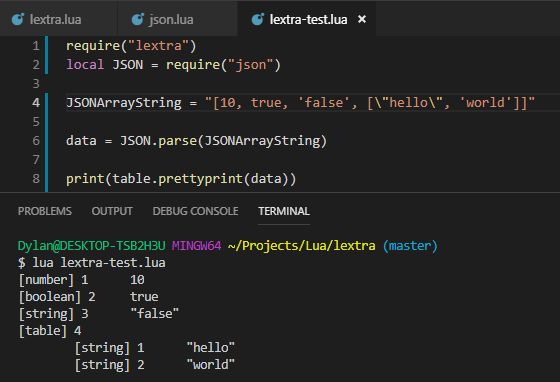

I know it's not done yet but OOOOOH boy I'm proud already.

Writing a JSON parser in Lua and MMMM it can parse arrays! It converts to valid Lua types, respects the different quotation marks, works with nested objects, and even is fault-tolerant to a degree (ignoring most invalid syntax)

Here's the JSON array I wrote to test, the call to my function, and another call to another function I wrote to pretty print the result. You can see the types are correctly parsed, and the indentation shows the nested structure! (You can see the auto-key re-start at 1)

Very proud. Just gotta make it work for key/value objects (curly bracket bois) and I'm golden! (Easier said than done. Also it's 3am so fuck, dude) 15

15 -

Doing linguistic research where I need to parse 2000 files of a total of 36 GB. Since we are using python the first thing I thought was to implement multi threading. Now I changed the total runtime from three days to like one day and a half. But then when I checked the activity monitor I saw only 20 percent of the CPU usage. After a searching process I started to understand how multi threading and multi processing works. Moral of the story: if you want to ping a website till they block you or do easy tasks that will not use up all power of one core, do multi thrading. If you need to do something complicated that can easily consume all the powers of a single CPU core, split up the work and do multi processing. In my case, when I tried to grab information from a website, I did multi thrading since the work is easy and I really wanted to pin the website 16 times simultaneously but only have 4 cores. But when it come to text processing which a single file will take 80 percent of cpu, split it up and do multi processing.

This is just a post for those who are confused with when to use which.12 -

A dev team has been spending the past couple of weeks working on a 'generic rule engine' to validate a marketing process. The “Buy 5, get 10% off” kind of promotions.

The UI has all the great bits, drop-downs, various data lookups, etc etc..

What the dev is storing the database is the actual string representation FieldA=“Buy 5, get 10% off” that is “built” from the UI.

Might be OK, but now they want to apply that string to an actual order. Extract ‘5’, the word ‘Buy’ to apply to the purchase quantity rule, ‘10%’ and the word ‘off’ to subtract from the total.

Dev asked me:

Dev: “How can I use reflection to parse the string and determine what are integers, decimals, and percents?”

Me: “That sounds complicated. Why would you do that?”

Dev: “It’s only a string. Parsing it was easy. First we need to know how to extract numbers and be able to compare them.”

Me: “I’ve seen the data structures, wouldn’t it be easier to serialize the objects to JSON and store the string in the database? When you deserialize, you won’t have to parse or do any kind of reflection. You should try to keep the rule behavior as simple as possible. Developing your own tokenizer that relies on reflection and hoping the UI doesn’t change isn’t going to be reliable.”

Dev: “Tokens!...yea…tokens…that’s what we want. I’ll come up with a tokenizing algorithm that can utilize recursion and reflection to extract all the comparable data structures.”

Me: “Wow…uh…no, don’t do that. The UI already has to map the data, just make it easy on yourself and serialize that object. It’s like one line of code to serialize and deserialize.”

Dev: “I don’t know…sounds like magic. Using tokens seems like the more straightforward O-O approach. Thanks anyway.”

I probably getting too old to keep up with these kids, I have no idea what the frack he was talking about. Not sure if they are too smart or I’m too stupid/lazy. Either way, I keeping my name as far away from that project as possible.4 -

Woke up at 5am with the realization that I could use regular expressions to parse the string representation of regular expressions to build this program to parse regular expressions into more human-readable English.

I am so tired.5 -

>Middle of night

>css not getting applied conditionally

>a simple ng-class

>me raging

>fuck angular's digest loop

>fuck dom and not giving parse errors

>fuck my life

>Coworker is also confused

>after 1hr, what it could be

>A typo, ifStudent->isStudent

>😑3 -

I fucking hate the web guy.

He says - make a pop-up of the raw text you're receiving (in the app) so that I can test it easily while I fix it.

I did it.

Now he laughs and says - I think you searched for it and simply copied from wrong example. All you had to do was handle the text and parse it and display it blablabla instead of simply popping up the raw text.

Thank you I flipping KNOW all of that, you stuck up obnoxious frog. I did it that way initially and uploaded it coz you SAID so! Why do you ALWAYS have to talk like I know nothing!?5 -

I've optimised so many things in my time I can't remember most of them.

Most recently, something had to be the equivalent off `"literal" LIKE column` with a million rows to compare. It would take around a second average each literal to lookup for a service that needs to be high load and low latency. This isn't an easy case to optimise, many people would consider it impossible.

It took my a couple of hours to reverse engineer the data and implement a few hundred line implementation that would look it up in 1ms average with the worst possible case being very rare and not too distant from this.

In another case there was a lookup of arbitrary time spans that most people would not bother to cache because the input parameters are too short lived and variable to make a difference. I replaced the 50000+ line application acting as a middle man between the application and database with 500 lines of code that did the look up faster and was able to implement a reasonable caching strategy. This dropped resource consumption by a minimum of factor of ten at least. Misses were cheaper and it was able to cache most cases. It also involved modifying the client library in C to stop it unnecessarily wrapping primitives in objects to the high level language which was causing it to consume excessive amounts of memory when processing huge data streams.

Another system would download a huge data set for every point of sale constantly, then parse and apply it. It had to reflect changes quickly but would download the whole dataset each time containing hundreds of thousands of rows. I whipped up a system so that a single server (barring redundancy) would download it in a loop, parse it using C which was much faster than the traditional interpreted language, then use a custom data differential format, TCP data streaming protocol, binary serialisation and LZMA compression to pipe it down to points of sale. This protocol also used versioning for catchup and differential combination for additional reduction in size. It went from being 30 seconds to a few minutes behind to using able to keep up to with in a second of changes. It was also using so much bandwidth that it would reach the limit on ADSL connections then get throttled. I looked at the traffic stats after and it dropped from dozens of terabytes a month to around a gigabyte or so a month for several hundred machines. The drop in the graphs you'd think all the machines had been turned off as that's what it looked like. It could now happily run over GPRS or 56K.

I was working on a project with a lot of data and noticed these huge tables and horrible queries. The tables were all the results of queries. Someone wrote terrible SQL then to optimise it ran it in the background with all possible variable values then store the results of joins and aggregates into new tables. On top of those tables they wrote more SQL. I wrote some new queries and query generation that wiped out thousands of lines of code immediately and operated on the original tables taking things down from 30GB and rapidly climbing to a couple GB.

Another time a piece of mathematics had to generate all possible permutations and the existing solution was factorial. I worked out how to optimise it to run n*n which believe it or not made the world of difference. Went from hardly handling anything to handling anything thrown at it. It was nice trying to get people to "freeze the system now".

I build my own frontend systems (admittedly rushed) that do what angular/react/vue aim for but with higher (maximum) performance including an in memory data base to back the UI that had layered event driven indexes and could handle referential integrity (overlay on the database only revealing items with valid integrity) or reordering and reposition events very rapidly using a custom AVL tree. You could layer indexes over it (data inheritance) that could be partial and dynamic.

So many times have I optimised things on automatic just cleaning up code normally. Hundreds, thousands of optimisations. It's what makes my clock tick.4 -

Well, if your tests fails because it expects 1557525600000 instead of 1557532800000 for a date it tells you exactly: NOTHING.

Unix timestamp have their point, yet in some cases human readability is a feature. So why the fuck don't you display them not in a human readable format?

Now if you'd see:

2019-05-10T22:00:00+00:00

vs the expected

2019-05-11T00:00:00+00:00

you'd know right away that the first date is wrong by an offset of 2 hours because somebody fucked up timezones and wasn't using a UTC calculation.

So even if want your code to rely on timestamps, at least visualize your failures in a human readable way. (In most cases I argue that keeping dates as an iso string would be JUST FUCKING FINE performance-wise.)

Why do have me parse numbers? Show me the meaningful data.

Timestamps are for computers, dates are for humans.3 -

CR: "Add x here (to y) so it fits our code standards"

> No other Y has an X. None.

CR: "Don't ever use .html_safe"

> ... Can't render html without it. Also, it's already been sanitized, literally by sanitize(), written by the security team.

CR: "Haven't seen the code yet; does X change when resetting the password?"

> The feature doesn't have or reference passwords. It doesn't touch anything even tangentially related to passwords.

> Also: GO READ THE CODE! THAT'S YOUR BLOODY JOB!

CR: "Add an 'expired?' method that returns '!active'?"

> Inactive doesn't mean expired. Yellow doesn't mean sour. There's already an 'is_expired?' method.

CR: "For logging, always use json so we can parse it. Doesn't matter if we can't read it; tools can."

CR: "For logging, never link log entries to user-readable code references; it's a security concern."

CR: "Make sure logging is human-readable and text-searchable and points back to the code."

> Confused asian guy, his hands raised.

CR: "Move this data formatting from the view into the model."

> No. Views are for formatting.

CR: "Use .html() here since you're working with html"

> .html() does not support html. It converts arrays into html.

NONE OF THIS IS USEFUL! WHY ARE YOU WASTING MY TIME IF YOU HAVEN'T EVEN READ MY CODE!?

dfjasklfagjklewrjakfljasdf4 -

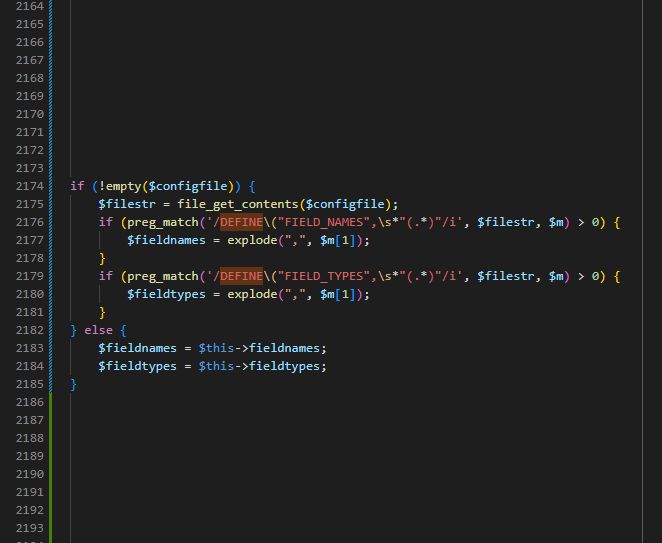

[CMS of Doom™]

The gift that keeps on giving...

When you think you've seen it all after 7 months in legacy hell, you get another gift:

Let's say you use PHP, but your IQ is in the zero-ish range, then it is obvious to:

- use define() for constants in all your config.*.php files

- then include said config.*.php files multiple times

- and because define() doesn't overwrite the same constant, because it's - you know - a constant, you instead of including just do a file_get_contents() to read the PHP file as string and then parse the values by Regex.

The dev who wrote this was truly one of the devs ever. 12

12 -

"I'm almost done, I'll just need to add tests!"

Booom! You did it, that was a nuke going off in my head.

No, you shouldn't just need to add tests. The tests should have been written from the get go! You most likely won't cover all the cases. You won't know if adding the tests will break your feature, as you had none, as you refactor your untested mess in order to make your code testable.

When reading your mess of a test case and the painful mocking process you went through, I silently cry out into the void: "Why oh why!? All of this suffering could have been avoided!"

Since most of the time, your mocking pain boils down to not understanding what your "unit" in your "unit test" should be.

So let it be said:

- If you want to build a parser for an XML file, then just write a function / class whose *only* purpose is: parse the XML file, return a value object. That's it. Nothing more, nothing less.

- If you want to build a parser for an XML file, it MUST NOT: download a zip, extract that zip, merge all those files to one big file, parse that big file, talk to some other random APIs as a side-effect, and then return a value object.

Because then you suddenly have to mock away a http service and deal with zip files in your test cases.

The http util of your programming language will most likely work. Your unzip library will most likely work. So just assume it working. There are valid use cases where you want to make sure you acutally send a request and get a response, yet I am talking unit test here only.

In the scope of a class, keep the public methods to a reasonable minimum. As for each public method you shall at least create one test case. If you ever have the feeling "I want to test that private method" replace that statement in your head with: "I should extract that functionality to a new class where that method public. I then can create a unit test case a for that." That new service then becomes a dependency in your current service. Problem solved.

Also, mocking away dependencies should a simple process. If your mocking process fills half the screen, your test setup is overly complicated and your class is doing too much.

That's why I currently dig functional programming so much. When you build pure functions without side effects, unit tests are easy to write. Yet you can apply pure functions to OOP as well (to a degree). Embrace immutability.

Sidenote:

It's really not helpful that a lot of developers don't understand the difference between unit, functional acceptance, integration testing. Then they wonder why they can't test something easily, write overly complex test cases, until someone points out to them: No, in the scope of unit tests, we don't need to test our persistance layer. We just assume that it works. We should only test our businsess logic. You know: "Assuming that I get that response from the database, I expect that to happen." You don't need a test db, make a real query against that, in order to test that. (That still is a valid thing to do. Yet not in the scope of unit tests.)rant developer unit test test testing fp oop writing tests get your shit together unit testing unit tests8 -

🐉

I once wrote a room planning application on unity, to allow people in my company to book meetings using tablets attached to the room doors.

Turns out the c# Datetime object unity uses was highly localized and therefore had a different formats on each different device.

I saved those timestamps into a SQL database and eventually all devices crashed due to having some Datetime format they could not parse.

Had to fully bypass the datetime and reinvent it essentially and had to reset the database.

I think it's needless to say I'm not particularly good in dating.4 -

Don't you hate it when your co-worker does dumb things, but thinks it's the "clean code" way?

The following is a conversation between me and a co-worker, who thinks he's superior to everyone because he thinks he's the only one who read the Clean Code series. Let's call him Bill.

Me: I think the feature we need is quite simple, our application needs to call this third party API, parse the response and pass it to the next step. Why do you need to bury everything under an abstraction of 4 layers?

Bill: bEcAuSe It'S dEcOuPlInG, aNd MaKe ThE cOdE tEsTaBlE

Me: I don't know man, you only need to abstract the third party api client, and then mock it if you want. Some interfaces you define makes no sense at all. For example, this interface only has 1 concrete implementation, and I don't think it will ever have another. Besides, the concrete implementation only gets the input from the upper layer and passes it down the lower layer. Why the extra step? I feel like you're using interface just for the sake of interface.

Bill: PrOgRaMmInG tO iNtErFaCe, NoT cOnCrEtE iPlEmEnTaTiOn!!!

Me: You keep saying those words, I don't think they mean what you think they mean. But they certainly do not mean that every method argument must be an interface

Bill: BuT uNcLe BoB blah blah blah...

Me: *gives up all hope*14 -

!dev - cybersecurity related.

This is a semi hypothetical situation. I walked into this ad today and I know I'd have a conversation like this about this ad but I didn't this time, I had convo's like this, though.

*le me walking through the city centre with a friend*

*advertisement about a hearing aid which can be updated through remote connection (satellite according to the ad) pops up on screen*

Friend: Ohh that looks usefu.....

Me: Oh damn, what protocol would that use?

Does it use an encrypted connection?

How'd the receiving end parse the incoming data?

What kinda authentication might the receiving end use?

Friend: wha..........

Me: What system would the hearing aid have?

Would it be easy to gain RCE (Remote Code Execution) to that system through the satellite connection and is this managed centrally?

Could you do mitm's maybe?

What data encoding would the transmissions/applications use?

Friend: nevermind.... ._________.

Cybersecurity mindset much...!11 -

I'M BACK TO MY WEBDEV ADVENTURES GUYS! IT TOOK ME LIKE 4 MONTHS TO STOP BEING SO FUCKING DEPRESSED SO I CAN ACTUALLY STAND TO WORK ON IT AGAIN

I learned that the linear gradient looks cool as FUCK. Honestly not too fond of the colors I have right now, but I just wanted to have something there cause I can change it later. The page has evolved a bunch from my original concept.

My original concept was the bar in the middle just being a URL bar and having links on the sides. If I had kept that, it would have taken me a few hours to get done. But as time went on when I was working on it, my idea kept changing. Added the weather (had a forecast for a while but the code was gross and I never looked at the next days anyways, so I got rid of it and kept the current data). I wanted to attempt an RSS reader, but yesterday I was about to start writing the JavaScript to parse the feeds, then decided "nah", ended up making the space into a todo list.

The URL bar changed into a full command bar (writing the functions for the commands now, also used to config smaller things, such as the user@hostname part, maybe colors, weather data for city and API key, etc)....also it can open URLs and subreddits (that part works flawlessly). The bar uses a regex to detect if it's a legit URL (even added shit so I don't need http:// or https://), and if it's not, just search using duckduckgo (maybe I'll add a config option there too for search engines).

At this very moment it doesn't even take a second to fully load. It fetches weather data from openweathermap, parses it, and displays it, then displays the "user" name grabbing a localstorage value.

I'm considering adding a sidebar with links (configurable obviously, I want everything to be dynamic, so someone else could use my page if they wanted), but I'm not too sure about it.

It's not on git yet because I was waiting until I get some shit finished today before I commit. From the picture, I want to know if anyone has any suggestions for it. Also note that I am NOT a designer. I can't design for shit. 12

12 -

I rewrote my static website generation from jekyll to custom python code over single night.

Literally all jekyll plugins I use including seo, rss, syntax highlighting inside markdown content, sitemap, social plugins, css sass, all of it.

Now it’s around 400 lines of python code that I understand completely. I didn’t touch any existing templates and after comparing output I got even better results now and it’s working faster.

I skipped drafts as I don’t need them now.

Why ? Cause now I can make better generator for my side projects that can include some partial website generation, better modification and date handling, tree structure, etc.

What I will do now is that I will parse bunch of content to create markdown files that will be sucked by this generator to create static web pages that will flood internet lol.

Still I didn’t believe it was possible to rewrite all of it so quickly. I sit yesterday around 4pm and finished around 6am.

I started thinking that maybe I am crazy and no one can help me.9 -

4 hours! four fucking hours! f.o.u.r. h.o.u.r.s.!

It's the amount in the time domain this bug has cost me to fix. The cost in the sanity domain is immeasurable...

I swear, the god damn ass births of devs who coded this abomination should be slowly mutilated and then raped by their own severed limbs.

It took me 4 hours to figure out that their 12 year old binary CLI tool they used to generate PDFs from PHP could not handle neither HTML5 nor some linebreaks at specific places. Some part of it is due to them using REGEX to find and replace HTML tag.

Yes, I am indeed very pissed. And I need a 🥃 or 3

What we learned:

- Don't use REGEX to "parse" HTML

- Don't call random compiled CLI tools from PHP if there are PHP packages to do the same shit9 -

I feel like Stackoverflow is a fastfood for programmers mind. It gives you a solution you starve for. It may be not the best quality, but it works for now. It does not satisfy you in a long run, and may be too shallow for advancing. But it works and gives you more to think about.

I would never find a quick solution to parse a string with regex. I never thought it works this way. But hey, I'm happy.9 -

oh, it got better!

One year ago I got fed up with my daily chores at work and decided to build a robot that does them, and does them better and with higher accuracy than I could ever do (or either of my teammates). So I did it. And since it was my personal initiative, I wasn't given any spare time to work on it. So that leaves gaps between my BAU tasks and personal time after working hours.

Regardless, I spent countless hours building the thing. It's not very large, ~50k LoC, but for a single person with very little time, it's quite a project to make.

The result is a pure-Java slack-bot and a REST API that's utilized by the bot. The bot knows how to parse natural language, how to reply responses in human-friendly format and how to shout out errors in human-friendly manner. Also supports conversation contexts (e.g. asks for additional details if needed before starting some task), and some other bells and whistles. It's a pretty cool automaton with a human-friendly human-like UI.

A year goes by. Management decides that another team should take this project over. Well okay, they are the client, the code is technically theirs.

The team asks me to do the knowledge transfer. Sounds reasonable. Okay.. I'll do it. It's my baby, you are taking it over - sure, I'll teach you how to have fun with it.

Then they announce they will want to port this codebase to use an excessive, completely rudimentary framework (in this project) and hog of resources - Spring. I was startled... They have a perfectly running lightweight pure-java solution, suitable for lambdas (starts up in 0.3sec), having complete control over all the parts of the machinery. And they want to turn it into a clunky, slow monster, riddled with Reflection, limited by the framework, allowing (and often encouraging) bad coding practices.

When I asked "what problem does this codebase have that Spring is going to solve" they replied me with "none, it's just that we're more used to maintaining Spring projects"

sure... why not... My baby is too pretty and too powerful for you - make it disgusting first thing in the morning! You own it anyway..

Then I am asked to consult them on how is it best to make the port. How to destroy my perfectly isolated handlers and merge them into monstrous @Controller classes with shared contexts and stuff. So you not only want to kill my baby - you want me to advise you on how to do it best.

sure... why not...

I did what I was asked until they ran into classloader conflicts (Spring context has its own classloaders). A few months later the port is not yet complete - the Spring version does not boot up. And they accidentally mention that a demo is coming. They'll be demoing that degenerate abomination to the VP.

The port was far from ready, so they were going to use my original version. And once again they asked me "what do you think we should show in the demo?"

You took my baby. You want to mutilate it. You want me to advise on how to do that best. And now you want me to advise on "which angle would it be best to look at it".

I wasn't invited to the demo, but my colleagues were. After the demo they told me mgmt asked those devs "why are you porting it to Spring?" and they answered with "because Spring will open us lots of possibilities for maintenance and extension of this project"

That hurts.

I can take a lot. But man, that hurts.

I wonder what else have they planned for me...rant slack idiocy project takeover automation hurts bot frameworks poor decision spring mutilation java9 -

That moment when you're doing regex to parse regex expression stored in a file whose name you found using a regex on input. Regex-ception1

-

Me: yo DEV2 parse this string into a hashmap. Use regex, 2 rows of code are enough for the job

DEV2: implemented 40 rows of switch/if cases into nested for loop.

Please kill me 🔫🔫🔫🔫🔫🔫🇮🇹3 -

Why would you ask me the diferrence between parseInt and parseFloat? When you were the first person who was unable to fix the decimal to two using parseFloat? 😝

-

Writing a function to take a string of delimited entities, parse each character to find the separators, capture the characters in between separators, and return an array of entities.

I used this for about a year before I learned about String.split()

Yeah.1 -

When you are googling something about Parse.com for a new language and the first 35000 results are "how to parse $something in $language"

4

4 -

I made a functional parsing layer for an API that cleans http body json. The functions return insights about the received object and the result of the parse attempt. Then I wrote validation in the controller to determine if we will reject or accept. If we reject, parse and validation information is included on the error response so that the API consumer knows exactly why it was rejected. The code was super simple to read and maintain.

I demoed to the team and there was one hold out that couldn’t understand my decision to separate parse and validate. He decided to rewrite the two layers plus both the controller and service into one spaghetti layer. The team lead avoided conflict at all cost and told me that even though it was far worse code to “give him this”. We still struggle with the spaghetti code he wrote to this day.

When sugar-coating someone’s engineering inadequacies is more important than good engineering I think about quitting. He was literally the only one on the team that didn’t get it.2 -

Who the fuck uses http code 200 for a failure. Seriously have you ever heard something about a need to parse the shit you're returning...

Now I don't know whether it's me who's wrong, but man there are more than 80 different codes defined so there really should be something for you, don't you think?

And don't give me shit like "well the request worked so we return 200 it's only that the request wasn't correct". What for a fucking peace of something are you... Those codes are for that exact reason.

Anyways I'm going to parse the shit with string compare and afterwards kill myself out of shame. Whish me luck... 4

4 -

Writing the new software dev test for our incoming interview process.

Me: And here is where we ask them to parse HTML with regex.

Lead developer: You are fucked up and the villain of this movie, multiverse and everything in between, fk u.

CMS Admin: And I thought Palpatine was evil. That is legit fucked up, fk u.16 -

Me, the only iOS dev at work one day, and colleague (who we'll call AndroidBoy), the only Android dev at work that same day (he's been working with us for less than two months). There was a change in one of the jsons we received from the server: instead of receiving a list, we now received a dictionary with strings as keys and lists as values. My iOS colleague had already made this modification on our parse function the day before.

AndroidBoy: "Hey what happened with the json?"

Me: "Oh, well instead of parsing a list, we'll parse a dictionary and get the list from each key. You basically have to do the same thing, only this time the lists are organized into categories."

AndroidBoy: "Oh, ok. But I don't know how to parse a dictionary while using Retrofit." (Context: Retrofit is a framework for request handling - correct me if I am mistaken, that's just what I've been told)

Me: "Sucks, dude, can't help ya. I've never worked with that and don't have that much exp. with Android."

I go out for a cigarette break. When I return, AndroidBoy is nowhere to be seen and suddenly I can't seem to get that data in my app. AndroidBoy comes in from the room where the backend colleagues work.

AndroidBoy: "Solved it!"

Me: "Solved what?"

AndroidBoy: "I told them to change back to a list and just put the key inside the objects of the list."

... he used the precious time of the backend colleagues to change the thing back hust because he was too lazy to search how to parse a dictionary. I was so amazed by his answer, that I didn't know whether to laugh, scream at him or punch him in the face. Not to mention the fact that now I had to revert just so he could avoid that extra work.5 -

Your data is formatted according to some ISO? Nice. You say it's easy to use and well documented? Great!

IT'S FUCKING FORMATTED WITH IRREGULAR SPACING??

What the fuck is this formatting?

Date <tab> Word <3 spaces> Some conjugated version <ONE space> Type <FIVE spaces> gender

WHAT

WHY

WHY ISNT IT XML

WHY ISNT IT JSON

WHY THE TABULATOR AND IRREGULAR SPACES

WHY DOES THE ORDER CHANGE PER LINE

WHY IS THIS IN A SINGLE 1MIL LINE FILE

help the university of oslo makes me dysphoric in their dictionaries i really dont want to parse this1 -

You know that feeling when you're coding late at night and you get an error that you just can't parse with your tired brain, and go FUCK IT ALL, FUCK IT ALL

I'm having that feeling right about now... 6

6 -

Instead of worrying about API rate limits I made my code manually parse the html from a website.

And the code still works great!4 -

Want to make someone's life a misery? Here's how.

Don't base your tech stack on any prior knowledge or what's relevant to the problem.

Instead design it around all the latest trends and badges you want to put on your resume because they're frequent key words on job postings.

Once your data goes in, you'll never get it out again. At best you'll be teased with little crumbs of data but never the whole.

I know, here's a genius idea, instead of putting data into a normal data base then using a cache, lets put it all into the cache and by the way it's a volatile cache.

Here's an idea. For something as simple as a single log lets make it use a queue that goes into a queue that goes into another queue that goes into another queue all of which are black boxes. No rhyme of reason, queues are all the rage.

Have you tried: Lets use a new fangled tangle, trust me it's safe, INSERT BIG NAME HERE uses it.

Finally it all gets flushed down into this subterranean cunt of a sewerage system and good luck getting it all out again. It's like hell except it's all shitty instead of all fiery.

All I want is to export one table, a simple log table with a few GB to CSV or heck whatever generic format it supports, that's it.

So I run the export table to file command and off it goes only less than a minute later for timeout commands to start piling up until it aborts. WTF. So then I set the most obvious timeout setting in the client, no change, then another timeout setting on the client, no change, then i try to put it in the client configuration file, no change, then I set the timeout on the export query, no change, then finally I bump the timeouts in the server config, no change, then I find someone has downloaded it from both tucows and apt, but they're using the tucows version so its real config is in /dev/database.xml (don't even ask). I increase that from seconds to a minute, it's still timing out after a minute.

In the end I have to make my own and this involves working out how to parse non-standard binary formatted data structures. It's the umpteenth time I have had to do this.

These aren't some no name solutions and it really terrifies me. All this is doing is taking some access logs, store them in one place then index by timestamp. These things are all meant to be blazing fast but grep is often faster. How the hell is such a trivial thing turned into a series of one nightmare after another? Things that should take a few minutes take days of screwing around. I don't have access logs any more because I can't access them anymore.

The terror of this isn't that it's so awful, it's that all the little kiddies doing all this jazz for the first time and using all these shit wipe buzzword driven approaches have no fucking clue it's not meant to be this difficult. I'm replacing entire tens of thousands to million line enterprise systems with a few hundred lines of code that's faster, more reliable and better in virtually every measurable way time and time again.

This is constant. It's not one offender, it's not one project, it's not one company, it's not one developer, it's the industry standard. It's all over open source software and all over dev shops. Everything is exponentially becoming more bloated and difficult than it needs to be. I'm seeing people pull up a hundred cloud instances for things that'll be happy at home with a few minutes to a week's optimisation efforts. Queries that are N*N and only take a few minutes to turn to LOG(N) but instead people renting out a fucking off huge ass SQL cluster instead that not only costs gobs of money but takes a ton of time maintaining and configuring which isn't going to be done right either.

I think most people are bullshitting when they say they have impostor syndrome but when the trend in technology is to make every fucking little trivial thing a thousand times more complex than it has to be I can see how they'd feel that way. There's so bloody much you need to do that you don't need to do these days that you either can't get anything done right or the smallest thing takes an age.

I have no idea why some people put up with some of these appliances. If you bought a dish washer that made washing dishes even harder than it was before you'd return it to the store.

Every time I see the terms enterprise, fast, big data, scalable, cloud or anything of the like I bang my head on the table. One of these days I'm going to lose my fucking tits.10 -

Spending a few hours messing around with regex and for loops to parse HTML just to SO and learn about BeautifulSoup. Why was my life wasted so!2

-

After three weeks looking for decent pdf parser that will handle all documents I gathered for my project I decided to write my own.

All those I tried end up with more then 10% not correctly parsed pdfs or require to much coding.

I was sceptic so I waited another week debating if it’s good idea to do it and I said yes.

Spent 16 hours straight coding pdf document extraction library and command line tool based on pdf.js

Fuck, now when I open pdf I see opcodes instead of text.

Got two more hours until client planning meeting and then I go to sleep for a while.

Time to start testing this more deeply as I have about 60k ~ 20GB pdf documents to parse and then I need to build some dependency graph out of its text.

At least it’s more funny then making boring REST API for money.4 -

When you think the code from companies like Google and Facebook is flawless, but then you look at the source code of Parse 1.5.0 and find an if statement with the condition 'browser' === 'browser'

2

2 -

I have come across the most frustrating error i have ever dealt with.

Im trying to parse an XML doc and I keep getting UnauthorizedAccessException when trying to load the doc. I have full permissions to the directory and file, its not read only, i cant see anything immediately wrong as to why i wouldnt be able to access the file.

I searched around for hours yesterday trying a bunch of different solutions that helped other people, none of them working for me.

I post my issue on StackOverflow yesterday with some details, hoping for some help or a "youre an idiot, Its because of this" type of comment but NO.

No answers.

This is the first time Ive really needed help with something, and the first time i havent gotten any response to a post.

Do i keep trying to fix this before the deadline on Sunday? Do i say fuck it and rewrite the xml in C# to meet my needs? Is there another option that i dont even know about yet?

I need a dev duck of some sort :/39 -

Fun issue

Swedish client is unable to enter a currency conversion rate in a field and submit. 'Not a float' well we can clearly see that it is a float when he does it (0.5 for example), not an issue for us though.

Reproducing was a nightmare, eventually it boiled down to the fact that the framework we were using had automatic locale checks. Now because our numeric fields are actually weird text fields (front end nonsense), it was converting the period to be a comma (Swedish people would write 0,5 normally). And if you actually entered 0,5 the range check (0.01-1000) failed because it couldn't parse the comma (no locale check on that one)

Godamn facepalm. Really confused the hell out of us when we saw the error, had to go diving through library code. To top this off, locale checks are supposed to be disabled as of about 2 years ago

In revenge against our oppressor :PHP: on slack is now an alias for the shit emoji5 -

So I just spent the last few hours trying to get an intro of given Wikipedia articles into my Telegram bot. It turns out that Wikipedia does have an API! But unfortunately it's born as a retard.

First I looked at https://www.mediawiki.org/wiki/API and almost thought that that was a Wikipedia article about API's. I almost skipped right over it on the search results (and it turns out that I should've). Upon opening and reading that, I found a shitload of endpoints that frankly I didn't give a shit about. Come on Wikipedia, just give me the fucking data to read out.

Ctrl-F in that page and I find a tiny little link to https://mediawiki.org/wiki/... which is basically what I needed. There's an example that.. gets the data in XML form. Because JSON is clearly too much to ask for. Are you fucking braindead Wikipedia? If my application was able to parse XML/HTML/whatevers, that would be called a browser. With all due respect but I'm not gonna embed a fucking web browser in a bot. I'll leave that to the Electron "devs" that prefer raping my RAM instead.

OK so after that I found on third-party documentation (always a good sign when that's more useful, isn't it) that it does support JSON. Retardpedia just doesn't use it by default. In fact in the example query that was a parameter that wasn't even in there. Not including something crucial like that surely is a good way to let people know the feature is there. Massive kudos to you Wikipedia.. but not really. But a parameter that was in there - for fucking CORS - that was in there by default and broke the whole goddamn thing unless I REMOVED it. Yeah because CORS is so useful in a goddamn fucking API.

So I finally get to a functioning JSON response, now all that's left is parsing it. Again, I only care about the content on the page. So I curl the endpoint and trim off the bits I don't need with jq... I was left with this monstrosity.

curl "https://en.wikipedia.org/w/api.php/...=*" | jq -r '.query.pages[0].revisions[0].slots.main.content'

Just how far can you nest your JSON Wikipedia? Are you trying to find the limits of jq or something here?!

And THEN.. as an icing on the cake, the result doesn't quite look like JSON, nor does it really look like XML, but it has elements of both. I had no idea what to make of this, especially before I had a chance to look at the exact structured output of that command above (if you just pipe into jq without arguments it's much less readable).

Then a friend of mine mentioned Wikitext. Turns out that Wikipedia's API is not only retarded, even the goddamn output is. What the fuck is Wikitext even? It's the Apple of wikis apparently. Only Wikipedia uses it.

And apparently I'm not the only one who found Wikipedia's API.. irritating to say the least. See e.g. https://utcc.utoronto.ca/~cks/...

Needless to say, my bot will not be getting Wikipedia integration at this point. I've seen enough. How about you make your API not retarded first Wikipedia? And hopefully this rant saves someone else the time required to wade through this clusterfuck.12 -

Job description: designing and building microservices and API contracts for enterprise use. Deep understanding of api/rest design, AWS, etc.

Interview: in this weird IDE while I stare over you, go through and parse this multi-dimensional primitive array using recursion.

...Wtf does this have to do with the role?8 -

Writing a truly crossplatform terminal library is the biggest pain in the ass.

And you thought windows was bad. They have a proper API with droves of features, freely allocatable screenbuffers, scrolling on both axes, etc.

Fucking xterm vtxxx compatible piles of shit are the problem.

Controlling kinda works eventhough the feature set is pretty bad. The really fucked up thing is reading values back. They literally get put into the input buffer. So you have to read all the actual user input before that and then somehow parse out the returned control sequence. Of course the user input has to be consumed so I have to buffer it myself. Even better is when you get a response with non printable characters which the fucking terminal will interpret as another control sequence. So when you set a window title to a ansi control sequence it would get executed when queried. Fuck this shit but I'm not giving up. I will tame this ugly, bodged together dragon7 -

Unreal Engine SDLC:

1. Start Epic

2. Wait

3. Start Unity

4. Wait

5. Open Project

6. Wait

7. Wait more

8. a bit longer...

9. (it usually crashes here, or freezes, in which case go to 1)

10. Game opened, make modifications in C++ codes

11. Wait VS to load

12. Wait VS to parse all the file in solution

13. Make changes

14. Compile

15. Run from Unreal

16. (sometimes, go to 9)

17. Goto 9

18. 9

19. Goto 9

20. Congrats on finishing the game, and losing your patience8 -

TL;DR: Fuck Wordpress and their shitty “editor”.

Client told me the Wordpress editor was unusual slow on their site. I inspected the network traffic, while fiddling around in the admin pages. What I found was an even worse nightmare than expected. Somehow the fucktard of an “engineer” decided to implement the spell check module, to parse all other text areas on the page - even the fucking image sources. The result is a browser sending a GET request to fetch the images from the server every time an author triggers a keyup-event. Disabled the spell check and everything was back to budget-ineffective-feces-Wordpress normal.3 -

IPAY88 is the worst payment integration. They parse html data and encoded it into xml for return the data, it is not even singlet or server to server communication , tey called it the ADVANCED BACKEND SYSTEM (My arse!) For security, they ENCODE THE STRING into BASE64 and called it ENCRYPTION ! WHAT THE FUCK?

Encoding is not encryption! I qas expecting they used diffie hellman or AES or RSA etc. THEY TOLD BE ENCODING IS ENCRYPTION? WHAT THE FUCK?1 -

I thought 'atoi' was just some acronym wtf who thought it would be a good idea to name a parse function 'atoi'?9

-

Im a fucking idiot, instead of fetching some data and then immediately using it to fetch other data, i saved it in a file to parse later and fetch it into other data. And it's been running for a day now so I dont wanna stop it.7

-

People keep using Firebase and saying how cool it is, I'll laught when Google does the same that facebook did with Parse or hike prices like they did with Google Maps Recently.... People stop putting your core logic in a third party black box.. it's not going to end well...4

-

One day I want to replace all "ERROR" logs with "FUCK":

09-31-26: FUCK: Database not initialized.

09-31-26: FUCK: Unable to parse input.2 -

So a colleague and me are coding a Text Editor in C, and since i was adding a few Themes today i was wondering, what y'all using in your go to Editors and IDEs? Maybe i could include a few slightly modified versions of these themes aswell (modified in the sense of adjusted config)

The Editor is called MOSSY Editor, if someone's interested. MOSSY was some abbreviation for Model Based Syntax, since it's python implementation used a full parse tree in the background. 14

14 -

Just started my exams training! (Doing a study called Application Development).

The application doesn't sound that complicated but I have to implement a data exporting feature. Sounds alright, doesn't it?

THE 'CLIENT' DOES NOT KNOW WHAT DATA FORMAT THE FICTIVE CUSTOMERS CAN PARSE/HANDLE BUT I HAVE TO MAKE IT GENERIC SO THAT THEY CAN USE IT ANYWAYS. HOW THE FUCKING FUCK AM I SUPPOSED TO KNOW WHAT FUCKING FORMAT I SHOULD CHOOSE?!? SHOULD I TRY TO SMELL IT OR SOMETHING?

FML.2 -

Watcher: News feed for anything on the web you can parse

https://github.com/allanx2000/...

Still use it everyday

And the components in it had a few children so good example of reuseability ... And automation.

So very good return on investment. 4

4 -

Since Parse is shutting down by January 27th, I need to migrate my startup application's entire database to a new platform. What a pain. I'm thinking of doing Firebase...11

-

A service had/has been logging hundreds of errors in the development environment and I reached out to the owning process mgr that the error was occurring and perhaps a good opportunity to log additional data to help troubleshoot the issue if the problem ever made its way to production. He responded saying the error was related to a new feature they weren't going to implement in the backing dev database (TL;DR), and they know it works in production (my spidey sense goes off).

They deployed the changes to production this morning and immediately starting throwing errors (same error I sent)

Mgr messaged me a little while ago "Did you make any changes to the documentation service? We're getting this error .."

50% sure someone misspelled something in a config, but only thing they are logging is 'Unable to parse document'. Nothing that indicates an issue with the service they're using.2 -

Is your code green?

I've been thinking a lot about this for the past year. There was recently an article on this on slashdot.

I like optimising things to a reasonable degree and avoid bloat. What are some signs of code that isn't green?

* Use of technology that says its fast without real expert review and measurement. Lots of tech out their claims to be fast but actually isn't or is doing so by saturation resources while being inefficient.

* It uses caching. Many might find that counter intuitive. In technology it is surprisingly common to see people scale or cache rather than directly fixing the thing that's watt expensive which is compounded when the cache has weak coverage.

* It uses scaling. Originally scaling was a last resort. The reason is simple, it introduces excessive complexity. Today it's common to see people scale things rather than make them efficient. You end up needing ten instances when a bit of skill could bring you down to one which could scale as well but likely wont need to.

* It uses a non-trivial framework. Frameworks are rarely fast. Most will fall in the range of ten to a thousand times slower in terms of CPU usage. Memory bloat may also force the need for more instances. Frameworks written on already slow high level languages may be especially bad.

* Lacks optimisations for obvious bottlenecks.

* It runs slowly.

* It lacks even basic resource usage measurement.

Unfortunately smells are not enough on their own but are a start. Real measurement and expert review is always the only way to get an idea of if your code is reasonably green.

I find it not uncommon to see things require tens to hundreds to thousands of resources than needed if not more.

In terms of cycles that can be the difference between needing a single core and a thousand cores.

This is common in the industry but it's not because people didn't write everything in assembly. It's usually leaning toward the extreme opposite.

Optimisations are often easy and don't require writing code in binary. In fact the resulting code is often simpler. Excess complexity and inefficient code tend to go hand in hand. Sometimes a code cleaning service is all you need to enhance your green.

I once rewrote a data parsing library that had to parse a hundred MB and was a performance hotspot into C from an interpreted language. I measured it and the results were good. It had been optimised as much as possible in the interpreted version but way still 50 times faster minimum in C.

I recently stumbled upon someone's attempt to do the same and I was able to optimise the interpreted version in five minutes to be twice as fast as the C++ version.

I see opportunity to optimise everywhere in software. A billion KG CO2 could be saved easy if a few green code shops popped up. It's also often a net win. Faster software, lower costs, lower management burden... I'm thinking of starting a consultancy.

The problem is after witnessing the likes of Greta Thunberg then if that's what the next generation has in store then as far as I'm concerned the world can fucking burn and her generation along with it.6 -

My God is map development insane. I had no idea.

For starters did you know there are a hundred different satellite map providers?

Just kidding, it's more than that.